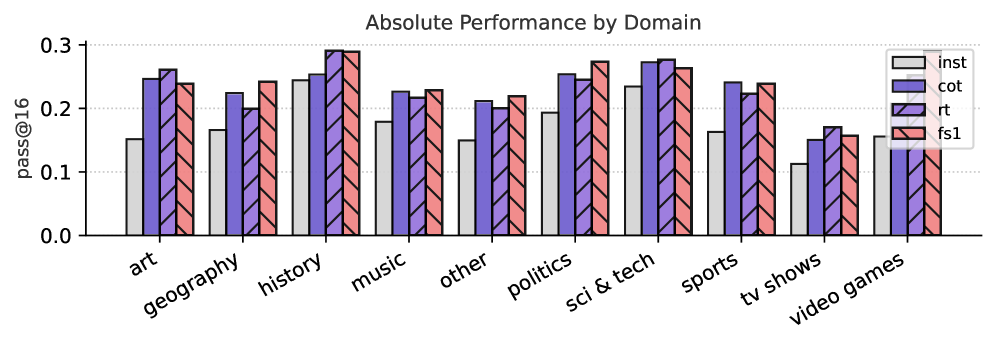

## Bar Chart: Absolute Performance by Domain

### Overview

The image presents a bar chart comparing four models (inst, cot, rt, fs1) across ten domains (art, geography, history, music, other, politics, sci & tech, sports, tv shows, video games). The performance metric is "pass@16", which conventionally denotes the probability that at least one of 16 sampled answers is correct.
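The chart does not state how "pass@16" was computed. As a minimal sketch, assuming the common pass@k definition (the chance that at least one of k answers drawn from n samples is correct, averaged over questions), the metric could be estimated as follows. The function names and the example counts are hypothetical and are not taken from the chart.

```python
from math import comb


def pass_at_k(n: int, c: int, k: int) -> float:
    """Estimate pass@k for one question from n samples, c of them correct.

    Assumption: the standard estimator 1 - C(n - c, k) / C(n, k), i.e. one
    minus the probability that k samples drawn without replacement are all wrong.
    """
    if not 0 <= c <= n or not 1 <= k <= n:
        raise ValueError("need 0 <= c <= n and 1 <= k <= n")
    return 1.0 - comb(n - c, k) / comb(n, k)


def mean_pass_at_k(correct_counts: list[int], n: int, k: int) -> float:
    """Average the per-question estimate over a set of questions."""
    return sum(pass_at_k(n, c, k) for c in correct_counts) / len(correct_counts)


if __name__ == "__main__":
    # Hypothetical counts of correct answers out of 16 samples for four questions.
    print(mean_pass_at_k([0, 3, 0, 1], n=16, k=16))
```

With n equal to k, the estimate for a single question is 1 if any sample is correct and 0 otherwise, so the averaged score is simply the fraction of questions answered correctly at least once.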

### Components/Axes

* **Title:** "Absolute Performance by Domain" - positioned at the top-center of the chart.

* **X-axis:** Represents the domains. The categories are: "art", "geography", "history", "music", "other", "politics", "sci & tech", "sports", "tv shows", "video games".

* **Y-axis:** Represents the "pass@16" score, ranging from 0.0 to 0.3, with tick marks at 0.0, 0.1, 0.2, and 0.3. The axis is labeled "pass@16".

* **Legend:** Located in the top-right corner, identifying the four data series:

    * "inst" - Light Blue

    * "cot" - Light Purple

    * "rt" - Dark Gray

    * "fs1" - Pink/Red

### Detailed Analysis

Each domain has four bars, one per model, showing that model's "pass@16" score. The values below are approximate readings from the chart.

* **Art:** inst ≈ 0.26, cot ≈ 0.24, rt ≈ 0.24, fs1 ≈ 0.24

* **Geography:** inst ≈ 0.27, cot ≈ 0.25, rt ≈ 0.23, fs1 ≈ 0.24

* **History:** inst ≈ 0.28, cot ≈ 0.26, rt ≈ 0.24, fs1 ≈ 0.25

* **Music:** inst ≈ 0.29, cot ≈ 0.27, rt ≈ 0.22, fs1 ≈ 0.25

* **Other:** inst ≈ 0.23, cot ≈ 0.21, rt ≈ 0.21, fs1 ≈ 0.22

* **Politics:** inst ≈ 0.27, cot ≈ 0.25, rt ≈ 0.23, fs1 ≈ 0.24

* **Sci & Tech:** inst ≈ 0.28, cot ≈ 0.27, rt ≈ 0.25, fs1 ≈ 0.26

* **Sports:** inst ≈ 0.26, cot ≈ 0.25, rt ≈ 0.23, fs1 ≈ 0.24

* **TV Shows:** inst ≈ 0.23, cot ≈ 0.22, rt ≈ 0.18, fs1 ≈ 0.22

* **Video Games:** inst ≈ 0.21, cot ≈ 0.19, rt ≈ 0.16, fs1 ≈ 0.18
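The per-domain readings above can be checked mechanically. The following is a small sketch that transcribes the approximate values into a dictionary and reports, for each domain, the mean score and which models are highest and lowest, ties included. The names `SCORES`, `models_at`, and `domain_mean` are illustrative, and the numbers are the by-eye estimates listed above, not exact data.

```python
# Approximate values transcribed from the list above (read off the chart by eye).
MODELS = ("inst", "cot", "rt", "fs1")
SCORES = {
    "art":         (0.26, 0.24, 0.24, 0.24),
    "geography":   (0.27, 0.25, 0.23, 0.24),
    "history":     (0.28, 0.26, 0.24, 0.25),
    "music":       (0.29, 0.27, 0.22, 0.25),
    "other":       (0.23, 0.21, 0.21, 0.22),
    "politics":    (0.27, 0.25, 0.23, 0.24),
    "sci & tech":  (0.28, 0.27, 0.25, 0.26),
    "sports":      (0.26, 0.25, 0.23, 0.24),
    "tv shows":    (0.23, 0.22, 0.18, 0.22),
    "video games": (0.21, 0.19, 0.16, 0.18),
}


def models_at(domain: str, pick) -> list[str]:
    """Return every model whose score equals pick(scores) in the domain (ties included)."""
    row = SCORES[domain]
    target = pick(row)
    return [m for m, s in zip(MODELS, row) if s == target]


def domain_mean(domain: str) -> float:
    """Mean score of the four models in one domain."""
    return sum(SCORES[domain]) / len(MODELS)


if __name__ == "__main__":
    # List domains from lowest to highest mean score.
    for d in sorted(SCORES, key=domain_mean):
        print(f"{d:12s} mean={domain_mean(d):.3f} "
              f"best={models_at(d, max)} worst={models_at(d, min)}")
```

Because the inputs are estimates with roughly 0.01 precision, ties and one-step differences in the output should not be over-interpreted.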

**Trends:**

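* The "inst" model has the highest score in every domain. Its scores rise from "art" (≈ 0.26) to "music" (≈ 0.29), drop in "other" (≈ 0.23), recover in "politics" and "sci & tech", then decline through "video games" (≈ 0.21).

* "cot" is second or tied for second in every domain except "other", where "fs1" is slightly higher. It trails "inst" by about 0.01 to 0.02.

* "rt" has the lowest score, or ties for the lowest, in every domain.

* "fs1" stays within about 0.02 of "cot" in every domain and is never below "rt". Its margin over "rt" is largest in "tv shows" (≈ 0.04) and "music" (≈ 0.03).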

### Key Observations

* The "music" domain shows the largest difference between the "inst" and "rt" models, about 0.07 (≈ 0.29 vs ≈ 0.22).

* "Video Games" has the lowest score for every model, and "TV Shows" has the second-lowest average across the four models.

* The "inst" model outperforms the others in every domain, but its margin over the next-best model is small, about 0.01 to 0.02.

* The "rt" model is at or tied for the bottom in every domain; it ties with other models in "art" and "other".
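The gap between "inst" and "rt" can be computed directly from the approximate readings. The sketch below uses only those two series; the list names are illustrative and the values are the by-eye estimates from the Detailed Analysis section.

```python
DOMAINS = ["art", "geography", "history", "music", "other",
           "politics", "sci & tech", "sports", "tv shows", "video games"]

# Approximate pass@16 readings for the two models being compared.
INST = [0.26, 0.27, 0.28, 0.29, 0.23, 0.27, 0.28, 0.26, 0.23, 0.21]
RT = [0.24, 0.23, 0.24, 0.22, 0.21, 0.23, 0.25, 0.23, 0.18, 0.16]

# Per-domain gap, rounded to the two-decimal precision of the readings.
gaps = {d: round(i - r, 2) for d, i, r in zip(DOMAINS, INST, RT)}

# Domain with the widest inst-rt gap.
widest = max(gaps, key=gaps.get)

if __name__ == "__main__":
    for d in sorted(gaps, key=gaps.get, reverse=True):
        print(f"{d:12s} inst - rt = {gaps[d]:.2f}")
    print("widest gap:", widest)
```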

### Interpretation

By these approximate readings, the "inst" model achieves the highest "pass@16" score in every domain, which suggests it is the most robust of the four models across topics. The "rt" model scores lowest or ties for lowest throughout; the chart alone does not show why, though it may indicate difficulty with the kinds of questions posed in these domains. The lower scores in "TV Shows" and "Video Games" could reflect sparser training data or inherently harder questions in those areas.

Most gaps between models are only 0.01 to 0.02, which is close to the precision of reading values off the chart, so close rankings should be treated as tentative. The variation across domains highlights the importance of domain-specific knowledge and the difficulty of building general-purpose question-answering systems.