## Hierarchical Flowchart: XAI Methods in LLMs

### Overview

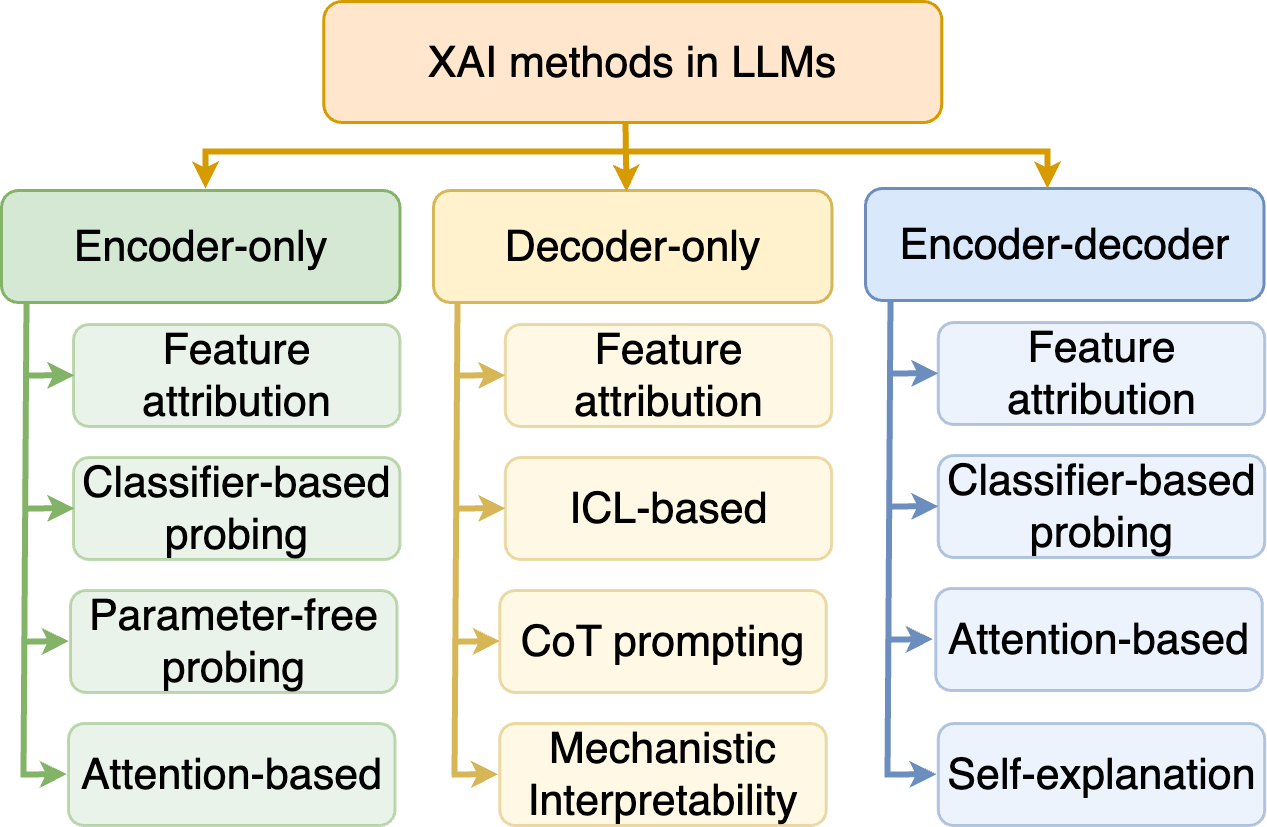

The image is a hierarchical flowchart diagram illustrating the categorization of Explainable AI (XAI) methods as applied to Large Language Models (LLMs). The diagram organizes methods based on the underlying LLM architecture they are most associated with: Encoder-only, Decoder-only, and Encoder-decoder. The structure is a top-down tree with a single root node branching into three primary categories, each of which further branches into specific method types.

### Components/Axes

* **Root Node (Top Center):** A single orange-bordered box containing the title "XAI methods in LLMs".

* **Primary Categories (Second Level):** Three distinct, color-coded boxes arranged horizontally below the root.

    * **Left (Green):** "Encoder-only"

    * **Center (Yellow):** "Decoder-only"

    * **Right (Blue):** "Encoder-decoder"

* **Method Nodes (Third Level):** Lists of specific XAI methods branch downward from each primary category box. The connecting arrows share the color of their parent category.

* **Layout:** The three columns are evenly spaced below the root. There is no separate legend; the color of each box and its arrows identifies the architectural category it belongs to.

### Detailed Analysis

The diagram explicitly lists the following XAI methods under each architectural category:

**1. Encoder-only (Green Column, Left)**

* Methods are connected by green arrows.

* The listed methods, from top to bottom, are:

1. Feature attribution

2. Classifier-based probing

3. Parameter-free probing

4. Attention-based

**2. Decoder-only (Yellow Column, Center)**

* Methods are connected by yellow arrows.

* The listed methods, from top to bottom, are:

1. Feature attribution

2. ICL-based (In-Context Learning based)

3. CoT prompting (Chain-of-Thought prompting)

4. Mechanistic Interpretability

**3. Encoder-decoder (Blue Column, Right)**

* Methods are connected by blue arrows.

* The listed methods, from top to bottom, are:

1. Feature attribution

2. Classifier-based probing

3. Attention-based

4. Self-explanation

### Key Observations

* **Common Methods:** "Feature attribution" is the only method that appears in all three categories, which suggests the taxonomy treats it as applicable regardless of LLM architecture.

* **Architectural Specificity:** Certain methods are unique to a specific architecture in this taxonomy:

    * **Decoder-only:** ICL-based, CoT prompting, and Mechanistic Interpretability are listed exclusively here.

    * **Encoder-only:** Parameter-free probing is listed exclusively here.

    * **Encoder-decoder:** Self-explanation is listed exclusively here.

* **Shared Methods:** "Classifier-based probing" and "Attention-based" methods are shared between the Encoder-only and Encoder-decoder categories but are not listed under Decoder-only.

* **Visual Organization:** The color-coding (green, yellow, blue) provides immediate visual grouping, making it easy to associate methods with their parent architectural category.
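The overlap claims in the observations above can be checked mechanically. The following is a minimal sketch that transcribes the taxonomy from the figure into a dictionary and derives the common, exclusive, and pairwise-shared methods with set operations. The names `TAXONOMY`, `common_methods`, `exclusive_methods`, and `shared_by_exactly` are illustrative assumptions and do not come from the figure or its source.

```python
# Taxonomy transcribed from the figure. The variable and function names
# below are hypothetical and chosen only for this illustration.
TAXONOMY = {
    "Encoder-only": [
        "Feature attribution",
        "Classifier-based probing",
        "Parameter-free probing",
        "Attention-based",
    ],
    "Decoder-only": [
        "Feature attribution",
        "ICL-based",
        "CoT prompting",
        "Mechanistic Interpretability",
    ],
    "Encoder-decoder": [
        "Feature attribution",
        "Classifier-based probing",
        "Attention-based",
        "Self-explanation",
    ],
}


def common_methods(taxonomy):
    """Methods listed under every architecture."""
    return set.intersection(*(set(m) for m in taxonomy.values()))


def exclusive_methods(taxonomy):
    """Map each architecture to the methods listed only under it."""
    result = {}
    for arch, methods in taxonomy.items():
        others = set()
        for other_arch, other_methods in taxonomy.items():
            if other_arch != arch:
                others.update(other_methods)
        result[arch] = set(methods) - others
    return result


def shared_by_exactly(taxonomy, archs):
    """Methods listed under every architecture in `archs` and under no other."""
    inside = set.intersection(*(set(taxonomy[a]) for a in archs))
    outside = set().union(
        *(set(m) for a, m in taxonomy.items() if a not in archs)
    )
    return inside - outside


if __name__ == "__main__":
    print("Common:", sorted(common_methods(TAXONOMY)))
    for arch, methods in exclusive_methods(TAXONOMY).items():
        print(f"Exclusive to {arch}:", sorted(methods))
    pair = ["Encoder-only", "Encoder-decoder"]
    print("Shared only by", pair, ":", sorted(shared_by_exactly(TAXONOMY, pair)))
```

Keeping the lists in figure order preserves the top-to-bottom layout, while converting to sets only inside the helper functions keeps the comparisons simple.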

### Interpretation

This diagram serves as a conceptual taxonomy, not a data chart. It suggests that the choice of XAI method for an LLM is not one-size-fits-all but is influenced by the model's core architecture.

* **Relationship Between Elements:** The flowchart implies an "is applicable to" or "is commonly used with" relationship. It organizes the landscape of XAI techniques by grouping them according to the model type they are designed to interpret.

* **Underlying Message:** The diagram highlights that while some explanation techniques (such as feature attribution) are broadly useful, others are tied to specific architectures. For example, CoT prompting and ICL-based explanations are placed under autoregressive decoder-only models (such as GPT), which excel at in-context learning and step-by-step reasoning. "Self-explanation", by contrast, is placed under encoder-decoder models (such as T5); the diagram does not state the rationale for this placement.

* **Purpose:** This is a pedagogical or survey-style diagram intended to provide a structured overview of the XAI field for LLMs, helping researchers or practitioners navigate the available techniques based on the model they are working with.