## Scatter Plot Comparison: Accuracy vs. Cost for Two Language Models

### Overview

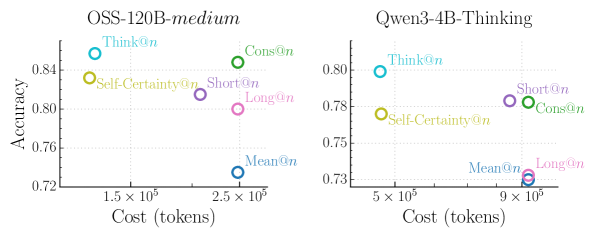

The image displays two side-by-side scatter plots comparing the performance (Accuracy) against computational cost (in tokens) for various inference methods applied to two different large language models. The left plot is for a model labeled "OSS-120B-medium," and the right plot is for "Qwen3-4B-Thinking." Each data point represents a specific method, identified by a unique color and label.

### Components/Axes

**Common Elements (Both Plots):**

* **Chart Type:** Scatter plot.

* **Y-Axis:** Labeled "Accuracy." The scale is linear.

* **X-Axis:** Labeled "Cost (tokens)." The scale is logarithmic (base 10), indicated by the tick labels (e.g., 1.5 x 10⁵, 5 x 10⁵).

* **Data Series:** Six distinct methods, each represented by a colored circle and a text label. The legend is embedded directly next to each data point.
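On a logarithmic x-axis, equal horizontal distances correspond to equal cost ratios rather than equal token differences, which matters when estimating coordinates by eye. The following is a minimal sketch of that mapping; the helper name `log_axis_fraction` is hypothetical, and the axis limits used in the example (1.0 x 10⁵ and 3.0 x 10⁵) are the approximate left-plot range given in this description, not exact values.

```python
import math


def log_axis_fraction(value, lo, hi):
    """Fraction of the way along a base-10 log axis (from lo to hi) at which value sits."""
    return (math.log10(value) - math.log10(lo)) / (math.log10(hi) - math.log10(lo))


if __name__ == "__main__":
    lo, hi = 1.0e5, 3.0e5  # assumed axis limits (approximate)
    for cost in (1.2e5, 2.0e5, 2.6e5):
        frac = log_axis_fraction(cost, lo, hi)
        print(f"cost {cost:.1e} sits {frac:.2f} of the way along the axis")
```

Note that the arithmetic midpoint of the range (2.0 x 10⁵) lands to the right of the visual center on such an axis, so points read off a log axis tend to be misjudged if linear spacing is assumed.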

**Left Plot: OSS-120B-medium**

* **Title:** "OSS-120B-medium" (top center).

* **Y-Axis Range:** Approximately 0.72 to 0.86.

* **X-Axis Range:** Approximately 1.0 x 10⁵ to 3.0 x 10⁵ tokens.

* **Data Points & Labels (with approximate coordinates):**

1. **Think@n** (Cyan): Top-left quadrant. Accuracy ≈ 0.85, Cost ≈ 1.2 x 10⁵.

2. **Self-Certainty@n** (Yellow): Upper-left quadrant. Accuracy ≈ 0.83, Cost ≈ 1.3 x 10⁵.

3. **Cons@n** (Green): Top-right quadrant. Accuracy ≈ 0.85, Cost ≈ 2.6 x 10⁵.

4. **Short@n** (Purple): Center-right. Accuracy ≈ 0.81, Cost ≈ 2.2 x 10⁵.

5. **Long@n** (Pink): Center-right, below Short@n. Accuracy ≈ 0.80, Cost ≈ 2.5 x 10⁵.

6. **Mean@n** (Blue): Bottom-right quadrant. Accuracy ≈ 0.73, Cost ≈ 2.5 x 10⁵.

**Right Plot: Qwen3-4B-Thinking**

* **Title:** "Qwen3-4B-Thinking" (top center).

* **Y-Axis Range:** Approximately 0.73 to 0.81.

* **X-Axis Range:** Approximately 4.0 x 10⁵ to 1.0 x 10⁶ tokens.

* **Data Points & Labels (with approximate coordinates):**

1. **Think@n** (Cyan): Top-left quadrant. Accuracy ≈ 0.80, Cost ≈ 5.0 x 10⁵.

2. **Self-Certainty@n** (Yellow): Upper-left quadrant. Accuracy ≈ 0.78, Cost ≈ 5.5 x 10⁵.

3. **Short@n** (Purple): Upper-right quadrant. Accuracy ≈ 0.78, Cost ≈ 8.5 x 10⁵.

4. **Cons@n** (Green): Upper-right quadrant, below Short@n. Accuracy ≈ 0.78, Cost ≈ 9.0 x 10⁵.

5. **Mean@n** (Blue): Bottom-right quadrant. Accuracy ≈ 0.73, Cost ≈ 9.5 x 10⁵.

6. **Long@n** (Pink): Bottom-right quadrant, overlapping/very close to Mean@n. Accuracy ≈ 0.73, Cost ≈ 9.5 x 10⁵.
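For later checks, the two point lists above can be transcribed into a small data structure. This is only a sketch: the names `POINTS` and `rows_by_cost` are illustrative, and every number is an approximate value read from the plots as listed in this description, not a measured result.

```python
# Approximate (accuracy, cost in tokens) per method, as listed in the description.
# These are visual estimates, not exact data.
POINTS = {
    "OSS-120B-medium": {
        "Think@n": (0.85, 1.2e5),
        "Self-Certainty@n": (0.83, 1.3e5),
        "Cons@n": (0.85, 2.6e5),
        "Short@n": (0.81, 2.2e5),
        "Long@n": (0.80, 2.5e5),
        "Mean@n": (0.73, 2.5e5),
    },
    "Qwen3-4B-Thinking": {
        "Think@n": (0.80, 5.0e5),
        "Self-Certainty@n": (0.78, 5.5e5),
        "Short@n": (0.78, 8.5e5),
        "Cons@n": (0.78, 9.0e5),
        "Mean@n": (0.73, 9.5e5),
        "Long@n": (0.73, 9.5e5),
    },
}


def rows_by_cost(model):
    """Return (method, accuracy, cost) rows for one model, cheapest first."""
    rows = [(method, acc, cost) for method, (acc, cost) in POINTS[model].items()]
    return sorted(rows, key=lambda row: row[2])


if __name__ == "__main__":
    for model in POINTS:
        print(model)
        for method, acc, cost in rows_by_cost(model):
            print(f"  {method:<18} accuracy ~{acc:.2f}  cost ~{cost:.1e}")
```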

### Detailed Analysis

**Trend Verification & Spatial Grounding:**

* **OSS-120B-medium Plot:** Accuracy does not rise with cost. `Think@n` achieves the highest accuracy (≈ 0.85) at the lowest cost (≈ 1.2 x 10⁵ tokens). `Cons@n` matches that accuracy at more than double the cost (≈ 2.6 x 10⁵ tokens). `Mean@n` is a clear outlier, pairing one of the highest costs (≈ 2.5 x 10⁵ tokens) with the lowest accuracy (≈ 0.73).

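* **Qwen3-4B-Thinking Plot:** The data points are more tightly clustered in accuracy (0.73 to 0.80, versus 0.73 to 0.85 for OSS-120B-medium) but span a wider absolute cost range (about 4.5 x 10⁵ tokens, versus about 1.4 x 10⁵). In relative terms the spans are similar: the most expensive method costs roughly twice as much as the cheapest in each plot. `Think@n` is again both the most accurate and the cheapest method. `Short@n` and `Cons@n` have nearly identical accuracy and similar cost. `Mean@n` and `Long@n` sit together in the high-cost, low-accuracy corner.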
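The comparisons in the two bullets above can be checked with simple arithmetic on the approximate coordinates. The sketch below assumes the values listed earlier in this description (visual estimates only); the names `oss`, `qwen`, and `spread` are illustrative.

```python
# Approximate (accuracy, cost in tokens) per method; visual estimates, not exact data.
oss = {
    "Think@n": (0.85, 1.2e5), "Self-Certainty@n": (0.83, 1.3e5),
    "Cons@n": (0.85, 2.6e5), "Short@n": (0.81, 2.2e5),
    "Long@n": (0.80, 2.5e5), "Mean@n": (0.73, 2.5e5),
}
qwen = {
    "Think@n": (0.80, 5.0e5), "Self-Certainty@n": (0.78, 5.5e5),
    "Short@n": (0.78, 8.5e5), "Cons@n": (0.78, 9.0e5),
    "Mean@n": (0.73, 9.5e5), "Long@n": (0.73, 9.5e5),
}


def spread(points):
    """Summarize how widely a set of points varies in accuracy and in cost."""
    accs = [acc for acc, _ in points.values()]
    costs = [cost for _, cost in points.values()]
    return {
        "accuracy_range": max(accs) - min(accs),
        "cost_span_abs": max(costs) - min(costs),
        "cost_span_ratio": max(costs) / min(costs),
    }


# How much more Cons@n costs than Think@n on OSS-120B-medium.
cons_vs_think = oss["Cons@n"][1] / oss["Think@n"][1]

if __name__ == "__main__":
    print(f"Cons@n / Think@n cost ratio (OSS): {cons_vs_think:.2f}")
    for name, points in (("OSS-120B-medium", oss), ("Qwen3-4B-Thinking", qwen)):
        s = spread(points)
        print(
            f"{name}: accuracy range {s['accuracy_range']:.2f}, "
            f"cost span {s['cost_span_abs']:.1e} tokens, "
            f"max/min cost ratio {s['cost_span_ratio']:.2f}"
        )
```

With these estimates, `Cons@n` costs about 2.2 times as much as `Think@n` on OSS-120B-medium. The Qwen3 plot has the larger absolute cost span but a slightly smaller max-to-min cost ratio (about 1.9 versus about 2.2), which is the quantity a logarithmic axis actually displays.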

### Key Observations

1. **Consistent Top Performer:** The `Think@n` method (cyan) achieves the highest accuracy (tied with `Cons@n` on OSS-120B-medium) at the lowest cost in both plots.

2. **Cost-Accuracy Disconnect:** Higher cost does not guarantee higher accuracy. For example, `Mean@n` (blue) is among the most expensive methods in both plots but yields the lowest accuracy (tied with `Long@n` on Qwen3-4B-Thinking).

3. **Model-Specific Scaling:** The "Qwen3-4B-Thinking" model operates at a significantly higher token cost range (5 x 10⁵ to 1 x 10⁶) compared to "OSS-120B-medium" (1 x 10⁵ to 3 x 10⁵) for these methods, despite being a smaller model (4B vs. 120B parameters). This suggests the "Thinking" variant may involve more verbose or complex internal reasoning steps.

4. **Method Clustering:** In the Qwen3 model, `Short@n` and `Cons@n` converge to nearly the same point, while `Mean@n` and `Long@n` converge at another. This suggests similar performance profiles for these method pairs within this specific model.
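One way to make these observations precise is a Pareto-dominance check: a method is dominated if some other method is at least as accurate and at least as cheap, and strictly better on one of the two. The sketch below is an illustration only, not an analysis from the original figure; it uses the approximate coordinates from this description, and the function names are hypothetical.

```python
# Approximate (accuracy, cost in tokens) per method; visual estimates, not exact data.
oss = {
    "Think@n": (0.85, 1.2e5), "Self-Certainty@n": (0.83, 1.3e5),
    "Cons@n": (0.85, 2.6e5), "Short@n": (0.81, 2.2e5),
    "Long@n": (0.80, 2.5e5), "Mean@n": (0.73, 2.5e5),
}
qwen = {
    "Think@n": (0.80, 5.0e5), "Self-Certainty@n": (0.78, 5.5e5),
    "Short@n": (0.78, 8.5e5), "Cons@n": (0.78, 9.0e5),
    "Mean@n": (0.73, 9.5e5), "Long@n": (0.73, 9.5e5),
}


def dominates(a, b):
    """True if point a is no less accurate and no more costly than b, and strictly better in one."""
    (acc_a, cost_a), (acc_b, cost_b) = a, b
    return acc_a >= acc_b and cost_a <= cost_b and (acc_a > acc_b or cost_a < cost_b)


def pareto_front(points):
    """Return the names of methods that no other method dominates, sorted alphabetically."""
    return sorted(
        name
        for name, point in points.items()
        if not any(dominates(other, point) for other_name, other in points.items() if other_name != name)
    )


if __name__ == "__main__":
    print("OSS-120B-medium front:", pareto_front(oss))
    print("Qwen3-4B-Thinking front:", pareto_front(qwen))
```

Under these estimated coordinates, `Think@n` is the only non-dominated method in both plots. Because the inputs are read by eye, small shifts in the estimates (for example, if `Cons@n` were slightly more accurate than `Think@n` on OSS-120B-medium) could add points to the front.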

### Interpretation

This visualization sets output quality (accuracy) against computational expense (token cost) for language model inference, and shows that the two do not simply trade off against each other. The data suggests that not all "chain-of-thought" or sampling-based methods are created equal.

* **Efficiency of `Think@n`:** The `Think@n` method appears to be the most efficient strategy, providing a strong accuracy boost without a proportional increase in token usage. This could imply it generates more focused or effective reasoning traces.

* **Inefficiency of Averaging (`Mean@n`):** The poor performance of `Mean@n` (likely averaging multiple outputs) is striking. It consumes substantial resources (high token cost) yet yields the lowest accuracy in both plots, suggesting that simple averaging may not be an effective strategy for these tasks and models, or may even be detrimental.

* **Model Behavior Differences:** The stark difference in cost scales between the two models highlights how model design choices (like a dedicated "Thinking" mode) can fundamentally alter the resource profile of inference techniques, independent of raw model size. The tighter clustering in the Qwen3 plot may indicate less variance in how its different sampling strategies perform.

**In summary, the charts argue for careful selection of inference methods, as the most expensive approach is not necessarily the most effective. `Think@n` emerges as a particularly compelling method for achieving high accuracy with controlled cost across both models.**