
## Line Chart: Surprisal vs. Training Steps for Match and Mismatch Conditions

### Overview

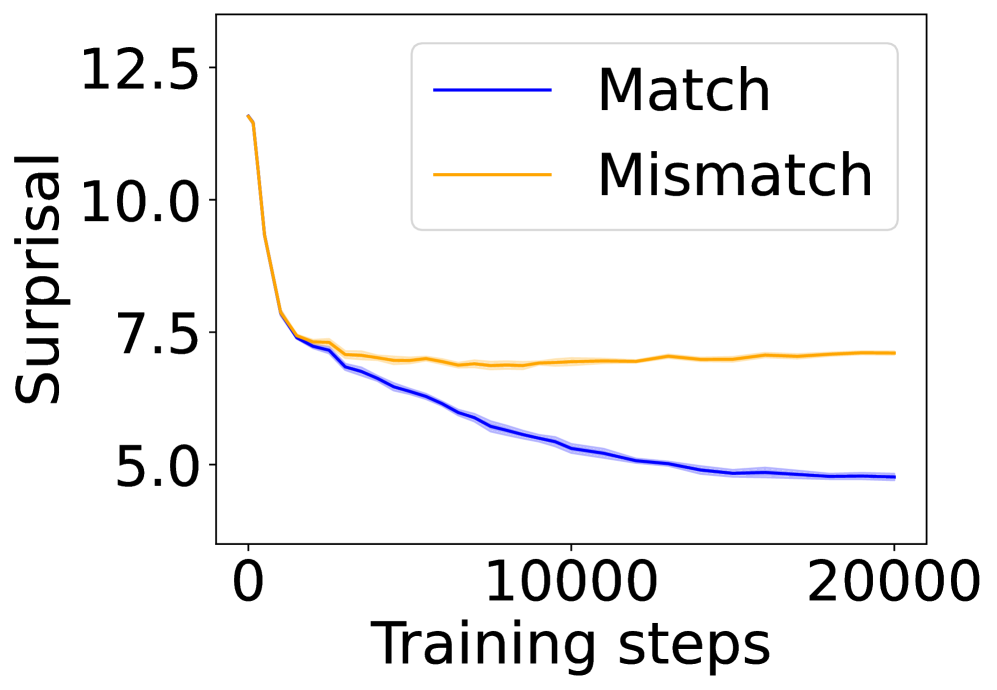

The image is a line chart plotting "Surprisal" (a measure of prediction uncertainty or information content) against "Training steps" for two distinct conditions: "Match" and "Mismatch." The chart illustrates how the surprisal value evolves over the course of model training for these two scenarios.
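For reference, surprisal is conventionally defined as the negative log-probability that a model assigns to the outcome it actually observes. The chart states neither the logarithm base nor whether the plotted values are averaged over tokens, so the base $b$ and the per-token notation below are assumptions, not details read from the figure.

```latex
s(x_t) = -\log_b p_\theta(x_t \mid x_{<t})
```

Under this definition, lower surprisal means the model assigned higher probability to what it observed, which is why a falling curve indicates improvement.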

### Components/Axes

* **X-Axis (Horizontal):**

    * **Label:** "Training steps"

    * **Scale:** Linear scale from 0 to 20,000.

    * **Major Tick Marks:** 0, 10000, 20000.

* **Y-Axis (Vertical):**

    * **Label:** "Surprisal"

    * **Scale:** Linear scale from approximately 4.0 to 13.0.

    * **Major Tick Marks:** 5.0, 7.5, 10.0, 12.5.

* **Legend:**

    * **Position:** Top-right corner of the plot area.

    * **Entry 1:** A solid blue line labeled "Match".

    * **Entry 2:** A solid orange line labeled "Mismatch".

### Detailed Analysis

**Data Series Trends and Approximate Values:**

1. **"Match" Series (Blue Line):**

    * **Trend:** The line exhibits a steep, continuous downward slope that gradually flattens over time. It shows a consistent decrease in surprisal as training progresses.

    * **Key Points (Approximate):**

        * Step 0: ~12.5

        * Step 2,500: ~7.5

        * Step 10,000: ~5.5

        * Step 20,000: ~5.0

2. **"Mismatch" Series (Orange Line):**

    * **Trend:** The line shows an initial sharp decline similar to the "Match" line, but then plateaus and remains relatively flat for the remainder of the training steps, with minor fluctuations.

    * **Key Points (Approximate):**

        * Step 0: ~12.5 (coincides with the "Match" line start)

        * Step 2,500: ~7.5 (coincides with the "Match" line at this point)

        * Step 10,000: ~7.0

        * Step 20,000: ~7.2

**Spatial Relationship:** The two lines originate from the same point at step 0. They remain closely aligned until approximately step 2,500, after which they diverge. The blue "Match" line continues its descent below the orange "Mismatch" line, creating a widening gap. The legend is positioned in the upper right quadrant, not overlapping with the primary data trends.
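The widening gap can be made concrete with a minimal sketch that subtracts the two series at the four key points listed above. The numbers are the approximate values read off the chart, not the underlying data, and the names `STEPS`, `MATCH`, `MISMATCH`, and `surprisal_gap` are introduced here purely for illustration.

```python
# Approximate key points read off the chart (not exact data).
STEPS = [0, 2_500, 10_000, 20_000]
MATCH = [12.5, 7.5, 5.5, 5.0]
MISMATCH = [12.5, 7.5, 7.0, 7.2]


def surprisal_gap(match, mismatch):
    """Return mismatch - match at each point (positive means Match is lower)."""
    return [round(mm - m, 2) for m, mm in zip(match, mismatch)]


gaps = surprisal_gap(MATCH, MISMATCH)
for step, gap in zip(STEPS, gaps):
    print(f"step {step:>6}: gap = {gap:.1f}")
```

With these approximate values the gap is 0.0 at steps 0 and 2,500, about 1.5 at step 10,000, and about 2.2 at step 20,000.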

### Key Observations

1. **Shared Initial Trajectory:** Both conditions start at the same high surprisal (~12.5) and decline at a nearly identical rate for the first ~2,500 training steps.

2. **Divergence Point:** A clear divergence occurs around step 2,500. The "Match" condition continues to improve (lower surprisal), while the "Mismatch" condition's improvement stalls.

3. **Final State:** By step 20,000, a sustained gap of roughly 2.2 separates the two conditions: the "Match" surprisal (~5.0) is substantially lower than the "Mismatch" surprisal (~7.2).

4. **Plateau Behavior:** The "Mismatch" line exhibits a plateau after the initial drop, indicating that further training does not lead to significant reduction in surprisal for this condition.
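One rough way to quantify the divergence and the plateau is to compare the average change in surprisal per 1,000 steps between consecutive key points. The sketch below uses the approximate values listed under Detailed Analysis; the helper `slope_per_1k` and the variable names are hypothetical, and the coarse four-point sampling hides the minor fluctuations visible in the chart.

```python
# Approximate key points read off the chart (not exact data).
STEPS = [0, 2_500, 10_000, 20_000]
MATCH = [12.5, 7.5, 5.5, 5.0]
MISMATCH = [12.5, 7.5, 7.0, 7.2]


def slope_per_1k(steps, values):
    """Average change in surprisal per 1,000 steps between consecutive points."""
    points = list(zip(steps, values))
    return [
        round((v1 - v0) / (s1 - s0) * 1000, 3)
        for (s0, v0), (s1, v1) in zip(points, points[1:])
    ]


match_slopes = slope_per_1k(STEPS, MATCH)
mismatch_slopes = slope_per_1k(STEPS, MISMATCH)
print("Match:   ", match_slopes)
print("Mismatch:", mismatch_slopes)
```

Both series fall by about 2.0 per 1,000 steps up to step 2,500. After that, the "Match" slope stays negative (about -0.267, then -0.05), while the "Mismatch" slope is close to zero (about -0.067, then +0.02), which is the plateau described above.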

### Interpretation

This chart points to a clear difference in how the model's surprisal evolves under the "Match" and "Mismatch" conditions during training.

* **What the data suggests:** The model keeps reducing its surprisal on the "Match" condition throughout training, whereas on the "Mismatch" condition the reduction stalls early, at roughly 7.0 to 7.5. The chart does not define the two conditions; "Mismatch" may correspond to out-of-distribution, adversarial, or contradictory inputs. The shared early decline suggests the model first learns features that help in both conditions, and the later divergence suggests that whatever it learns afterward benefits only the "Match" condition.

* **Relationship between elements:** The shared starting point and initial parallel decline establish a common baseline. The subsequent divergence is the chart's central narrative, highlighting the limitation in the model's learning capacity for mismatched scenarios. The plateau of the "Mismatch" line is the critical visual evidence of this limitation.

* **Notable implications:** This pattern is consistent with a model that performs well on in-distribution inputs but generalizes poorly under some kinds of distributional shift, assuming "Mismatch" represents such a shift. The persistent gap suggests that additional training steps alone are unlikely to improve performance on the "Mismatch" condition; a change in model architecture, training data, or objective function may be required.