## Diagram: Learning Pipeline from Nature to Human Cognition

### Overview

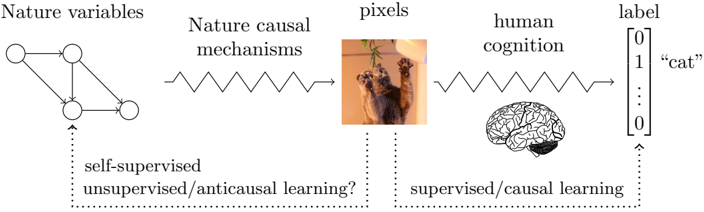

The image is a conceptual diagram of a data-generating and labeling pipeline, of the kind used to discuss learning or perception in artificial intelligence and cognitive science. It traces a sequence from natural phenomena to a human-assigned label, with annotations that attach learning paradigms to parts of that sequence. The diagram combines text labels, abstract graphical elements, and two pictures: a photograph of a cat and an illustration of a human brain.

### Components/Axes

The diagram is organized horizontally from left to right, with a secondary flow indicated by dotted arrows below the main sequence.

**Main Horizontal Flow (Left to Right):**

1. **"Nature variables"**: Represented by a directed graph (nodes and edges) on the far left.

2. **"Nature causal mechanisms"**: Represented by a wavy, oscillating line (like a spring or wave) connecting the graph to the next element.

3. **"pixels"**: Represented by a small, square photograph of a tabby cat lying on its back, paws in the air.

4. **"human cognition"**: Represented by a wavy line (similar to the previous one) connecting the cat image to an illustration of a human brain.

5. **"label"**: Represented by a vertical vector or column containing the numbers `0`, `1`, a vertical ellipsis (`⋮`), and `0`. To the right of this vector is the text `"cat"` in quotes.

**Secondary Flow (Bottom, indicated by dotted arrows):**

* A dotted arrow originates from the "Nature variables" graph and points downward to the text: **"self-supervised"** and **"unsupervised/anticausal learning?"**.

* A second dotted arrow originates from the "pixels" (cat image) and points downward to the text: **"supervised/causal learning"**.

* A third dotted arrow originates from the "label" vector and points downward to the same text: **"supervised/causal learning"**.

### Detailed Analysis

The diagram constructs a narrative of information transformation:

1. **Origin (Nature):** The process begins with abstract "Nature variables" modeled as a causal graph. The connection to "Nature causal mechanisms" suggests these variables interact through underlying physical or logical laws.

2. **Perception (Pixels):** These causal mechanisms produce observable data, exemplified by the "pixels" of a cat image. This represents the raw sensory input.

3. **Interpretation (Cognition):** The raw pixel data is processed by "human cognition," symbolized by the brain. This stage implies the application of learned patterns, context, and understanding.

4. **Output (Label):** The final output is a discrete "label" (`"cat"`), represented as a one-hot encoded vector (where the second position is `1` and others are `0`). This is the categorical identification.
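The one-hot representation described in step 4 can be sketched in a few lines of Python. This is a minimal illustration, not something shown in the diagram: the class vocabulary `CLASSES` and the helper names `one_hot` and `decode` are hypothetical, and the only detail taken from the figure is that the `1` for "cat" sits in the second position.

```python
# Hypothetical class vocabulary. The diagram elides most entries of the
# vector; it only shows that "cat" corresponds to the second position.
CLASSES = ["dog", "cat", "bird", "fish"]


def one_hot(label, classes=CLASSES):
    """Return a list with 1 at the label's index and 0 everywhere else."""
    index = classes.index(label)  # raises ValueError for an unknown label
    return [1 if i == index else 0 for i in range(len(classes))]


def decode(vector, classes=CLASSES):
    """Map a one-hot vector back to its class name."""
    if sorted(vector) != [0] * (len(classes) - 1) + [1]:
        raise ValueError("expected exactly one 1 and zeros elsewhere")
    return classes[vector.index(1)]


cat_vector = one_hot("cat")
print(cat_vector)          # [0, 1, 0, 0]
print(decode(cat_vector))  # cat
```

With a longer vocabulary the vector simply grows, which is what the vertical ellipsis in the figure stands for.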

The lower annotations map learning paradigms to stages of this pipeline:

* **Self-supervised / Unsupervised / Anticausal learning?** is linked to the initial "Nature variables." This suggests learning the structure of the world (the causal graph) without explicit labels, possibly in an anticausal direction (from effects to causes).

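* **Supervised / Causal learning** is linked to both the "pixels" (input data) and the final "label" (output). This represents the standard supervised learning setup, in which a model learns the mapping from input data to known output labels. The mapping counts as causal here because, in the diagram, the label is produced from the pixels by human cognition, so predicting the label from the pixels follows the direction of the generating process.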
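The two annotations can be made concrete with a toy simulation. The sketch below is an assumption-laden illustration rather than anything specified in the diagram: the image is reduced to a single brightness value, the numeric parameters (means 0.3 and 0.7, noise 0.1, labeling cutoff 0.5) are invented, and the functions `sample_pipeline`, `fit_threshold`, and `two_means` are hypothetical names. Threshold fitting stands in for supervised learning along the pixels-to-label direction, and a two-centre clustering stands in for unsupervised recovery of the hidden nature variable from pixels alone.

```python
import random


def sample_pipeline(rng):
    """One pass through the toy chain: nature variable -> pixels -> label."""
    is_cat = rng.random() < 0.5                     # hidden nature variable (assumed binary)
    pixel = rng.gauss(0.7 if is_cat else 0.3, 0.1)  # stand-in for nature's causal mechanism
    label = "cat" if pixel > 0.5 else "not cat"     # stand-in for human cognition
    return is_cat, pixel, label


def fit_threshold(pixels, labels):
    """Supervised (pixels -> label): midpoint between the two labeled groups."""
    highest_other = max(p for p, y in zip(pixels, labels) if y != "cat")
    lowest_cat = min(p for p, y in zip(pixels, labels) if y == "cat")
    return (highest_other + lowest_cat) / 2


def two_means(pixels, steps=20):
    """Unsupervised (pixels only): 1-D k-means with two centres."""
    low, high = min(pixels), max(pixels)
    for _ in range(steps):
        boundary = (low + high) / 2
        left = [p for p in pixels if p <= boundary]
        right = [p for p in pixels if p > boundary]
        low, high = sum(left) / len(left), sum(right) / len(right)
    return low, high


rng = random.Random(0)  # fixed seed so the run is repeatable
samples = [sample_pipeline(rng) for _ in range(1000)]
pixels = [p for _, p, _ in samples]
labels = [y for _, _, y in samples]

threshold = fit_threshold(pixels, labels)    # uses labels
low_centre, high_centre = two_means(pixels)  # never sees labels
print(f"learned threshold: {threshold:.2f}")
print(f"cluster centres: {low_centre:.2f}, {high_centre:.2f}")
```

In this toy setting the supervised step recovers the labeler's cutoff, while the clustering step recovers approximate locations of the two hidden states without ever seeing a label. Whether the second step deserves the name "anticausal" is the same question the diagram leaves open.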

### Key Observations

* **Dual Representation:** The diagram uses both abstract symbols (graphs, vectors, wavy lines) and concrete images (cat photo, brain illustration) to bridge theoretical concepts with tangible examples.

* **Question Mark:** The question mark in `"unsupervised/anticausal learning?"` marks the assignment of that paradigm to the "Nature variables" stage as tentative, a hypothesis rather than a settled claim.

* **Vector Notation:** The label is explicitly shown as a numerical vector (`[0, 1, ..., 0]`), which is a standard format in machine learning for categorical data, grounding the abstract concept in technical practice.

* **Spatial Layout:** The main flow is linear and runs from left to right. The learning paradigm annotations are placed directly below the components they relate to, creating a clear visual association.

### Interpretation

This diagram can be read as a **Peircean investigative model** of perception and learning. On this reading, what we call "understanding" (e.g., recognizing a cat) is not a direct readout of nature but a multi-stage inferential process:

1. **Firstness (Quality/Possibility):** The "Nature variables" and their causal mechanisms represent the underlying, unobservable reality, the realm of pure possibility.

2. **Secondness (Reaction/Fact):** The "pixels" represent the brute fact of sensory experience—the reaction of our sensors (or cameras) to that reality. This is the dyadic encounter.

3. **Thirdness (Representation/Law):** "Human cognition" and the final "label" represent the interpretive law or habit. It is the mediating representation that connects the sensory fact (pixels) back to the underlying reality (nature) in a meaningful way, allowing for prediction and communication.

The annotations on learning paradigms suggest a critical insight: **Supervised learning** (mapping pixels to labels) mimics the final, human-like cognitive step. However, a deeper, more fundamental form of learning, potentially **self-supervised or unsupervised**, is required to grasp the initial "Nature variables" and their causal structure. The question mark signals that it remains an open or context-dependent question whether this early-stage learning is truly "anticausal" (inferring causes from effects).

In essence, the diagram posits that robust AI or cognitive systems may need to learn not just the final labeling function, but also the causal structure of the world that generates the data in the first place. The "cat" is not just a pattern in pixels; it is the end product of a causal chain in nature, which our cognition has learned to invert.