## Diagram: Fallacy Classification Pipeline

### Overview

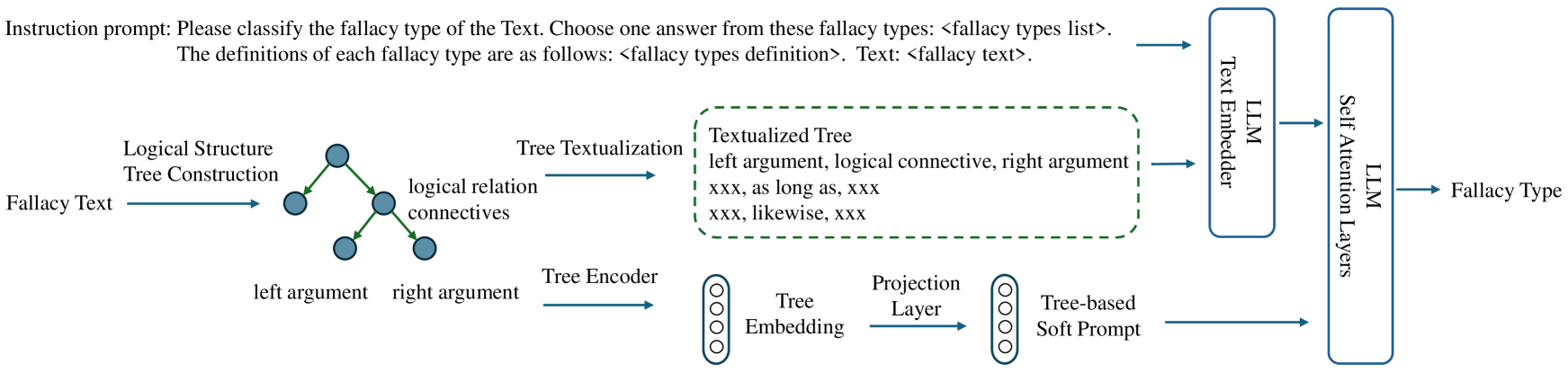

The image is a technical flowchart of a pipeline for classifying logical fallacies in text. The system combines symbolic logical-structure analysis with a Large Language Model (LLM) to produce a final "Fallacy Type" classification. The raw "Fallacy Text" is first parsed into a logical structure tree. That tree is then processed along two parallel pathways, one textual and one embedding-based, which converge with the instruction prompt in the LLM for the final prediction.

### Components

The diagram is organized into several distinct regions and components:

1. **Instruction Prompt (Top Region):**

* **Text:** "Instruction prompt: Please classify the fallacy type of the Text. Choose one answer from these fallacy types: <fallacy types list>. The definitions of each fallacy type are as follows: <fallacy types definition>. Text: <fallacy text>."

* **Function:** This text block defines the core task and provides the template for the input to the final LLM component. It uses placeholders (`<...>`) for dynamic content.

2. **Input (Leftmost Element):**

* **Label:** "Fallacy Text"

* **Function:** The raw text input containing a potential logical fallacy to be analyzed.

3. **Pathway 1: Symbolic Logical Structure Processing (Upper-Middle Region):**

* **Component 1:** "Logical Structure Tree Construction"

* **Input:** "Fallacy Text"

* **Output:** A tree diagram with nodes (blue circles) and directed edges (green arrows). Labels on the edges include "logical relation connectives." The leaf nodes are labeled "left argument" and "right argument."

* **Component 2:** "Tree Textualization"

* **Input:** The constructed logical tree.

* **Output:** A dashed green box labeled "Textualized Tree." Inside, it lists a template and examples:

* "left argument, logical connective, right argument"

* "xxx, as long as, xxx"

* "xxx, likewise, xxx"

4. **Pathway 2: Neural Embedding Processing (Lower-Middle Region):**

* **Component 1:** "Tree Encoder"

* **Input:** The same logical structure tree from Pathway 1.

* **Output:** "Tree Embedding" (represented by a vertical column of four circles).

* **Component 2:** "Projection Layer"

* **Input:** "Tree Embedding"

* **Output:** "Tree-based Soft Prompt" (another vertical column of four circles).

5. **Convergence & LLM Processing (Right Region):**

* **Component 1:** "LLM Text Embedder"

* **Inputs:**

1. The original "Instruction prompt" (from the top).

2. The "Textualized Tree" from Pathway 1.

* **Component 2:** "LLM Self Attention Layers"

* **Input:** The output from the "LLM Text Embedder."

* **Additional Input:** The "Tree-based Soft Prompt" from Pathway 2.

* **Final Output:** An arrow points from the "LLM Self Attention Layers" to the label "Fallacy Type."
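
The textualization step and the prompt template described above can be sketched in a few lines of Python. This is a minimal illustration, not the diagram authors' implementation: the `RelationNode` class, the `flatten`, `textualize`, and `build_prompt` helpers, the example sentence, and the choice to append the textualized tree after the prompt on separate lines are all assumptions made for this sketch. Only the template wording and the "left argument, logical connective, right argument" format come from the diagram.

```python
from dataclasses import dataclass
from typing import Dict, List, Union


@dataclass
class RelationNode:
    """Hypothetical internal tree node: a connective joining two arguments."""
    connective: str
    left: Union["RelationNode", str]
    right: Union["RelationNode", str]


def flatten(arg: Union[RelationNode, str]) -> str:
    """Render an argument (leaf text or whole subtree) as plain text."""
    if isinstance(arg, str):
        return arg
    return f"{flatten(arg.left)} {arg.connective} {flatten(arg.right)}"


def textualize(node: Union[RelationNode, str]) -> List[str]:
    """Emit one 'left argument, logical connective, right argument'
    line per relation node, visiting the tree top-down."""
    if isinstance(node, str):
        return []  # leaves carry no relation of their own
    line = f"{flatten(node.left)}, {node.connective}, {flatten(node.right)}"
    return [line] + textualize(node.left) + textualize(node.right)


# Template wording taken from the diagram; the {..} slots stand in for
# the <fallacy types list>, <fallacy types definition>, <fallacy text> placeholders.
PROMPT_TEMPLATE = (
    "Please classify the fallacy type of the Text. "
    "Choose one answer from these fallacy types: {types}. "
    "The definitions of each fallacy type are as follows: {definitions}. "
    "Text: {text}."
)


def build_prompt(text: str, definitions: Dict[str, str],
                 tree: Union[RelationNode, str]) -> str:
    """Fill the template, then append the textualized tree (assumed layout)."""
    types = ", ".join(definitions)
    defs = "; ".join(f"{name}: {desc}" for name, desc in definitions.items())
    prompt = PROMPT_TEMPLATE.format(types=types, definitions=defs, text=text)
    return prompt + "\n" + "\n".join(textualize(tree))


# Invented example input, for illustration only.
example_tree = RelationNode(
    "likewise",
    RelationNode("as long as", "you will pass", "you study"),
    "she will pass",
)
for line in textualize(example_tree):
    print(line)
```

Each relation node yields one line, so a deeper tree produces more lines rather than one long nested string. Whether the original system orders or formats these lines the same way is not visible in the diagram.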

### Detailed Analysis

The diagram shows a hybrid symbolic-neural architecture:

* **Flow Direction:** The process flows from left to right. The "Fallacy Text" is first parsed into a logical structure tree, and that tree then feeds two parallel processing streams that later merge in the LLM.

* **Pathway 1 (Symbolic):** Converts raw text into an explicit logical tree structure, then converts that tree back into a structured textual format ("Textualized Tree"). This path emphasizes interpretable, rule-based logical relationships.

* **Pathway 2 (Neural):** Encodes the same logical tree into a dense vector representation ("Tree Embedding") and projects it into a "Soft Prompt." This path creates a continuous, model-ready representation of the logical structure.

* **Integration:** Both pathways feed into a central LLM. The "Textualized Tree" is combined with the instruction prompt and embedded. Crucially, the "Tree-based Soft Prompt" is injected directly into the LLM's self-attention layers, suggesting it acts as a guiding signal or bias for the model's processing of the textual input.
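
The integration step can be illustrated with a small, dependency-free Python sketch. It rests on several assumptions that the diagram does not confirm: that the tree embedding is a list of vectors, that the projection layer is a single shared linear map with a bias, and that the soft prompt reaches the self-attention layers by being prepended to the embedded text tokens (a common soft-prompting arrangement). The names `matvec`, `project`, and `prepend_soft_prompt` are hypothetical.

```python
from typing import List

Vector = List[float]


def matvec(weight: List[Vector], vec: Vector) -> Vector:
    """Multiply a (rows x cols) matrix by a length-cols vector."""
    return [sum(w * x for w, x in zip(row, vec)) for row in weight]


def project(tree_embedding: List[Vector], weight: List[Vector],
            bias: Vector) -> List[Vector]:
    """Assumed projection layer: map each tree-embedding vector into the
    LLM hidden size with one shared linear map plus bias."""
    return [
        [s + b for s, b in zip(matvec(weight, vec), bias)]
        for vec in tree_embedding
    ]


def prepend_soft_prompt(soft_prompt: List[Vector],
                        token_embeddings: List[Vector]) -> List[Vector]:
    """Assumed injection: place soft-prompt vectors ahead of the embedded
    text tokens so the attention layers see one combined sequence."""
    hidden = len(token_embeddings[0])
    if any(len(vec) != hidden for vec in soft_prompt):
        raise ValueError("soft prompt must match the LLM hidden size")
    return soft_prompt + token_embeddings


# Toy sizes: tree vectors of dimension 2, LLM hidden size 3.
weight = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]  # 3 x 2 projection matrix
bias = [0.0, 0.0, 1.0]
tree_embedding = [[2.0, 3.0]] * 4               # four vectors, as drawn
token_embeddings = [[0.0, 0.0, 0.0]] * 5        # stand-in embedded prompt

soft_prompt = project(tree_embedding, weight, bias)
sequence = prepend_soft_prompt(soft_prompt, token_embeddings)
print(len(sequence), len(sequence[0]))
```

In a real system the projection weights would be learned and the vectors would be tensors in a deep-learning framework. The sketch only shows the shape bookkeeping: the projection must output vectors of the LLM's hidden size before the two inputs can share one sequence.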

### Key Observations

1. **Dual Representation:** The system explicitly maintains two representations of the logical structure: a human-readable textual form and a machine-optimized embedding form.

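2. **Soft Prompting:** The "Tree-based Soft Prompt" suggests a parameter-efficient tuning method in which the logical structure conditions the LLM through learned prompt vectors rather than through changes to its core weights. The diagram itself does not indicate which parameters are trained.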

3. **Placeholder Syntax:** The instruction prompt uses three placeholders (`<fallacy types list>`, `<fallacy types definition>`, `<fallacy text>`), indicating that it is a template filled in at run time.

4. **Visual Coding:** Green marks the tree edges and the "Textualized Tree" box, while blue marks the tree nodes and the LLM components. The color coding separates the textualization elements from the rest of the pipeline.

### Interpretation

This diagram represents a neuro-symbolic approach to a complex NLP task. The core innovation is the dual use of logical structure:

1. **Explicit Guidance:** By textualizing the logical tree, the system provides the LLM with a clean, structured representation of the argument's form, potentially making fallacies more detectable than in raw, noisy text.

2. **Implicit Guidance:** The soft prompt derived from the tree embedding likely steers the LLM's internal attention mechanisms to focus on the logically salient parts of the input text as defined by the constructed tree.

The pipeline suggests that pure LLM reasoning on fallacy text may be insufficient or unreliable. By first parsing the text into a logical structure tree and then using that tree to condition the LLM's processing, the system aims for more accurate, more robust, and potentially more interpretable fallacy classification. The architecture treats the *form* of an argument (its logical skeleton) as a signal that complements its *content* (the specific words used) when identifying reasoning errors.