EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

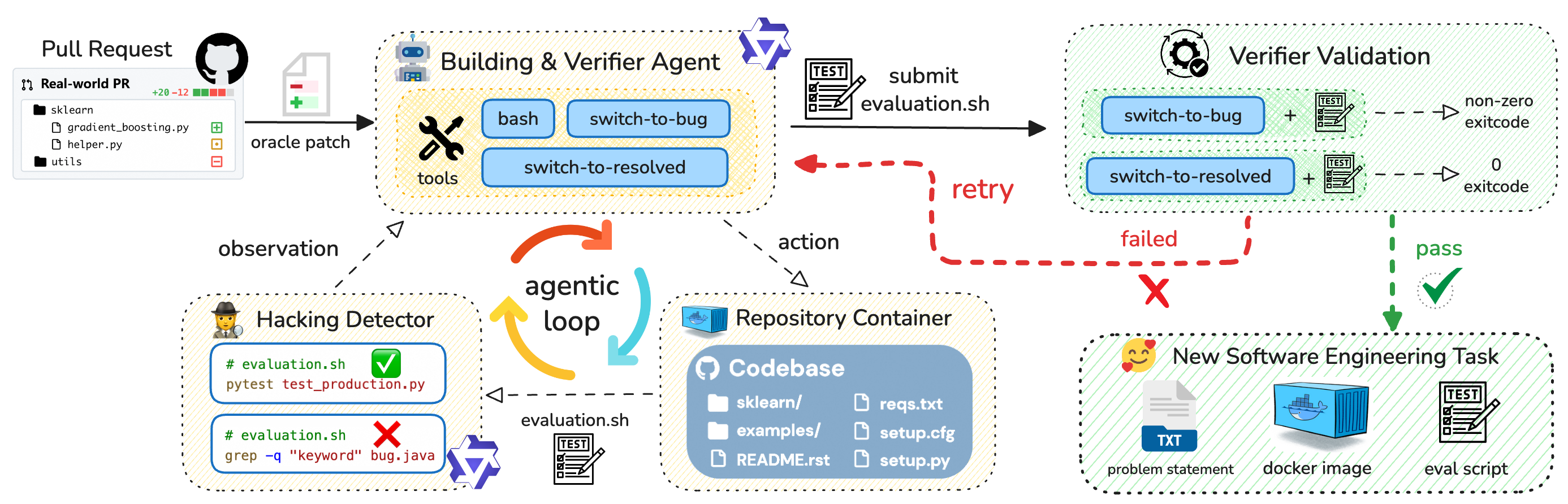

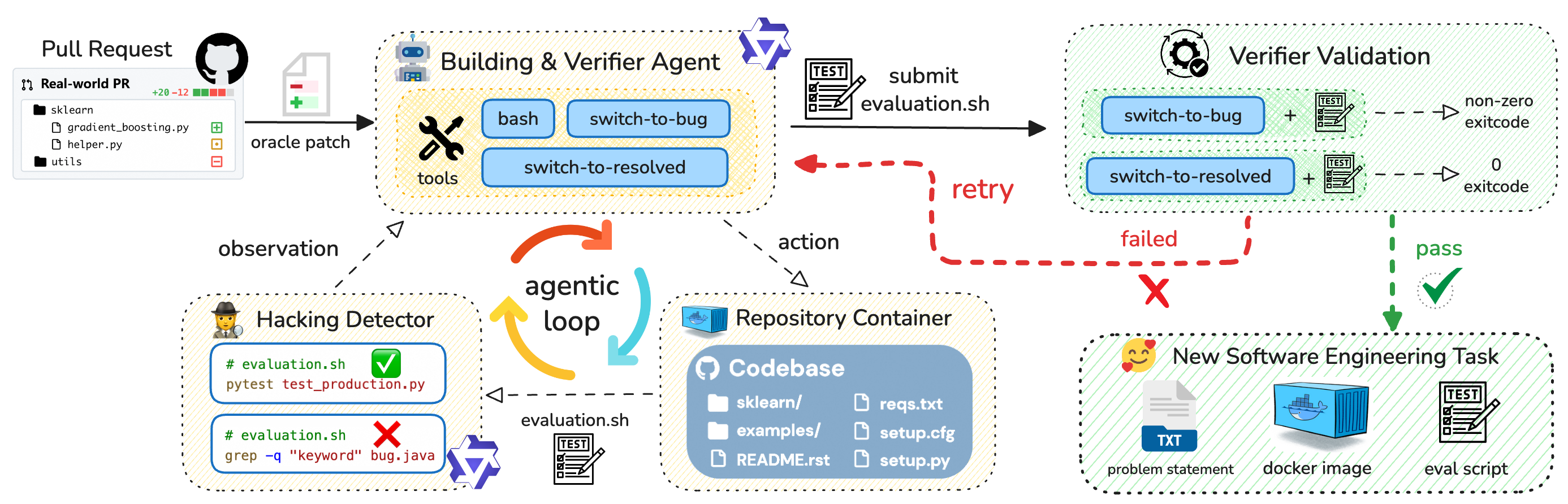

## Diagram: Automated Software Patch Evaluation and Validation Pipeline

### Overview

This image is a technical flowchart of an automated pipeline that starts from a real-world pull request. An AI agent builds the repository environment and writes an `evaluation.sh` script, which is then validated against both the buggy and the resolved state of the codebase. A "Hacking Detector" screens out evaluation scripts that game the check, and a validated run yields a new software engineering task. Failed validation triggers a retry.

### Components/Elements

The diagram is organized into several interconnected functional blocks, each with specific icons, labels, and text.

1. **Pull Request (Top-Left)**

* **Icon:** GitHub logo.

* **Title:** "Pull Request"

* **Sub-element:** A box labeled "Real-world PR" showing a diff summary: `+20 -12` with green and red bars.

* **File List:**

* `sklearn/` (folder icon)

* `gradient_boosting.py` (file icon with a green plus)

* `helper.py` (file icon with an orange square)

* `utils` (folder icon with a red minus)

* **Output Arrow:** Labeled "oracle patch", pointing to the "Building & Verifier Agent".

2. **Building & Verifier Agent (Top-Center)**

* **Icon:** A robot head.

* **Title:** "Building & Verifier Agent"

* **Sub-element:** A dashed box labeled "tools" containing three blue buttons:

* `bash`

* `switch-to-bug`

* `switch-to-resolved`

* **Output Arrow:** A solid black arrow labeled "submit evaluation.sh" pointing to "Verifier Validation".

3. **Verifier Validation (Top-Right)**

* **Icon:** A gear with a checkmark.

* **Title:** "Verifier Validation"

* **Process Flow:**

* **Path 1 (Top):** `switch-to-bug` + `TEST` icon → dashed arrow → `non-zero exitcode`

* **Path 2 (Bottom):** `switch-to-resolved` + `TEST` icon → dashed arrow → `0 exitcode`

* **Outcome Arrows:**

* A green dashed arrow labeled "pass" with a green checkmark points down to "New Software Engineering Task".

* A red dashed arrow labeled "failed" with a red 'X' points left, leading to a "retry" loop back to the "Building & Verifier Agent".

4. **Hacking Detector (Bottom-Left)**

* **Icon:** A detective/spy.

* **Title:** "Hacking Detector"

* **Sub-elements:** Two code blocks monitoring `evaluation.sh`:

* **Block 1 (Pass):** `# evaluation.sh` with a green checkmark. Code: `pytest test_production.py`

* **Block 2 (Fail):** `# evaluation.sh` with a red 'X'. Code: `grep -q "keyword" bug.java`

* **Connection:** A dashed arrow labeled "observation" points from the "Building & Verifier Agent" to this block. Another dashed arrow labeled "evaluation.sh" with a `TEST` icon points from this block to the "Repository Container".

5. **Repository Container (Bottom-Center)**

* **Icon:** A Docker container.

* **Title:** "Repository Container"

* **Sub-element:** A blue box labeled "Codebase" with a GitHub logo, listing:

* `sklearn/` (folder)

* `examples/` (folder)

* `README.rst` (file)

* `reqs.txt` (file)

* `setup.cfg` (file)

* `setup.py` (file)

* **Connection:** A dashed arrow labeled "action" points from the "Building & Verifier Agent" to this block.

6. **New Software Engineering Task (Bottom-Right)**

* **Icon:** A smiling face with hearts.

* **Title:** "New Software Engineering Task"

* **Output Elements:**

* A document icon labeled "TXT" with the text "problem statement".

* A Docker container icon labeled "docker image".

* A `TEST` script icon labeled "eval script".

7. **Central Agentic Loop**

* **Label:** "agentic loop"

* **Visual:** A circular flow of three curved arrows (orange, yellow, light blue) connecting the "Building & Verifier Agent", "Hacking Detector", and "Repository Container".
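
The three outputs listed under "New Software Engineering Task" could be modeled as a small record. The following is a minimal sketch, not the pipeline's actual data format: the class name, field names, and the image tag are hypothetical, chosen only to mirror the labels in the diagram.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SoftwareEngineeringTask:
    """Hypothetical container for the three artifacts shown in the diagram."""

    problem_statement: str  # the "TXT" document
    docker_image: str       # reference to the built repository image
    eval_script: str        # contents of the validated evaluation.sh


task = SoftwareEngineeringTask(
    problem_statement="Placeholder description of the bug.",
    docker_image="example/repo-env:latest",  # hypothetical tag
    eval_script="pytest test_production.py\n",
)
print(task.docker_image)
```

Freezing the dataclass reflects the idea that a task is emitted only after validation and should not change afterwards; that choice is an assumption of this sketch.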

### Detailed Analysis

The diagram details a closed-loop, automated workflow:

1. **Input:** A real-world pull request (PR) containing code changes (e.g., to `gradient_boosting.py`) is the starting input.

2. **Agent Processing:** The "Building & Verifier Agent" receives the PR patch. It has tools (`bash`, `switch-to-bug`, `switch-to-resolved`) to manipulate the codebase state. It submits an `evaluation.sh` script for validation.

3. **Validation:** The "Verifier Validation" block runs the submitted `evaluation.sh` in two codebase states:

* **`switch-to-bug`:** Puts the codebase in its buggy state. The script is expected to fail here (non-zero exit code), which confirms that it detects the bug.

* **`switch-to-resolved`:** Puts the codebase in the state with the oracle patch applied. The script is expected to pass here (exit code 0), which confirms that the patch fixes the bug.

4. **Decision Point:**

* **Pass:** If validation succeeds (green path), the system generates a "New Software Engineering Task" comprising a problem statement, a docker image, and an evaluation script.

* **Fail:** If validation fails (red path), a "retry" signal is sent back to the "Building & Verifier Agent", which can then submit a revised `evaluation.sh`.

5. **Hacking Detection:** The "Hacking Detector" observes the agent's actions and the `evaluation.sh` script. It rejects (red X) scripts that game the check instead of exercising the code; the diagram's example is `grep -q "keyword" bug.java`, which searches a source file for text rather than running tests. A legitimate test command (`pytest test_production.py`) is accepted (green check).

6. **Environment:** The "Repository Container" provides the isolated codebase environment (`sklearn/`, `examples/`, etc.) where the agent performs its actions.
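
The two-state check in step 3 can be sketched as a single predicate over exit codes. This is an illustrative sketch under stated assumptions: `run_eval` is a hypothetical callable that switches the codebase to the named state, runs `evaluation.sh`, and returns its exit code; the stub scripts stand in for real container runs.

```python
from typing import Callable


def verifier_is_valid(run_eval: Callable[[str], int]) -> bool:
    """Accept the script only if it fails on the bug and passes on the fix."""
    bug_exit = run_eval("bug")            # after switch-to-bug
    resolved_exit = run_eval("resolved")  # after switch-to-resolved
    return bug_exit != 0 and resolved_exit == 0


# Stubs standing in for real runs of evaluation.sh (hypothetical).
def discriminating_script(state: str) -> int:
    return 1 if state == "bug" else 0


def always_passing_script(state: str) -> int:
    return 0


print(verifier_is_valid(discriminating_script))  # True
print(verifier_is_valid(always_passing_script))  # False
```

A script that passes in both states is rejected because it cannot tell the buggy code from the fixed code.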

### Key Observations

* **Dual Validation Logic:** The system doesn't just check that the evaluation script passes on the fixed code; it first verifies that the script fails when the bug is present (`switch-to-bug` + test should fail) and then that it passes once the patch is applied (`switch-to-resolved` + test should pass). A script that behaves the same in both states cannot distinguish the bug from the fix and is rejected.

* **Hacking Detection as a First-Class Check:** The "Hacking Detector" is a dedicated component inside the agentic loop. It scrutinizes the agent's `evaluation.sh` for shortcuts that would satisfy the exit-code check without running real tests, not just for functional correctness.

* **Retry Mechanism:** The workflow is designed to be iterative. An agent whose evaluation script fails validation receives a "failed" signal and can try again.

* **Output as a New Task:** The successful output isn't just a "merged PR." It's a packaged "New Software Engineering Task," suggesting this system might be used to generate training data or benchmarks for other AI agents.
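
The diagram does not show how the "Hacking Detector" decides, so the following is only a toy heuristic that reproduces its two examples. The command lists and the function name are assumptions of this sketch, and a real detector would need far more than a first-token check (for instance, it would miss `python -m pytest`).

```python
import shlex

# Assumed, non-exhaustive command lists; not taken from the diagram.
TEST_RUNNERS = {"pytest", "tox", "unittest"}
INSPECTION_TOOLS = {"grep", "diff", "cat"}


def looks_like_hack(script: str) -> bool:
    """Flag scripts that inspect source text or never invoke a test runner."""
    commands = []
    for line in script.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):
            continue  # skip blank lines and comments such as "# evaluation.sh"
        tokens = shlex.split(line)
        if tokens:
            commands.append(tokens[0])
    runs_tests = any(cmd in TEST_RUNNERS for cmd in commands)
    inspects_source = any(cmd in INSPECTION_TOOLS for cmd in commands)
    return inspects_source or not runs_tests


legit = "# evaluation.sh\npytest test_production.py\n"
hack = '# evaluation.sh\ngrep -q "keyword" bug.java\n'
print(looks_like_hack(legit))  # False
print(looks_like_hack(hack))   # True
```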

### Interpretation

This diagram represents an **AI-driven framework for turning real-world pull requests into verified software engineering tasks**. Its core purpose appears to be to build, for each PR, a reproducible environment and an evaluation script that fails on the buggy code and passes on the fixed code, while rejecting scripts that "hack" the check instead of running real tests.

The **Peircean investigation** reveals:

* **Sign (Representation):** The flowchart symbols (icons, arrows, boxes) represent the components of an automated DevOps/MLOps pipeline.

* **Object (Referent):** The actual process of an AI agent attempting to solve a GitHub pull request issue in a sandboxed environment.

* **Interpretant (Meaning):** The system enforces a rigorous, multi-stage validation protocol. It moves beyond simple "pass/fail" on a test suite to include:

1. **Bug Existence Verification:** Ensuring the test is meaningful.

2. **Patch Correctness Verification:** Ensuring the solution works.

3. **Security/Intent Verification:** Ensuring the agent isn't cheating.

4. **Generative Output:** Creating a new, validated task from the successful interaction.

The **"agentic loop"** is central, indicating the agent (Building & Verifier) continuously interacts with its environment (Repository Container) and receives feedback (from Hacking Detector and Verifier Validation) to refine its actions. This is a classic sense-think-act cycle for autonomous agents.
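
The retry behavior of this loop can be sketched as follows. It is a minimal illustration, not the system's implementation: `propose`, `validate`, and the `max_attempts` cap are hypothetical (the diagram shows only a "retry" arrow, with no stated limit), and the stubs replace the real agent and validator.

```python
from typing import Callable, Optional


def run_agentic_loop(
    propose: Callable[[Optional[str]], str],
    validate: Callable[[str], bool],
    max_attempts: int = 3,  # assumed cap; not specified in the diagram
) -> Optional[str]:
    """Ask the agent for a script, validate it, and feed failures back."""
    feedback: Optional[str] = None
    for attempt in range(1, max_attempts + 1):
        script = propose(feedback)
        if validate(script):
            return script  # "pass" path: script is accepted
        feedback = f"attempt {attempt} failed validation"  # "retry" path
    return None


# Stub agent: submits a trivial script first, a real test command after feedback.
def stub_agent(feedback: Optional[str]) -> str:
    return "exit 0" if feedback is None else "pytest test_production.py"


# Stub validator standing in for Verifier Validation plus the Hacking Detector.
def stub_validator(script: str) -> bool:
    return script.startswith("pytest")


accepted = run_agentic_loop(stub_agent, stub_validator)
print(accepted)
```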

The ultimate implication is that this framework can be used to **benchmark or train AI coding agents** in a safe, automated, and highly controlled manner, producing validated datasets of solved problems for further research. The presence of `sklearn` in the example PR suggests a focus on machine learning libraries.
