## Flowchart: Software Engineering Task Automation Pipeline

### Overview

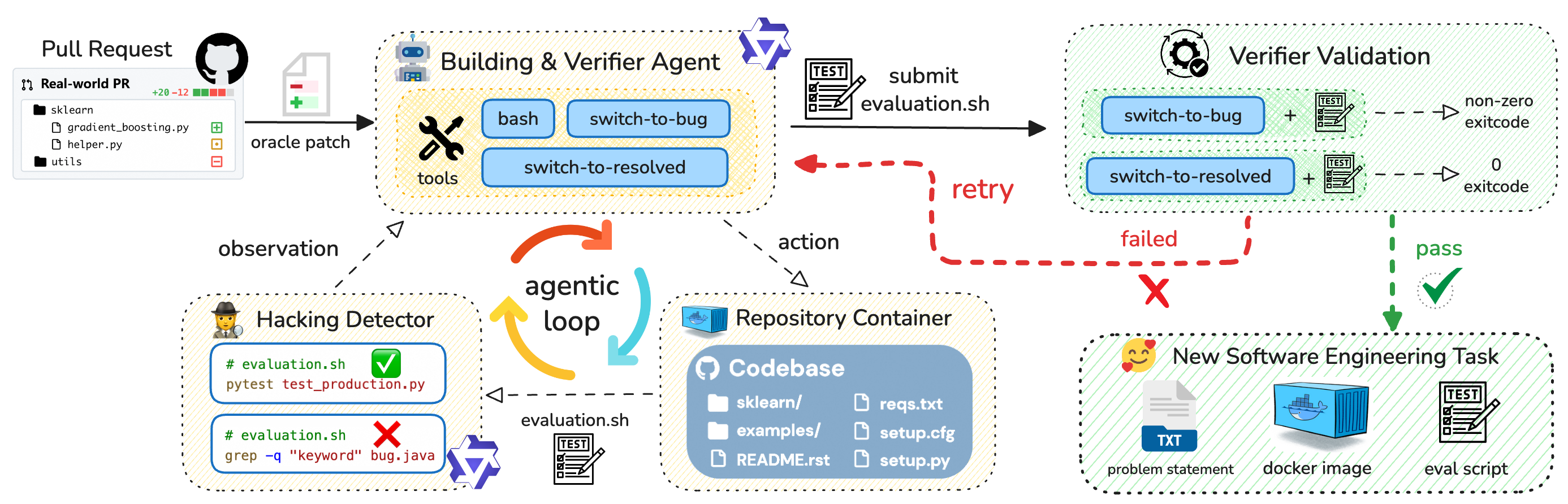

The diagram shows an automated pipeline that turns a real-world pull request into a new software engineering task. The pull request passes through a building and verifier agent, a repository container, verifier validation, and a hacking detector. The flow includes feedback loops and conditional branching.

### Components

1. **Pull Request Section** (Top-left)
   - Label: "Real-world PR"
   - Sub-components:
     - `sklearn` directory
     - `gradient_boosting.py`
     - `helper.py`
     - `utils` directory
   - Status indicators: `+20 -12` (green/red squares)

2. **Building & Verifier Agent** (Center-left)
   - Tools: `bash`, `switch-to-bug`, `switch-to-resolved`
   - Connections:
     - Input from Pull Request
     - Output to Repository Container
     - Feedback to Verifier Validation

3. **Hacking Detector** (Bottom-left)
   - Evaluation scripts:
     - `pytest test_production.py` (✓ pass)
     - `grep -q "keyword" bug.java` (✗ fail)
   - Icon: 🕵️‍♂️ (detective)

4. **Repository Container** (Center)
   - Codebase structure:
     - `sklearn/`
     - `examples/`
     - `README.rst`
     - `reqs.txt`
     - `setup.cfg`
     - `setup.py`

5. **Verifier Validation** (Top-right)
   - Conditions:
     - `switch-to-bug` + non-zero exit code → 🔴 fail
     - `switch-to-resolved` + 0 exit code → ✅ pass
   - Icon: ⚙️ (gear with checkmark)

6. **New Software Engineering Task** (Bottom-right)
   - Elements:
     - Problem statement (📄)
     - Docker image (📦)
     - Evaluation script (📝)
   - Icon: 😊 (smiling face)
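
The three elements of the New Software Engineering Task can be pictured as a small record. The sketch below is only an illustration: the class name `SweTask`, its field names, and the image tag are hypothetical and do not come from the diagram.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SweTask:
    """Hypothetical container for the three task elements shown in the diagram."""

    problem_statement: str  # natural-language description of the issue
    docker_image: str       # image tag holding the repository and its dependencies
    eval_script: str        # shell command whose exit code decides pass or fail

    def is_complete(self) -> bool:
        # A task is usable only if all three elements are non-empty.
        fields = (self.problem_statement, self.docker_image, self.eval_script)
        return all(bool(value.strip()) for value in fields)


task = SweTask(
    problem_statement="Placeholder description of the bug addressed by the PR.",
    docker_image="example/sklearn-task:latest",  # placeholder tag, not from the diagram
    eval_script="pytest test_production.py",      # example script shown in the diagram
)
print(task.is_complete())
```

Freezing the dataclass is a design choice made here so that a finished task cannot be modified after validation.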

### Detailed Analysis

- **Pull Request Flow**:
  - PR → Building Agent → Repository Container
  - Verifier Validation → New Task (if pass) or Retry (if fail)

- **Agentic Loop**:
  - Orange/blue arrows indicate iterative testing/verification cycles
  - Red dashed "retry" path for failed evaluations

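- **Security Checks**:
  - The Hacking Detector reviews evaluation scripts and appears to reject those that bypass real testing
  - Example shown: `pytest test_production.py` passes, while `grep -q "keyword" bug.java`, a keyword match on a Java file, fails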

- **Containerization**:
  - Docker image integration for isolated task execution
  - File structure mirrors typical Python project layout
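
The pass-or-retry branching described above can be sketched as a bounded loop. This is a minimal illustration under stated assumptions: `build_task`, `propose_script`, `is_valid`, and the `max_attempts` limit are hypothetical names, and the diagram does not show how many retries are allowed.

```python
from typing import Callable, Optional


def build_task(
    propose_script: Callable[[int], str],
    is_valid: Callable[[str], bool],
    max_attempts: int = 3,  # assumed limit; the diagram does not specify one
) -> Optional[str]:
    """Return the first evaluation script that passes validation, or None."""
    for attempt in range(1, max_attempts + 1):
        script = propose_script(attempt)  # stands in for the agent's work
        if is_valid(script):              # stands in for the validation steps
            return script                 # pass: becomes part of the new task
        # fail: follow the retry path and try again
    return None


# Toy stand-ins: the first proposal is rejected, the second is accepted.
proposals = {1: 'grep -q "keyword" bug.java', 2: "pytest test_production.py"}
result = build_task(
    propose_script=lambda n: proposals.get(n, ""),
    is_valid=lambda s: s.startswith("pytest"),
)
print(result)
```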

### Key Observations

1. **Conditional Branching**:
   - Verification outcomes directly control task progression
   - The red dashed retry path suggests that failed checks send the work back for another attempt rather than rejecting it outright

2. **Automation Indicators**:
   - Agentic loop suggests AI/ML-driven decision making
   - Containerized environment enables reproducible testing

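3. **Security Integration**:
   - Hacking Detector operates in parallel with core verification
   - Of the two example evaluation scripts, the one that runs tests (`pytest`) passes and the one that only matches a keyword (`grep`) fails, which suggests the detector rejects scripts that do not exercise the code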

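4. **Exit Code Semantics**:
   - A non-zero exit code is paired with the `switch-to-bug` state (🔴 fail)
   - A zero exit code is paired with the `switch-to-resolved` state (✅ pass)
   - Together these suggest that the evaluation script should fail on the buggy code and pass on the fixed code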
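
One plausible reading of the exit-code conditions is a two-state check: run the evaluation once on the buggy code and once on the resolved code, then compare exit codes. The sketch below assumes that reading. The function name and the three callables are hypothetical stand-ins for the `switch-to-bug` and `switch-to-resolved` tools and for running the evaluation script.

```python
from typing import Callable


def validate_eval_script(
    switch_to_bug: Callable[[], None],
    switch_to_resolved: Callable[[], None],
    run_eval: Callable[[], int],
) -> bool:
    """Accept the script only if it fails on the bug and passes on the fix."""
    switch_to_bug()
    bug_exit = run_eval()        # expected to be non-zero
    switch_to_resolved()
    resolved_exit = run_eval()   # expected to be 0
    return bug_exit != 0 and resolved_exit == 0


# Toy in-memory repository state, used in place of a real container.
state = {"version": "bug"}


def to_bug() -> None:
    state["version"] = "bug"


def to_resolved() -> None:
    state["version"] = "resolved"


def fake_eval() -> int:
    # Pretend the tests fail on the buggy version and pass on the resolved one.
    return 1 if state["version"] == "bug" else 0


print(validate_eval_script(to_bug, to_resolved, fake_eval))  # True
```

In a real setup `run_eval` would likely execute the script inside the Docker image and return its exit status; that wiring is left out here.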

### Interpretation

This diagram represents an automated pipeline that builds software engineering tasks from pull requests, with embedded security and quality assurance checks. The agentic loop suggests autonomous system behavior where:

1. Pull requests trigger automated building and verification

2. Security checks run concurrently with functional testing

3. Failures send the work back through the retry path rather than ending the run

4. Successful validations spawn new engineering tasks

The use of Docker and a standard repository layout points to isolated, reproducible task environments. The hacking detector's two example scripts, a `pytest` run that passes and a `grep` keyword match that fails, indicate that evaluation scripts are screened as well as executed. The smiling face icon on the final task marks the successful end state of the pipeline.
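
As a rough illustration of how a hacking detector could tell the two example evaluation scripts apart, the toy heuristic below flags any script whose first command is not a known test runner. This is an assumption made for illustration only: the diagram does not reveal how the detector works, and `looks_like_hack` and `TEST_RUNNERS` are hypothetical names.

```python
import shlex

# Assumed allow-list of commands that actually run tests.
TEST_RUNNERS = {"pytest"}


def looks_like_hack(eval_script: str) -> bool:
    """Flag scripts that do not start with a known test runner."""
    tokens = shlex.split(eval_script)
    if not tokens:
        return True  # an empty script verifies nothing
    return tokens[0] not in TEST_RUNNERS


for script in ("pytest test_production.py", 'grep -q "keyword" bug.java'):
    verdict = "fail" if looks_like_hack(script) else "pass"
    print(f"{script}: {verdict}")
```

A production detector would need to look deeper than the first token, for example at what the tests assert, so this sketch only mirrors the pass and fail labels shown in the figure.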