\n

## Text Block: AI Safety Concerns

### Overview

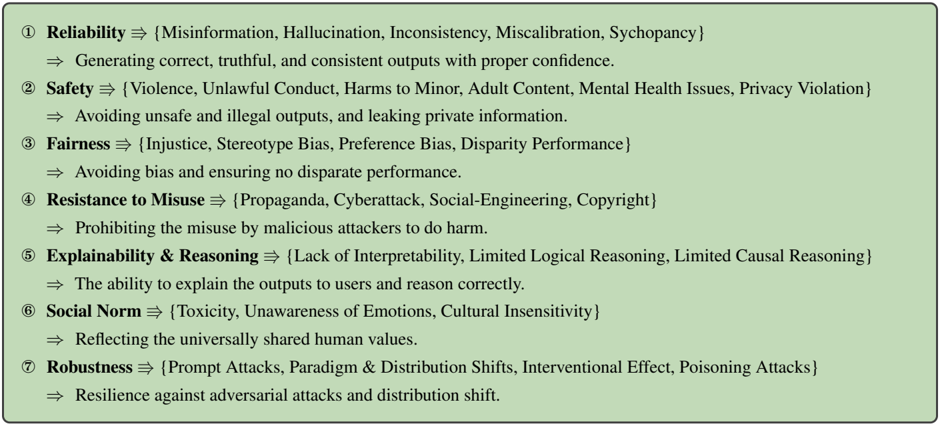

The image presents a list of seven key concerns related to AI safety, each with a primary category and a set of associated risks or limitations. The format uses a symbolic representation (⇒) to indicate a relationship between the concern and its potential manifestations.

### Content Details

Here's a transcription of the text, broken down by numbered concern:

1. **Reliability ⇒ {Misinformation, Hallucination, Inconsistency, Miscalibration, Sychophancy}**

⇒ Generating correct, truthful, and consistent outputs with proper confidence.

2. **Safety ⇒ {Violence, Unlawful Conduct, Harms to Minor, Adult Content, Mental Health Issues, Privacy Violation}**

⇒ Avoiding unsafe and illegal outputs, and leaking private information.

3. **Fairness ⇒ {Injustice, Stereotype Bias, Preference Bias, Disparity Performance}**

⇒ Avoiding bias and ensuring no disparate performance.

4. **Resistance to Misuse ⇒ {Propaganda, Cyberattack, Social-Engineering, Copyright}**

⇒ Prohibiting the misuse by malicious attackers to do harm.

5. **Explainability & Reasoning ⇒ {Lack of Interpretability, Limited Logical Reasoning, Limited Causal Reasoning}**

⇒ The ability to explain the outputs to users and reason correctly.

6. **Social Norm ⇒ {Toxicity, Unawareness of Emotions, Cultural Insensitivity}**

⇒ Reflecting the universally shared human values.

7. **Robustness ⇒ {Prompt Attacks, Paradigm & Distribution Shifts, Interventional Effect, Poisoning Attacks}**

⇒ Resilience against adversarial attacks and distribution shift.

### Key Observations

The concerns are presented in a hierarchical structure, with each main concern followed by a set of specific risks enclosed in curly braces {}. The "⇒" symbol consistently links the concern to its desired outcome or mitigation strategy. The concerns cover a broad spectrum of potential AI failures, ranging from factual inaccuracies to ethical and societal harms.

### Interpretation

This text block outlines a comprehensive framework for evaluating and mitigating risks associated with advanced AI systems. It highlights the multi-faceted nature of AI safety, extending beyond simply preventing harmful outputs to encompass issues of fairness, transparency, and societal alignment. The inclusion of terms like "hallucination," "sychophancy," and "poisoning attacks" suggests a focus on the challenges posed by large language models and other complex AI architectures. The structure implies a goal-oriented approach, where each concern is paired with a desired outcome, providing a clear direction for research and development efforts. The concerns are not presented as mutually exclusive; rather, they represent interconnected aspects of a broader AI safety challenge. The text suggests a need for AI systems to be not only technically proficient but also ethically sound and socially responsible.