## Diagram: AI Safety and Ethics Principles Framework

### Overview

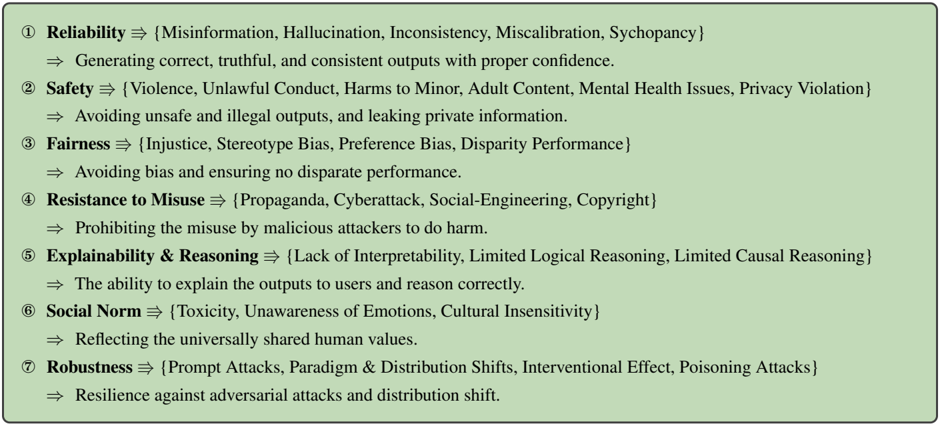

The image displays a structured, numbered list of seven core principles for responsible AI systems. Each principle is presented with a bolded title, a set of associated negative concepts or risks in curly braces, and a concise definition of the principle's goal. The entire list is contained within a light green rectangular box with a thin black border.

### Components/Axes

The diagram is a textual list, not a chart with axes. Its components are:

- **Numbered Items**: Seven principles, numbered ① through ⑦.

- **Principle Titles**: Bolded keywords (e.g., **Reliability**, **Safety**).

- **Risk Sets**: Curly braces `{...}` containing comma-separated lists of associated risks or failure modes.

- **Definitions**: A line starting with `⇒` that describes the positive goal of the principle.

- **Layout**: The text is left-aligned within a single-column container. The background is a solid light green (`#d5e8d4` approximate).

### Detailed Analysis / Content Details

The following text is transcribed precisely from the image:

① **Reliability** ⇒ {Misinformation, Hallucination, Inconsistency, Miscalibration, Sychopancy}

⇒ Generating correct, truthful, and consistent outputs with proper confidence.

② **Safety** ⇒ {Violence, Unlawful Conduct, Harms to Minor, Adult Content, Mental Health Issues, Privacy Violation}

⇒ Avoiding unsafe and illegal outputs, and leaking private information.

③ **Fairness** ⇒ {Injustice, Stereotype Bias, Preference Bias, Disparity Performance}

⇒ Avoiding bias and ensuring no disparate performance.

④ **Resistance to Misuse** ⇒ {Propaganda, Cyberattack, Social-Engineering, Copyright}

⇒ Prohibiting the misuse by malicious attackers to do harm.

⑤ **Explainability & Reasoning** ⇒ {Lack of Interpretability, Limited Logical Reasoning, Limited Causal Reasoning}

⇒ The ability to explain the outputs to users and reason correctly.

⑥ **Social Norm** ⇒ {Toxicity, Unawareness of Emotions, Cultural Insensitivity}

⇒ Reflecting the universally shared human values.

⑦ **Robustness** ⇒ {Prompt Attacks, Paradigm & Distribution Shifts, Interventional Effect, Poisoning Attacks}

⇒ Resilience against adversarial attacks and distribution shift.

### Key Observations

1. **Logical Structure**: Each entry follows a consistent pattern: Principle Name → Associated Risks (in braces) → Positive Definition. This creates a clear "problem/solution" or "risk/mitigation" pairing for each concept.

2. **Comprehensive Scope**: The principles cover a wide spectrum of concerns, from technical performance (Reliability, Robustness) and security (Resistance to Misuse) to ethical and social alignment (Fairness, Social Norm) and transparency (Explainability & Reasoning).

3. **Specificity of Risks**: The risk sets are not vague; they list concrete failure modes (e.g., "Hallucination," "Poisoning Attacks," "Cultural Insensitivity"), making the principles actionable.

4. **Visual Presentation**: The use of bolding, symbols (⇒, {}), and numbered circles creates a clear visual hierarchy that aids in scanning and reference. The light green background provides a neutral, non-alarming container for the serious subject matter.

### Interpretation

This diagram presents a foundational framework for evaluating and building responsible AI systems. It functions as a taxonomy of failure modes and a corresponding set of design goals.

* **Relationship Between Elements**: The seven principles are interdependent. For example, a system cannot be truly **Safe** if it is not also **Robust** against attacks that could force it to produce unsafe content. Similarly, **Fairness** is undermined by a lack of **Reliability** across different demographic groups. **Explainability & Reasoning** is a cross-cutting principle that enables the verification and trust required for all the others.

* **Underlying Philosophy**: The framework moves beyond simple "avoid harm" directives. It acknowledges specific technical challenges (e.g., distribution shifts, prompt attacks) and complex social concepts (e.g., cultural insensitivity, universally shared human values). The inclusion of "Sychopancy" (likely a typo for "Sycophancy") under Reliability is particularly insightful, highlighting the risk of AI models agreeing with users at the expense of truthfulness.

* **Purpose and Utility**: This list serves as a checklist for developers, auditors, and policymakers. It defines the multidimensional nature of "good" AI, arguing that optimizing for a single metric (like accuracy) is insufficient. The absence of quantitative data or performance metrics indicates this is a conceptual or requirements-level document, meant to define *what* needs to be achieved rather than *how* to measure it. The final principle, **Robustness**, acts as a capstone, emphasizing that all the preceding goals must be maintained even under adversarial conditions or changing environments.