## Structured Ethical Guidelines List: AI System Requirements

### Overview

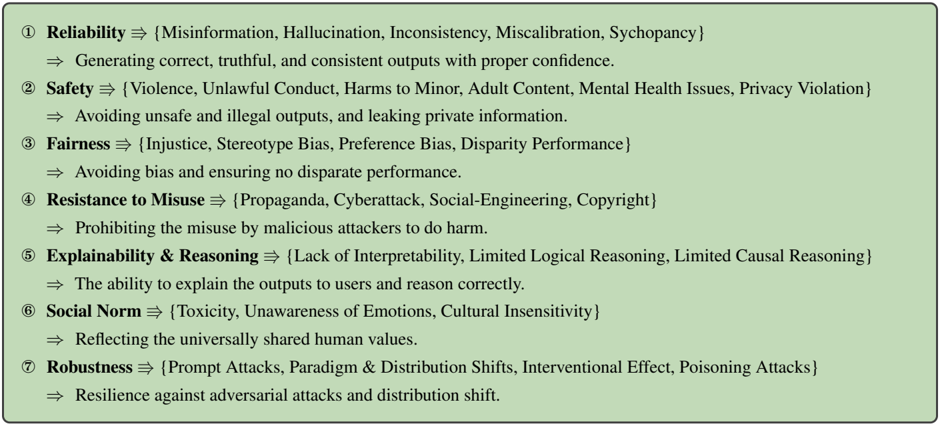

The image presents a structured list of seven key ethical and operational requirements for AI systems, organized hierarchically with bolded category titles and bullet-pointed subpoints. The content focuses on ensuring responsible AI behavior across technical, social, and security dimensions.

### Components

1. **Categories**:
   - Reliability
   - Safety
   - Fairness
   - Resistance to Misuse
   - Explainability & Reasoning
   - Social Norm
   - Robustness

2. **Subpoint Structure**: Each category includes:
   - A set of **specific risks/threats** (e.g., "Misinformation," "Violence") in curly braces.
   - A **goal statement** describing the desired outcome (e.g., "Avoiding unsafe outputs").

### Detailed Analysis

1. **Reliability**
   - Risks: Misinformation, Hallucination, Inconsistency, Miscalibration, Sycophancy
   - Goal: Generate correct, truthful, and consistent outputs with proper confidence.

2. **Safety**
   - Risks: Violence, Unlawful Conduct, Harms to Minor, Adult Content, Mental Health Issues, Privacy Violation
   - Goal: Avoid unsafe/illegal outputs and prevent privacy leaks.

3. **Fairness**
   - Risks: Injustice, Stereotype Bias, Preference Bias, Disparity Performance
   - Goal: Eliminate bias and ensure equitable performance.

4. **Resistance to Misuse**
   - Risks: Propaganda, Cyberattack, Social-Engineering, Copyright
   - Goal: Prevent malicious exploitation (e.g., deepfakes, phishing).

5. **Explainability & Reasoning**
   - Risks: Lack of Interpretability, Limited Logical/Causal Reasoning
   - Goal: Enable transparent explanations and logical reasoning for users.

6. **Social Norm**
   - Risks: Toxicity, Unawareness of Emotions, Cultural Insensitivity
   - Goal: Align outputs with universally shared human values.

7. **Robustness**
   - Risks: Prompt Attacks, Paradigm Shifts, Interventional Effect, Poisoning Attacks
   - Goal: Maintain resilience against adversarial attacks and distribution shifts.
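The list above is essentially a small taxonomy: each category owns a set of risk labels and one goal statement. The following is a minimal Python sketch, under stated assumptions, of how such a taxonomy might be encoded as a data structure, for example to group risk labels flagged during a review of model outputs by category. The `Category` class, the `categorize` function, the `"Unmapped"` bucket, and the sample flags (including `"Jailbreak"`) are hypothetical illustrations, not part of the original figure; only three of the seven categories are transcribed for brevity, and the others would follow the same pattern.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Category:
    """One requirement from the list: a name, its risk labels, and its goal."""
    name: str
    risks: frozenset[str]
    goal: str


# Three of the seven categories, transcribed from the list above (hypothetical
# encoding); the remaining categories would be added the same way.
TAXONOMY = [
    Category(
        "Safety",
        frozenset({"Violence", "Unlawful Conduct", "Harms to Minor",
                   "Adult Content", "Mental Health Issues", "Privacy Violation"}),
        "Avoid unsafe/illegal outputs and prevent privacy leaks.",
    ),
    Category(
        "Fairness",
        frozenset({"Injustice", "Stereotype Bias", "Preference Bias",
                   "Disparity Performance"}),
        "Eliminate bias and ensure equitable performance.",
    ),
    Category(
        "Robustness",
        frozenset({"Prompt Attacks", "Paradigm Shifts", "Interventional Effect",
                   "Poisoning Attacks"}),
        "Maintain resilience against adversarial attacks and distribution shifts.",
    ),
]


def categorize(flags, taxonomy=TAXONOMY):
    """Group flagged risk labels by category, preserving first-seen order.

    Labels not found in any category's risk set are collected under the
    hypothetical "Unmapped" key instead of being dropped.
    """
    # Invert the taxonomy into a single label -> category-name lookup table.
    risk_to_category = {risk: cat.name for cat in taxonomy for risk in cat.risks}
    report = {}
    for flag in flags:
        category = risk_to_category.get(flag, "Unmapped")
        report.setdefault(category, []).append(flag)
    return report


if __name__ == "__main__":
    # Hypothetical labels produced by some review of model outputs.
    flags = ["Privacy Violation", "Stereotype Bias", "Prompt Attacks",
             "Violence", "Jailbreak"]
    print(categorize(flags))
```

Inverting the risk sets into one label-to-category dictionary makes each lookup a constant-time operation, and routing unknown labels to a separate bucket surfaces risks that fall outside the taxonomy rather than silently discarding them.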

### Key Observations

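- **Hierarchical Organization**: Categories are numbered 1–7. The list does not state whether this order reflects priority, but the enumeration suggests it could serve as a framework for evaluation or implementation.

- **Risk-Goal Pairing**: Each category pairs a set of concrete risks with a goal statement describing the desired behavior, framing each requirement in terms of what to prevent and what to achieve.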

- **Technical-Social Balance**: Combines technical challenges (e.g., "Prompt Attacks") with societal concerns (e.g., "Cultural Insensitivity").

### Interpretation

This list outlines a comprehensive ethical framework for AI development, addressing both technical robustness (e.g., resistance to poisoning attacks) and societal impact (e.g., fairness, cultural sensitivity). One way to read the structure is as a layered approach:

1. **Foundational Requirements** (Reliability, Safety) ensure basic functionality and harm prevention.

2. **Equity and Transparency** (Fairness, Explainability) address systemic biases and user trust.

3. **Security and Societal Alignment** (Resistance to Misuse, Social Norm) protect against exploitation and cultural harm.

4. **Adaptability** (Robustness) ensures long-term resilience in dynamic environments.

The absence of numerical data suggests this is a conceptual guideline rather than an empirical study. The inclusion of dedicated "Resistance to Misuse" and "Robustness" categories reflects concerns about deliberate abuse of AI systems and the challenges of real-world deployment.