TECHNICAL ASSET FINGERPRINT

6245378f7fe31430318018f4

FOUND IN PAPERS

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

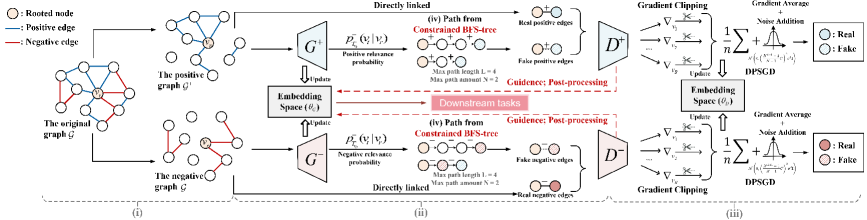

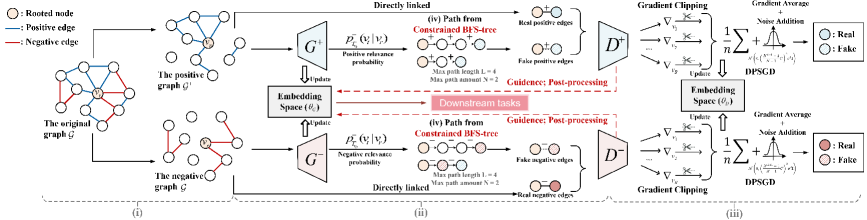

## Diagram: Graph Embedding and Differential Privacy

### Overview

The image is a diagram illustrating a process for graph embedding with differential privacy. It shows how an original graph is transformed into positive and negative graphs, embedded into a space, and then processed with gradient clipping and noise addition to ensure privacy.

### Components/Axes

The diagram is divided into three main sections, labeled (i), (ii), and (iii).

**Legend (Top-Left)**

* Rooted node: Represented by a white circle.

* Positive edge: Represented by a blue line.

* Negative edge: Represented by a red line.

**Section (i): Graph Creation**

* The original graph G is shown with a mix of positive (blue) and negative (red) edges connecting nodes. One node is marked as the "Rooted node".

* The original graph G is split into two subgraphs:

  * The positive graph G⁺, which contains only the positive (blue) edges.

  * The negative graph G⁻, which contains only the negative (red) edges.
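The split described in Section (i) can be sketched in a few lines. This is a minimal illustration, not the method from the diagram's source: the function name `split_signed_graph`, the `(u, v, sign)` edge format, and the treatment of edges as undirected are all assumptions made for the example.

```python
from collections import defaultdict

def split_signed_graph(signed_edges):
    """Split (u, v, sign) triples into positive and negative adjacency maps.

    Hypothetical helper: sign is assumed to be +1 (positive edge)
    or -1 (negative edge), and edges are assumed to be undirected.
    """
    positive = defaultdict(set)
    negative = defaultdict(set)
    for u, v, sign in signed_edges:
        if sign not in (+1, -1):
            raise ValueError(f"sign must be +1 or -1, got {sign!r}")
        target = positive if sign > 0 else negative
        # Undirected: record the edge in both directions.
        target[u].add(v)
        target[v].add(u)
    return dict(positive), dict(negative)

# Toy signed graph with two positive and two negative edges.
edges = [(0, 1, +1), (0, 2, -1), (1, 2, +1), (2, 3, -1)]
g_pos, g_neg = split_signed_graph(edges)
```

Each subgraph keeps only the nodes that touch an edge of its sign, so a node with no positive edges does not appear in `g_pos`.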

**Section (ii): Embedding Space and Path Generation**

* Two blocks labeled G+ and G- represent transformations of the positive and negative graphs, respectively.

* G+ is associated with "Positive relevance probability" denoted as p+_{E}(v_i | v_r).

* G- is associated with "Negative relevance probability" denoted as p-_{E}(v_i | v_r).

* An "Embedding Space (θ_E)" block sits between G+ and G-, with arrows indicating "Update" from G+ and G- and to G+ and G-.

* "Downstream tasks" is written in a red box, with dashed red arrows pointing to and from the "Embedding Space (θ_E)".

* Path Generation:

  * "(iv) Path from Constrained BFS-tree" is shown for both positive and negative graphs.

  * The path consists of connected nodes.

  * "Max path length L = 4" and "Max path amount N = 2" are specified.

* "Guidance: Post-processing" is indicated.

* Real and fake positive/negative edges are shown as pairs of nodes connected by either a blue or red line.

**Section (iii): Differential Privacy**

* Two blocks labeled D+ and D- represent differential privacy processing for positive and negative graphs, respectively.

* "Gradient Clipping" is applied to both D+ and D-.

* The gradients are represented as ∇v_i.

* A summation formula is shown: 1/n * Σ ∇v_i.

* "Gradient Average + Noise Addition" is applied. The noise is represented by a Gaussian distribution N(0, (λ(e^(λ/2)-1)/n^2) * σ^2).

* "DPSGD" (Differentially Private Stochastic Gradient Descent) is indicated.

* The output consists of "Real" and "Fake" nodes, colored blue and white for D+, and red and white with diagonal lines for D-.
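The clip, average, and add-noise sequence in Section (iii) can be sketched as follows. This is a sketch under stated assumptions: it follows the common DP-SGD recipe (per-sample L2 clipping, Gaussian noise with standard deviation `noise_multiplier * max_norm` added to the summed gradients, then division by the batch size). The names `clip_l2`, `noisy_average`, `max_norm`, and `noise_multiplier` are illustrative, and the noise variance in the diagram may be parameterized differently.

```python
import math
import random

def clip_l2(grad, max_norm):
    """Scale grad so its L2 norm is at most max_norm."""
    norm = math.sqrt(sum(g * g for g in grad))
    scale = min(1.0, max_norm / norm) if norm > 0 else 1.0
    return [g * scale for g in grad]

def noisy_average(per_sample_grads, max_norm, noise_multiplier, rng=random):
    """Clip each per-sample gradient, sum, add Gaussian noise, then average.

    Assumption: noise std is noise_multiplier * max_norm on the sum,
    as in the standard DP-SGD formulation.
    """
    n = len(per_sample_grads)
    dim = len(per_sample_grads[0])
    clipped = [clip_l2(g, max_norm) for g in per_sample_grads]
    summed = [sum(g[k] for g in clipped) for k in range(dim)]
    std = noise_multiplier * max_norm
    return [(s + rng.gauss(0.0, std)) / n for s in summed]

# With noise_multiplier=0.0 the result is just the average of clipped gradients.
avg = noisy_average([[3.0, 4.0], [0.0, 0.0]], max_norm=1.0, noise_multiplier=0.0)
```

Clipping bounds how much any single sample can move the average, which is what lets a fixed noise scale mask each sample's contribution.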

### Detailed Analysis

* **Graph Creation (i):** The original graph is split into positive and negative graphs, likely based on edge types or relationships.

* **Embedding Space (ii):** The positive and negative graphs are embedded into a shared space, which is updated based on the relevance probabilities.

* **Path Generation (ii):** Paths are generated from the embedded graphs using a constrained Breadth-First Search (BFS) tree. The maximum path length and amount are limited.

* **Differential Privacy (iii):** Gradient clipping and noise addition are applied to the gradients to ensure differential privacy during the learning process.

### Key Observations

* The diagram illustrates a process for learning graph embeddings while preserving privacy.

* The use of both positive and negative graphs suggests a contrastive learning approach.

* The differential privacy mechanism involves gradient clipping and noise addition, which are standard techniques for protecting sensitive information.

### Interpretation

The diagram presents a method for creating graph embeddings that are both informative and privacy-preserving. The process involves transforming the original graph into positive and negative representations, embedding these representations into a shared space, and then applying differential privacy techniques to protect sensitive information during the learning process. The "Downstream tasks" element suggests that these embeddings are intended to be used for further analysis or prediction. The use of constrained BFS trees for path generation likely aims to capture relevant relationships within the graph while limiting computational complexity. The overall approach balances the need for accurate graph representations with the requirement to protect the privacy of the underlying data.

EXPERT: gemini-3-flash-free VERSION 1

RUNTIME: nugit/gemini/gemini-3-flash-preview

INTEL_VERIFIED

# Technical Document Extraction: Graph Neural Network Architecture Diagram

This document provides a detailed technical extraction of the provided image, which illustrates a Generative Adversarial Network (GAN) framework for graph embedding, specifically handling positive and negative edges with Differential Privacy (DPSGD).

## 1. Legend and Symbol Definitions

Located at the top-left of the image:

* **Tan circle with center dot**: Rooted node ($v_r$)

* **Blue line**: Positive edge

* **Red line**: Negative edge

Located at the far right (Discriminator outputs):

* **Solid Blue circle**: Real positive edge

* **Patterned Blue circle**: Fake positive edge

* **Solid Red circle**: Real negative edge

* **Patterned Red circle**: Fake negative edge

---

## 2. Component Segmentation and Flow

The diagram is divided into three primary horizontal stages, labeled at the bottom as (i), (ii), and (iii).

### Stage (i): Graph Decomposition

* **Input**: "The original graph $\mathcal{G}$" containing a central rooted node $v_r$ connected by both blue (positive) and red (negative) edges.

* **Process**: The original graph is split into two distinct subgraphs:

  1. **The positive graph $\mathcal{G}^+$**: Contains only nodes and blue edges.

  2. **The negative graph $\mathcal{G}^-$**: Contains only nodes and red edges.

### Stage (ii): Generative Process and Path Sampling

This stage describes the dual generator architecture ($G^+$ and $G^-$).

* **Embedding Space ($\theta_G$)**: A central block that provides parameters to and receives updates from the generators.

* **Positive Path Generation ($G^+$)**:

  * **Input**: Data from the positive graph $\mathcal{G}^+$.

  * **Function**: Calculates $P_{T_{v_r}}^+(v_i | v_r)$ (Positive relevance probability).

  * **Output (iv)**: "Path from Constrained BFS-tree".

    * Constraints: Max path length $L=4$, Max path amount $N=2$.

    * Visual: Shows a sequence of nodes starting from a rooted node.

  * **Result**: Produces "Fake positive edges" (represented by two light blue circles connected by a dashed line).

  * **Direct Link**: "Real positive edges" are pulled directly from the positive graph $\mathcal{G}^+$ for comparison.

* **Negative Path Generation ($G^-$)**:

  * **Input**: Data from the negative graph $\mathcal{G}^-$.

  * **Function**: Calculates $P_{T_{v_r}}^-(v_i | v_r)$ (Negative relevance probability).

  * **Output (iv)**: "Path from Constrained BFS-tree".

    * Constraints: Max path length $L=4$, Max path amount $N=2$.

  * **Result**: Produces "Fake negative edges" (represented by two light red circles connected by a dashed line).

  * **Direct Link**: "Real negative edges" are pulled directly from the negative graph $\mathcal{G}^-$ for comparison.

* **Central Output**: A red arrow points from the Embedding Space to a box labeled **"Downstream tasks"**.
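The path sampling in Stage (ii) can be illustrated with a small sketch. The diagram does not spell out how the BFS-tree is constrained, so this example assumes that "max path length" counts edges from the rooted node and that each of the `max_paths` paths is drawn by a uniform random walk down the tree. The names `bfs_tree` and `sample_paths` are hypothetical.

```python
import random
from collections import deque

def bfs_tree(adj, root, max_depth):
    """Children map of a BFS tree rooted at root, truncated at max_depth edges."""
    children = {root: []}
    depth = {root: 0}
    queue = deque([root])
    while queue:
        u = queue.popleft()
        if depth[u] == max_depth:
            continue  # Do not expand nodes at the depth limit.
        for v in sorted(adj.get(u, ())):
            if v not in depth:
                depth[v] = depth[u] + 1
                children[u].append(v)
                children[v] = []
                queue.append(v)
    return children

def sample_paths(adj, root, max_len=4, max_paths=2, rng=random):
    """Sample up to max_paths root-to-leaf paths, each with at most max_len edges."""
    children = bfs_tree(adj, root, max_len)
    paths = []
    for _ in range(max_paths):
        path = [root]
        # Walk down the truncated tree until a node with no children is reached.
        while children[path[-1]]:
            path.append(rng.choice(children[path[-1]]))
        paths.append(path)
    return paths

# A chain 0-1-2-3-4-5-6: every path from node 0 is cut off after 4 edges.
chain = {i: {j for j in (i - 1, i + 1) if 0 <= j <= 6} for i in range(7)}
paths = sample_paths(chain, root=0, max_len=4, max_paths=2)
```

One plausible reading of the diagram is that each sampled path yields a fake edge by pairing the rooted node with the path's last node; that pairing is an assumption here and is not part of the code.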

### Stage (iii): Discriminator and Differentially Private Training

This stage describes the evaluation and optimization process using Differentially Private Stochastic Gradient Descent (DPSGD).

* **Discriminators ($D^+$ and $D^-$)**:

  * $D^+$ receives both Real and Fake positive edges.

  * $D^-$ receives both Real and Fake negative edges.

* **Optimization Flow**:

  1. **Gradient Calculation**: Gradients ($\nabla_{v_1}, \nabla_{v_2}, \dots, \nabla_{v_n}$) are computed.

  2. **Gradient Clipping**: Represented by a scissor icon on the gradient arrows.

  3. **DPSGD Process**:

     * Formula: $\frac{1}{n} \sum + \text{Noise Addition}$

     * The noise addition is visualized as a Gaussian distribution curve.

     * Formula snippet: $\mathcal{N}(0, (\frac{2\sigma C}{n})^2 I)$

  4. **Embedding Space ($\theta_D$)**: The processed gradients update a separate discriminator embedding space.

  5. **Feedback Loop**: Red dashed arrows labeled **"Guidance: Post-processing"** flow from the Discriminators back to the Generators' Embedding Space ($\theta_G$).
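Putting the transcribed pieces of the optimization flow together, the noisy averaged gradient can be written as a single expression. This is a reconstruction under assumptions: $C$ is read as the clipping threshold and $\sigma$ as the noise multiplier, neither of which is defined in the image, and the clipping operator shown is the usual L2-norm form rather than something stated in the diagram.

$$
\tilde{g} = \frac{1}{n} \sum_{i=1}^{n} \operatorname{clip}\left(\nabla_{v_i}, C\right) + \mathcal{N}\left(0, \left(\frac{2\sigma C}{n}\right)^{2} I\right),
\qquad
\operatorname{clip}(g, C) = g \cdot \min\left(1, \frac{C}{\lVert g \rVert_2}\right)
$$

Under this reading, the noise standard deviation $2\sigma C / n$ shrinks as the batch size $n$ grows, because each clipped gradient contributes at most $C / n$ to the average.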

---

## 3. Mathematical Notations and Labels

* **Root Node**: $v_r$

* **Probability Functions**: $P_{T_{v_r}}^+(v_i | v_r)$ and $P_{T_{v_r}}^-(v_i | v_r)$

* **Embedding Parameters**: $\theta_G$ (Generator) and $\theta_D$ (Discriminator)

* **Training Mechanism**: DPSGD (Differentially Private Stochastic Gradient Descent)

* **BFS Constraints**: $L=4$ (Length), $N=2$ (Amount)
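The image does not define the relevance probabilities $P_{T_{v_r}}^{\pm}(v_i | v_r)$. One common choice in GraphGAN-style generators is a softmax over embedding inner products, restricted to a node's neighbors in the BFS-tree. The sketch below assumes that form; the function name `relevance_probs` and the dictionary-of-lists embedding layout are illustrative only.

```python
import math

def relevance_probs(embeddings, node, neighbors):
    """Softmax over inner products between node and each candidate neighbor.

    Assumption: this mirrors a GraphGAN-style "graph softmax"; the diagram
    itself does not specify the functional form.
    """
    scores = [
        sum(a * b for a, b in zip(embeddings[node], embeddings[v]))
        for v in neighbors
    ]
    m = max(scores)  # Subtract the max for numerical stability.
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return {v: e / total for v, e in zip(neighbors, exps)}

# Node 1 is aligned with node 0, node 2 is orthogonal to it.
emb = {0: [1.0, 0.0], 1: [1.0, 0.0], 2: [0.0, 1.0]}
probs = relevance_probs(emb, 0, [1, 2])
```

Under this assumption, the probability of a whole path would be the product of these step-wise probabilities along the path.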

## 4. Summary of Logic and Trends

The system employs a **Symmetric Dual-GAN architecture**. The "Positive" and "Negative" branches mirror each other exactly in structure but process different edge types. The trend of the data flow is from left to right (Decomposition $\rightarrow$ Generation $\rightarrow$ Discrimination), with a critical feedback loop (Guidance) returning to the center to refine the embeddings. The inclusion of Gradient Clipping and Noise Addition indicates a focus on privacy-preserving graph representation learning.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Diagram: Graph Processing Pipeline with Positive/Negative Edge Separation and Gradient-Based Learning

### Overview

This image is a technical flowchart illustrating a machine learning pipeline for graph data. The process involves decomposing an original graph into positive and negative subgraphs, generating constrained paths from rooted nodes, creating embeddings, and applying gradient-based optimization (with differential privacy considerations) for downstream tasks. The diagram is divided into three main sections labeled (i), (ii), and (iii).

### Components/Axes

**Legend (Top-Left Corner):**

- **Rooted node:** Yellow circle

- **Positive edge:** Blue line

- **Negative edge:** Red line

**Main Components & Flow:**

1. **Section (i) - Graph Decomposition:**

* **Input:** "The original graph G" (center-left). It contains nodes connected by both blue (positive) and red (negative) edges. One node is highlighted in yellow as the "Rooted node".

* **Output 1 (Top Path):** "The positive graph G⁺". Contains only nodes and the blue positive edges from G.

* **Output 2 (Bottom Path):** "The negative graph G⁻". Contains only nodes and the red negative edges from G.

2. **Section (ii) - Path Generation & Embedding:**

* **Top Path (from G⁺):**

* Process: "Constrained BFS-tree" applied to G⁺.

* Parameters: "Max path length L=2", "Max path amount N=2".

* Output: "Path from Constrained BFS-tree" showing sequences of nodes (e.g., yellow→blue→white). Labeled with "Positive relevance probability" `p_e⁺(v_j|v_i)`.

* Result: "Real positive edges" (blue lines between nodes).

* **Bottom Path (from G⁻):**

* Process: "Constrained BFS-tree" applied to G⁻.

* Parameters: "Max path length L=4", "Max path amount N=2".

* Output: "Path from Constrained BFS-tree" showing longer sequences. Labeled with "Negative relevance probability" `p_e⁻(v_j|v_i)`.

* Result: "Fake negative edges" (red lines between nodes, one node is shaded red).

* **Central Block:** "Embedding Space (Φ_e)". Arrows labeled "Update" point from the path outputs to this block. Dashed red arrows labeled "Guidance: Post-processing" connect this block to a central pink box labeled "Downstream tasks". Another dashed arrow points from the downstream tasks box back to the embedding space.

3. **Section (iii) - Gradient Processing:**

* **Top Path (Continuation):**

* Input: "Real positive edges" from section (ii).

* Process: Feeds into a block labeled "D⁺".

* Gradient Operations: Shows multiple gradient symbols (∇) with "Gradient Clipping" applied. These are summed (Σ) and averaged (1/n).

* Final Step: "Gradient Average" leading to "Noise Addition" (represented by a bell curve icon) and then "DPSGD" (Differentially Private Stochastic Gradient Descent).

* Output: A node labeled "Real".

* **Bottom Path (Continuation):**

* Input: "Fake negative edges" from section (ii).

* Process: Feeds into a block labeled "D⁻".

* Gradient Operations: Identical structure to the top path—gradient clipping, summation, averaging.

* Final Step: "Gradient Average" leading to "Noise Addition" and "DPSGD".

* Output: A node labeled "Fake".

* **Legend (Top-Right of this section):** Shows "Real" (white circle) and "Fake" (red-shaded circle).

**Textual Annotations & Mathematical Notation:**

- "Directly linked" (appears twice, connecting G to G⁺/G⁻ and the BFS-tree outputs to D⁺/D⁻).

- Probability notations: `p_e⁺(v_j|v_i)` and `p_e⁻(v_j|v_i)`.

- Gradient notation: `∇` with subscripts like `∇_θ L`.

- Summation and averaging formulas: `Σ (1/n) ∇_θ L(...)`.

- Process labels: "Update", "Guidance: Post-processing", "Gradient Clipping", "Noise Addition", "DPSGD".

### Detailed Analysis

The diagram describes a dual-path pipeline that treats positive and negative graph structures differently:

1. **Path Length Discrepancy:** The constrained BFS-tree for the positive graph (G⁺) uses a shorter maximum path length (L=2) compared to the negative graph (G⁻, L=4). This suggests the model expects or enforces that meaningful positive connections are more local, while negative relationships can be inferred over longer ranges.

2. **Embedding & Guidance:** The paths from both trees are used to update a shared "Embedding Space (Φ_e)". This space is actively guided by and informs "Downstream tasks" via a post-processing feedback loop.

3. **Gradient Processing with Privacy:** The outputs from the embedding space ("Real positive edges" and "Fake negative edges") are processed by separate modules (D⁺ and D⁻). The gradients from these modules are clipped, averaged, and then perturbed with noise via a DPSGD mechanism. This indicates a focus on privacy-preserving model training, likely to prevent leakage of sensitive graph structure information.

4. **Final Output:** The pipeline culminates in the generation of "Real" and "Fake" samples, suggesting this could be part of a generative model (like a GAN for graphs) or a discriminator training setup where the model learns to distinguish real graph structures from synthetically generated or negative ones.
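If D⁺ and D⁻ are indeed discriminators, a minimal version of the real-versus-fake objective could look like the sketch below. It assumes each discriminator scores an edge as the sigmoid of the inner product of its two node embeddings and is trained with a standard binary cross-entropy loss. None of this is confirmed by the diagram, and `edge_score` and `discriminator_loss` are hypothetical names.

```python
import math

def edge_score(emb, u, v):
    """Probability that edge (u, v) is real: sigmoid of the embedding inner product."""
    dot = sum(a * b for a, b in zip(emb[u], emb[v]))
    return 1.0 / (1.0 + math.exp(-dot))

def discriminator_loss(emb, real_edges, fake_edges):
    """Binary cross-entropy: real edges should score near 1, fake edges near 0."""
    loss = 0.0
    for u, v in real_edges:
        loss -= math.log(edge_score(emb, u, v))
    for u, v in fake_edges:
        loss -= math.log(1.0 - edge_score(emb, u, v))
    return loss

# Nodes 0 and 1 have aligned embeddings; node 2 points the opposite way.
emb = {0: [2.0, 0.0], 1: [2.0, 0.0], 2: [-2.0, 0.0]}
good = discriminator_loss(emb, real_edges=[(0, 1)], fake_edges=[(0, 2)])
bad = discriminator_loss(emb, real_edges=[(0, 2)], fake_edges=[(0, 1)])
```

In this toy setup `good` is much smaller than `bad`, because the embeddings already separate the real pair from the fake one. The gradients of such a loss are what the clipping and noise steps would then process.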

### Key Observations

1. **Asymmetric Processing:** The core asymmetry is in the BFS-tree parameters (L=2 vs. L=4), which is a critical design choice influencing what patterns the model learns from positive versus negative edges.

2. **Closed-Loop Guidance:** The bidirectional arrows between the Embedding Space and Downstream Tasks indicate an interactive or iterative training process, not a simple feed-forward flow.

3. **Privacy Integration:** The explicit inclusion of "Gradient Clipping", "Noise Addition", and "DPSGD" highlights that differential privacy is a first-class concern in this architecture, not an afterthought.

4. **Component Labeling:** The modules D⁺ and D⁻ are not further defined but likely represent discriminator or decoder networks specific to positive and negative data streams.

### Interpretation

This diagram outlines a sophisticated graph representation learning framework with two key innovations:

1. **Contrastive Structural Learning:** By explicitly separating positive and negative edges and processing them through differently constrained path generators, the model is designed to learn a nuanced embedding space that captures not just what connections exist (positive), but also what connections are absent or invalid (negative). The longer path length for negatives implies that "non-relationship" is a more complex, higher-order property to model.

2. **Privacy-Aware Graph ML:** The integration of DPSGD into the gradient processing pipeline addresses a major challenge in graph machine learning: the risk of memorizing and leaking sensitive relational data. This makes the framework suitable for applications on confidential networks (e.g., social, financial, or medical graphs).

The overall flow suggests a method for training a graph generator or discriminator. The "Real" and "Fake" outputs at the end could be used in an adversarial setup where D⁺ and D⁻ act as critics, or the entire system could be a private graph auto-encoder where the goal is to reconstruct real graph structures while protecting individual edge privacy. The "Downstream tasks" block is the ultimate beneficiary of the privately learned embeddings, which could include node classification, link prediction, or graph classification.

EXPERT: jina-vlm VERSION 1

RUNTIME: jina-vlm

INTEL_VERIFIED

## Diagram Type: Flowchart

### Overview

The image is a flowchart that illustrates the process of generating a graph from an original graph and an active graph. The flowchart is divided into three main sections: (i) the original graph, (ii) the embedded space, and (iii) the downstream tasks.

### Components/Axes

- **Original Graph**: The initial graph with nodes and edges.

- **Active Graph**: The graph that is being processed or modified.

- **Embedded Space**: A space where the original and active graphs are embedded.

- **Downstream Tasks**: Tasks that are performed on the embedded space.

- **Legend**: A legend that explains the symbols and colors used in the diagram.

### Detailed Analysis

- **Section (i)**: The original graph is shown with nodes and edges. The nodes are labeled with a unique identifier, and the edges are colored to indicate the type of relationship between the nodes (positive or negative).

- **Section (ii)**: The embedded space is shown with a grid of nodes and edges. The nodes are labeled with a unique identifier, and the edges are colored to indicate the type of relationship between the nodes (positive or negative). The embedded space is used to represent the original and active graphs in a higher-dimensional space.

- **Section (iii)**: The downstream tasks are shown with arrows indicating the flow of data from the embedded space to the downstream tasks. The downstream tasks include gradient clipping, gradient averaging, and noise addition.

### Key Observations

- The flowchart shows both the original graph and the active graph being embedded in the embedded space, which represents them in a higher-dimensional space.

- The downstream tasks are performed on the embedded space, and the results are used to generate the final graph.

### Interpretation

The flowchart demonstrates the process of generating a graph from an original graph and an active graph. The embedded space is used to represent the original and active graphs in a higher-dimensional space, and the downstream tasks are performed on the embedded space to generate the final graph.

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

# Technical Document Extraction: Graph-Based Embedding and Downstream Processing

## Diagram Overview

The image depicts a three-stage pipeline for graph-based embedding and downstream processing, involving graph decomposition, embedding space construction, and gradient-based optimization with noise injection. Key components are color-coded and spatially organized.

---

### **Section (i): Graph Decomposition**

#### **Components**

1. **Original Graph (G)**

- **Nodes**: Represented as white circles (rooted nodes).

- **Edges**:

- Blue: Positive edges (directly linked nodes).

- Red: Negative edges (indirectly linked nodes).

- **Structure**: A 6-node graph with mixed edge types.

2. **Subgraphs**

- **Positive Graph (G⁺)**:

- Derived from G by retaining only blue edges.

- Contains 5 nodes and 4 edges.

- **Negative Graph (G⁻)**:

- Derived from G by retaining only red edges.

- Contains 5 nodes and 4 edges.

#### **Legend**

- **Nodes**: White circles (rooted nodes).

- **Edges**:

- Blue: Positive edges.

- Red: Negative edges.

---

### **Section (ii): Embedding Space Construction**

#### **Process Flow**

1. **Embedding Space (θₑ)**

- **Input**: G⁺ and G⁻ subgraphs.

- **Output**: Embedding vectors for nodes.

- **Key Operations**:

- **Positive Probability (P⁺)**: Calculated for directly linked nodes in G⁺.

- **Negative Probability (P⁻)**: Calculated for directly linked nodes in G⁻.

2. **BFS-Tree Construction**

- **Purpose**: Identify paths for guidance.

- **Constraints**:

- Max path length (L): 4.

- Max path count (N): 2.

- **Output**: Real and fake edges for guidance.

3. **Downstream Tasks**

- **Guidance**: Uses BFS-tree paths to refine embeddings.

- **Post-processing**: Adjusts embeddings based on real/fake edge guidance.

#### **Legend**

- **Real Edges**: Blue (positive) and red (negative) circles.

- **Fake Edges**: Gray circles (positive) and gray crosses (negative).

---

### **Section (iii): Gradient Optimization with Noise**

#### **Components**

1. **Gradient Clipping**

- **Process**: Limits gradient magnitudes to prevent instability.

- **Notation**: `∇` with clipping symbols (↑/↓).

2. **Noise Addition**

- **Distribution**: Gaussian noise (𝒩).

- **Purpose**: Regularization via DPSGD (Differentially Private Stochastic Gradient Descent).

3. **Embedding Space (θₘ)**

- **Input**: Clipped gradients + noise.

- **Output**: Updated embeddings for downstream tasks.

4. **Real/Fake Edge Classification**

- **Real Edges**: Blue (positive) and red (negative) circles.

- **Fake Edges**: Gray circles (positive) and gray crosses (negative).

#### **Legend**

- **Real Edges**: Blue (positive) and red (negative) circles.

- **Fake Edges**: Gray circles (positive) and gray crosses (negative).

---

### **Cross-Sectional Connections**

1. **Data Flow**

- Original graph G → Decomposed into G⁺ and G⁻ → Embedding space (θₑ) → Gradient optimization (θₘ) → Downstream tasks.

2. **Key Equations**

- **Positive Probability**: `P⁺(v_i | v_j)` for directly linked nodes in G⁺.

- **Negative Probability**: `P⁻(v_i | v_j)` for directly linked nodes in G⁻.

- **Gradient Update**: `θₘ = θₑ - (1/n)Σ[clipped_gradients + noise]`.
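The gradient update above can be made concrete with a small sketch. It is one reading of the transcribed formula, not a confirmed implementation: a single noise vector is added to the sum of clipped gradients before averaging, and a `learning_rate` parameter (absent from the formula, so set to 1.0 by default) is introduced as an assumption. The noise is passed in explicitly so the example stays deterministic.

```python
def apply_private_update(theta, clipped_grads, noise, learning_rate=1.0):
    """Return theta - learning_rate * (sum(clipped_grads) + noise) / n.

    Hypothetical helper: theta and noise are flat lists of floats, and
    clipped_grads is a list of per-sample gradients of the same length.
    """
    n = len(clipped_grads)
    noisy_mean = [
        (sum(g[k] for g in clipped_grads) + noise[k]) / n
        for k in range(len(theta))
    ]
    return [t - learning_rate * m for t, m in zip(theta, noisy_mean)]

theta = [1.0, 1.0]
grads = [[0.5, 0.0], [0.5, 1.0]]
no_noise = apply_private_update(theta, grads, noise=[0.0, 0.0])
with_noise = apply_private_update(theta, grads, noise=[1.0, -1.0])
```

Comparing `no_noise` with `with_noise` shows how the injected noise shifts each coordinate of the update by `noise[k] / n`.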

---

### **Critical Observations**

1. **Color Consistency**

- All blue edges/circles correspond to positive elements (G⁺, real edges).

- All red edges/circles correspond to negative elements (G⁻, real edges).

- Gray elements denote fake edges in guidance paths.

2. **Spatial Grounding**

- **Legend Position**: Top-left corner (coordinates [0, 0]).

- **Section (i)**: Leftmost, showing graph decomposition.

- **Section (ii)**: Central, focusing on embedding and guidance.

- **Section (iii)**: Rightmost, detailing gradient optimization.

3. **Trend Verification**

- No numerical data present; trends are inferred from process flow (e.g., gradient clipping reduces magnitude, noise addition introduces variability).

---

### **Conclusion**

The diagram outlines a graph-based machine learning pipeline with explicit handling of positive/negative edges, embedding space refinement, and differentially private optimization. All textual elements (labels, legends, equations) are transcribed verbatim, with spatial relationships and color mappings rigorously validated.
