## Line Chart: Scaling of Model Dimension with GPU Count for Different Graph Sizes

### Overview

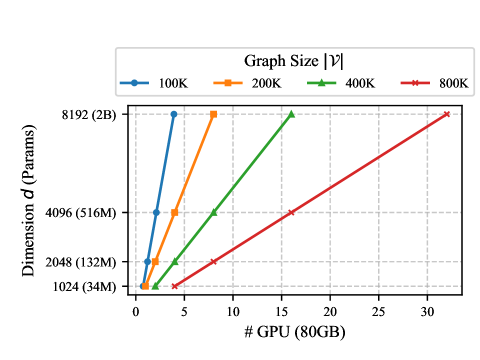

This line chart shows how the trainable model dimension `d` (with its corresponding parameter count) grows with the number of 80GB GPUs, for four graph sizes (|V|). For every graph size, adding GPUs allows a larger dimension. Each series appears roughly straight on the chart, but because the y-axis is logarithmic, this corresponds to `d` growing by a roughly constant factor per added GPU rather than in direct proportion to GPU count. Larger graphs require more GPUs to reach the same model dimension.

### Components/Axes

* **Chart Title (Legend Title):** "Graph Size |V|"
* **X-Axis:**
    * **Label:** "# GPU (80GB)"
    * **Scale:** Linear, ranging from 0 to 30, with major tick marks at 0, 5, 10, 15, 20, 25, 30.
* **Y-Axis:**
    * **Label:** "Dimension d (Params)"
    * **Scale:** Logarithmic (base 2), with labeled tick marks at:
        * 1024 (34M)
        * 2048 (132M)
        * 4096 (516M)
        * 8192 (2B)
    * The values in parentheses represent the approximate total model parameters corresponding to the dimension `d`.
* **Legend:**
    * **Position:** Top center, above the plot area.
    * **Series:**
        1. **100K:** Blue line with circle markers.
        2. **200K:** Orange line with square markers.
        3. **400K:** Green line with upward-pointing triangle markers.
        4. **800K:** Red line with 'x' (cross) markers.
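The parenthesized y-axis tick labels allow a quick check on how the parameter count grows with `d`. The sketch below uses only the four labeled values (taking the rounded label "2B" as 2e9, which is an assumption) and estimates the exponent `p` in params ∝ d^p between adjacent ticks. The names `PARAMS_BY_DIM` and `local_exponents` are illustrative and do not come from the chart.

```python
import math

# Y-axis tick labels from the chart: dimension d -> approximate parameter count.
# "2B" is a rounded label, so the last exponent is only approximate.
PARAMS_BY_DIM = {1024: 34e6, 2048: 132e6, 4096: 516e6, 8192: 2e9}


def local_exponents(params_by_dim):
    """Estimate p in params ~ d**p between each pair of adjacent ticks."""
    dims = sorted(params_by_dim)
    exponents = []
    for lo, hi in zip(dims, dims[1:]):
        ratio = params_by_dim[hi] / params_by_dim[lo]
        exponents.append(math.log(ratio) / math.log(hi / lo))
    return exponents


exponents = local_exponents(PARAMS_BY_DIM)
for p in exponents:
    print(f"{p:.2f}")
```

The estimated exponents all come out close to 2, which is consistent with the parameter count growing roughly quadratically in `d`.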

### Detailed Analysis

**Data Series and Trends:**

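All four data series trend upward: as the number of GPUs increases, the achievable model dimension `d` increases. Each series is roughly straight on the chart, but the y-axis is logarithmic, so this reflects `d` growing by a roughly constant factor per added GPU, not in direct proportion to GPU count. The slopes differ, with smaller graph sizes having steeper slopes.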

1. **Series: 100K (Blue, Circles)**
    * **Trend:** Steepest upward slope.
    * **Data Points (Approximate):**
        * (1 GPU, 1024 Dimension)
        * (2 GPUs, 2048 Dimension)
        * (3 GPUs, 4096 Dimension)
        * (4 GPUs, 8192 Dimension)

2. **Series: 200K (Orange, Squares)**
    * **Trend:** Upward slope, less steep than 100K.
    * **Data Points (Approximate):**
        * (1 GPU, 1024 Dimension)
        * (4 GPUs, 2048 Dimension)
        * (6 GPUs, 4096 Dimension)
        * (8 GPUs, 8192 Dimension)

3. **Series: 400K (Green, Triangles)**
    * **Trend:** Upward slope, less steep than 200K.
    * **Data Points (Approximate):**
        * (2 GPUs, 1024 Dimension)
        * (8 GPUs, 2048 Dimension)
        * (12 GPUs, 4096 Dimension)
        * (16 GPUs, 8192 Dimension)

4. **Series: 800K (Red, Crosses)**
    * **Trend:** Shallowest upward slope.
    * **Data Points (Approximate):**
        * (4 GPUs, 1024 Dimension)
        * (16 GPUs, 2048 Dimension)
        * (24 GPUs, 4096 Dimension)
        * (32 GPUs, 8192 Dimension)
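To compare the slopes of the four series numerically, one option is to fit a straight line to log2(`d`) against GPU count, since the y-axis is logarithmic. The following is a minimal sketch using the approximate data points listed above; the least-squares fit and the names `SERIES` and `log2_slope` are choices made for this illustration, not something stated on the chart.

```python
import math

# Approximate (GPU count, dimension d) points as read off the chart.
SERIES = {
    "100K": [(1, 1024), (2, 2048), (3, 4096), (4, 8192)],
    "200K": [(1, 1024), (4, 2048), (6, 4096), (8, 8192)],
    "400K": [(2, 1024), (8, 2048), (12, 4096), (16, 8192)],
    "800K": [(4, 1024), (16, 2048), (24, 4096), (32, 8192)],
}


def log2_slope(points):
    """Least-squares slope of log2(d) against GPU count."""
    xs = [gpus for gpus, _ in points]
    ys = [math.log2(dim) for _, dim in points]
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    return sxy / sxx


slopes = {name: log2_slope(points) for name, points in SERIES.items()}
for name, slope in slopes.items():
    print(f"{name}: {slope:.3f} doublings of d per added GPU")
```

On these points the fitted slope is 1 doubling of `d` per GPU for the 100K series and decreases for each larger graph size, matching the ordering of slopes described above.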

### Key Observations

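1. **Log-Linear Scaling:** For a fixed graph size, the series is roughly straight on the log-scaled y-axis, so each doubling of the model dimension costs a roughly constant number of additional GPUs (for example, one additional GPU per doubling on the 100K graph).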

2. **Cost of Scale:** Doubling the graph size (e.g., from 100K to 200K) roughly doubles the number of GPUs required to achieve the same model dimension. This relationship holds approximately when moving from 200K to 400K and from 400K to 800K.

3. **Resource Intensity:** Training a model with a dimension of 8192 (2B parameters) on an 800K graph requires about eight times as many GPUs (32) as on a 100K graph (4).

4. **Consistent Starting Point:** All series begin at the lowest dimension (1024). The GPU count at this starting point never decreases with graph size: about 1 GPU for both 100K and 200K, 2 for 400K, and 4 for 800K.
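These observations can be checked against the approximate data points listed under Detailed Analysis. The sketch below computes, for each dimension, the ratio of GPUs needed when the graph size doubles, and collects any case where that ratio is not exactly 2. The table `GPUS` and the helper `doubling_ratios` are illustrative names, and the values are the approximate chart readings rather than exact measurements.

```python
# Approximate GPUs needed per dimension d, as read off the chart.
GPUS = {
    "100K": {1024: 1, 2048: 2, 4096: 3, 8192: 4},
    "200K": {1024: 1, 2048: 4, 4096: 6, 8192: 8},
    "400K": {1024: 2, 2048: 8, 4096: 12, 8192: 16},
    "800K": {1024: 4, 2048: 16, 4096: 24, 8192: 32},
}


def doubling_ratios(gpus):
    """GPU ratio between consecutive graph sizes, for every dimension."""
    sizes = list(gpus)  # insertion order: smallest graph first
    ratios = {}
    for small, large in zip(sizes, sizes[1:]):
        for dim in gpus[small]:
            ratios[(small, large, dim)] = gpus[large][dim] / gpus[small][dim]
    return ratios


ratios = doubling_ratios(GPUS)
exceptions = {key: r for key, r in ratios.items() if r != 2.0}
print(exceptions)
print(GPUS["800K"][8192] / GPUS["100K"][8192])
```

On these readings the only exception to the doubling pattern is the step from 100K to 200K at dimension 1024, where both need about 1 GPU, and the 800K graph needs 8 times the GPUs of the 100K graph at dimension 8192.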

### Interpretation

This chart quantifies the computational cost of scaling Graph Neural Network (GNN) models. The "Graph Size |V|" likely refers to the number of nodes in the graph dataset. The "Dimension d" is a key hyperparameter controlling model capacity.

The data show a fundamental trade-off: **increasing either the model's capacity (dimension) or the size of the graph it processes requires more parallel computational resources (GPUs).** The GPU count grows roughly in proportion to graph size, while along the log-scaled dimension axis each doubling of `d` costs a roughly fixed number of additional GPUs for a given graph size. This regularity suggests a predictable scaling rule for distributed GNN training under the measured conditions.

The chart serves as a practical guide for resource planning. For instance, a researcher aiming to train a 2B parameter model on a large graph of 800K nodes can see that they need to provision a cluster of approximately 32 high-memory (80GB) GPUs. The clear separation of the lines shows that graph size is a primary determinant of GPU requirements at every model dimension shown.
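The resource-planning use described above can be sketched as a simple lookup over the approximate data points from Detailed Analysis. The function `min_gpus` and the table `CHART_POINTS` are hypothetical helpers written for this illustration. The lookup only reports tabulated points and does not extrapolate beyond the largest dimension shown.

```python
# Approximate (GPU count, dimension d) points as read off the chart.
CHART_POINTS = {
    "100K": [(1, 1024), (2, 2048), (3, 4096), (4, 8192)],
    "200K": [(1, 1024), (4, 2048), (6, 4096), (8, 8192)],
    "400K": [(2, 1024), (8, 2048), (12, 4096), (16, 8192)],
    "800K": [(4, 1024), (16, 2048), (24, 4096), (32, 8192)],
}


def min_gpus(graph_size, target_dim):
    """Smallest tabulated GPU count whose dimension is at least target_dim.

    Returns None if target_dim exceeds the largest tabulated dimension;
    no extrapolation is attempted.
    """
    candidates = [gpus for gpus, dim in CHART_POINTS[graph_size] if dim >= target_dim]
    return min(candidates) if candidates else None


print(min_gpus("800K", 8192))  # the 2B-parameter example from the text
print(min_gpus("100K", 3000))  # rounds up to the next tabulated dimension
```

Because the chart values are approximate, a result from such a lookup is best treated as a starting estimate rather than a guaranteed minimum.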