## Grouped Bar Chart: Qwen2.5-14B-Instruct Model Accuracy

### Overview

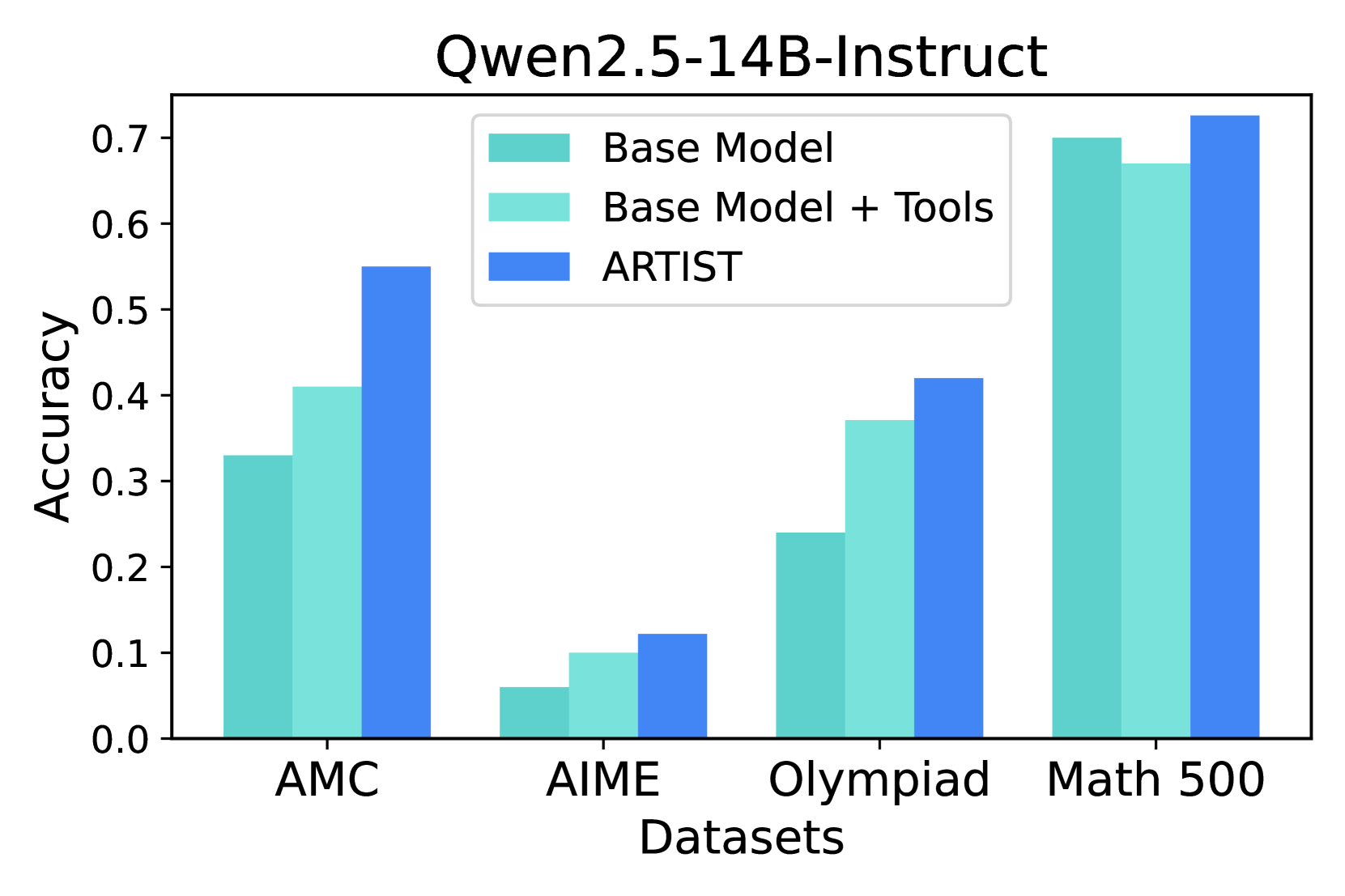

This image is a grouped bar chart titled "Qwen2.5-14B-Instruct". It compares the accuracy of three model configurations on four mathematical problem-solving datasets.

### Components/Axes

* **Chart Title:** "Qwen2.5-14B-Instruct" (centered at the top).

* **Y-Axis:** Labeled "Accuracy". The scale runs from 0.0 to 0.7, with major tick marks at 0.1 intervals (0.0, 0.1, 0.2, 0.3, 0.4, 0.5, 0.6, 0.7).

* **X-Axis:** Labeled "Datasets". It contains four categorical groups:

    1. AMC

    2. AIME

    3. Olympiad

    4. Math 500

* **Legend:** Positioned in the top-center of the chart area. It defines three data series by color:

    * **Base Model:** Represented by a teal/turquoise bar (approximate hex: #66c2a5).

    * **Base Model + Tools:** Represented by a light teal/aquamarine bar (approximate hex: #abdda4).

    * **ARTIST:** Represented by a medium blue bar (approximate hex: #3288bd).

### Detailed Analysis

The chart presents accuracy scores for each model configuration on each dataset. Values are approximate based on visual alignment with the y-axis.

**1. AMC Dataset (Leftmost group):**

* **Trend:** Accuracy increases from Base Model to Base Model + Tools to ARTIST.

* **Data Points:**

    * Base Model: ~0.33

    * Base Model + Tools: ~0.41

    * ARTIST: ~0.55

**2. AIME Dataset (Second group from left):**

* **Trend:** Accuracy increases from Base Model to Base Model + Tools to ARTIST. This dataset shows the lowest overall scores.

* **Data Points:**

    * Base Model: ~0.06

    * Base Model + Tools: ~0.10

    * ARTIST: ~0.12

**3. Olympiad Dataset (Third group from left):**

* **Trend:** Accuracy increases from Base Model to Base Model + Tools to ARTIST.

* **Data Points:**

    * Base Model: ~0.24

    * Base Model + Tools: ~0.37

    * ARTIST: ~0.42

**4. Math 500 Dataset (Rightmost group):**

* **Trend:** Accuracy is high for all three configurations. This is the only dataset where Base Model + Tools falls slightly below the Base Model; ARTIST scores slightly above both.

* **Data Points:**

    * Base Model: ~0.70

    * Base Model + Tools: ~0.67

    * ARTIST: ~0.73
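The approximate values listed above are enough to redraw a rough version of the chart. The following is a minimal sketch, assuming matplotlib is available; the function names `bar_offsets` and `plot_chart`, the output file name, and the bar width are illustrative choices, not details taken from the original figure. The colors are the approximate hex values from the legend description.

```python
# Approximate values read off the chart; not exact published results.
DATASETS = ["AMC", "AIME", "Olympiad", "Math 500"]
SCORES = {
    "Base Model": [0.33, 0.06, 0.24, 0.70],
    "Base Model + Tools": [0.41, 0.10, 0.37, 0.67],
    "ARTIST": [0.55, 0.12, 0.42, 0.73],
}
# Approximate hex colors from the legend description.
SERIES_COLORS = {
    "Base Model": "#66c2a5",
    "Base Model + Tools": "#abdda4",
    "ARTIST": "#3288bd",
}


def bar_offsets(n_series, width):
    """Return x offsets that center n_series adjacent bars on a group tick."""
    return [(i - (n_series - 1) / 2) * width for i in range(n_series)]


def plot_chart(path="qwen_accuracy.png", width=0.25):
    """Draw the grouped bar chart and save it to `path` (hypothetical name)."""
    # Imported here so the data and offset helper work without matplotlib.
    import matplotlib
    matplotlib.use("Agg")  # render to a file, no display needed
    import matplotlib.pyplot as plt

    fig, ax = plt.subplots()
    group_ticks = list(range(len(DATASETS)))
    offsets = bar_offsets(len(SCORES), width)
    for offset, (name, values) in zip(offsets, SCORES.items()):
        xs = [tick + offset for tick in group_ticks]
        ax.bar(xs, values, width=width, label=name, color=SERIES_COLORS[name])

    ax.set_xticks(group_ticks)
    ax.set_xticklabels(DATASETS)
    ax.set_xlabel("Datasets")
    ax.set_ylabel("Accuracy")
    ax.set_title("Qwen2.5-14B-Instruct")
    ax.legend(loc="upper center")
    fig.savefig(path)
    plt.close(fig)
```

Calling `plot_chart()` writes the image to disk. Each series is shifted by a fixed offset around the group tick, which is what places the three bars side by side within each dataset group.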

### Key Observations

1. **Consistent Hierarchy:** The ARTIST model configuration achieves the highest accuracy on all four datasets.

2. **Dataset Difficulty:** The AIME dataset appears to be the most challenging, with all configurations scoring below 0.15. The Math 500 dataset appears to be the least challenging, with all configurations scoring at or above roughly 0.67.

3. **Impact of Tools:** Adding tools to the Base Model ("Base Model + Tools") provides a clear accuracy boost on the AMC, AIME, and Olympiad datasets. However, on the Math 500 dataset, its performance is slightly *lower* than the Base Model alone.

4. **Greatest Improvement:** The largest gain from the Base Model to ARTIST occurs on the AMC dataset: approximately 0.22 in accuracy (22 percentage points), compared with roughly 0.18 on Olympiad, 0.06 on AIME, and 0.03 on Math 500.
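The observations above follow from simple differences between the approximate values in the Detailed Analysis section. A minimal sketch of that arithmetic, where the names `ACCURACY` and `gain` are illustrative and the numbers are visual estimates rather than exact results:

```python
# Approximate accuracies read off the chart; not exact published results.
ACCURACY = {
    "AMC":      {"Base Model": 0.33, "Base Model + Tools": 0.41, "ARTIST": 0.55},
    "AIME":     {"Base Model": 0.06, "Base Model + Tools": 0.10, "ARTIST": 0.12},
    "Olympiad": {"Base Model": 0.24, "Base Model + Tools": 0.37, "ARTIST": 0.42},
    "Math 500": {"Base Model": 0.70, "Base Model + Tools": 0.67, "ARTIST": 0.73},
}


def gain(dataset, target, baseline="Base Model"):
    """Absolute accuracy difference (target minus baseline), rounded to 2 places."""
    scores = ACCURACY[dataset]
    return round(scores[target] - scores[baseline], 2)


artist_gain = {d: gain(d, "ARTIST") for d in ACCURACY}
tools_gain = {d: gain(d, "Base Model + Tools") for d in ACCURACY}

# Dataset with the largest Base Model -> ARTIST improvement.
largest = max(artist_gain, key=artist_gain.get)

# True if ARTIST is the top-scoring configuration on every dataset.
artist_best_everywhere = all(
    max(scores, key=scores.get) == "ARTIST" for scores in ACCURACY.values()
)

for d in ACCURACY:
    print(f"{d:9s} tools {tools_gain[d]:+.2f}  ARTIST {artist_gain[d]:+.2f}")
```

Because the inputs are read to two decimal places from the chart, differences of a few hundredths (such as the Math 500 tools delta) should be treated as indicative only.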

### Interpretation

This chart evaluates the mathematical reasoning accuracy of the Qwen2.5-14B-Instruct model under three configurations. The **ARTIST** method or framework scores highest on every dataset, from competition-level sets (AMC, AIME, Olympiad) to the more general Math 500 benchmark, although the size of its gain over the Base Model varies widely, from roughly 0.03 on Math 500 to roughly 0.22 on AMC.

ARTIST also outperforms "Base Model + Tools" on every dataset, which suggests that its advantage does not come from tool augmentation alone and likely involves a more sophisticated approach to problem-solving. On Math 500, "Base Model + Tools" scores slightly below the Base Model (~0.67 vs. ~0.70). One possible explanation is that on more straightforward problems, tool use introduces minor overhead or errors without a compensating benefit, although a gap this small is close to the precision of values read off the chart. Overall, the chart supports the view that the ARTIST approach improves the mathematical problem-solving accuracy of this language model, with the largest gains on AMC and Olympiad.