## Diagram: Optimization Process Flow

### Overview

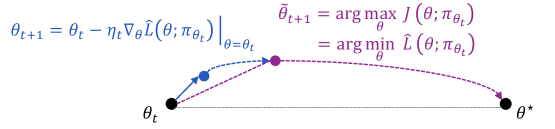

The image is a technical diagram illustrating two different optimization update rules in machine learning, visually comparing their trajectories from an initial parameter state (θ_t) toward an optimal state (θ*). It combines mathematical equations with a graphical representation of parameter space movement.

### Components/Axes

**Top Section (Equations):**

1. **Left Equation (Blue Text):**

`θ_{t+1} = θ_t - η_t ∇_θ L̂(θ; π_{θ_t}) |_{θ=θ_t}`

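* This is a single gradient descent step on the estimated loss `L̂`. The notation `|_{θ=θ_t}` means the gradient is evaluated at the current parameters `θ_t`.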

* **Components:** `θ_{t+1}` (updated parameters), `θ_t` (current parameters), `η_t` (learning rate), `∇_θ` (gradient operator), `L̂` (estimated loss function), `π_{θ_t}` (policy parameterized by `θ_t`).

2. **Right Equation (Purple Text):**

`θ̃_{t+1} = arg max_θ J(θ; π_{θ_t})`

`= arg min_θ L̂(θ; π_{θ_t})`

* This defines an alternative update rule that seeks the parameters maximizing an objective `J` (or equivalently, minimizing the estimated loss `L̂`) directly, given the current policy `π_{θ_t}`.

* **Components:** `θ̃_{t+1}` (updated parameters via this rule), `arg max_θ`/`arg min_θ` (argument of the maximum/minimum), `J` (objective function).
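The difference between the two rules can be sketched on a toy problem. The example below is an illustration only, not the figure's actual loss: it assumes a hypothetical quadratic surrogate `L̂(θ) = 0.5 · Σ a_i (θ_i − c_i)²`, where the names `curvature`, `center`, and `lr` are made up for this sketch. The gradient rule moves a small distance from `θ_t`, while the `arg min` rule jumps straight to the surrogate's minimizer.

```python
# Toy comparison of the two update rules on a hypothetical quadratic surrogate.
# Assumption: L_hat(theta) = 0.5 * sum_i a_i * (theta_i - c_i)^2, where `center`
# is the surrogate's minimizer (not necessarily the true optimum theta*).

def surrogate_loss(theta, curvature, center):
    """Value of the assumed quadratic surrogate loss at theta."""
    return 0.5 * sum(a * (th - c) ** 2 for a, th, c in zip(curvature, theta, center))

def surrogate_grad(theta, curvature, center):
    """Gradient of the assumed quadratic surrogate loss at theta."""
    return [a * (th - c) for a, th, c in zip(curvature, theta, center)]

def gradient_step(theta, lr, curvature, center):
    """Left equation: theta_{t+1} = theta_t - lr * grad L_hat(theta_t)."""
    grad = surrogate_grad(theta, curvature, center)
    return [th - lr * g for th, g in zip(theta, grad)]

def argmin_step(curvature, center):
    """Right equation: for a quadratic with positive curvature, the
    minimizer is available in closed form and equals `center`."""
    return list(center)

theta_t = [0.0, 0.0]      # current parameters
curvature = [1.0, 4.0]    # hypothetical per-coordinate curvature a_i > 0
center = [3.0, 1.0]       # hypothetical surrogate minimizer c
lr = 0.125                # hypothetical learning rate eta_t

theta_next = gradient_step(theta_t, lr, curvature, center)   # small local step
theta_tilde = argmin_step(curvature, center)                 # full jump

print("gradient step:", theta_next, surrogate_loss(theta_next, curvature, center))
print("arg min step: ", theta_tilde, surrogate_loss(theta_tilde, curvature, center))
```

In this sketch the `arg min` step drives the surrogate loss to its minimum in one update, whereas the gradient step only reduces it. Whether that also brings the parameters closer to the true optimum `θ*` depends on how well the surrogate matches the true objective.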

**Bottom Section (Visual Diagram):**

* **Points:**

    * `θ_t`: Black dot, positioned at the bottom-left. Represents the starting parameter state at time `t`.

    * `θ_{t+1}`: Blue dot, positioned to the right and slightly above `θ_t`. Represents the parameter state after one step of the gradient descent update (left equation).

    * `θ̃_{t+1}`: Purple dot, positioned further right and higher than `θ_{t+1}`. Represents the parameter state after one step of the `arg max`/`arg min` update (right equation).

    * `θ*`: Black dot, positioned at the bottom-right. Represents the optimal parameter state.

* **Arrows/Paths:**

    * **Solid Blue Arrow:** Connects `θ_t` to `θ_{t+1}`. Corresponds to the gradient descent update (left equation).

    * **Dashed Purple Arrow:** Connects `θ_t` to `θ̃_{t+1}`. Corresponds to the `arg max`/`arg min` update (right equation).

    * **Dotted Black Line:** Connects `θ_t` directly to `θ*`. Represents a hypothetical direct path to the optimum.

    * **Dashed Purple Arc:** Connects `θ̃_{t+1}` to `θ*`. Suggests a subsequent path from the intermediate point to the optimum.

### Detailed Analysis

* **Spatial Grounding & Color Correspondence:**

    * The **blue** text of the left equation corresponds to the **blue** dot (`θ_{t+1}`) and the **solid blue arrow**.

    * The **purple** text of the right equation corresponds to the **purple** dot (`θ̃_{t+1}`), the **dashed purple arrow**, and the **dashed purple arc**.

    * The **black** dots (`θ_t`, `θ*`) and the **dotted black line** are neutral, representing the start and end points.

* **Trend Verification:**

    * The **gradient descent path (blue)** shows a small, incremental step from `θ_t` in the general direction of `θ*`. It lands at `θ_{t+1}`, which is not on the direct line to `θ*`.

    * The **`arg max`/`arg min` path (purple dashed)** shows a larger leap from `θ_t` to `θ̃_{t+1}`, which lies higher than `θ_{t+1}` and further along the horizontal axis toward `θ*`. Like `θ_{t+1}`, it is off the direct line to `θ*`.

    * The **dotted black line** shows the ideal, straight-line path from start to optimum.

### Key Observations

1. The diagram visually contrasts a local, gradient-based step (blue) with a more global, objective-based step (purple).

2. The point `θ̃_{t+1}` is depicted as being "higher" (potentially indicating a higher objective value `J`) and further along the path to `θ*` than `θ_{t+1}` after a single update.

3. The dashed purple arc from `θ̃_{t+1}` to `θ*` implies that the `arg max` update places the parameters on a more favorable trajectory for reaching the optimum, possibly requiring fewer subsequent steps.

### Interpretation

This diagram is likely from a reinforcement learning or optimization theory context. It illustrates a conceptual comparison between two fundamental update strategies:

* **Gradient Descent (Blue):** A first-order method that follows the local slope of the loss landscape. Each step is cheap to compute, but because it uses only local information, convergence can be slow, and the method may stall in local minima or follow a circuitous path.

* **Direct Objective Optimization (Purple):** A method that, at each step, seeks the parameters that would be optimal *if the current policy (π_{θ_t}) were held fixed* inside the objective. This is a more aggressive, "look-ahead" or "policy improvement" step. Algorithms such as Trust Region Policy Optimization (TRPO) and Proximal Policy Optimization (PPO) constrain steps of this kind to ensure stability: TRPO with a KL-divergence trust region, PPO with a clipped probability ratio.
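To illustrate how such a step can be constrained, here is a minimal sketch of PPO's clipped surrogate objective for individual samples. It is not taken from the diagram; the function names and the default `clip_eps=0.2` are assumptions made for this example. `ratio` stands for the probability ratio between the candidate policy and the current policy `π_{θ_t}`, and `advantage` for an advantage estimate.

```python
# Minimal sketch of PPO's clipped surrogate objective (illustrative only).
# Assumptions: `ratio` = pi_theta(a|s) / pi_theta_t(a|s), `advantage` is an
# advantage estimate, and clip_eps = 0.2 is a hypothetical default.

def clipped_surrogate(ratio, advantage, clip_eps=0.2):
    """Per-sample clipped objective: min(r * A, clip(r, 1 - eps, 1 + eps) * A)."""
    clipped_ratio = max(1.0 - clip_eps, min(ratio, 1.0 + clip_eps))
    return min(ratio * advantage, clipped_ratio * advantage)

def mean_clipped_objective(ratios, advantages, clip_eps=0.2):
    """Average of the per-sample clipped objective over a batch."""
    terms = [clipped_surrogate(r, a, clip_eps) for r, a in zip(ratios, advantages)]
    return sum(terms) / len(terms)

# With a positive advantage, pushing the ratio past 1 + eps earns no extra credit.
print(clipped_surrogate(1.5, 1.0))
# With a negative advantage, pushing the ratio below 1 - eps earns no extra credit.
print(clipped_surrogate(0.5, -1.0))
# Inside the clipping range the objective is the plain product r * A.
print(clipped_surrogate(1.0, 2.0))
```

Because the objective stops improving once the ratio leaves the interval `[1 - clip_eps, 1 + clip_eps]`, maximizing it gives the optimizer no incentive to move far from `π_{θ_t}` in a single update, which tempers the otherwise unconstrained `arg max` step.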

The visual metaphor suggests that while the gradient step makes safe, small progress, the direct optimization step makes a more significant leap toward regions of higher reward (or lower loss), potentially leading to faster overall convergence to the optimum `θ*`. The diagram argues for the potential efficiency of the latter approach by showing its intermediate point (`θ̃_{t+1}`) as being qualitatively closer to the goal.
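One way to relate the two rules, offered here as a standard identity rather than something stated in the figure, is to write the gradient step itself as an `arg min`. Assuming `η_t > 0` and that `L̂` is differentiable at `θ_t`, the gradient step minimizes a linearization of `L̂` plus a quadratic penalty on the distance from `θ_t`:

```latex
% g_t denotes the gradient used in the left (blue) equation.
g_t = \nabla_\theta \hat{L}(\theta; \pi_{\theta_t}) \big|_{\theta = \theta_t}

\theta_{t+1}
  = \arg\min_\theta \Big[ \hat{L}(\theta_t; \pi_{\theta_t})
      + \langle g_t,\, \theta - \theta_t \rangle
      + \frac{1}{2\eta_t} \lVert \theta - \theta_t \rVert^2 \Big]

% Setting the gradient of the bracket to zero:
g_t + \frac{1}{\eta_t} (\theta - \theta_t) = 0
  \quad\Longrightarrow\quad
  \theta_{t+1} = \theta_t - \eta_t\, g_t
```

Read this way, the blue step minimizes a local, penalized approximation of `L̂`, while the purple step minimizes `L̂(θ; π_{θ_t})` itself with no penalty, which is consistent with the larger leap drawn in the diagram.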