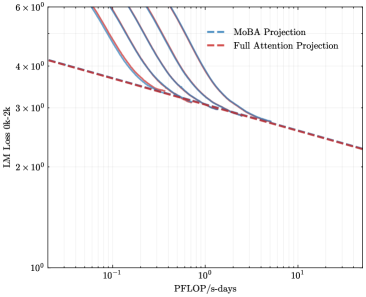

## Line Chart: Comparison of MoBA Projection vs. Full Attention Projection Scaling Laws

### Overview

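The image is a log-log line chart comparing the projected scaling behavior of two attention mechanisms for language models, MoBA and Full Attention. The curves are labeled "MoBA Projection" and "Full Attention Projection"; here "Projection" most likely denotes a fitted scaling-law curve extended across the compute range, not a projection layer. The chart plots model loss against compute, showing how each method's loss falls as compute increases.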

### Components/Axes

* **Chart Type:** 2D line chart with logarithmic scales on both axes.

* **X-Axis:**
  * **Label:** `PFLOP/s-days`
  * **Scale:** Logarithmic (base 10).
  * **Range & Ticks:** Visible ticks at `10^-1` (0.1), `10^0` (1), and `10^1` (10). The axis extends slightly beyond these points.

* **Y-Axis:**
  * **Label:** `LM Loss (bpb)`, likely "Language Model Loss in bits per byte."
  * **Scale:** Logarithmic (base 10).
  * **Range & Ticks:** Visible ticks at `10^0` (1), `2 x 10^0` (2), `3 x 10^0` (3), `4 x 10^0` (4), and `6 x 10^0` (6).

* **Legend:**
  * **Position:** Top-right corner of the plot area.
  * **Entries:**
    1. `MoBA Projection`: blue dashed line (`--`).
    2. `Full Attention Projection`: red dashed line (`--`).
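The x-axis unit is an amount of work, not a rate: one PFLOP/s-day is 10^15 floating-point operations per second sustained for one day (86,400 seconds), or 8.64e19 operations in total. The short Python sketch below converts the labeled ticks; `pflops_days_to_flops` is an illustrative helper, not something from the source.

```python
# One PFLOP/s-day = 1e15 floating-point operations per second, sustained for one day.
SECONDS_PER_DAY = 24 * 60 * 60  # 86,400 seconds
FLOPS_PER_PFLOPS_DAY = 1e15 * SECONDS_PER_DAY  # 8.64e19 operations


def pflops_days_to_flops(pflops_days: float) -> float:
    """Convert a compute budget in PFLOP/s-days to a total operation count."""
    return pflops_days * FLOPS_PER_PFLOPS_DAY


# Labeled x-axis ticks: 10^-1, 10^0, and 10^1 PFLOP/s-days.
for budget in (0.1, 1.0, 10.0):
    print(f"{budget:g} PFLOP/s-days = {pflops_days_to_flops(budget):.2e} FLOPs")
```

On this reading, the labeled ticks span from about 8.6e18 to 8.6e20 operations.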

### Detailed Analysis

The chart displays two distinct, downward-sloping lines on the log-log plot, indicating a power-law relationship between compute (PFLOP/s-days) and loss for both methods.

**1. MoBA Projection (Blue Dashed Line):**

* **Trend:** The line slopes steeply downward from left to right, indicating a strong inverse relationship between compute and loss.

* **Data Points (Approximate):**
  * At `~0.1 PFLOP/s-days`, Loss is `~6 bpb`.
  * At `~0.5 PFLOP/s-days`, Loss is `~4 bpb`.
  * At `~1 PFLOP/s-days`, Loss is `~3 bpb`.
  * At `~2 PFLOP/s-days`, Loss is `~2.5 bpb`.
  * The line continues to decrease beyond `2 PFLOP/s-days`.

**2. Full Attention Projection (Red Dashed Line):**

* **Trend:** The line also slopes downward but with a shallower slope compared to the MoBA line.

* **Data Points (Approximate):**
  * At `~0.1 PFLOP/s-days`, Loss is `~4.2 bpb`.
  * At `~0.5 PFLOP/s-days`, Loss is `~3.2 bpb`.
  * At `~1 PFLOP/s-days`, Loss is `~3 bpb`.
  * At `~2 PFLOP/s-days`, Loss is `~2.8 bpb`.
  * At `~10 PFLOP/s-days`, Loss is `~2.2 bpb`.
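Treating the approximate readings for both lines as data, one rough way to estimate each curve's scaling exponent is an ordinary least-squares fit of log loss against log compute. The sketch below assumes each dashed line is a plain power law `L = a * C^b`; `fit_power_law`, `MOBA_POINTS`, and `FULL_POINTS` are illustrative names, not from the source. With these readings it gives exponents of roughly -0.30 for MoBA and -0.14 for Full Attention, consistent with the MoBA line being steeper.

```python
import math

# Approximate readings from the chart: (compute in PFLOP/s-days, loss in bpb).
MOBA_POINTS = [(0.1, 6.0), (0.5, 4.0), (1.0, 3.0), (2.0, 2.5)]
FULL_POINTS = [(0.1, 4.2), (0.5, 3.2), (1.0, 3.0), (2.0, 2.8), (10.0, 2.2)]


def fit_power_law(points):
    """Fit L = a * C**b by ordinary least squares on (log10 C, log10 L).

    A power law is a straight line in log-log space, so the slope of
    the fitted line is the exponent b. Returns (a, b).
    """
    xs = [math.log10(c) for c, _ in points]
    ys = [math.log10(loss) for _, loss in points]
    n = len(points)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    b = sxy / sxx
    log_a = mean_y - b * mean_x
    return 10 ** log_a, b


for name, pts in [("MoBA", MOBA_POINTS), ("Full Attention", FULL_POINTS)]:
    a, b = fit_power_law(pts)
    print(f"{name}: L ~ {a:.2f} * C^{b:.3f}")
```

Because the inputs are eyeballed from a log-log plot, the fitted exponents are only good to about one significant figure.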

**3. Relationship Between Lines:**

* The two lines intersect at approximately `1 PFLOP/s-days` and `3 bpb`.

* **To the left of the intersection (Compute < 1 PFLOP/s-day):** The Full Attention line is below the MoBA line, meaning Full Attention achieves lower loss for the same compute budget in this regime.

* **To the right of the intersection (Compute > 1 PFLOP/s-day):** The MoBA line is below the Full Attention line, meaning MoBA Projection achieves lower loss for the same compute budget in this higher-compute regime.
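The crossover can be estimated by treating each dashed line as a power law `L = a * C^b` and solving `a1 * C^b1 = a2 * C^b2`, which gives `C* = (a2 / a1)^(1 / (b1 - b2))`. The sketch below assumes each line is pinned by its two outermost listed readings; `power_law_through` and `crossover` are hypothetical helper names. With these approximate readings it lands near 1.05 PFLOP/s-days and 3.0 bpb, in line with the intersection described above.

```python
import math


def power_law_through(p1, p2):
    """Return (a, b) for the power law L = a * C**b passing through two (C, L) points."""
    (c1, l1), (c2, l2) = p1, p2
    b = math.log(l2 / l1) / math.log(c2 / c1)
    a = l1 / c1 ** b
    return a, b


def crossover(law1, law2):
    """Find where a1 * C**b1 meets a2 * C**b2.

    Setting the two equal gives C* = (a2 / a1) ** (1 / (b1 - b2)).
    Returns (C*, loss at C*).
    """
    (a1, b1), (a2, b2) = law1, law2
    if b1 == b2:
        raise ValueError("power laws with equal exponents never cross")
    c_star = (a2 / a1) ** (1.0 / (b1 - b2))
    return c_star, a1 * c_star ** b1


# Each line through its outermost listed readings (approximate values).
moba = power_law_through((0.1, 6.0), (2.0, 2.5))
full = power_law_through((0.1, 4.2), (10.0, 2.2))
c_star, l_star = crossover(moba, full)
print(f"crossover near {c_star:.2f} PFLOP/s-days at {l_star:.2f} bpb")
```

Choosing a different pair of readings moves the estimate slightly, which is why the crossover is best quoted as "about 1 PFLOP/s-day".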

### Key Observations

1. **Scaling Laws:** Both methods follow predictable power-law scaling (linear on a log-log plot).

2. **Crossover Point:** A critical crossover occurs at ~1 PFLOP/s-day. The relative efficiency of the two methods flips at this point.

3. **Slope Difference:** The MoBA Projection line has a steeper negative slope than the Full Attention Projection line. On log-log axes the slope is the power-law exponent, so MoBA's loss falls by a larger fraction for each multiplicative increase in compute (for example, each doubling).

4. **Performance Gap:** At the lowest compute shown (~0.1 PFLOP/s-day), Full Attention has a significant advantage (~1.8 bpb lower loss). At the highest compute shown (~10 PFLOP/s-day), MoBA has a smaller but clear advantage (~0.6 bpb lower loss, which places MoBA at roughly 1.6 bpb there; that value is not among the MoBA readings listed above).
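No MoBA reading is listed at 10 PFLOP/s-days, so the high-compute gap depends on extending the MoBA line. A minimal check, assuming the MoBA line stays straight on the log-log axes past its last listed reading, extrapolates from its 0.1 and 2 PFLOP/s-day readings. The constant names are illustrative.

```python
import math

# Approximate readings from the chart: (PFLOP/s-days, bpb).
MOBA_LOW, MOBA_HIGH = (0.1, 6.0), (2.0, 2.5)
FULL_AT_0_1 = 4.2  # Full Attention at ~0.1 PFLOP/s-days
FULL_AT_10 = 2.2   # Full Attention at ~10 PFLOP/s-days

# Log-log slope (power-law exponent) between the two MoBA readings.
(c_lo, l_lo), (c_hi, l_hi) = MOBA_LOW, MOBA_HIGH
b = math.log(l_hi / l_lo) / math.log(c_hi / c_lo)

# Extend the straight log-log line from C = 2 to C = 10 (an extrapolation).
moba_at_10 = l_hi * (10.0 / c_hi) ** b

gap_low = l_lo - FULL_AT_0_1        # Full Attention's lead at ~0.1 PFLOP/s-days
gap_high = FULL_AT_10 - moba_at_10  # MoBA's lead at ~10 PFLOP/s-days
print(f"MoBA at 10 PFLOP/s-days ~ {moba_at_10:.2f} bpb")
print(f"gap at 0.1: {gap_low:.1f} bpb (Full Attention lower)")
print(f"gap at 10:  {gap_high:.2f} bpb (MoBA lower)")
```

The extrapolated MoBA loss comes out near 1.56 bpb and the gap near 0.64 bpb, so the ~0.6 bpb figure is consistent with the MoBA line continuing its listed trend.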

### Interpretation

This chart presents a comparative analysis of scaling efficiency for two architectural components in language modeling. The data suggests a fundamental trade-off:

* **Full Attention Projection** is more **compute-efficient at lower scales**. For projects with limited computational resources (< 1 PFLOP/s-day), it reaches lower loss.

* **MoBA Projection** demonstrates **superior scaling efficiency at higher scales**. As the computational budget increases beyond the crossover point, it becomes the more effective method, yielding lower loss for the same investment in compute.

The steeper slope of the MoBA line is the key finding. It implies that MoBA has a larger-magnitude scaling exponent: each doubling of compute cuts its loss by a larger fraction than it cuts Full Attention's, and this holds across the whole plotted range. The crossover threshold is simply where that faster improvement has made up for MoBA's higher loss at small budgets. This higher starting loss could be due to MoBA (Mixture of Block Attention, a sparse attention method) having higher fixed overhead or using very small compute budgets less efficiently, while making better use of parallelism and memory at scale.
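The per-doubling comparison follows from the power-law form: if `L = a * C^b`, then `L(2C) / L(C) = 2^b`, the same factor at every compute level. The sketch below estimates `b` for each curve from its readings at 0.1 and 1 PFLOP/s-days (an assumption; other pairs of readings give slightly different values) and converts it into a percentage cut per doubling. The helper names are illustrative.

```python
import math


def exponent_from_points(p1, p2):
    """Log-log slope b between two (compute, loss) readings."""
    (c1, l1), (c2, l2) = p1, p2
    return math.log(l2 / l1) / math.log(c2 / c1)


def loss_factor_per_doubling(b):
    """For L = a * C**b, doubling C multiplies L by 2**b, independent of C."""
    return 2.0 ** b


# Readings at 0.1 and 1 PFLOP/s-days (approximate values from the chart).
for name, p1, p2 in [("MoBA", (0.1, 6.0), (1.0, 3.0)),
                     ("Full Attention", (0.1, 4.2), (1.0, 3.0))]:
    b = exponent_from_points(p1, p2)
    f = loss_factor_per_doubling(b)
    print(f"{name}: b = {b:.3f}, each doubling cuts loss by {100 * (1 - f):.1f}%")
```

With these readings each doubling cuts MoBA's loss by roughly 19% and Full Attention's by roughly 10%. Because the factor does not depend on `C`, the advantage in rate of improvement applies on both sides of the crossover.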

**Conclusion:** The choice between MoBA and Full Attention Projection is not absolute but depends on the target operational scale. The chart provides a quantitative guide for this architectural decision based on available computational resources.