## Diagram: Retrieval-Augmented Generation (RAG) System Flow

### Overview

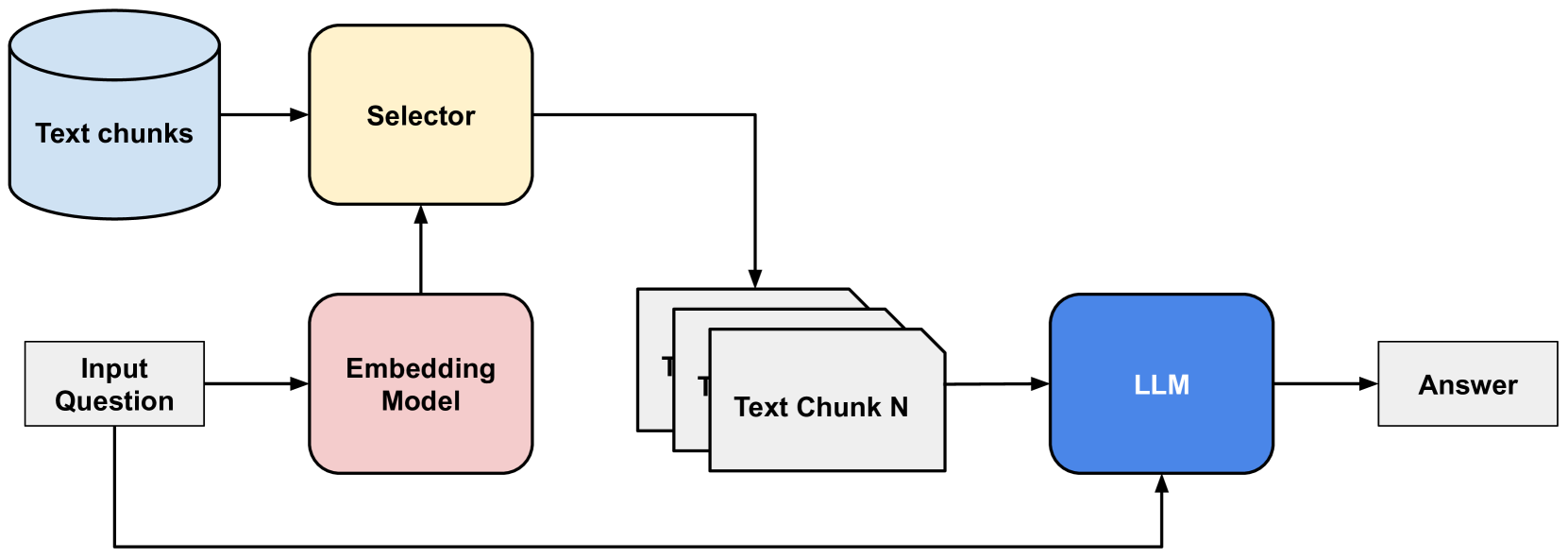

This diagram illustrates a typical workflow for a Retrieval-Augmented Generation (RAG) system. The system takes an input question, processes it through an embedding model, retrieves relevant text chunks from a data source, and then uses a Large Language Model (LLM) to generate an answer based on the retrieved information and the original question.

### Components/Axes

As a process flow diagram, it has no axes or legend. Its components are:

* **Text chunks**: Represented by a light blue cylinder, this is the data source from which information will be retrieved.

* **Selector**: Represented by a yellow rounded rectangle, this component receives the output of the embedding model and the text chunks, and passes on the chunks most relevant to the question.

* **Input Question**: Represented by a white rectangle, this is the user's query.

* **Embedding Model**: Represented by a pink rounded rectangle, this model processes the input question to create embeddings.

* **Text Chunk N**: Represented by a stack of white document-like shapes, these are the retrieved text chunks that will be used by the LLM. The "N" indicates a variable number of chunks.

* **LLM**: Represented by a blue rounded rectangle, this is the Large Language Model responsible for generating the final answer.

* **Answer**: Represented by a white rectangle, this is the output of the system.

### Detailed Analysis or Content Details

The diagram depicts the following flow of information and processes:

1. **Input Question**: The process begins with an "Input Question" (white rectangle, bottom-left).

2. **Embedding Model**: The "Input Question" is fed into the "Embedding Model" (pink rounded rectangle, center-left).

3. **Selector**: The "Embedding Model" outputs to the "Selector" (yellow rounded rectangle, top-center). The "Text chunks" (light blue cylinder, top-left) feed into the "Selector" as well. This suggests the "Selector" compares the question's embedding against the "Text chunks" to identify the relevant ones.

4. **Retrieved Text Chunks**: The "Selector" then outputs to a stack of "Text Chunk N" (white document shapes, center-right). This represents the relevant pieces of text retrieved from the "Text chunks" data source.

5. **LLM Input**: Both the "Input Question" and the "Text Chunk N" are fed into the "LLM" (blue rounded rectangle, right-center). This indicates the LLM will use both the original question and the retrieved context to formulate an answer.

6. **Answer Generation**: The "LLM" processes these inputs and outputs an "Answer" (white rectangle, far-right).
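
The six steps above can be sketched in a few lines of Python. This is a minimal illustration of the data flow only, not the implementation behind the diagram: `embedding_model`, `selector`, and `llm` are hypothetical stand-ins named after the diagram's boxes. The "embedding" here is just a set of words, the selector ranks by word overlap, and the LLM is a stub that echoes its prompt.

```python
import re
from typing import FrozenSet, List


def embedding_model(text: str) -> FrozenSet[str]:
    """Toy stand-in for an embedding model: the set of lowercased words."""
    return frozenset(re.findall(r"\w+", text.lower()))


def selector(query_emb: FrozenSet[str], text_chunks: List[str], n: int) -> List[str]:
    """Toy stand-in for the Selector: keep the n chunks sharing the most words."""
    ranked = sorted(
        text_chunks,
        key=lambda chunk: len(query_emb & embedding_model(chunk)),
        reverse=True,
    )
    return ranked[:n]


def llm(prompt: str) -> str:
    """Stub for the LLM: a real system would call a model here."""
    return f"[generated from prompt]\n{prompt}"


def rag_answer(question: str, text_chunks: List[str], n: int = 2) -> str:
    query_emb = embedding_model(question)            # steps 1-2: embed the question
    retrieved = selector(query_emb, text_chunks, n)  # steps 3-4: pick Text Chunk 1..N
    context = "\n".join(retrieved)
    # Step 5: the LLM receives the retrieved chunks AND the original question.
    prompt = f"Context:\n{context}\n\nQuestion: {question}"
    return llm(prompt)                               # step 6: the Answer


if __name__ == "__main__":
    chunks = [
        "Paris is the capital of France.",
        "Bananas are yellow.",
        "France borders Spain.",
    ]
    print(rag_answer("What is the capital of France?", chunks, n=2))
```

Note that the question reaches `llm` directly inside the prompt rather than through its embedding, which mirrors the two arrows leaving "Input Question" in the diagram.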

### Key Observations

* The diagram lays out a mostly linear pipeline with three distinct stages: embedding, selection, and generation.

* The "Selector" is the retrieval step: it is the only path by which the "Text chunks" store and the "Embedding Model" output reach the "LLM".

* The "Input Question" is sent both to the "Embedding Model" and directly to the "LLM", so it serves as the retrieval query and as part of the generation prompt.

* The use of "Text Chunk N" implies that the system can retrieve multiple pieces of relevant information.

### Interpretation

This diagram illustrates a common architecture for extending Large Language Models with external, relevant knowledge. The "Embedding Model" converts the "Input Question" into a numerical representation that can be compared against representations of the "Text chunks". The "Selector" acts as the retrieval component, identifying and fetching the most pertinent "Text chunks" based on the question's embedding. These retrieved chunks, along with the original question, are then passed to the "LLM".

This approach, known as Retrieval-Augmented Generation (RAG), lets the LLM draw on information beyond its training data, which can make answers more accurate, more up to date, and more relevant to the question. Because the response is grounded in specific retrieved text, the design helps reduce hallucination and improves the reliability of the output, provided the retrieved chunks are themselves relevant and correct.
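
The diagram does not say how the "Selector" compares the question's embedding with the text chunks. One common choice is cosine similarity over embedding vectors, keeping the N highest-scoring chunks. The sketch below assumes that choice and assumes the chunk embeddings have already been computed; the function names and the example vectors are made up for illustration.

```python
import heapq
import math
from typing import List, Sequence, Tuple


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine of the angle between two vectors; 0.0 if either is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def top_n_chunks(
    query_vec: Sequence[float],
    chunk_vecs: Sequence[Sequence[float]],
    n: int,
) -> List[Tuple[int, float]]:
    """Return (chunk index, score) pairs for the n most similar chunks, best first."""
    scored = (
        (i, cosine_similarity(query_vec, vec)) for i, vec in enumerate(chunk_vecs)
    )
    return heapq.nlargest(n, scored, key=lambda pair: pair[1])


if __name__ == "__main__":
    # Hypothetical 2-dimensional embeddings, chosen only to keep the math visible.
    query = [1.0, 0.0]
    chunks = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
    for index, score in top_n_chunks(query, chunks, n=2):
        print(f"chunk {index}: similarity {score:.3f}")
```

Real systems typically delegate this search to a vector index rather than scoring every chunk, but the ranking idea is the same.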