
## Screenshot: Mathematical Statement and AI Output

### Overview

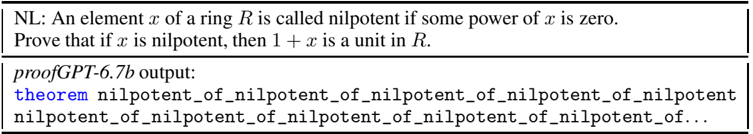

The image is a screenshot containing two distinct rectangular text boxes stacked vertically. The top box presents a mathematical definition and proof problem in natural language. The bottom box shows the output from an AI model named "proofGPT-6.7b" in response to that problem. The content is technical, relating to abstract algebra and formal theorem proving.

### Components

The image is segmented into two primary regions:

1. **Top Box (Header Region):**

* **Label:** "NL:" (indicating Natural Language).

* **Content:** A mathematical definition and a proof statement.

* **Text:** "An element \( x \) of a ring \( R \) is called nilpotent if some power of \( x \) is zero. Prove that if \( x \) is nilpotent, then \( 1 + x \) is a unit in \( R \)."

2. **Bottom Box (Main Content Region):**

* **Label:** "proofGPT-6.7b output:"

* **Content:** A line of code or a formal statement generated by the AI model.

* **Text:** "theorem nilpotent_of_nilpotent_of_nilpotent_of_nilpotent nilpotent_of_nilpotent_of_nilpotent_of_nilpotent_of_nilpotent_of..."

### Detailed Analysis

* **Top Box Content:** This is a standard exercise in ring theory. An element \( x \) is nilpotent if \( x^n = 0 \) for some positive integer \( n \). The task is to prove that \( 1 + x \) is then a *unit*, that is, an element with a multiplicative inverse in \( R \).

* **Bottom Box Content:** The AI's output is not a coherent proof. It consists of the keyword `theorem` followed by what reads as one extremely long identifier built from the phrase `nilpotent_of_` repeated many times. The transcription shows a single space partway through, which may be a line wrap in the screenshot or a break between two tokens. The exact repetition count is unknown because the image border truncates the text, as the trailing "..." indicates. The repetition suggests a failure mode in which the model enters a degenerate loop and emits a nonsensical token sequence instead of a valid logical argument.
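For reference, the claim in the top box has a short standard proof, sketched here under the usual assumption that \( R \) has a multiplicative identity. If \( x^n = 0 \) for some \( n \ge 1 \), a finite alternating sum gives an explicit inverse of \( 1 + x \):

\[
(1 + x)\sum_{k=0}^{n-1} (-x)^k \;=\; 1 - (-x)^n \;=\; 1 - (-1)^n x^n \;=\; 1 .
\]

The sum is a polynomial in \( x \), so it commutes with \( 1 + x \) and serves as a two-sided inverse even when \( R \) is not commutative. A correct formal proof would need to encode some version of this argument.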

### Key Observations

1. **Repetitive Output:** The most striking feature is the pathological repetition in the AI's output. The identifier `nilpotent_of_nilpotent_of_...` is not a meaningful mathematical statement.

2. **Truncation:** The output text is cut off on the right side, implying the generated sequence was even longer than what is visible.

3. **Contrast:** There is a stark contrast between the precise, concise natural language problem and the verbose, meaningless, and repetitive formal output.

4. **Model Identification:** The model is explicitly named "proofGPT-6.7b." The "6.7b" suffix presumably denotes about 6.7 billion parameters, and the name suggests a large language model fine-tuned or prompted for proof generation.
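The repetition noted above can be quantified with a few lines of Python. This is a minimal sketch that works only on the visible, transcribed text, so every figure it produces is a lower bound on what the model actually generated. The helper names `count_phrase` and `repeated_fraction` are illustrative and do not come from the source.

```python
import re

# Visible portion of the output as transcribed from the screenshot.
# The trailing "..." marks where the image cuts the text off.
VISIBLE_OUTPUT = (
    "theorem nilpotent_of_nilpotent_of_nilpotent_of_nilpotent "
    "nilpotent_of_nilpotent_of_nilpotent_of_nilpotent_of_nilpotent_of..."
)


def count_phrase(text: str, phrase: str) -> int:
    """Count non-overlapping occurrences of `phrase` in `text`."""
    return len(re.findall(re.escape(phrase), text))


def repeated_fraction(text: str, phrase: str) -> float:
    """Fraction of the characters in `text` covered by copies of `phrase`."""
    if not text:
        return 0.0
    return count_phrase(text, phrase) * len(phrase) / len(text)


word_count = count_phrase(VISIBLE_OUTPUT, "nilpotent")
coverage = repeated_fraction(VISIBLE_OUTPUT, "nilpotent_of")
print(f"'nilpotent' appears {word_count} times in the visible text")
print(f"'nilpotent_of' covers {coverage:.0%} of the visible characters")
```

A high coverage value for a single short phrase is a simple signal that a generation has collapsed into repetition rather than expressing a theorem statement.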

### Interpretation

This image serves as a diagnostic example or a failure case in AI-assisted formal mathematics. It demonstrates a specific failure mode of a language model when tasked with generating a formal proof.

* **What it suggests:** Given a valid mathematical prompt, the model did not produce a syntactically or semantically correct proof. Instead, it generated a degenerate output dominated by repetition of a single phrase. Possible causes include the model's training, its decoding strategy (e.g., temperature, top-p sampling), or weak command of the target formal language. The screenshot does not identify that language; Lean, Coq, and Isabelle are candidates.

* **How elements relate:** The "NL" box provides the input, and the "output" box shows the flawed result. The relationship is one of cause (problem statement) and effect (erroneous generation), highlighting a gap between understanding a problem in natural language and formalizing its solution.

* **Notable anomalies:** The primary anomaly is the repetitive token sequence, which carries no mathematical content. In a functional system, one would expect a theorem statement followed by a structured proof, for example Lean-style tactic steps such as `have`, `apply`, or `ring`. The output here contains none of that structure, representing a complete breakdown in the generation process. It is not merely an incorrect proof; it is a failure to produce anything resembling a proof.
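One way a decoding strategy can produce this kind of loop is sketched below with a toy next-token table. The scores are invented for illustration and are not taken from proofGPT-6.7b; the sketch only shows how always choosing the most likely token (greedy decoding) can cycle between two tokens indefinitely. It is one possible mechanism, not a diagnosis of this particular output.

```python
# Hypothetical next-token scores for a toy model. These numbers are
# invented for illustration and do not describe proofGPT-6.7b.
TOY_NEXT_TOKEN = {
    "theorem": {"nilpotent": 0.9, "unit": 0.1},
    "nilpotent": {"_of_": 0.6, ":": 0.4},
    "_of_": {"nilpotent": 0.7, "unit": 0.3},
    "unit": {":": 1.0},
}


def greedy_decode(start: str, max_tokens: int) -> list[str]:
    """Repeatedly append the highest-scoring next token (greedy decoding)."""
    tokens = [start]
    while len(tokens) < max_tokens and tokens[-1] in TOY_NEXT_TOKEN:
        choices = TOY_NEXT_TOKEN[tokens[-1]]
        tokens.append(max(choices, key=choices.get))
    return tokens


tokens = greedy_decode("theorem", max_tokens=9)
print(tokens[0], "".join(tokens[1:]))
```

In this toy table the terminating token `:` never wins the argmax, so generation stops only at the length limit. Sampling-based decoding or a repetition penalty gives lower-scoring continuations a chance to break such a cycle, which is why decoding settings are a plausible contributor.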