
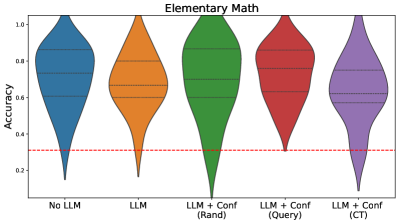

## Violin Plot: Elementary Math Accuracy by LLM Configuration

### Overview

The image is a violin plot titled "Elementary Math," comparing the distribution of accuracy scores across five different configurations involving Large Language Models (LLMs). The plot visualizes the probability density of the data at different values, with internal horizontal lines indicating quartiles. A horizontal red dashed line serves as a reference baseline.
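To make concrete what a violin plot encodes, the following minimal sketch computes the two ingredients described above: the quartile lines and a kernel density estimate that gives the violin its width. The `scores` list is hypothetical and invented for illustration only (the underlying data for this figure is not available), and the helper name `kde_density` and the bandwidth of 0.1 are likewise assumptions.

```python
import math
import statistics


def kde_density(samples, bandwidth):
    """Return a function that estimates density at x with Gaussian kernels."""
    n = len(samples)
    norm = 1.0 / (n * bandwidth * math.sqrt(2.0 * math.pi))

    def density(x):
        return norm * sum(
            math.exp(-0.5 * ((x - s) / bandwidth) ** 2) for s in samples
        )

    return density


# Hypothetical accuracy scores, NOT taken from the figure.
scores = [0.35, 0.50, 0.55, 0.60, 0.65, 0.70, 0.80, 0.85, 0.90]

# The three internal lines of a violin: lower quartile, median, upper quartile.
q1, median, q3 = statistics.quantiles(scores, n=4, method="inclusive")

# The violin's width at height y is proportional to the estimated density at y.
density = kde_density(scores, bandwidth=0.1)

print(f"quartiles: {q1:.2f}, {median:.2f}, {q3:.2f}")
for y in (0.2, 0.4, 0.6, 0.8, 1.0):
    print(f"density at {y:.1f}: {density(y):.3f}")
```

A wider violin at a given accuracy value therefore means more runs scored near that value, while the quartile lines summarize where the middle half of the runs falls.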

### Components/Axes

* **Title:** "Elementary Math" (centered at the top).

* **Y-Axis:**

    * **Label:** "Accuracy" (rotated vertically on the left side).

    * **Scale:** Linear scale from 0.0 to 1.0, with major tick marks at 0.0, 0.2, 0.4, 0.6, 0.8, and 1.0.

* **X-Axis:**

    * **Categories (from left to right):**

        1. "No LLM"

        2. "LLM"

        3. "LLM + Conf (Rand)"

        4. "LLM + Conf (Query)"

        5. "LLM + Conf (CT)"

* **Reference Line:** A horizontal red dashed line at y = 0.3, spanning the full width of the plot.

* **Legend:** Implicit in the x-axis category labels. Each category is represented by a uniquely colored violin plot:

    * "No LLM": Blue

    * "LLM": Orange

    * "LLM + Conf (Rand)": Green

    * "LLM + Conf (Query)": Red

    * "LLM + Conf (CT)": Purple

### Detailed Analysis

The analysis proceeds by isolating each violin plot (category) from left to right.

1. **"No LLM" (Blue, far left):**

    * **Trend/Shape:** The distribution is broad and somewhat bimodal, with a wider section in the upper half (0.6-0.9) and a narrower tail extending down to ~0.1.

    * **Key Values (Approximate):**

        * Median (middle horizontal line): ~0.60

        * Interquartile Range (IQR, distance between upper and lower quartile lines): ~0.45 to ~0.80

        * Full Range: ~0.10 to ~1.00

2. **"LLM" (Orange, second from left):**

    * **Trend/Shape:** Similar broad shape to "No LLM," but the central mass appears slightly higher and the lower tail is less pronounced.

    * **Key Values (Approximate):**

        * Median: ~0.65

        * IQR: ~0.50 to ~0.85

        * Full Range: ~0.15 to ~1.00

3. **"LLM + Conf (Rand)" (Green, center):**

    * **Trend/Shape:** This distribution is notably different. It is more concentrated in the middle-lower range, with a pronounced bulge around 0.4-0.6 and a long, thin tail extending down to near 0.0.

    * **Key Values (Approximate):**

        * Median: ~0.50 (visibly lower than the first two)

        * IQR: ~0.35 to ~0.70

        * Full Range: ~0.00 to ~1.00 (widest range, with the lowest minimum value)

4. **"LLM + Conf (Query)" (Red, second from right):**

    * **Trend/Shape:** The distribution is more compact and shifted upward. The bulk of the data is concentrated between 0.6 and 0.9, with a shorter lower tail.

    * **Key Values (Approximate):**

        * Median: ~0.75

        * IQR: ~0.60 to ~0.85

        * Full Range: ~0.30 to ~1.00

5. **"LLM + Conf (CT)" (Purple, far right):**

    * **Trend/Shape:** This is the most compact and highest-performing distribution. It has a tight concentration in the upper range (0.7-0.95) and the shortest lower tail.

    * **Key Values (Approximate):**

        * Median: ~0.80

        * IQR: ~0.70 to ~0.90

        * Full Range: ~0.40 to ~1.00 (highest minimum value)
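The spread of each configuration can be compared numerically from the approximate values listed above. The sketch below is only arithmetic on those visual estimates, not on the raw data; the names `summary` and `spread` are illustrative.

```python
# Approximate values read from the plot description above (not raw data):
# name -> (minimum, lower quartile, median, upper quartile, maximum)
summary = {
    "No LLM": (0.10, 0.45, 0.60, 0.80, 1.00),
    "LLM": (0.15, 0.50, 0.65, 0.85, 1.00),
    "LLM + Conf (Rand)": (0.00, 0.35, 0.50, 0.70, 1.00),
    "LLM + Conf (Query)": (0.30, 0.60, 0.75, 0.85, 1.00),
    "LLM + Conf (CT)": (0.40, 0.70, 0.80, 0.90, 1.00),
}


def spread(values):
    """Return (IQR width, full-range width), rounded to two decimals."""
    lo, q1, _median, q3, hi = values
    return round(q3 - q1, 2), round(hi - lo, 2)


spreads = {name: spread(values) for name, values in summary.items()}

for name, (iqr_width, full_width) in spreads.items():
    print(f"{name:<20} IQR width {iqr_width:.2f}   full range {full_width:.2f}")
```

Under these readings, "No LLM", "LLM", and "LLM + Conf (Rand)" share an IQR width of about 0.35, so the extra variability of "(Rand)" shows up in its tails rather than in its middle half. "(Query)" and "(CT)" are narrower on both measures.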

### Key Observations

1. **Performance Hierarchy:** There is a clear visual hierarchy in median accuracy: `LLM + Conf (CT)` > `LLM + Conf (Query)` > `LLM` ≈ `No LLM` > `LLM + Conf (Rand)`.

2. **Variability:** The "LLM + Conf (Rand)" configuration shows the highest variability (widest range, long lower tail), while "LLM + Conf (CT)" shows the lowest variability (most compact shape).

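3. **Baseline Comparison:** The bulk of every distribution, including each interquartile range, lies above the red dashed reference line at 0.3. The lower tails of "No LLM" (~0.10), "LLM" (~0.15), and "LLM + Conf (Rand)" (~0.00) extend below it, while "LLM + Conf (Query)" and "LLM + Conf (CT)" stay at or above it. The line may represent a baseline such as random chance or a minimal acceptable threshold.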

4. **Impact of Confidence Methods:** The "Conf" methods (presumably confidence-based; the plot does not define the abbreviation) have divergent effects. The "(Rand)" variant appears detrimental, lowering median accuracy and lengthening the lower tail. The "(Query)" and "(CT)" variants appear beneficial, raising median accuracy and narrowing the spread compared to the base "LLM" and "No LLM" conditions.
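The ordering and the baseline comparison in these observations can be checked against the approximate medians and minima from the Detailed Analysis. This is a small sketch on visually estimated values; the variable names are illustrative.

```python
BASELINE = 0.3  # height of the red dashed reference line

# Approximate medians and full-range minima, as estimated from the plot.
medians = {
    "No LLM": 0.60,
    "LLM": 0.65,
    "LLM + Conf (Rand)": 0.50,
    "LLM + Conf (Query)": 0.75,
    "LLM + Conf (CT)": 0.80,
}
minima = {
    "No LLM": 0.10,
    "LLM": 0.15,
    "LLM + Conf (Rand)": 0.00,
    "LLM + Conf (Query)": 0.30,
    "LLM + Conf (CT)": 0.40,
}

# Configurations ordered from highest to lowest median accuracy.
ranking = sorted(medians, key=medians.get, reverse=True)

# Configurations whose lower tail reaches below the reference line.
below_baseline = [name for name, low in minima.items() if low < BASELINE]

print("By median:", " > ".join(ranking))
print("Tail below baseline:", ", ".join(below_baseline))
```

With these estimates, every median sits above 0.3, and three of the five configurations ("No LLM", "LLM", and "LLM + Conf (Rand)") have tails that dip below the line.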

### Interpretation

This chart demonstrates the impact of different LLM augmentation strategies on performance consistency and accuracy in an elementary math context.

* **Core Finding:** Simply using an LLM ("LLM") provides a marginal accuracy boost over no LLM ("No LLM"), but with similar high variability. The critical factor is *how* the LLM is configured.

* **The Role of Confidence Calibration:** Assuming "Conf" denotes a confidence-based method, the plot suggests that a random variant ("LLM + Conf (Rand)") is counterproductive, lowering typical accuracy and lengthening the lower tail. In contrast, the "Query" and especially the "CT" variants visibly improve outcomes, although the plot alone does not establish statistical significance.

* **"CT" as the Best-Performing Strategy:** The "LLM + Conf (CT)" configuration yields the highest typical accuracy (median ~0.80) and the most predictable performance (narrowest spread). One plausible explanation is that the "CT" method better aligns the model's confidence with its correctness, but the plot itself does not show why it performs best.

* **Practical Implication:** For deploying LLMs in educational or scoring systems for elementary math, the plot suggests that the choice of confidence method matters more than whether a base LLM is used at all, with "CT" giving the highest and most reliable accuracy. The red line at 0.3 likely marks a trivial baseline: the median and interquartile range of every tested method sit above it, though only "Query" and "CT" keep their full range at or above it.