
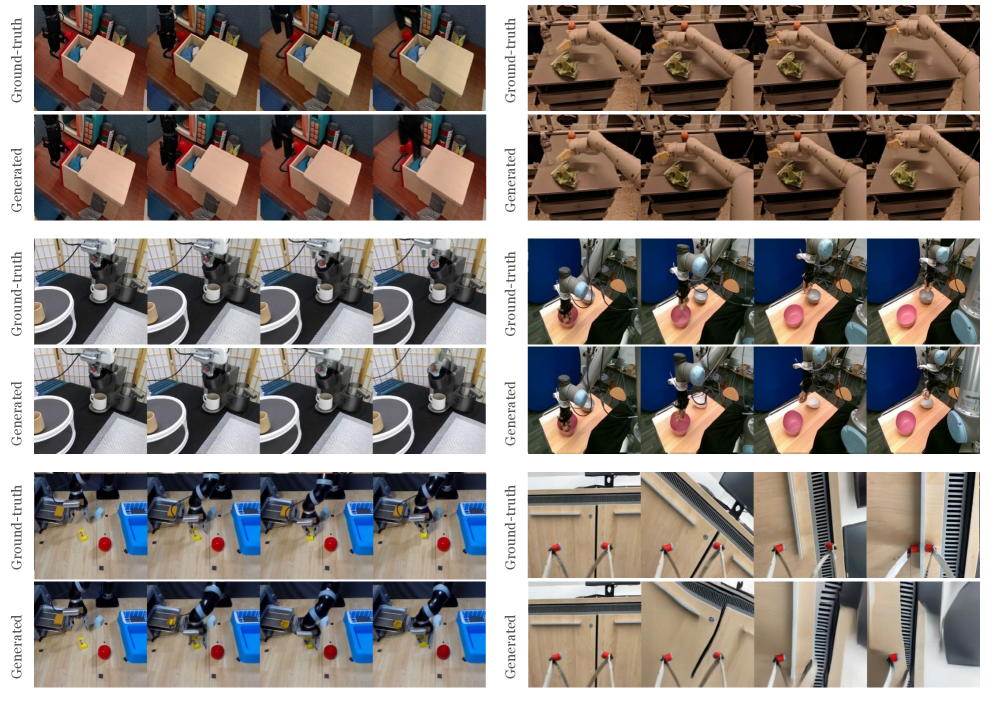

## Comparative Image Grid: Ground-Truth vs. Generated Robotic Manipulation Sequences

### Overview

The image is a composite grid of six panels arranged in three rows and two columns. Each panel stacks two image sequences for comparison: a "Ground-truth" sequence (top row) above a "Generated" sequence (bottom row). Each sequence consists of four sequential frames showing a robotic arm performing a manipulation task in a simulated or controlled environment. The figure lets the viewer compare the fidelity of generated video sequences against real or reference (ground-truth) sequences across several robotic tasks.

### Components/Axes

* **Structure:** A 3-row by 2-column grid of panels.

* **Panel Labeling:** Each panel is divided into two horizontal rows.

* **Top Row Label:** "Ground-truth" (text rotated 90 degrees counter-clockwise, positioned vertically along the left edge of the row).

* **Bottom Row Label:** "Generated" (text rotated 90 degrees counter-clockwise, positioned vertically along the left edge of the row).

* **Content per Row:** Each row within a panel contains four sequential image frames, showing a progression of a robotic task.

* **Language:** All labels are in English.

### Detailed Analysis

The image contains no charts, graphs, or data tables. It is a visual comparison grid. The analysis below isolates each panel and describes the visible components and actions.

**Panel 1 (Top-Left):**

* **Task:** A robotic arm interacts with a light-colored rectangular box and a smaller blue object on a wooden surface.

* **Ground-truth Sequence:** Shows the arm approaching, contacting, and slightly displacing the blue object next to the box. Lighting and shadows are consistent.

* **Generated Sequence:** Appears to closely replicate the scene geometry, object colors (box: beige, object: blue), and the arm's motion trajectory. Visual fidelity is high, with possible minor differences in shadow softness.

**Panel 2 (Top-Right):**

* **Task:** A robotic arm manipulates a crumpled green object (possibly a cloth or bag) on a dark tabletop.

* **Ground-truth Sequence:** The arm's gripper pinches and lifts the green object.

* **Generated Sequence:** Replicates the complex deformation of the green object and the gripper's interaction. The texture and color of the object and the arm's metallic finish are well-matched.

**Panel 3 (Middle-Left):**

* **Task:** A robotic arm performs a task involving a white cylindrical container and a black object on a white surface with a grid pattern.

* **Ground-truth Sequence:** The arm moves the black object towards or into the white container.

* **Generated Sequence:** Accurately reproduces the high-contrast scene (black object, white container/surface) and the precise motion of the arm.

**Panel 4 (Middle-Right):**

* **Task:** A robotic arm moves a pink spherical object on a light wooden table. A blue partition is visible in the background.

* **Ground-truth Sequence:** The arm pushes or rolls the pink sphere across the table.

* **Generated Sequence:** Successfully generates the distinct pink color of the sphere, the wood grain texture, and the rolling motion. The background blue partition is also present.

**Panel 5 (Bottom-Left):**

* **Task:** A robotic arm interacts with objects near a blue bin. A red sphere and a yellow cube are on the floor.

* **Ground-truth Sequence:** The arm appears to be positioning itself relative to the blue bin and the objects.

* **Generated Sequence:** Correctly renders the bright blue bin, red sphere, and yellow cube, along with the arm's configuration. The spatial relationships between objects are maintained.

**Panel 6 (Bottom-Right):**

* **Task:** A close-up view focusing on a robotic gripper with red markers at its tips, interacting with a metallic, grooved surface (possibly a heatsink or part of a machine).

* **Ground-truth Sequence:** Shows the gripper approaching and making contact with the grooved surface.

* **Generated Sequence:** Replicates the detailed geometry of the gripper (including the red tips) and the fine, repetitive pattern of the metallic grooves. The lighting and reflections on the metal are consistent.

### Key Observations

1. **High Visual Fidelity:** In all six panels, the "Generated" sequences demonstrate a strong visual resemblance to their corresponding "Ground-truth" sequences in terms of scene layout, object identity, color, texture, and lighting.

2. **Motion Consistency:** The generated sequences appear to capture the correct temporal progression and motion dynamics of the robotic arms and manipulated objects.

3. **Task Diversity:** The grid showcases a variety of robotic manipulation tasks involving different objects (rigid, deformable), surfaces, and camera perspectives (wide shots and close-ups).

4. **Labeling Consistency:** The "Ground-truth" and "Generated" labels are applied uniformly across all panels, establishing a clear comparative framework.

### Interpretation

This image serves as a qualitative evaluation figure, likely from a research paper or technical report on video generation or simulation for robotics. The core message is that the generative model can produce realistic, physically plausible video sequences of robotic manipulation tasks that are visually indistinguishable from, or very close to, real-world or high-fidelity simulation recordings ("Ground-truth").

A **Peircean semiotic** reading treats the figure as both an *iconic* and an *indexical* representation. It is *iconic* because the generated frames resemble the ground-truth frames. It is *indexical* because the paired comparison points directly to the model's capability: each generated sequence is an index of the model's performance. Because the figure provides no quantitative metrics, its argument rests on visual evidence and relies on the viewer to perceive the similarity. The selection of diverse tasks (rigid boxes, deformable cloth, spheres, close-up contact interactions) appears intended to demonstrate the model's generalization across manipulation scenarios. If this fidelity holds more broadly, the model could be useful for generating robot training data, building realistic simulations, or validating planning algorithms.