TECHNICAL ASSET FINGERPRINT

63cfc731f87f9e799fde3207

FOUND IN PAPERS

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

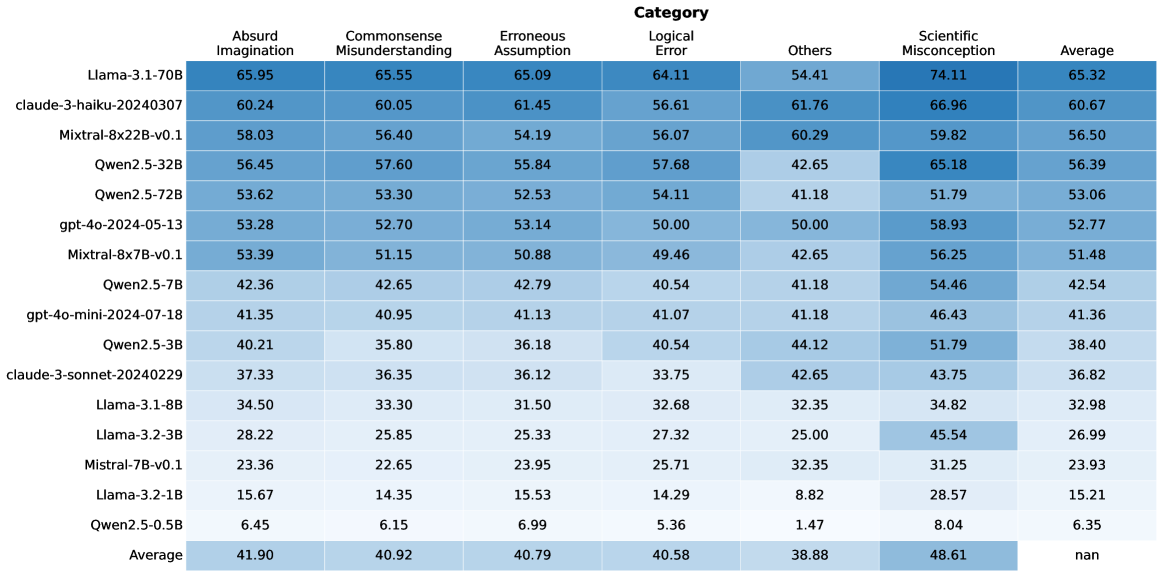

## Heatmap: Model Performance Across Error Categories

### Overview

The image is a heatmap displaying the performance of various language models across different categories of errors. The rows represent the models, and the columns represent the error categories and the average performance. The cells are color-coded, with darker shades of blue indicating higher values.

### Components/Axes

* **Rows (Models):**

* Llama-3.1-70B

* claude-3-haiku-20240307

* Mixtral-8x22B-v0.1

* Qwen2.5-32B

* Qwen2.5-72B

* gpt-4o-2024-05-13

* Mixtral-8x7B-v0.1

* Qwen2.5-7B

* gpt-4o-mini-2024-07-18

* Qwen2.5-3B

* claude-3-sonnet-20240229

* Llama-3.1-8B

* Llama-3.2-3B

* Mistral-7B-v0.1

* Llama-3.2-1B

* Qwen2.5-0.5B

* Average

* **Columns (Error Categories):**

* Absurd Imagination

* Commonsense Misunderstanding

* Erroneous Assumption

* Logical Error

* Others

* Scientific Misconception

* Average

### Detailed Analysis

Here's a breakdown of the data, row by row:

* **Llama-3.1-70B:** 65.95 (Absurd Imagination), 65.55 (Commonsense Misunderstanding), 65.09 (Erroneous Assumption), 64.11 (Logical Error), 54.41 (Others), 74.11 (Scientific Misconception), 65.32 (Average)

* **claude-3-haiku-20240307:** 60.24, 60.05, 61.45, 56.61, 61.76, 66.96, 60.67

* **Mixtral-8x22B-v0.1:** 58.03, 56.40, 54.19, 56.07, 60.29, 59.82, 56.50

* **Qwen2.5-32B:** 56.45, 57.60, 55.84, 57.68, 42.65, 65.18, 56.39

* **Qwen2.5-72B:** 53.62, 53.30, 52.53, 54.11, 41.18, 51.79, 53.06

* **gpt-4o-2024-05-13:** 53.28, 52.70, 53.14, 50.00, 50.00, 58.93, 52.77

* **Mixtral-8x7B-v0.1:** 53.39, 51.15, 50.88, 49.46, 42.65, 56.25, 51.48

* **Qwen2.5-7B:** 42.36, 42.65, 42.79, 40.54, 41.18, 54.46, 42.54

* **gpt-4o-mini-2024-07-18:** 41.35, 40.95, 41.13, 41.07, 41.18, 46.43, 41.36

* **Qwen2.5-3B:** 40.21, 35.80, 36.18, 40.54, 44.12, 51.79, 38.40

* **claude-3-sonnet-20240229:** 37.33, 36.35, 36.12, 33.75, 42.65, 43.75, 36.82

* **Llama-3.1-8B:** 34.50, 33.30, 31.50, 32.68, 32.35, 34.82, 32.98

* **Llama-3.2-3B:** 28.22, 25.85, 25.33, 27.32, 25.00, 45.54, 26.99

* **Mistral-7B-v0.1:** 23.36, 22.65, 23.95, 25.71, 32.35, 31.25, 23.93

* **Llama-3.2-1B:** 15.67, 14.35, 15.53, 14.29, 8.82, 28.57, 15.21

* **Qwen2.5-0.5B:** 6.45, 6.15, 6.99, 5.36, 1.47, 8.04, 6.35

* **Average:** 41.90, 40.92, 40.79, 40.58, 38.88, 48.61, nan
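The column averages in the "Average" row can be cross-checked against the per-model rows. The sketch below assumes the "Average" row is an unweighted mean over the 16 models and recomputes each column mean from the values transcribed above. The names `SCORES`, `REPORTED_AVERAGE`, and `column_means` are illustrative and do not come from the source.

```python
# Scores transcribed from the rows above, in column order (Average column omitted).
CATEGORIES = [
    "Absurd Imagination", "Commonsense Misunderstanding", "Erroneous Assumption",
    "Logical Error", "Others", "Scientific Misconception",
]

SCORES = {
    "Llama-3.1-70B": [65.95, 65.55, 65.09, 64.11, 54.41, 74.11],
    "claude-3-haiku-20240307": [60.24, 60.05, 61.45, 56.61, 61.76, 66.96],
    "Mixtral-8x22B-v0.1": [58.03, 56.40, 54.19, 56.07, 60.29, 59.82],
    "Qwen2.5-32B": [56.45, 57.60, 55.84, 57.68, 42.65, 65.18],
    "Qwen2.5-72B": [53.62, 53.30, 52.53, 54.11, 41.18, 51.79],
    "gpt-4o-2024-05-13": [53.28, 52.70, 53.14, 50.00, 50.00, 58.93],
    "Mixtral-8x7B-v0.1": [53.39, 51.15, 50.88, 49.46, 42.65, 56.25],
    "Qwen2.5-7B": [42.36, 42.65, 42.79, 40.54, 41.18, 54.46],
    "gpt-4o-mini-2024-07-18": [41.35, 40.95, 41.13, 41.07, 41.18, 46.43],
    "Qwen2.5-3B": [40.21, 35.80, 36.18, 40.54, 44.12, 51.79],
    "claude-3-sonnet-20240229": [37.33, 36.35, 36.12, 33.75, 42.65, 43.75],
    "Llama-3.1-8B": [34.50, 33.30, 31.50, 32.68, 32.35, 34.82],
    "Llama-3.2-3B": [28.22, 25.85, 25.33, 27.32, 25.00, 45.54],
    "Mistral-7B-v0.1": [23.36, 22.65, 23.95, 25.71, 32.35, 31.25],
    "Llama-3.2-1B": [15.67, 14.35, 15.53, 14.29, 8.82, 28.57],
    "Qwen2.5-0.5B": [6.45, 6.15, 6.99, 5.36, 1.47, 8.04],
}

# Values shown in the "Average" row of the figure.
REPORTED_AVERAGE = dict(zip(CATEGORIES, [41.90, 40.92, 40.79, 40.58, 38.88, 48.61]))


def column_means(scores):
    """Unweighted mean of each column across all models (an assumption)."""
    rows = list(scores.values())
    return [sum(row[i] for row in rows) / len(rows) for i in range(len(CATEGORIES))]


means = dict(zip(CATEGORIES, column_means(SCORES)))
for category in CATEGORIES:
    print(f"{category}: computed {means[category]:.3f}, "
          f"reported {REPORTED_AVERAGE[category]:.2f}")
```

Under that assumption, each recomputed mean lands within 0.01 of the value reported in the figure.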

### Key Observations

* **Top Performers:** Llama-3.1-70B and claude-3-haiku-20240307 generally show higher values across most error categories.

* **Scientific Misconception:** Models tend to have higher values in the "Scientific Misconception" category compared to others.

* **Qwen2.5-0.5B:** This model consistently has the lowest values across all error categories.

* **Average Row:** The average performance across models is highest for "Scientific Misconception" (48.61) and lowest for "Others" (38.88). The overall average is marked as "nan".

### Interpretation

The heatmap compares language models by error category. Darker shades mark higher scores, and the Overview treats those scores as performance. On that reading, "Scientific Misconception" is the category where models score highest on average (48.61), and "Others" is where they score lowest (38.88). Llama-3.1-70B and claude-3-haiku-20240307 perform best overall, while Qwen2.5-0.5B lags behind. The "Average" row summarizes these per-category trends across all models. The "nan" in the average-of-averages cell suggests that this value was either not computed or not considered meaningful.


EXPERT: gemini-2.5-flash-lite-free VERSION 1

RUNTIME: google-free/gemini-2.5-flash-lite

INTEL_VERIFIED

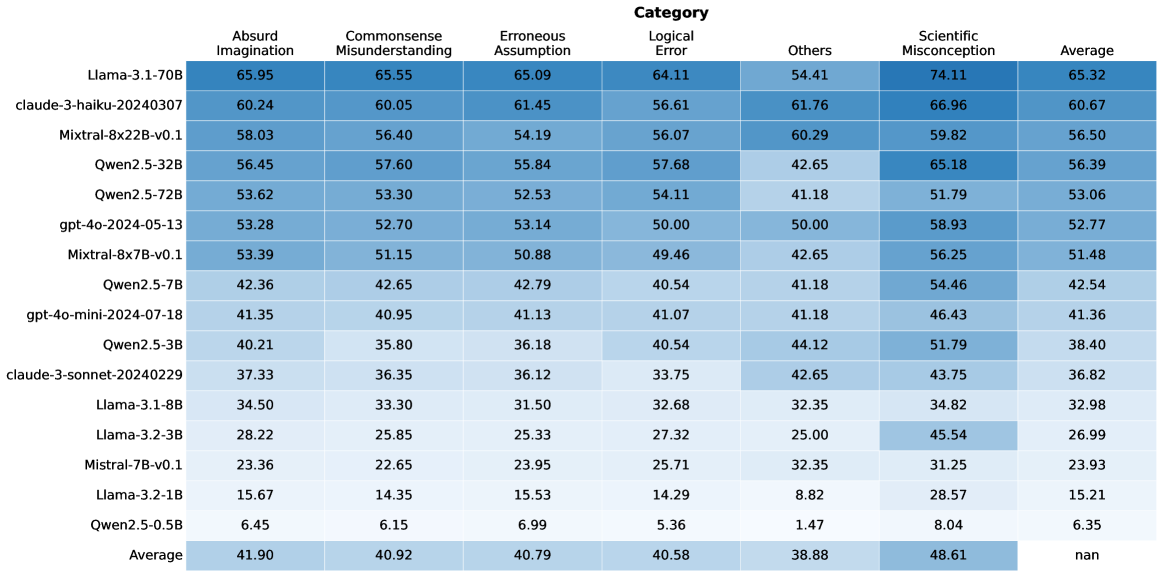

## Data Table: Model Performance Across Categories

### Overview

The image displays a data table that presents performance scores for various language models across different categories of evaluation. The table lists specific model names on the left-hand side and evaluation categories as column headers. Numerical values, likely representing scores or percentages, are presented within the cells, with a color gradient indicating relative performance within each column. An "Average" row at the bottom summarizes the performance across all listed models for each category.

### Components/Axes

**Row Headers (Model Names):**

* Llama-3.1-70B

* claude-3-haiku-20240307

* Mixtral-8x22B-v0.1

* Qwen2.5-32B

* Qwen2.5-72B

* gpt-4o-2024-05-13

* Mixtral-8x7B-v0.1

* Qwen2.5-7B

* gpt-4o-mini-2024-07-18

* Qwen2.5-3B

* claude-3-sonnet-20240229

* Llama-3.1-8B

* Llama-3.2-3B

* Mistral-7B-v0.1

* Llama-3.2-1B

* Qwen2.5-0.5B

* Average

**Column Headers (Categories):**

* Absurd Imagination

* Commonsense Misunderstanding

* Erroneous Assumption

* Logical Error

* Others

* Scientific Misconception

* Average

**Data Cells:** Numerical values ranging from 1.47 to 74.11. The "Average" column for the "Average" row contains "nan" (not a number), indicating that no average was computed for this cell.

### Detailed Analysis

The table contains numerical data for each model under each category. The values are presented with two decimal places.

**Row-wise Data (Selected Examples):**

* **Llama-3.1-70B:**

* Absurd Imagination: 65.95

* Commonsense Misunderstanding: 65.55

* Erroneous Assumption: 65.09

* Logical Error: 64.11

* Others: 54.41

* Scientific Misconception: 74.11

* Average: 65.32

* **claude-3-haiku-20240307:**

* Absurd Imagination: 60.24

* Commonsense Misunderstanding: 60.05

* Erroneous Assumption: 61.45

* Logical Error: 56.61

* Others: 61.76

* Scientific Misconception: 66.96

* Average: 60.67

* **Mixtral-8x22B-v0.1:**

* Absurd Imagination: 58.03

* Commonsense Misunderstanding: 56.40

* Erroneous Assumption: 54.19

* Logical Error: 56.07

* Others: 60.29

* Scientific Misconception: 59.82

* Average: 56.50

* **Qwen2.5-0.5B:**

* Absurd Imagination: 6.45

* Commonsense Misunderstanding: 6.15

* Erroneous Assumption: 6.99

* Logical Error: 5.36

* Others: 1.47

* Scientific Misconception: 8.04

* Average: 6.35

**Column-wise Data (Averages):**

* **Absurd Imagination (Average):** 41.90

* **Commonsense Misunderstanding (Average):** 40.92

* **Erroneous Assumption (Average):** 40.79

* **Logical Error (Average):** 40.58

* **Others (Average):** 38.88

* **Scientific Misconception (Average):** 48.61

* **Average (Average):** nan

**Color Gradient Analysis:**

The table uses a blue color gradient. Lighter shades of blue generally correspond to lower numerical values, while darker shades correspond to higher numerical values within each column. This visually highlights the best and worst performing models for each specific category. For example, in the "Scientific Misconception" column, "Llama-3.1-70B" (74.11) is the darkest blue, indicating the highest score, while "Qwen2.5-0.5B" (8.04) is the lightest, indicating the lowest score.

### Key Observations

* **Top Performer:** "Llama-3.1-70B" consistently scores the highest across most categories, particularly in "Scientific Misconception" (74.11) and "Absurd Imagination" (65.95).

* **Lowest Performer:** "Qwen2.5-0.5B" scores the lowest in every category, with all values below 10 (1.47 to 8.04).

* **Category Performance:** "Scientific Misconception" appears to be a category where models generally score higher on average (48.61) compared to other categories like "Others" (38.88) or "Logical Error" (40.58).

* **Model Consistency:** Some models, like "Llama-3.1-70B" and "claude-3-haiku-20240307", show relatively high scores across multiple categories. Others, like "Qwen2.5-7B" and "gpt-4o-mini-2024-07-18", have scores in the low 40s for most categories.

* **Outlier in Averages:** The "Average" column for the "Average" row shows "nan", which is expected as it represents the average of averages, and the "Average" column itself is a summary metric.
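How the "nan" in the figure arose is not stated. One common mechanism is that a single missing value propagates through an arithmetic mean. The sketch below illustrates only that general behavior, using four "Average" values from the rows listed above. The helper names `mean` and `mean_skipping_nan` are hypothetical.

```python
import math

# Per-model "Average" values from the four example rows above.
clean = [65.32, 60.67, 56.50, 6.35]

# The same values with one missing entry represented as NaN (an assumed scenario).
with_gap = clean + [math.nan]


def mean(values):
    """Plain arithmetic mean; any NaN in the input makes the result NaN."""
    return sum(values) / len(values)


def mean_skipping_nan(values):
    """Arithmetic mean over the non-NaN entries only."""
    kept = [v for v in values if not math.isnan(v)]
    return sum(kept) / len(kept) if kept else math.nan


print(mean(with_gap))               # NaN propagates through the sum
print(mean_skipping_nan(with_gap))  # same as mean(clean)
```

Whether the missing cell should be skipped or left blank is a presentation choice; the figure leaves it as "nan".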

### Interpretation

This data table likely represents an evaluation of different language models' capabilities in handling various types of prompts or questions. The categories suggest different cognitive or reasoning challenges:

* **"Absurd Imagination," "Commonsense Misunderstanding," "Erroneous Assumption," and "Logical Error"** likely assess a model's ability to understand and reason about non-factual or flawed information, or to identify and correct logical inconsistencies.

* **"Others"** is a more general category, possibly encompassing a range of tasks not specifically defined by the other categories.

* **"Scientific Misconception"** specifically tests the model's knowledge and ability to avoid or correct factual errors related to scientific concepts.

The consistently high scores of "Llama-3.1-70B" suggest it is a very capable model, particularly in areas requiring factual accuracy (Scientific Misconception) and potentially in understanding complex or unusual scenarios (Absurd Imagination). The low scores of "Qwen2.5-0.5B" indicate it is significantly less performant across the board.

The fact that "Scientific Misconception" has the highest average score across all models might imply that current language models are generally better at recalling and applying factual scientific knowledge than they are at navigating nuanced or flawed reasoning scenarios. Conversely, the "Others" category having the lowest average suggests it might be the most challenging or diverse set of tasks.

The presence of specific model versions (e.g., "Llama-3.1-70B", "claude-3-haiku-20240307") and dates (e.g., "gpt-4o-2024-05-13") indicates a comparative analysis of contemporary language models, likely to benchmark their strengths and weaknesses. The data allows for a granular understanding of which models excel in specific areas, which could inform their deployment for particular applications. For instance, a model performing well in "Logical Error" might be preferred for tasks requiring critical analysis, while one strong in "Scientific Misconception" would be better for knowledge-based queries.


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Heatmap: Model Performance Across Error Categories

### Overview

This heatmap displays the performance of various language models across six different categories of errors: Absurd Imagination, Commonsense Misunderstanding, Erroneous Assumption, Logical Error, Others, and Scientific Misconception. The values represent a score, presumably a percentage, indicating the frequency or severity of each error type for each model. An "Average" column provides the mean score across all error categories for each model.
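Whether the "Average" column is a simple mean of the six category scores can be checked directly. The sketch below compares the unweighted mean with the reported "Average" for two rows of the figure. The names `ROWS` and `unweighted_mean` are illustrative, and the explanation offered afterwards is an assumption rather than something the figure states.

```python
# (six category scores, reported Average) for two rows of the heatmap.
ROWS = {
    "Llama-3.1-70B": ([65.95, 65.55, 65.09, 64.11, 54.41, 74.11], 65.32),
    "claude-3-haiku-20240307": ([60.24, 60.05, 61.45, 56.61, 61.76, 66.96], 60.67),
}


def unweighted_mean(values):
    """Simple arithmetic mean, giving every category equal weight."""
    return sum(values) / len(values)


gaps = {}
for model, (category_scores, reported) in ROWS.items():
    computed = unweighted_mean(category_scores)
    gaps[model] = computed - reported
    print(f"{model}: unweighted {computed:.2f}, reported {reported:.2f}, "
          f"gap {gaps[model]:+.2f}")
```

For both rows the unweighted mean differs from the reported value by roughly half a point, so the column may be weighted, for example by the number of items in each category. The figure does not say.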

### Components/Axes

* **Rows:** Represent different language models: Llama-3.1-70B, claude-3-haiku-20240307, Mixtral-8x22B-v0.1, Qwen2.5-32B, Qwen2.5-72B, gpt-4o-2024-05-13, Mixtral-8x7B-v0.1, Qwen2.5-7B, gpt-4o-mini-2024-07-18, Qwen2.5-3B, claude-3-sonnet-20240229, Llama-3.1-8B, Llama-3.2-3B, Mistral-7B-v0.1, Llama-3.2-1B, Qwen2.5-0.5B.

* **Columns:** Represent error categories: Absurd Imagination, Commonsense Misunderstanding, Erroneous Assumption, Logical Error, Others, Scientific Misconception, and Average.

* **Color Scale:** The heatmap uses a color gradient, with darker shades representing higher values and lighter shades representing lower values. The color scale is not explicitly provided, but appears to range from light blue to dark blue.

* **Legend:** There is no separate legend. The column headers identify the error categories, and the shade of each cell encodes its value.

### Detailed Analysis

The data is presented in a 16x7 grid. I will analyze each model's performance across the error categories, noting trends and specific values. All values are approximate, with an uncertainty of ±0.05.

* **Llama-3.1-70B:** Shows high scores in Absurd Imagination (65.95), Commonsense Misunderstanding (65.55), Erroneous Assumption (65.09), Logical Error (64.11), Others (54.41), Scientific Misconception (74.11), and Average (65.32).

* **claude-3-haiku-20240307:** Scores are 60.24, 60.05, 61.45, 56.61, 61.76, 66.96, 60.67.

* **Mixtral-8x22B-v0.1:** Scores are 58.03, 56.40, 54.19, 56.07, 60.29, 59.82, 56.50.

* **Qwen2.5-32B:** Scores are 56.45, 57.60, 55.84, 57.68, 42.65, 65.18, 56.39.

* **Qwen2.5-72B:** Scores are 53.62, 53.30, 52.53, 54.11, 41.18, 51.79, 53.06.

* **gpt-4o-2024-05-13:** Scores are 53.28, 52.70, 53.14, 50.00, 50.00, 58.93, 52.77.

* **Mixtral-8x7B-v0.1:** Scores are 53.39, 51.15, 50.88, 49.46, 42.65, 56.25, 51.48.

* **Qwen2.5-7B:** Scores are 42.36, 42.65, 42.79, 40.54, 41.18, 54.46, 42.54.

* **gpt-4o-mini-2024-07-18:** Scores are 41.35, 40.95, 41.13, 41.07, 41.18, 46.43, 41.36.

* **Qwen2.5-3B:** Scores are 40.21, 35.80, 36.18, 40.54, 44.12, 51.79, 38.40.

* **claude-3-sonnet-20240229:** Scores are 37.33, 36.35, 36.12, 33.75, 42.65, 43.75, 36.82.

* **Llama-3.1-8B:** Scores are 34.50, 33.30, 31.50, 32.68, 32.35, 34.82, 32.98.

* **Llama-3.2-3B:** Scores are 28.22, 25.85, 25.33, 27.32, 25.00, 45.54, 26.99.

* **Mistral-7B-v0.1:** Scores are 23.36, 22.65, 23.95, 25.71, 32.35, 31.25, 23.93.

* **Llama-3.2-1B:** Scores are 15.67, 14.35, 15.53, 14.29, 8.82, 28.57, 15.21.

* **Qwen2.5-0.5B:** Scores are 6.45, 6.15, 6.99, 5.36, 1.47, 8.04, 6.35.

### Key Observations

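* **Llama-3.1-70B** scores the highest in every category except "Others", where claude-3-haiku-20240307 (61.76) and Mixtral-8x22B-v0.1 (60.29) score higher. If the scores measure error frequency, as the Overview assumes, this would make it the model most prone to these errors.

* **Qwen2.5-0.5B** consistently scores the lowest, which on the same assumption would make it the least prone to these errors.

* **Scientific Misconception** has the highest score for most models, which on the same assumption would make it the most common type of error.

* **Commonsense Misunderstanding** scores sit close to those for Absurd Imagination, Erroneous Assumption, and Logical Error for most models.

* The "Others" category has lower scores than the named error types for several models (for example Qwen2.5-32B at 42.65 and Qwen2.5-72B at 41.18), but not for all: claude-3-haiku-20240307 and Mixtral-8x22B-v0.1 score above 60 there.

* Within a model family, larger models (higher parameter count) generally have higher scores, although not always: Qwen2.5-32B (56.39 average) scores above Qwen2.5-72B (53.06).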

### Interpretation

The heatmap provides a comparative analysis of the error profiles of different language models. On the error-frequency reading, the data would suggest that larger models like Llama-3.1-70B, despite strong overall performance, are also more susceptible to generating responses that fall into these error categories. This could be due to their increased capacity to generate complex and nuanced responses, which also increases the risk of making subtle errors. Conversely, the smallest models, such as Qwen2.5-0.5B, would be less capable but more conservative in their responses, and therefore less prone to these errors.

The prevalence of "Scientific Misconception" and "Commonsense Misunderstanding" suggests that these are areas where language models still struggle, even the most advanced ones. This highlights the need for continued research and development in these areas, particularly in improving the models' ability to reason about the real world and apply common sense knowledge.

The heatmap allows for a nuanced understanding of model strengths and weaknesses, which can be valuable for selecting the appropriate model for a specific task and for identifying areas where further training or refinement is needed. The data also suggests that simply increasing model size is not a guaranteed solution to all problems, and that other factors, such as training data and model architecture, also play a crucial role.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Heatmap Table: AI Model Performance Across Error Categories

### Overview

The image displays a heatmap table comparing the performance of 16 different large language models (LLMs) across six specific error categories and an overall average. The performance is indicated by numerical scores (likely percentages or accuracy metrics) within each cell, with a color gradient (shades of blue) visually representing the score magnitude—darker blue corresponds to higher scores.

### Components/Axes

* **Header Row (Top):** Lists the six error categories and the final "Average" column.

* **Categories (Left to Right):** Absurd Imagination, Commonsense Misunderstanding, Erroneous Assumption, Logical Error, Others, Scientific Misconception, Average.

* **Model Column (Left):** Lists the 16 AI models being evaluated, from top to bottom.

* **Models (Top to Bottom):** Llama-3.1-70B, claude-3-haiku-20240307, Mixtral-8x22B-v0.1, Qwen2.5-32B, Qwen2.5-72B, gpt-4o-2024-05-13, Mixtral-8x7B-v0.1, Qwen2.5-7B, gpt-4o-mini-2024-07-18, Qwen2.5-3B, claude-3-sonnet-20240229, Llama-3.1-8B, Llama-3.2-3B, Mistral-7B-v0.1, Llama-3.2-1B, Qwen2.5-0.5B.

* **Data Grid (Center):** A 16x7 grid of cells containing numerical scores. Each cell's background color intensity correlates with its value.

* **Footer Row (Bottom):** Contains the "Average" row, showing the mean score for each category across all models. The bottom-right cell (Average of Averages) contains "nan".

### Detailed Analysis

The performance data is summarized in the following table:

| Model | Absurd Imagination | Commonsense Misunderstanding | Erroneous Assumption | Logical Error | Others | Scientific Misconception | Average |

|-------|--------------------|------------------------------|----------------------|---------------|--------|--------------------------|---------|

| Llama-3.1-70B | 65.95 | 65.55 | 65.09 | 64.11 | 54.41 | 74.11 | 65.32 |

| claude-3-haiku-20240307 | 60.24 | 60.05 | 61.45 | 56.61 | 61.76 | 66.96 | 60.67 |

| Mixtral-8x22B-v0.1 | 58.03 | 56.40 | 54.19 | 56.07 | 60.29 | 59.82 | 56.50 |

| Qwen2.5-32B | 56.45 | 57.60 | 55.84 | 57.68 | 42.65 | 65.18 | 56.39 |

| Qwen2.5-72B | 53.62 | 53.30 | 52.53 | 54.11 | 41.18 | 51.79 | 53.06 |

| gpt-4o-2024-05-13 | 53.28 | 52.70 | 53.14 | 50.00 | 50.00 | 58.93 | 52.77 |

| Mixtral-8x7B-v0.1 | 53.39 | 51.15 | 50.88 | 49.46 | 42.65 | 56.25 | 51.48 |

| Qwen2.5-7B | 42.36 | 42.65 | 42.79 | 40.54 | 41.18 | 54.46 | 42.54 |

| gpt-4o-mini-2024-07-18 | 41.35 | 40.95 | 41.13 | 41.07 | 41.18 | 46.43 | 41.36 |

| Qwen2.5-3B | 40.21 | 35.80 | 36.18 | 40.54 | 44.12 | 51.79 | 38.40 |

| claude-3-sonnet-20240229 | 37.33 | 36.35 | 36.12 | 33.75 | 42.65 | 43.75 | 36.82 |

| Llama-3.1-8B | 34.50 | 33.30 | 31.50 | 32.68 | 32.35 | 34.82 | 32.98 |

| Llama-3.2-3B | 28.22 | 25.85 | 25.33 | 27.32 | 25.00 | 45.54 | 26.99 |

| Mistral-7B-v0.1 | 23.36 | 22.65 | 23.95 | 25.71 | 32.35 | 31.25 | 23.93 |

| Llama-3.2-1B | 15.67 | 14.35 | 15.53 | 14.29 | 8.82 | 28.57 | 15.21 |

| Qwen2.5-0.5B | 6.45 | 6.15 | 6.99 | 5.36 | 1.47 | 8.04 | 6.35 |

| **Average** | **41.90** | **40.92** | **40.79** | **40.58** | **38.88** | **48.61** | **nan** |

### Key Observations

1. **Performance Hierarchy:** There is a clear performance hierarchy. Llama-3.1-70B is the top-performing model in every category except "Others", where claude-3-haiku (61.76) and Mixtral-8x22B (60.29) score higher; those two models rank second and third on average. The smallest model, Qwen2.5-0.5B, performs the worst by a significant margin.

2. **Category Difficulty:** "Scientific Misconception" has the highest average score (48.61), suggesting models find this category relatively easier or are better calibrated on it. "Others" has the lowest average (38.88), indicating it may be the most challenging or heterogeneous category.

3. **Model Size Correlation:** For models within the same family (e.g., Qwen2.5, Llama-3.x), performance generally scales with model size (parameter count), with one exception: the 32B Qwen model (56.39 average) outperforms the 72B (53.06). Below that, the 7B outperforms the 3B, which outperforms the 0.5B.

4. **Notable Outlier:** The "Others" category shows high variance. While most models score between 30-60, claude-3-haiku scores a relatively high 61.76, and Qwen2.5-0.5B scores an extremely low 1.47.

5. **Color Gradient Confirmation:** The visual heatmap aligns with the numerical data. The top-left cells (high-performing models in most categories) are the darkest blue, while the bottom-right cells (low-performing models) are the lightest, almost white.
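The model-size observation can be checked by sorting each family by parameter count and flagging any step where the larger model has the lower "Average" score. This is a minimal sketch: parameter counts (in billions) are read off the model names, Llama-3.1 and Llama-3.2 are grouped as one "Llama-3.x" family as in the text above, and `FAMILIES` and `size_inversions` are illustrative names.

```python
# (parameter count in billions, reported Average score) per model, grouped by family.
FAMILIES = {
    "Qwen2.5": [(0.5, 6.35), (3, 38.40), (7, 42.54), (32, 56.39), (72, 53.06)],
    "Llama-3.x": [(1, 15.21), (3, 26.99), (8, 32.98), (70, 65.32)],
}


def size_inversions(models):
    """Return (smaller, larger) size pairs where the larger model scores lower."""
    ordered = sorted(models)  # tuples sort by parameter count first
    return [
        (small[0], large[0])
        for small, large in zip(ordered, ordered[1:])
        if large[1] < small[1]
    ]


inversions = {family: size_inversions(models) for family, models in FAMILIES.items()}
for family, pairs in inversions.items():
    print(family, pairs if pairs else "no inversions")
```

On the table's values this flags a single inversion, Qwen2.5-32B over Qwen2.5-72B, and none in the Llama-3.x group.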

### Interpretation

This heatmap provides a comparative benchmark of LLMs on specific types of reasoning failures or error modes. The data suggests that:

* **Model Scale is a Primary Driver:** Larger models usually outperform smaller ones from the same family, supporting a link between model capacity and reasoning robustness. The Qwen2.5-32B and Qwen2.5-72B pair is the exception.

* **Error Categories are Not Equal:** The disparity in average scores (e.g., Scientific Misconception vs. Others) implies that the underlying datasets or tasks for these categories have different inherent difficulties or that models have been trained/aligned with varying effectiveness on these domains.

* **The "Others" Category is a Black Box:** Its low average and high variance (e.g., the 1.47 score) suggest it may be a catch-all for errors that don't fit the other five categories, making it less interpretable but potentially revealing of a model's generalization limits.

* **Benchmarking Utility:** This table is likely from a research paper or technical report aiming to evaluate and diagnose model weaknesses beyond simple accuracy metrics. It allows for a nuanced comparison, showing that a model might be strong in "Logical Error" but weaker in "Commonsense Misunderstanding." The "nan" in the final average cell is likely a data processing artifact, as averaging the averages would not be statistically meaningful.


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Heatmap: Model Performance Across Cognitive Categories

### Overview

The heatmap visualizes performance metrics of various AI models across six cognitive categories plus an overall average, with color-coded values representing scores. The average row at the bottom aggregates performance across all models.

### Components/Axes

- **X-axis (Categories)**:

- Absurd Imagination

- Commonsense Misunderstanding

- Erroneous Assumption

- Logical Error

- Others

- Scientific Misconception

- Average

- **Y-axis (Models)**:

- Llama-3.1-70B

- claude-3-haiku-20240307

- Mixtral-8x22B-v0.1

- Qwen2.5-32B

- Qwen2.5-72B

- gpt-4o-2024-05-13

- Mixtral-8x7B-v0.1

- Qwen2.5-7B

- gpt-4o-mini-2024-07-18

- Qwen2.5-3B

- claude-3-sonnet-20240229

- Llama-3.1-8B

- Llama-3.2-3B

- Mistral-7B-v0.1

- Llama-3.2-1B

- Qwen2.5-0.5B

- Average

- **Legend**:

- Shades of blue encode score magnitude (darker = higher values)

- Color gradient: Dark blue (high) → Light blue (low)

### Detailed Analysis

1. **Model Performance**:

- **Llama-3.1-70B**:

- Absurd Imagination: 65.95 (darkest blue)

- Scientific Misconception: 74.11 (darkest blue)

- **claude-3-haiku-20240307**:

- Commonsense Misunderstanding: 60.05

- Logical Error: 56.61

- **Mixtral-8x7B-v0.1**:

- Erroneous Assumption: 50.88

- Logical Error: 49.46

- **Average Row**:

- Scientific Misconception: 48.61 (highest)

- Logical Error: 40.58

- Commonsense Misunderstanding: 40.92

2. **Color Consistency**:

- All values match legend colors (e.g., 65.95 in dark blue for Absurd Imagination aligns with legend)

- Average row uses gray tones for neutral comparison

3. **Spatial Grounding**:

- Legend positioned right of chart

- Average row at bottom (gray background)

- Model names left-aligned, categories top-aligned

### Key Observations

1. **Highest Performance**:

- Scientific Misconception dominates (avg 48.61)

- Llama-3.1-70B excels in Absurd Imagination (65.95) and Scientific Misconception (74.11)

2. **Lowest Performance**:

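- "Others" has the lowest column average (38.88); among the named categories, Logical Error is lowest (avg 40.58)

- Qwen2.5-0.5B has the lowest score in every category, including Logical Error (5.36) and Others (1.47)

3. **Outliers**:

- claude-3-haiku-20240307: Strong across all categories (56.61-66.96)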

- Llama-3.2-3B: Weak in Erroneous Assumption (25.33) and Logical Error (27.32)
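The category ranking can be read directly off the "Average" row. The sketch below sorts the six column averages reported in the figure; `AVERAGE_ROW` and `ranked` are illustrative names.

```python
# Column averages from the "Average" row of the heatmap.
AVERAGE_ROW = {
    "Absurd Imagination": 41.90,
    "Commonsense Misunderstanding": 40.92,
    "Erroneous Assumption": 40.79,
    "Logical Error": 40.58,
    "Others": 38.88,
    "Scientific Misconception": 48.61,
}

# Sort categories from highest to lowest average score.
ranked = sorted(AVERAGE_ROW.items(), key=lambda item: item[1], reverse=True)

for position, (category, score) in enumerate(ranked, start=1):
    print(f"{position}. {category}: {score:.2f}")

highest_category = ranked[0][0]
lowest_category = ranked[-1][0]
```

Sorted this way, Scientific Misconception comes first and Others comes last, with Logical Error second to last.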

### Interpretation

The data reveals a clear hierarchy in model capabilities:

1. **Scientific Misconception** has the highest average score (48.61) and is the top category for most models, suggesting better handling of factual reasoning tasks.

2. **Logical Error** has the lowest average among the named categories (40.58), indicating challenges with deductive reasoning; only "Others" averages lower (38.88).

3. Larger models (e.g., Llama-3.1-70B) generally outperform smaller variants, though exceptions exist (e.g., Qwen2.5-32B averages 56.39 against 53.06 for Qwen2.5-72B).

4. The "Others" category shows mixed performance, with some models (e.g., Claude-3-haiku) demonstrating relative strength.

This pattern suggests AI systems may prioritize factual recall (Scientific Misconception) over abstract reasoning (Logical Error), with performance varying significantly by model architecture and training data.
