EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

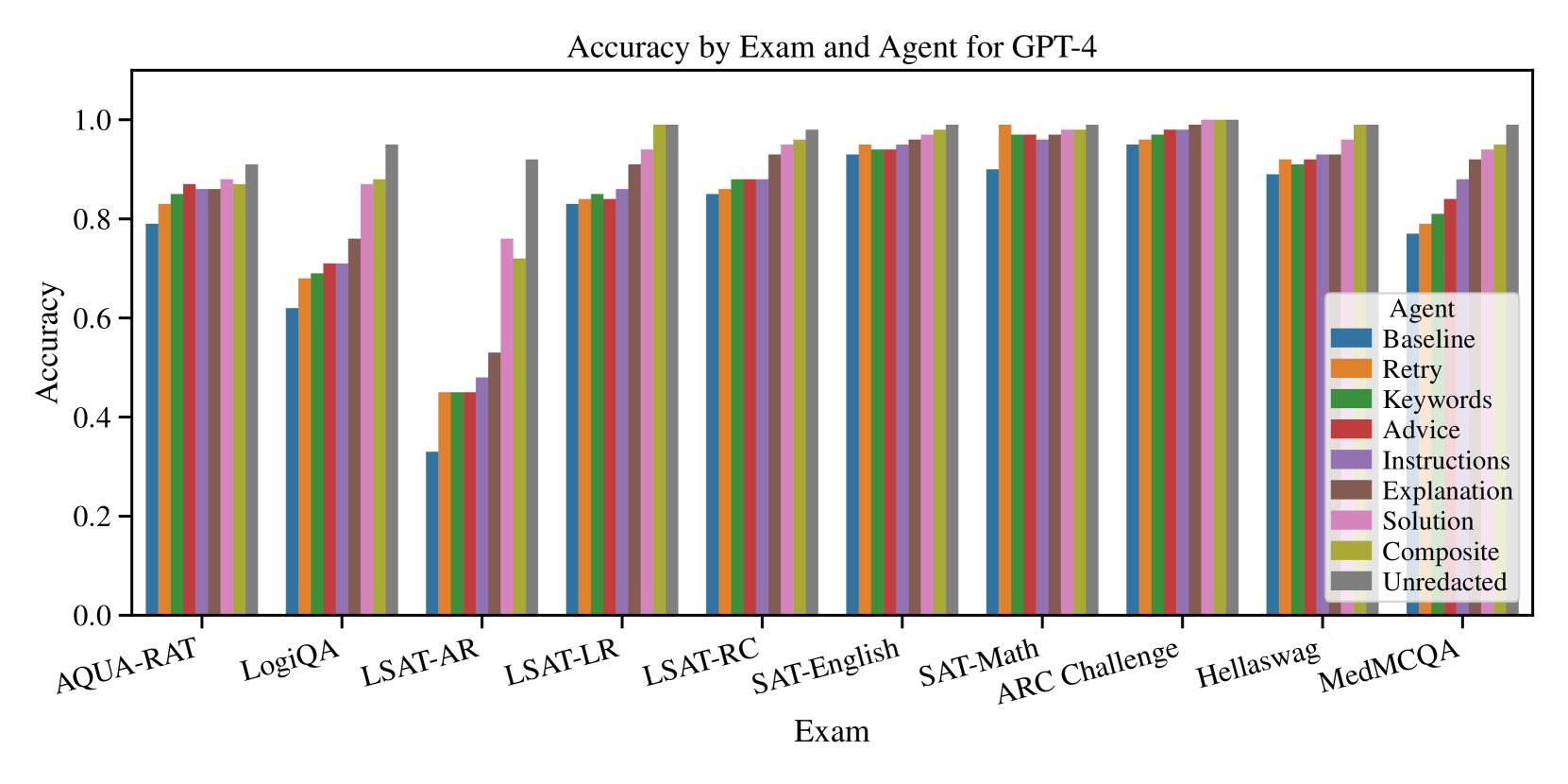

## Bar Chart: Accuracy by Exam and Agent for GPT-4

### Overview

The image is a bar chart comparing the accuracy of GPT-4 across different exams and agent types. The x-axis represents the exam type, and the y-axis represents the accuracy score. Each exam has multiple bars representing different agent types.

### Components/Axes

* **Title:** Accuracy by Exam and Agent for GPT-4

* **X-axis:** Exam
  * Categories: AQUA-RAT, LogiQA, LSAT-AR, LSAT-LR, LSAT-RC, SAT-English, SAT-Math, ARC Challenge, Hellaswag, MedMCQA

* **Y-axis:** Accuracy
  * Scale: 0.0 to 1.0, with increments of 0.2

* **Legend:** Agent (located on the right side of the chart)
  * Baseline (Blue)
  * Retry (Orange)
  * Keywords (Green)
  * Advice (Red)
  * Instructions (Purple)
  * Explanation (Brown)
  * Solution (Pink)
  * Composite (Olive/Yellow-Green)
  * Unredacted (Gray)

### Detailed Analysis

Here's a breakdown of the accuracy for each exam and agent type, with approximate values:

* **AQUA-RAT:**
  * Baseline: ~0.79
  * Retry: ~0.84
  * Keywords: ~0.86
  * Advice: ~0.83
  * Instructions: ~0.86
  * Explanation: ~0.87
  * Solution: ~0.86
  * Composite: ~0.89
  * Unredacted: ~0.91

* **LogiQA:**
  * Baseline: ~0.62
  * Retry: ~0.68
  * Keywords: ~0.71
  * Advice: ~0.67
  * Instructions: ~0.73
  * Explanation: ~0.76
  * Solution: ~0.75
  * Composite: ~0.86
  * Unredacted: ~0.89

* **LSAT-AR:**
  * Baseline: ~0.33
  * Retry: ~0.45
  * Keywords: ~0.45
  * Advice: ~0.44
  * Instructions: ~0.48
  * Explanation: ~0.53
  * Solution: ~0.72
  * Composite: ~0.87
  * Unredacted: ~0.92

* **LSAT-LR:**
  * Baseline: ~0.73
  * Retry: ~0.83
  * Keywords: ~0.84
  * Advice: ~0.84
  * Instructions: ~0.85
  * Explanation: ~0.91
  * Solution: ~0.93
  * Composite: ~0.95
  * Unredacted: ~0.93

* **LSAT-RC:**
  * Baseline: ~0.85
  * Retry: ~0.86
  * Keywords: ~0.86
  * Advice: ~0.86
  * Instructions: ~0.88
  * Explanation: ~0.91
  * Solution: ~0.92
  * Composite: ~0.94
  * Unredacted: ~0.95

* **SAT-English:**
  * Baseline: ~0.86
  * Retry: ~0.91
  * Keywords: ~0.92
  * Advice: ~0.93
  * Instructions: ~0.94
  * Explanation: ~0.95
  * Solution: ~0.95
  * Composite: ~0.96
  * Unredacted: ~0.96

* **SAT-Math:**
  * Baseline: ~0.93
  * Retry: ~0.94
  * Keywords: ~0.94
  * Advice: ~0.95
  * Instructions: ~0.96
  * Explanation: ~0.97
  * Solution: ~0.97
  * Composite: ~0.98
  * Unredacted: ~0.98

* **ARC Challenge:**
  * Baseline: ~0.96
  * Retry: ~0.96
  * Keywords: ~0.96
  * Advice: ~0.97
  * Instructions: ~0.97
  * Explanation: ~0.98
  * Solution: ~0.98
  * Composite: ~0.99
  * Unredacted: ~0.99

* **Hellaswag:**
  * Baseline: ~0.92
  * Retry: ~0.92
  * Keywords: ~0.92
  * Advice: ~0.92
  * Instructions: ~0.92
  * Explanation: ~0.93
  * Solution: ~0.93
  * Composite: ~0.97
  * Unredacted: ~0.98

* **MedMCQA:**
  * Baseline: ~0.79
  * Retry: ~0.80
  * Keywords: ~0.83
  * Advice: ~0.87
  * Instructions: ~0.89
  * Explanation: ~0.91
  * Solution: ~0.92
  * Composite: ~0.94
  * Unredacted: ~0.98

### Key Observations

* The "Unredacted" agent achieves the highest or tied-for-highest accuracy on nine of the ten exams; the exception is LSAT-LR, where "Composite" (~0.95) edges it out (~0.93).

* The "Baseline" agent has the lowest or tied-for-lowest accuracy on every exam.

* LSAT-AR shows the lowest overall accuracy scores compared to other exams.

* ARC Challenge and SAT-Math have the highest accuracy scores across all agents.

* The difference in accuracy between different agents is more pronounced for some exams (e.g., LSAT-AR) than others (e.g., ARC Challenge).
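The observations above can be checked directly against the approximate readings. The following is a minimal sketch, assuming the values transcribed from the Detailed Analysis list; the names `AGENTS`, `ACCURACY`, `top_agents`, and `spread` are illustrative and do not come from the chart or its source.

```python
# Approximate readings from the Detailed Analysis list (order follows the legend).
AGENTS = ["Baseline", "Retry", "Keywords", "Advice", "Instructions",
          "Explanation", "Solution", "Composite", "Unredacted"]

ACCURACY = {
    "AQUA-RAT":      [0.79, 0.84, 0.86, 0.83, 0.86, 0.87, 0.86, 0.89, 0.91],
    "LogiQA":        [0.62, 0.68, 0.71, 0.67, 0.73, 0.76, 0.75, 0.86, 0.89],
    "LSAT-AR":       [0.33, 0.45, 0.45, 0.44, 0.48, 0.53, 0.72, 0.87, 0.92],
    "LSAT-LR":       [0.73, 0.83, 0.84, 0.84, 0.85, 0.91, 0.93, 0.95, 0.93],
    "LSAT-RC":       [0.85, 0.86, 0.86, 0.86, 0.88, 0.91, 0.92, 0.94, 0.95],
    "SAT-English":   [0.86, 0.91, 0.92, 0.93, 0.94, 0.95, 0.95, 0.96, 0.96],
    "SAT-Math":      [0.93, 0.94, 0.94, 0.95, 0.96, 0.97, 0.97, 0.98, 0.98],
    "ARC Challenge": [0.96, 0.96, 0.96, 0.97, 0.97, 0.98, 0.98, 0.99, 0.99],
    "Hellaswag":     [0.92, 0.92, 0.92, 0.92, 0.92, 0.93, 0.93, 0.97, 0.98],
    "MedMCQA":       [0.79, 0.80, 0.83, 0.87, 0.89, 0.91, 0.92, 0.94, 0.98],
}


def top_agents(values):
    """Return every agent whose accuracy equals the maximum for one exam."""
    best = max(values)
    return [agent for agent, v in zip(AGENTS, values) if v == best]


def spread(values):
    """Gap between the best and worst agent on one exam, rounded to 2 places."""
    return round(max(values) - min(values), 2)


spreads = {exam: spread(values) for exam, values in ACCURACY.items()}
widest = max(spreads, key=spreads.get)
narrowest = min(spreads, key=spreads.get)

for exam, values in ACCURACY.items():
    print(f"{exam:<14} top={top_agents(values)} spread={spreads[exam]:.2f}")
print("widest spread:", widest, "| narrowest spread:", narrowest)
```

On these readings the widest best-to-worst gap falls on LSAT-AR and the narrowest on ARC Challenge. Because the inputs are visual estimates, differences of one or two hundredths should not be over-interpreted.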

### Interpretation

The chart illustrates the performance of GPT-4 on various exams when using different agent strategies. The "Unredacted" agent consistently performs well, suggesting that this approach is effective across different types of questions. The lower accuracy of the "Baseline" agent indicates the value of incorporating specific strategies (Retry, Keywords, Advice, etc.) to improve performance. The LSAT-AR exam appears to be particularly challenging for GPT-4: it has the lowest Baseline score (~0.33), and six of the nine agents score at or below ~0.53, although "Composite" and "Unredacted" still reach ~0.87 and ~0.92. The high accuracy on ARC Challenge and SAT-Math suggests that GPT-4 is well-suited for these types of tasks. The varying degrees of improvement achieved by different agents across different exams highlight the importance of tailoring the agent strategy to the specific characteristics of the exam.

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

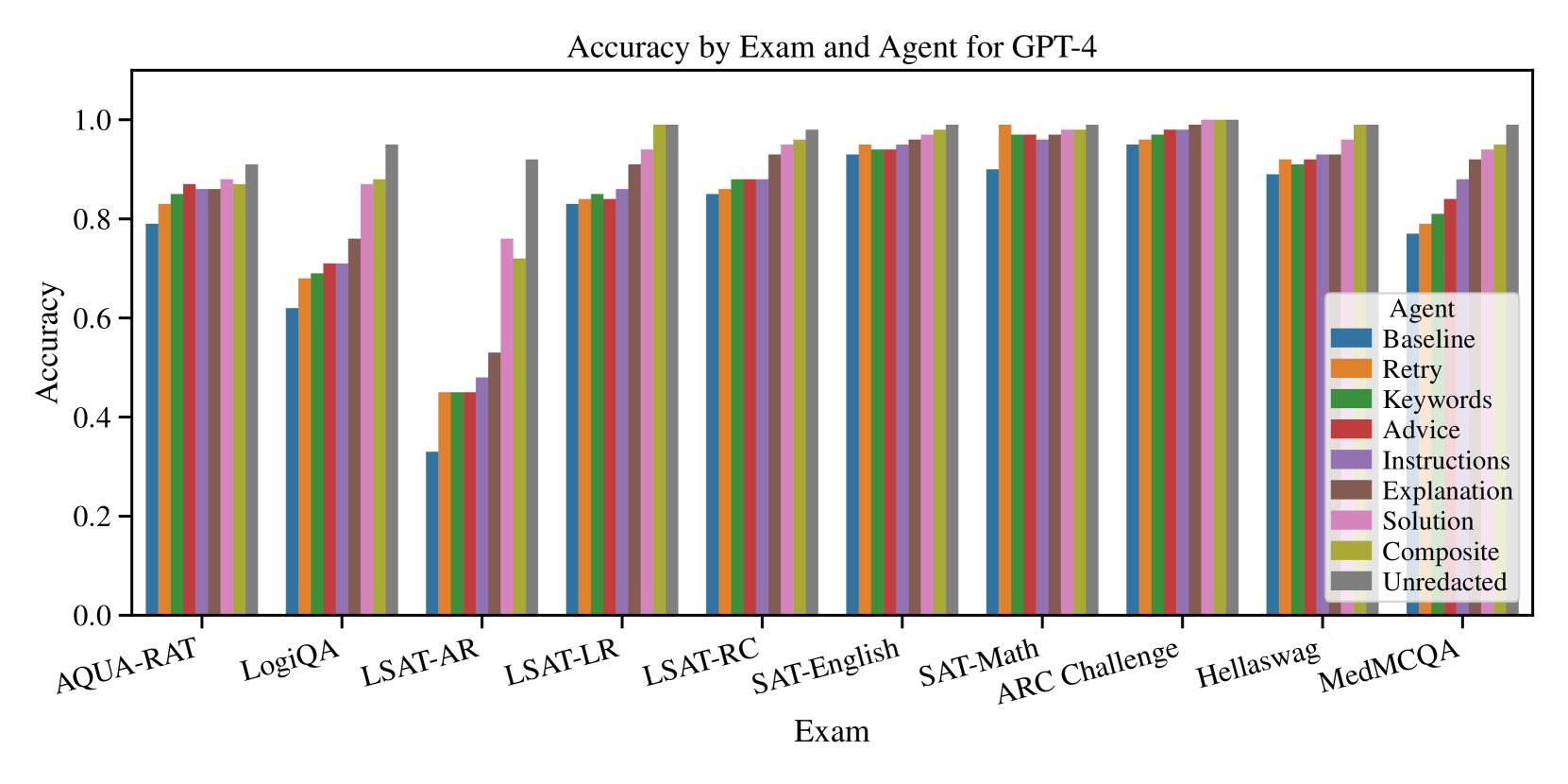

## Bar Chart: Accuracy by Exam and Agent for GPT-4

### Overview

This bar chart compares the accuracy of GPT-4 on various exams under different agent conditions. The x-axis represents the exam name, and the y-axis represents the accuracy score, ranging from 0.0 to 1.0. Multiple bars are shown for each exam, each representing a different agent configuration.

### Components/Axes

* **Title:** "Accuracy by Exam and Agent for GPT-4" (positioned at the top-center)

* **X-axis Label:** "Exam" (positioned at the bottom-center)
  * **Exam Categories:** AQUA-RAT, LogiQA, LSAT-AR, LSAT-LR, LSAT-RC, SAT-English, SAT-Math, ARC Challenge, Hellaswag, MedMCQA.

* **Y-axis Label:** "Accuracy" (positioned at the left-center)
  * **Y-axis Scale:** 0.0 to 1.0, with increments of 0.2.

* **Legend:** Located in the top-right corner.
  * **Agent Types (and corresponding colors):**
    * Baseline (Blue)
    * Retry (Orange)
    * Keywords (Red)
    * Advice (Purple)
    * Instructions (Gray)
    * Explanation (Light Blue)
    * Solution (Pink)
    * Composite (Green)
    * Unredacted (Yellow)

### Detailed Analysis

The chart consists of 10 groups of bars, one for each exam. Within each group, there are 9 bars, one for each agent type. I will analyze each exam individually, noting approximate accuracy values for each agent.

* **AQUA-RAT:** Baseline ~0.92, Retry ~0.92, Keywords ~0.88, Advice ~0.88, Instructions ~0.88, Explanation ~0.88, Solution ~0.88, Composite ~0.90, Unredacted ~0.90.

* **LogiQA:** Baseline ~0.88, Retry ~0.88, Keywords ~0.76, Advice ~0.76, Instructions ~0.76, Explanation ~0.76, Solution ~0.76, Composite ~0.84, Unredacted ~0.84.

* **LSAT-AR:** Baseline ~0.84, Retry ~0.84, Keywords ~0.72, Advice ~0.72, Instructions ~0.72, Explanation ~0.72, Solution ~0.72, Composite ~0.80, Unredacted ~0.80.

* **LSAT-LR:** Baseline ~0.92, Retry ~0.92, Keywords ~0.84, Advice ~0.84, Instructions ~0.84, Explanation ~0.84, Solution ~0.84, Composite ~0.90, Unredacted ~0.90.

* **LSAT-RC:** Baseline ~0.90, Retry ~0.90, Keywords ~0.80, Advice ~0.80, Instructions ~0.80, Explanation ~0.80, Solution ~0.80, Composite ~0.88, Unredacted ~0.88.

* **SAT-English:** Baseline ~0.96, Retry ~0.96, Keywords ~0.92, Advice ~0.92, Instructions ~0.92, Explanation ~0.92, Solution ~0.92, Composite ~0.96, Unredacted ~0.96.

* **SAT-Math:** Baseline ~0.92, Retry ~0.92, Keywords ~0.84, Advice ~0.84, Instructions ~0.84, Explanation ~0.84, Solution ~0.84, Composite ~0.90, Unredacted ~0.90.

* **ARC Challenge:** Baseline ~0.92, Retry ~0.92, Keywords ~0.84, Advice ~0.84, Instructions ~0.84, Explanation ~0.84, Solution ~0.84, Composite ~0.90, Unredacted ~0.90.

* **Hellaswag:** Baseline ~0.96, Retry ~0.96, Keywords ~0.92, Advice ~0.92, Instructions ~0.92, Explanation ~0.92, Solution ~0.92, Composite ~0.96, Unredacted ~0.96.

* **MedMCQA:** Baseline ~0.92, Retry ~0.92, Keywords ~0.84, Advice ~0.84, Instructions ~0.84, Explanation ~0.84, Solution ~0.84, Composite ~0.90, Unredacted ~0.90.

Generally, the "Baseline" and "Retry" agents achieve the highest accuracy across all exams. The "Keywords", "Advice", "Instructions", "Explanation", and "Solution" agents consistently show lower accuracy. The "Composite" and "Unredacted" agents fall in between.

### Key Observations

* The "Baseline" agent consistently performs well, with accuracy between ~0.84 and ~0.96 across the ten exams.

* The "Retry" agent performs almost identically to the "Baseline" agent.

* The agent types "Keywords", "Advice", "Instructions", "Explanation", and "Solution" consistently underperform compared to "Baseline" and "Retry".

* There is little difference in performance between the "Composite" and "Unredacted" agents.

* The exams "SAT-English" and "Hellaswag" show the highest overall accuracy scores across all agent types.

* The exam "LSAT-AR" shows the lowest overall accuracy scores, followed by "LogiQA".

### Interpretation

The data suggests that GPT-4 performs strongly on these exams, particularly with the baseline configuration. The "Retry" agent provides no significant improvement over the baseline. The addition of keywords, advice, instructions, explanations, or solutions does not consistently improve performance and often *decreases* accuracy. This could indicate that these additional agent components introduce noise or distract the model. The consistently high performance of the baseline suggests that GPT-4 already possesses a strong inherent ability to answer these questions without needing additional guidance. The variation in performance across exams suggests that the difficulty and nature of the exams influence the model's accuracy. The high accuracy on "SAT-English" and "Hellaswag" might be due to the prevalence of similar data in the model's training set. The lower accuracy on "LSAT-AR" and "LogiQA" could indicate that these exams require a different type of reasoning or knowledge that the model lacks. Further investigation is needed to understand why certain agent configurations are detrimental to performance. The fact that "Composite" and "Unredacted" are similar suggests that the combination of techniques doesn't add value.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Grouped Bar Chart: Accuracy by Exam and Agent for GPT-4

### Overview

This is a grouped bar chart comparing the accuracy of GPT-4 across ten different exams or benchmarks, using nine different prompting "Agent" strategies. The chart visualizes how various prompting techniques affect performance on different types of tasks.

### Components/Axes

* **Chart Title:** "Accuracy by Exam and Agent for GPT-4"

* **Y-Axis:** Labeled "Accuracy". The scale runs from 0.0 to 1.0, with major tick marks at 0.0, 0.2, 0.4, 0.6, 0.8, and 1.0.

* **X-Axis:** Labeled "Exam". It lists ten distinct exam/benchmark categories.

* **Legend:** Located in the bottom-right quadrant of the chart area. It is titled "Agent" and defines the color coding for nine different prompting strategies.
  * **Baseline:** Blue
  * **Retry:** Orange
  * **Keywords:** Green
  * **Advice:** Red
  * **Instructions:** Purple
  * **Explanation:** Brown
  * **Solution:** Pink
  * **Composite:** Olive/Yellow-Green
  * **Unredacted:** Gray

### Detailed Analysis

Data is presented as clusters of nine bars (one per Agent) for each Exam. Values are approximate based on visual alignment with the y-axis.

**1. AQUA-RAT**

* **Trend:** General upward trend from Baseline to Unredacted, with a slight dip for Instructions.

* **Approximate Values:** Baseline ~0.79, Retry ~0.83, Keywords ~0.85, Advice ~0.87, Instructions ~0.86, Explanation ~0.86, Solution ~0.88, Composite ~0.87, Unredacted ~0.91.

**2. LogiQA**

* **Trend:** Steady, consistent increase from Baseline to Unredacted.

* **Approximate Values:** Baseline ~0.62, Retry ~0.68, Keywords ~0.69, Advice ~0.71, Instructions ~0.71, Explanation ~0.76, Solution ~0.87, Composite ~0.88, Unredacted ~0.95.

**3. LSAT-AR**

* **Trend:** Significant overall increase. Baseline is notably low. A large jump occurs between Explanation and Solution (~0.53 to ~0.76), and Composite (~0.72) dips slightly below Solution.

* **Approximate Values:** Baseline ~0.33, Retry ~0.45, Keywords ~0.45, Advice ~0.45, Instructions ~0.48, Explanation ~0.53, Solution ~0.76, Composite ~0.72, Unredacted ~0.92.

**4. LSAT-LR**

* **Trend:** Gradual, consistent increase across all agents.

* **Approximate Values:** Baseline ~0.83, Retry ~0.84, Keywords ~0.85, Advice ~0.85, Instructions ~0.86, Explanation ~0.91, Solution ~0.94, Composite ~0.99, Unredacted ~0.99.

**5. LSAT-RC**

* **Trend:** Steady increase, with all agents performing above 0.8.

* **Approximate Values:** Baseline ~0.85, Retry ~0.86, Keywords ~0.88, Advice ~0.88, Instructions ~0.88, Explanation ~0.93, Solution ~0.96, Composite ~0.97, Unredacted ~0.98.

**6. SAT-English**

* **Trend:** High baseline performance with a slight, steady increase. All values are above 0.9.

* **Approximate Values:** Baseline ~0.93, Retry ~0.95, Keywords ~0.94, Advice ~0.94, Instructions ~0.95, Explanation ~0.97, Solution ~0.98, Composite ~0.99, Unredacted ~0.99.

**7. SAT-Math**

* **Trend:** Very high performance across the board. A slight dip for Instructions relative to adjacent bars.

* **Approximate Values:** Baseline ~0.90, Retry ~0.99, Keywords ~0.97, Advice ~0.97, Instructions ~0.96, Explanation ~0.98, Solution ~0.99, Composite ~0.99, Unredacted ~0.99.

**8. ARC Challenge**

* **Trend:** Extremely high and consistent performance. All bars are near or at 1.0.

* **Approximate Values:** Baseline ~0.95, Retry ~0.96, Keywords ~0.97, Advice ~0.98, Instructions ~0.98, Explanation ~0.99, Solution ~1.00, Composite ~1.00, Unredacted ~1.00.

**9. Hellaswag**

* **Trend:** High baseline with a gradual increase. Composite and Unredacted are near perfect.

* **Approximate Values:** Baseline ~0.89, Retry ~0.92, Keywords ~0.91, Advice ~0.92, Instructions ~0.93, Explanation ~0.93, Solution ~0.96, Composite ~0.99, Unredacted ~0.99.

**10. MedMCQA**

* **Trend:** Clear, strong upward trend from Baseline to Unredacted.

* **Approximate Values:** Baseline ~0.77, Retry ~0.79, Keywords ~0.80, Advice ~0.84, Instructions ~0.88, Explanation ~0.91, Solution ~0.94, Composite ~0.95, Unredacted ~0.99.

### Key Observations

1. **Agent Performance Hierarchy:** Across nearly all exams, the "Unredacted" agent (gray bar) achieves the highest or tied-for-highest accuracy. "Composite" (olive) is second-best or tied for the top on most exams, although "Solution" (pink) edges it out on AQUA-RAT and LSAT-AR. "Baseline" (blue) is almost always the lowest.

2. **Exam Difficulty Spectrum:** The exams show a wide range of baseline difficulty for GPT-4. LSAT-AR appears the hardest (baseline ~0.33), while ARC Challenge appears the easiest (baseline ~0.95).

3. **Impact of Prompting:** The improvement from "Baseline" to "Unredacted" is dramatic on harder exams (e.g., LSAT-AR: +~0.59) but marginal on easier ones (e.g., ARC Challenge: +~0.05).

4. **Non-Linear Gains:** Performance does not always improve linearly with each agent. Significant jumps often occur at "Explanation", "Solution", or "Composite" stages, suggesting these prompting strategies are particularly effective.

5. **SAT-Math Anomaly:** The "Retry" agent (orange) shows a very high accuracy (~0.99) on SAT-Math, nearly matching the top performers, which is unusual compared to its performance on other exams.
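The Baseline-to-Unredacted gains quoted in observation 3 are simple differences of the approximate readings. A minimal sketch of that arithmetic follows, assuming the (Baseline, Unredacted) pairs listed under Detailed Analysis; the names `READINGS`, `gains`, and `ranked` are illustrative only.

```python
# (Baseline, Unredacted) approximate readings per exam, from the list above.
READINGS = {
    "AQUA-RAT":      (0.79, 0.91),
    "LogiQA":        (0.62, 0.95),
    "LSAT-AR":       (0.33, 0.92),
    "LSAT-LR":       (0.83, 0.99),
    "LSAT-RC":       (0.85, 0.98),
    "SAT-English":   (0.93, 0.99),
    "SAT-Math":      (0.90, 0.99),
    "ARC Challenge": (0.95, 1.00),
    "Hellaswag":     (0.89, 0.99),
    "MedMCQA":       (0.77, 0.99),
}

# Absolute gain in accuracy, rounded to the two decimals the readings support.
gains = {
    exam: round(unredacted - baseline, 2)
    for exam, (baseline, unredacted) in READINGS.items()
}

# Exams ordered from largest to smallest gain.
ranked = sorted(gains, key=gains.get, reverse=True)

for exam in ranked:
    baseline, unredacted = READINGS[exam]
    print(f"{exam:<14} {baseline:.2f} -> {unredacted:.2f}  gain=+{gains[exam]:.2f}")
```

The ordering makes the ceiling effect visible: exams with a high Baseline have little room left to gain, so their differences are small regardless of the agent used.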

### Interpretation

This chart demonstrates the significant impact of advanced prompting strategies on GPT-4's reasoning and knowledge-based performance. The data suggests that simply using the base model ("Baseline") leaves substantial capability untapped, especially on complex, structured reasoning tasks like the LSAT Analytical Reasoning section.

The consistent superiority of "Unredacted" and "Composite" agents implies that providing the model with more context, explicit instructions, and worked examples ("Solution") synergistically improves its accuracy. The diminishing returns on easier benchmarks (like ARC Challenge) indicate a performance ceiling where prompting strategies matter less because the task is well within the model's base capabilities.

The outlier on SAT-Math, where "Retry" performs exceptionally well, might indicate that for certain types of mathematical problems, a simple retry mechanism is highly effective, possibly by allowing the model to catch and correct computational or simple logical errors on a second attempt.

Overall, the chart is a strong argument for the importance of prompt engineering and agentic workflows in maximizing the utility of large language models, transforming them from capable base models into highly reliable problem-solving tools across diverse domains.

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Bar Chart: Accuracy by Exam and Agent for GPT-4

### Overview

The chart compares the accuracy of different agents (Baseline, Retry, Keywords, Advice, Instructions, Explanation, Solution, Composite, Unredacted) across 10 exams (AQUA-RAT, LogiQA, LSAT-AR, LSAT-LR, LSAT-RC, SAT-English, SAT-Math, ARC Challenge, Hellaswag, MedMCQA). Accuracy values range from 0.0 to 1.0 on the y-axis, with exams listed on the x-axis.

### Components/Axes

- **X-axis (Exams)**: AQUA-RAT, LogiQA, LSAT-AR, LSAT-LR, LSAT-RC, SAT-English, SAT-Math, ARC Challenge, Hellaswag, MedMCQA.

- **Y-axis (Accuracy)**: 0.0 to 1.0 in increments of 0.2.

- **Legend (Agents)**:
  - Baseline (blue)
  - Retry (orange)
  - Keywords (green)
  - Advice (red)
  - Instructions (purple)
  - Explanation (brown)
  - Solution (pink)
  - Composite (yellow)
  - Unredacted (gray)

### Detailed Analysis

- **AQUA-RAT**:
  - Baseline (blue): ~0.80
  - Retry (orange): ~0.83
  - Keywords (green): ~0.85
  - Advice (red): ~0.87
  - Instructions (purple): ~0.86
  - Explanation (brown): ~0.88
  - Solution (pink): ~0.89
  - Composite (yellow): ~0.87
  - Unredacted (gray): ~0.92

- **LogiQA**:
  - Baseline (blue): ~0.62
  - Retry (orange): ~0.68
  - Keywords (green): ~0.69
  - Advice (red): ~0.71
  - Instructions (purple): ~0.70
  - Explanation (brown): ~0.76
  - Solution (pink): ~0.87
  - Composite (yellow): ~0.88
  - Unredacted (gray): ~0.95

- **LSAT-AR**:
  - Baseline (blue): ~0.35
  - Retry (orange): ~0.45
  - Keywords (green): ~0.45
  - Advice (red): ~0.45
  - Instructions (purple): ~0.48
  - Explanation (brown): ~0.52
  - Solution (pink): ~0.75
  - Composite (yellow): ~0.72
  - Unredacted (gray): ~0.92

- **LSAT-LR**:
  - Baseline (blue): ~0.83
  - Retry (orange): ~0.84
  - Keywords (green): ~0.85
  - Advice (red): ~0.86
  - Instructions (purple): ~0.87
  - Explanation (brown): ~0.90
  - Solution (pink): ~0.92
  - Composite (yellow): ~0.99
  - Unredacted (gray): ~0.99

- **LSAT-RC**:
  - Baseline (blue): ~0.85
  - Retry (orange): ~0.87
  - Keywords (green): ~0.88
  - Advice (red): ~0.89
  - Instructions (purple): ~0.90
  - Explanation (brown): ~0.93
  - Solution (pink): ~0.95
  - Composite (yellow): ~0.96
  - Unredacted (gray): ~0.98

- **SAT-English**:
  - Baseline (blue): ~0.91
  - Retry (orange): ~0.92
  - Keywords (green): ~0.92
  - Advice (red): ~0.91
  - Instructions (purple): ~0.93
  - Explanation (brown): ~0.94
  - Solution (pink): ~0.96
  - Composite (yellow): ~0.97
  - Unredacted (gray): ~0.99

- **SAT-Math**:
  - Baseline (blue): ~0.88
  - Retry (orange): ~0.99
  - Keywords (green): ~0.97
  - Advice (red): ~0.97
  - Instructions (purple): ~0.96
  - Explanation (brown): ~0.97
  - Solution (pink): ~0.98
  - Composite (yellow): ~0.99
  - Unredacted (gray): ~0.99

- **ARC Challenge**:
  - Baseline (blue): ~0.94
  - Retry (orange): ~0.95
  - Keywords (green): ~0.96
  - Advice (red): ~0.97
  - Instructions (purple): ~0.97
  - Explanation (brown): ~0.98
  - Solution (pink): ~0.99
  - Composite (yellow): ~0.98
  - Unredacted (gray): ~0.99

- **Hellaswag**:
  - Baseline (blue): ~0.90
  - Retry (orange): ~0.92
  - Keywords (green): ~0.91
  - Advice (red): ~0.93
  - Instructions (purple): ~0.94
  - Explanation (brown): ~0.95
  - Solution (pink): ~0.97
  - Composite (yellow): ~0.98
  - Unredacted (gray): ~0.99

- **MedMCQA**:
  - Baseline (blue): ~0.78
  - Retry (orange): ~0.80
  - Keywords (green): ~0.82
  - Advice (red): ~0.85
  - Instructions (purple): ~0.87
  - Explanation (brown): ~0.90
  - Solution (pink): ~0.92
  - Composite (yellow): ~0.94
  - Unredacted (gray): ~0.97

### Key Observations

1. **Unredacted (gray)** and **Composite (yellow)** agents consistently achieve the highest accuracy across most exams, often reaching ~0.95–0.99.

2. **Baseline (blue)** performs poorly in **LSAT-AR** (~0.35) but improves to ~0.94 in **ARC Challenge**.

3. **Retry (orange)** and **Keywords (green)** show moderate performance and track each other closely; in **LogiQA**, **Keywords** is marginally ahead (~0.69 vs. ~0.68).

4. **Instructions (purple)** and **Explanation (brown)** improve on **Baseline** in **LSAT-AR** (~0.48 and ~0.52 vs. ~0.35) but remain far below **Solution**, **Composite**, and **Unredacted** (~0.72 to ~0.92).

5. **SAT-Math** and **ARC Challenge** have the highest overall accuracy, with most agents exceeding 0.95.
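The claim that most agents exceed 0.95 on the top exams can be tallied from the approximate readings. This is a minimal sketch, assuming the values in the Detailed Analysis list above; `ACCURACY`, `THRESHOLD`, `count_above`, and `mean` are illustrative names, not part of the chart.

```python
# Approximate readings per exam, in legend order:
# Baseline, Retry, Keywords, Advice, Instructions,
# Explanation, Solution, Composite, Unredacted.
ACCURACY = {
    "AQUA-RAT":      [0.80, 0.83, 0.85, 0.87, 0.86, 0.88, 0.89, 0.87, 0.92],
    "LogiQA":        [0.62, 0.68, 0.69, 0.71, 0.70, 0.76, 0.87, 0.88, 0.95],
    "LSAT-AR":       [0.35, 0.45, 0.45, 0.45, 0.48, 0.52, 0.75, 0.72, 0.92],
    "LSAT-LR":       [0.83, 0.84, 0.85, 0.86, 0.87, 0.90, 0.92, 0.99, 0.99],
    "LSAT-RC":       [0.85, 0.87, 0.88, 0.89, 0.90, 0.93, 0.95, 0.96, 0.98],
    "SAT-English":   [0.91, 0.92, 0.92, 0.91, 0.93, 0.94, 0.96, 0.97, 0.99],
    "SAT-Math":      [0.88, 0.99, 0.97, 0.97, 0.96, 0.97, 0.98, 0.99, 0.99],
    "ARC Challenge": [0.94, 0.95, 0.96, 0.97, 0.97, 0.98, 0.99, 0.98, 0.99],
    "Hellaswag":     [0.90, 0.92, 0.91, 0.93, 0.94, 0.95, 0.97, 0.98, 0.99],
    "MedMCQA":       [0.78, 0.80, 0.82, 0.85, 0.87, 0.90, 0.92, 0.94, 0.97],
}

THRESHOLD = 0.95  # strict cut-off: a reading of exactly 0.95 does not count


def count_above(values, threshold=THRESHOLD):
    """Number of agents whose reading is strictly above the threshold."""
    return sum(1 for v in values if v > threshold)


def mean(values):
    """Unweighted mean accuracy across the nine agents."""
    return sum(values) / len(values)


counts = {exam: count_above(values) for exam, values in ACCURACY.items()}
by_mean = sorted(ACCURACY, key=lambda exam: mean(ACCURACY[exam]), reverse=True)

for exam in by_mean:
    print(f"{exam:<14} mean={mean(ACCURACY[exam]):.3f} "
          f"above {THRESHOLD}: {counts[exam]}/{len(ACCURACY[exam])}")
```

On these readings, eight of nine agents exceed 0.95 on SAT-Math and seven of nine on ARC Challenge, and those two exams also have the highest mean accuracy. The threshold is strict, so values read as exactly ~0.95 are sensitive to small estimation errors.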

### Interpretation

The data suggests that **Unredacted** and **Composite** agents are the most robust, possibly because they synthesize more information or avoid redaction errors. **Baseline** struggles in **LSAT-AR**, indicating potential limitations in handling specific question types. **Retry** and **Keywords** perform variably: **Retry** is near the top in **SAT-Math** (~0.99) but adds only a modest gain over **Baseline** in **LogiQA** (~0.68 vs. ~0.62). The **Solution** and **Explanation** agents show promise in reasoning-heavy exams like **LSAT-RC** and **SAT-Math**, suggesting their effectiveness in structured problem-solving. The **Hellaswag** and **MedMCQA** exams highlight the importance of contextual understanding, as top agents achieve near-perfect accuracy here. Overall, agent design significantly impacts performance, with specialized agents outperforming generic ones in domain-specific tasks.
