## Bar Chart: Accuracy Comparison of Qwen2.5-7B-Instruct vs. GPT-4o Across Benchmarks

### Overview

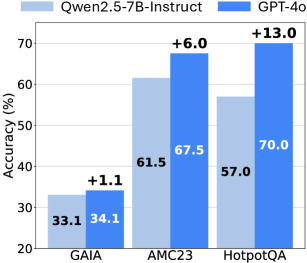

This image displays a bar chart comparing the "Accuracy (%)" of two language models, "Qwen2.5-7B-Instruct" and "GPT-4o", across three different benchmarks: "GAIA", "AMC23", and "HotpotQA". The chart uses grouped bars to show the performance of each model on each benchmark, with numerical labels indicating precise accuracy percentages and the performance difference of GPT-4o over Qwen2.5-7B-Instruct.

### Components/Axes

* **Chart Type**: Grouped Bar Chart.

* **Legend**: Positioned at the top-center of the chart.

    * Light blue bar color represents: "Qwen2.5-7B-Instruct"

    * Dark blue bar color represents: "GPT-4o"

* **Y-axis (Left)**:

    * Title: "Accuracy (%)"

    * Scale: Ranges from 20 to 70, with major grid lines at 10-unit intervals (20, 30, 40, 50, 60, 70).

* **X-axis (Bottom)**:

    * Categories (from left to right): "GAIA", "AMC23", "HotpotQA".

* **Data Labels**: Numerical values are displayed directly on top of each bar, indicating the exact accuracy percentage.

* **Difference Labels**: Numerical values prefixed with a "+" sign are displayed above the dark blue (GPT-4o) bars, indicating the absolute difference in accuracy between GPT-4o and Qwen2.5-7B-Instruct for that specific benchmark.
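The layout described above can be approximated with a short plotting sketch. It assumes matplotlib 3.4 or later (for `Axes.bar_label`); the bar width, colour names, label offsets, y-axis headroom, and output file name are guesses for illustration, not properties read from the original figure.

```python
# Values and label text transcribed from the chart description.
BENCHMARKS = ["GAIA", "AMC23", "HotpotQA"]
ACCURACY = {
    "Qwen2.5-7B-Instruct": [33.1, 61.5, 57.0],
    "GPT-4o": [34.1, 67.5, 70.0],
}
DIFF_LABELS = ["+1.1", "+6.0", "+13.0"]  # printed above the GPT-4o bars
COLORS = ["lightblue", "darkblue"]       # assumed shades
BAR_WIDTH = 0.35                         # assumed bar width


def bar_positions(n_groups, n_series, width=BAR_WIDTH):
    """Return one list of x positions per series, centred on 0..n_groups-1."""
    offsets = [(i - (n_series - 1) / 2) * width for i in range(n_series)]
    return [[group + offset for group in range(n_groups)] for offset in offsets]


def draw_chart(path="accuracy_comparison.png"):
    """Draw the grouped bar chart and save it to `path` (hypothetical name)."""
    # Imported here so bar_positions() stays usable without matplotlib.
    import matplotlib.pyplot as plt

    fig, ax = plt.subplots()
    positions = bar_positions(len(BENCHMARKS), len(ACCURACY))
    for (model, values), xs, color in zip(ACCURACY.items(), positions, COLORS):
        bars = ax.bar(xs, values, width=BAR_WIDTH, label=model, color=color)
        ax.bar_label(bars, fmt="%.1f")  # accuracy value on top of each bar

    # "+x.x" labels above the GPT-4o bars; the vertical offset is a guess.
    for x, value, text in zip(positions[1], ACCURACY["GPT-4o"], DIFF_LABELS):
        ax.text(x, value + 3, text, ha="center")

    ax.set_xticks(range(len(BENCHMARKS)))
    ax.set_xticklabels(BENCHMARKS)
    ax.set_ylabel("Accuracy (%)")
    ax.set_ylim(20, 78)               # headroom above 70 for labels (assumed)
    ax.set_yticks(range(20, 71, 10))  # major ticks at 20, 30, ..., 70
    ax.grid(axis="y")
    ax.legend(loc="upper center")
    fig.savefig(path)
```

Calling `draw_chart()` writes the figure to disk. Because the y-axis starts at 20 rather than 0, bar heights in such a chart overstate the relative differences between the models.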

### Detailed Analysis

The chart presents three groups of bars, each representing a benchmark, with two bars per group comparing the two models.

1. **GAIA Benchmark**:

    * The light blue bar (Qwen2.5-7B-Instruct) shows an accuracy of **33.1%**.

    * The dark blue bar (GPT-4o) shows an accuracy of **34.1%**.

    * The difference label above the GPT-4o bar is **+1.1**, indicating GPT-4o scored about 1.1 percentage points higher than Qwen2.5-7B-Instruct. The two bar labels differ by exactly 1.0, so the 0.1 discrepancy may come from rounding of the underlying values or from a transcription error.

    * Trend: GPT-4o holds a slight lead over Qwen2.5-7B-Instruct on GAIA.

2. **AMC23 Benchmark**:

    * The light blue bar (Qwen2.5-7B-Instruct) shows an accuracy of **61.5%**.

    * The dark blue bar (GPT-4o) shows an accuracy of **67.5%**.

    * The difference label above the GPT-4o bar is **+6.0**, indicating GPT-4o scored 6.0 percentage points higher than Qwen2.5-7B-Instruct.

    * Trend: GPT-4o holds a noticeable lead over Qwen2.5-7B-Instruct on AMC23.

3. **HotpotQA Benchmark**:

    * The light blue bar (Qwen2.5-7B-Instruct) shows an accuracy of **57.0%**.

    * The dark blue bar (GPT-4o) shows an accuracy of **70.0%**.

    * The difference label above the GPT-4o bar is **+13.0**, indicating GPT-4o scored 13.0 percentage points higher than Qwen2.5-7B-Instruct.

    * Trend: GPT-4o holds a substantial lead over Qwen2.5-7B-Instruct on HotpotQA, the largest gap among the three benchmarks.
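The printed difference labels can be checked against the bar values with simple subtraction. The sketch below uses `decimal.Decimal` so that one-decimal values subtract exactly; the `CHART` dictionary and `check_labels` helper are illustrative names, and the numbers are copied from the description rather than read from the image.

```python
from decimal import Decimal

# (Qwen2.5-7B-Instruct accuracy, GPT-4o accuracy, printed difference label),
# kept as strings exactly as transcribed from the chart description.
CHART = {
    "GAIA": ("33.1", "34.1", "+1.1"),
    "AMC23": ("61.5", "67.5", "+6.0"),
    "HotpotQA": ("57.0", "70.0", "+13.0"),
}


def check_labels(chart):
    """Return {benchmark: (computed_gap, printed_gap, labels_agree)}."""
    report = {}
    for name, (qwen, gpt4o, label) in chart.items():
        computed = Decimal(gpt4o) - Decimal(qwen)
        printed = Decimal(label)
        report[name] = (computed, printed, computed == printed)
    return report


for name, (computed, printed, agree) in check_labels(CHART).items():
    status = "ok" if agree else "mismatch"
    print(f"{name}: computed +{computed}, printed +{printed} ({status})")
```

With these inputs the AMC23 and HotpotQA labels agree with the bar values, while GAIA is flagged: the bars differ by 1.0 but the label reads +1.1.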

### Key Observations

* GPT-4o consistently outperforms Qwen2.5-7B-Instruct across all three benchmarks presented.

* The performance gap between GPT-4o and Qwen2.5-7B-Instruct varies widely across benchmarks, from +1.1 percentage points on GAIA to +13.0 percentage points on HotpotQA.

* The two models peak on different benchmarks: GPT-4o achieves its highest accuracy on HotpotQA (70.0%), while Qwen2.5-7B-Instruct achieves its highest on AMC23 (61.5%).

* The lowest accuracy for both models is observed on the GAIA benchmark.
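These observations follow mechanically from the six bar values. As a minimal sketch (the `summarize` and `leads_everywhere` helpers are illustrative, and the numbers are transcribed from the description):

```python
# Accuracy (%) per model and benchmark, transcribed from the chart description.
ACCURACY = {
    "Qwen2.5-7B-Instruct": {"GAIA": 33.1, "AMC23": 61.5, "HotpotQA": 57.0},
    "GPT-4o": {"GAIA": 34.1, "AMC23": 67.5, "HotpotQA": 70.0},
}


def summarize(accuracy):
    """Return each model's best and worst benchmark by accuracy."""
    summary = {}
    for model, scores in accuracy.items():
        summary[model] = {
            "best": max(scores, key=scores.get),
            "worst": min(scores, key=scores.get),
        }
    return summary


def leads_everywhere(accuracy, leader, other):
    """True if `leader` scores strictly higher than `other` on every benchmark."""
    return all(
        accuracy[leader][name] > accuracy[other][name]
        for name in accuracy[leader]
    )


for model, result in summarize(ACCURACY).items():
    print(f"{model}: best on {result['best']}, worst on {result['worst']}")
print("GPT-4o leads on all benchmarks:",
      leads_everywhere(ACCURACY, "GPT-4o", "Qwen2.5-7B-Instruct"))
```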

### Interpretation

On all three benchmarks shown, GPT-4o is more accurate than Qwen2.5-7B-Instruct. No error bars or sample sizes are described, so the chart alone does not show how reliable each margin is; the small GAIA difference in particular should be read with caution.

The varying size of the gap is the most informative feature of the chart. On GAIA the two models are nearly tied, which suggests Qwen2.5-7B-Instruct can be competitive on some task types. On AMC23, and especially on HotpotQA, GPT-4o is clearly ahead. HotpotQA is a multi-hop question answering dataset, so the large gap there may reflect stronger multi-step reasoning or information synthesis in GPT-4o, although the chart by itself cannot establish the cause.

Overall, the data shows GPT-4o as the more accurate model on these three tasks. The size of its lead does not simply track difficulty as measured by raw accuracy: the gap is smallest on GAIA, where both models score lowest, and largest on HotpotQA. Developers or researchers choosing between the models should therefore look at the gap on the benchmark closest to their application rather than at a single overall ranking.
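Whether the gap grows with benchmark difficulty can be checked against the chart's own numbers. The sketch below uses the mean accuracy of the two models as a crude proxy for difficulty (lower mean means harder); that proxy is an assumption made here for illustration and is not something the chart defines.

```python
# (Qwen2.5-7B-Instruct accuracy, GPT-4o accuracy) per benchmark, in percent,
# transcribed from the chart description.
ACCURACY = {
    "GAIA": (33.1, 34.1),
    "AMC23": (61.5, 67.5),
    "HotpotQA": (57.0, 70.0),
}


def mean_accuracy(qwen, gpt4o):
    """Crude difficulty proxy (an assumption): lower mean accuracy = harder."""
    return (qwen + gpt4o) / 2


def gap(qwen, gpt4o):
    """GPT-4o lead over Qwen2.5-7B-Instruct, in percentage points."""
    return gpt4o - qwen


def rank_by(metric, data):
    """Benchmark names sorted by ascending value of `metric`."""
    return sorted(data, key=lambda name: metric(*data[name]))


hardest_first = rank_by(mean_accuracy, ACCURACY)
smallest_gap_first = rank_by(gap, ACCURACY)

print("Hardest to easiest (by mean accuracy):", hardest_first)
print("Smallest to largest gap:", smallest_gap_first)
```

Under this proxy GAIA is both the hardest benchmark and the one with the smallest gap, so with only three data points the chart does not support a simple "harder benchmark, larger gap" pattern.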