## Screenshot: Simulation Environment with UI Overlay

### Overview

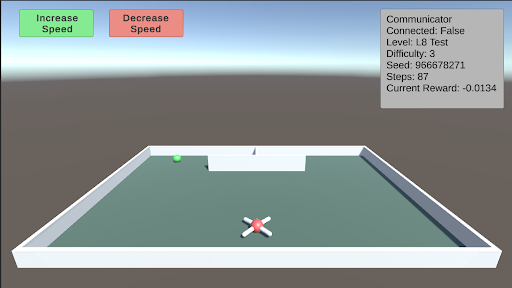

The image is a screenshot of a 3D simulation environment, likely for testing an autonomous agent or drone. It features a walled arena containing a drone, a target object, and overlaid user interface (UI) elements displaying controls and simulation state data. The scene is rendered with simple, low-polygon graphics.

### Components/Axes

The image can be segmented into two primary regions: the **3D Simulation View** (main area) and the **UI Overlay** (top portion).

**1. UI Overlay (Top of Screen):**

* **Top-Left Corner:** Two rectangular buttons.

  * **Left Button:** Green background, white text: "Increase Speed".

  * **Right Button:** Red background, white text: "Decrease Speed".

* **Top-Right Corner:** A semi-transparent gray information panel with white text, aligned to the right edge. The text reads:

  * `Communicator Connected: False`

  * `Level: L8 Test`

  * `Difficulty: 3`

  * `Seed: 866678271`

  * `Steps: 0`

  * `Current Reward: -0.0134`
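For downstream logging or comparison across runs, the panel text transcribed above could be parsed into a typed record. The following is a minimal sketch, assuming the panel always uses `Key: Value` lines with exactly these six keys; `PanelState` and `parse_panel` are hypothetical names introduced for illustration, not part of the simulator.

```python
from dataclasses import dataclass

# Panel text as transcribed from the screenshot.
PANEL_TEXT = """\
Communicator Connected: False
Level: L8 Test
Difficulty: 3
Seed: 866678271
Steps: 0
Current Reward: -0.0134
"""


@dataclass
class PanelState:
    """Hypothetical typed view of the info panel (not a simulator type)."""
    communicator_connected: bool
    level: str
    difficulty: int
    seed: int
    steps: int
    current_reward: float


def parse_panel(text: str) -> PanelState:
    """Split each 'Key: Value' line at the first colon and convert the values."""
    fields = {}
    for line in text.splitlines():
        if ":" not in line:
            continue  # skip blank or malformed lines
        key, _, value = line.partition(":")
        fields[key.strip()] = value.strip()
    return PanelState(
        communicator_connected=fields["Communicator Connected"] == "True",
        level=fields["Level"],
        difficulty=int(fields["Difficulty"]),
        seed=int(fields["Seed"]),
        steps=int(fields["Steps"]),
        current_reward=float(fields["Current Reward"]),
    )


state = parse_panel(PANEL_TEXT)
```

A missing key raises `KeyError` in this sketch, which is a deliberate choice: a changed panel layout fails loudly instead of producing a partially filled record.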

**2. 3D Simulation View (Main Area):**

* **Environment:** A rectangular arena enclosed by low, white walls. The floor is a flat, dark teal/green color. The background shows a simple skybox with a gradient from light blue at the top to a hazy gray at the horizon.

* **Primary Agent:** A white, quadcopter-style drone with red "X" markings on its top surface. It is positioned in the foreground, slightly below the center of the view.

* **Target/Goal Object:** A small, bright green sphere located near the back wall, to the left of the center.

* **Background Structure:** A simple, white, rectangular block or wall segment positioned against the back wall, slightly right of center. A small, dark vertical line (possibly a pole or marker) is visible on top of it.

### Detailed Analysis

* **Simulation State:** The simulation is at its initial state (`Steps: 0`). The panel already shows a small negative reward (`-0.0134`) before any step has been counted, which could be an initial penalty or a baseline cost.

* **Configuration:** The test is running on a specific level (`L8 Test`) with a set difficulty (`3`) and a fixed seed (`866678271`), which should make runs reproducible as long as the simulator is otherwise deterministic.

* **Connectivity:** A key system component, the "Communicator," is reported as not connected (`False`).

* **Spatial Layout:** The drone sits in the foreground, slightly below the center of the view. The green sphere (likely a target) is near the back wall, left of center. The white block stands against the back wall, slightly right of center. The UI elements are non-diegetic, floating over the scene.

### Key Observations

1. **Initial Condition:** The simulation has just begun (`Steps: 0`), and the agent is already in a slightly negative reward state.

2. **Disconnected State:** The "Communicator Connected: False" status is a critical piece of information, suggesting a potential issue with external control, data logging, or multi-agent communication.

3. **Visual Cues:** The green sphere is a strong visual candidate for a target or goal location due to its distinct color and placement. The drone's design is simple, prioritizing function over visual detail.

4. **UI Design:** The control buttons follow a common color convention (green for increase/positive, red for decrease/negative). The info panel uses a monospace font, which keeps the numerical data easy to read.

### Interpretation

This screenshot captures the setup phase of a reinforcement learning or robotics simulation. The environment appears designed to test an agent's ability to navigate within a bounded space, likely to reach the green sphere target. If the negative reward reflects a per-step time penalty, that is a common technique for encouraging the agent to complete the task quickly and so minimize the cumulative penalty.
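The time-pressure idea can be illustrated with a small calculation. This is a sketch under stated assumptions: the simulator's actual reward function is not visible in the screenshot, and `goal_reward`, `step_penalty`, and `episode_return` are hypothetical names and values chosen only to show why a per-step penalty favors shorter episodes.

```python
def episode_return(
    steps_to_goal: int,
    goal_reward: float = 1.0,      # assumed reward for reaching the target
    step_penalty: float = -0.001,  # assumed small cost charged on every step
) -> float:
    """Total return for an episode that reaches the goal after `steps_to_goal` steps."""
    return goal_reward + step_penalty * steps_to_goal


fast = episode_return(100)  # agent reaches the target quickly
slow = episode_return(500)  # agent wanders before reaching the target
```

With these assumed values the faster episode earns the higher return, so an agent maximizing return is pushed toward shorter paths.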

The "Communicator" status being false is the most significant anomaly. In a typical setup, this might mean the agent is running a pre-trained policy autonomously without a live connection to a training server or human operator. Alternatively, it could indicate a system error. The specific seed value should allow this scenario (the drone, target, and background structure in these positions) to be recreated for debugging or evaluation, provided the level generator draws all of its randomness from that seed.
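How a seed can pin down a layout is sketched below. This is not the simulator's generator: `sample_layout`, the arena size, and the object names are hypothetical, and the only point is that a generator seeded with the same value yields the same positions.

```python
import random


def sample_layout(seed: int, arena_half_size: float = 10.0) -> dict:
    """Place three objects at pseudo-random (x, z) positions derived from `seed`."""
    rng = random.Random(seed)  # private generator, unaffected by global random state

    def point() -> tuple:
        x = rng.uniform(-arena_half_size, arena_half_size)
        z = rng.uniform(-arena_half_size, arena_half_size)
        return (round(x, 3), round(z, 3))

    # Objects are sampled in a fixed order so the mapping from seed to layout is stable.
    return {"drone": point(), "target": point(), "block": point()}


SEED = 866678271  # seed value shown in the info panel
first = sample_layout(SEED)
second = sample_layout(SEED)
```

Here `first` and `second` are equal because both calls start from the same seed. Reproducibility in a real simulator also depends on factors such as physics determinism, which this sketch does not model.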

The presence of speed control buttons suggests this interface is for a human supervisor to adjust the simulation's real-time execution speed, which is useful for observing fast-paced agent behavior or debugging step-by-step. Overall, the image depicts a controlled, instrumented testbed for developing and evaluating autonomous navigation algorithms.