
## Diagram: Comparison of Benchmark Paradigms for AI Agents

### Overview

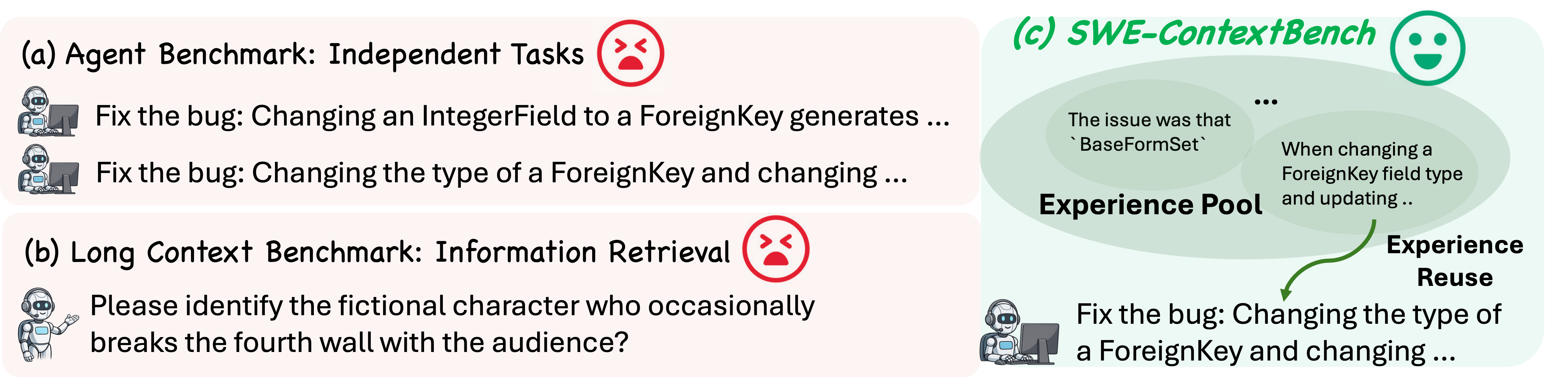

The image is a conceptual diagram comparing three different benchmarking approaches for evaluating AI agents. It is divided into three distinct panels, labeled (a), (b), and (c). Panels (a) and (b) are presented with a light pink background and are associated with negative outcomes (indicated by red, angry face icons). Panel (c) is presented with a light green background and is associated with a positive outcome (indicated by a green, happy face icon). The diagram illustrates a shift from isolated task evaluation to a context-aware, experience-reusing paradigm.

### Components/Axes

The diagram is segmented into three primary regions:

1. **Panel (a) - Top Left:**
   * **Title:** `(a) Agent Benchmark: Independent Tasks`
   * **Icon:** A red, angry face emoji (😡) is positioned to the right of the title.
   * **Content:** Two example tasks are listed, each preceded by a small icon of a robot sitting at a computer.
     * Task 1: `Fix the bug: Changing an IntegerField to a ForeignKey generates ...`
     * Task 2: `Fix the bug: Changing the type of a ForeignKey and changing ...`

2. **Panel (b) - Bottom Left:**
   * **Title:** `(b) Long Context Benchmark: Information Retrieval`
   * **Icon:** A red, angry face emoji (😡) is positioned to the right of the title.
   * **Content:** One example task is shown, preceded by a small icon of a robot gesturing.
     * Task: `Please identify the fictional character who occasionally breaks the fourth wall with the audience?`

3. **Panel (c) - Right Side:**
   * **Title:** `(c) SWE-ContextBench`
   * **Icon:** A green, happy face emoji (🙂) is positioned to the right of the title.
   * **Content:** This panel illustrates a process flow.
     * **Central Element:** A large, light-green oval labeled `Experience Pool`. Inside this oval, two text bubbles are shown:
       * Left bubble: `` The issue was that `BaseFormSet` ``
       * Right bubble: `When changing a ForeignKey field type and updating ..`
     * **Flow Arrow:** A green, curved arrow originates from the right text bubble within the Experience Pool and points downward to a task below.
       * **Label:** The arrow is labeled `Experience Reuse`.
     * **Target Task:** At the bottom of the panel, a robot icon at a computer is shown next to the task: `Fix the bug: Changing the type of a ForeignKey and changing ...`
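The Experience Pool and the `Experience Reuse` arrow can be read as a store of notes from earlier tasks plus a lookup that picks the note most relevant to a new task. The sketch below is a minimal, hypothetical illustration: the names `ExperiencePool`, `retrieve`, and `min_score` are invented for this example, and token-overlap (Jaccard) similarity is an assumption standing in for whatever retrieval SWE-ContextBench actually uses, which the diagram does not show. The pool is seeded with the two bubble texts from panel (c), truncated exactly as in the figure.

```python
import re
from dataclasses import dataclass, field
from typing import Optional


def tokens(text: str) -> set:
    """Lowercase alphanumeric word tokens (a crude stand-in for a real embedding)."""
    return set(re.findall(r"[a-z0-9_]+", text.lower()))


def jaccard(a: set, b: set) -> float:
    """Jaccard similarity |a & b| / |a | b|; 0.0 when both sets are empty."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


@dataclass
class ExperiencePool:
    """Hypothetical store of notes left behind by previously solved tasks."""
    notes: list = field(default_factory=list)

    def add(self, note: str) -> None:
        self.notes.append(note)

    def retrieve(self, task: str, min_score: float = 0.2) -> Optional[str]:
        """Return the stored note most similar to `task`, or None if none reaches min_score."""
        query = tokens(task)
        best_note, best_score = None, min_score
        for note in self.notes:
            score = jaccard(query, tokens(note))
            if score >= best_score:
                best_note, best_score = note, score
        return best_note


# Seed the pool with the two bubble texts shown in panel (c), truncated as in the figure.
pool = ExperiencePool()
pool.add("The issue was that `BaseFormSet`")
pool.add("When changing a ForeignKey field type and updating ..")

# The target task from panel (c) shares 5 of 12 distinct tokens with the ForeignKey note.
print(pool.retrieve("Fix the bug: Changing the type of a ForeignKey and changing ..."))
# When changing a ForeignKey field type and updating ..

# The retrieval question from panel (b) overlaps with neither note enough to qualify.
print(pool.retrieve("Please identify the fictional character who occasionally breaks "
                    "the fourth wall with the audience?"))
# None
```

Lexical overlap is used only to keep the sketch dependency-free; a real system would more plausibly compare embeddings, and the `min_score` threshold is an arbitrary illustrative value.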

### Detailed Analysis

* **Textual Content:** All text is in English. The ellipses (`...` in the task descriptions and `..` in the right bubble of the Experience Pool) indicate that the text is truncated.

* **Spatial Relationships:**
  * Panels (a) and (b) are stacked vertically on the left and share a visual style (pink background, angry icon) that groups them as "traditional" or "problematic" approaches.
  * Panel (c) occupies the entire right side; its contrasting green background and happy icon signify a proposed solution or improved method.
  * The `Experience Pool` is the central visual element in panel (c), suggesting it is the core resource.
  * The `Experience Reuse` arrow creates a direct visual link between past knowledge (in the pool) and a current task, illustrating the proposed mechanism.

* **Iconography:**
  * The robot icons differentiate the type of agent or task: a focused coder for bug-fixing tasks (panels a and c) and a gesturing presenter for an information retrieval task (panel b).
  * The angry vs. happy face icons are clear, non-textual indicators of the presumed effectiveness or desirability of each benchmarking paradigm.

### Key Observations

1. **Contrast in Evaluation:** The diagram explicitly contrasts benchmarks that evaluate agents on `Independent Tasks` (a) or pure `Information Retrieval` (b) with one (c) that evaluates them in context, where they can reuse prior experience.

2. **Shared Task Example:** The task `Fix the bug: Changing the type of a ForeignKey and changing ...` appears in both panel (a) and panel (c). This shared task links the two panels: the same problem is presented in isolation in (a) and with access to an experience pool in (c).

3. **From Isolation to Context:** Panel (a) shows tasks in isolation. Panel (c) shows a task connected to a repository of past experiences (`BaseFormSet` issue, previous ForeignKey changes), implying that solving the new task benefits from this context.

4. **Visual Sentiment:** The use of color (pink/red for negative, green for positive) and emojis creates an immediate, strong visual argument about the relative merits of the approaches.

### Interpretation

This diagram argues that traditional agent benchmarks are flawed because they test capabilities in a vacuum. The `Agent Benchmark` (a) presents isolated coding tasks without context, and the `Long Context Benchmark` (b) tests retrieval of discrete facts from a large context, which may not reflect real-world problem-solving.

The proposed `SWE-ContextBench` (c) is presented as a more ecologically valid evaluation. It posits that a software engineering (SWE) agent's practical capability is demonstrated less by solving a bug from scratch than by its ability to **reuse relevant past experiences** (stored in an "Experience Pool") when approaching a new, similar problem. The arrow labeled "Experience Reuse" captures the core thesis: effective agents should leverage historical context (e.g., knowledge about `BaseFormSet` or previous field-type changes) to solve current tasks more efficiently and accurately.

The diagram suggests that benchmarks failing to account for this contextual reuse (the angry-faced panels) provide an incomplete or misleading picture of an agent's practical utility, while benchmarks that incorporate it (the happy-faced panel) are better aligned with real-world software development workflows.
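The difference between the angry-faced and happy-faced paradigms can be framed as a difference in evaluation protocol. The harness below is a hypothetical sketch, not SWE-ContextBench's actual interface: `run_benchmark`, the `solve` callback, and the two mode strings are illustrative names, and the task order (reusing the two panel (a) tasks) is assumed for demonstration. In "independent" mode every task starts from an empty history, as in panel (a); in "context" mode each task can see the experiences produced by tasks that finished before it, as in panel (c).

```python
from typing import Callable, List, Literal, Tuple

Mode = Literal["independent", "context"]


def run_benchmark(
    tasks: List[str],
    solve: Callable[[str, List[str]], str],
    mode: Mode,
) -> List[Tuple[str, int, str]]:
    """Run tasks in order and log (task, number of visible experiences, new experience).

    `solve` is a hypothetical agent callback: it receives the task and the
    experiences it is allowed to see, and returns a note describing its fix.
    """
    if mode not in ("independent", "context"):
        raise ValueError(f"unknown mode: {mode!r}")
    pool: List[str] = []  # experiences from tasks that have already finished
    log: List[Tuple[str, int, str]] = []
    for task in tasks:
        # Independent mode: every task is evaluated in isolation, with no history.
        visible = list(pool) if mode == "context" else []
        experience = solve(task, visible)
        log.append((task, len(visible), experience))
        # Added only after the task ends, so only later tasks can reuse it.
        pool.append(experience)
    return log


def toy_solve(task: str, context: List[str]) -> str:
    """Toy agent that ignores its context and just records which task it handled."""
    return f"note from: {task}"


tasks = [
    "Fix the bug: Changing an IntegerField to a ForeignKey generates ...",
    "Fix the bug: Changing the type of a ForeignKey and changing ...",
]
for mode in ("independent", "context"):
    print(mode, [seen for _, seen, _ in run_benchmark(tasks, toy_solve, mode)])
# independent [0, 0]
# context [0, 1]
```

Appending each experience only after its task completes keeps the protocol free of leakage: no task can see a note produced by itself or by a later task.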