## Diagram: SWE-ContextBench Overview

### Overview

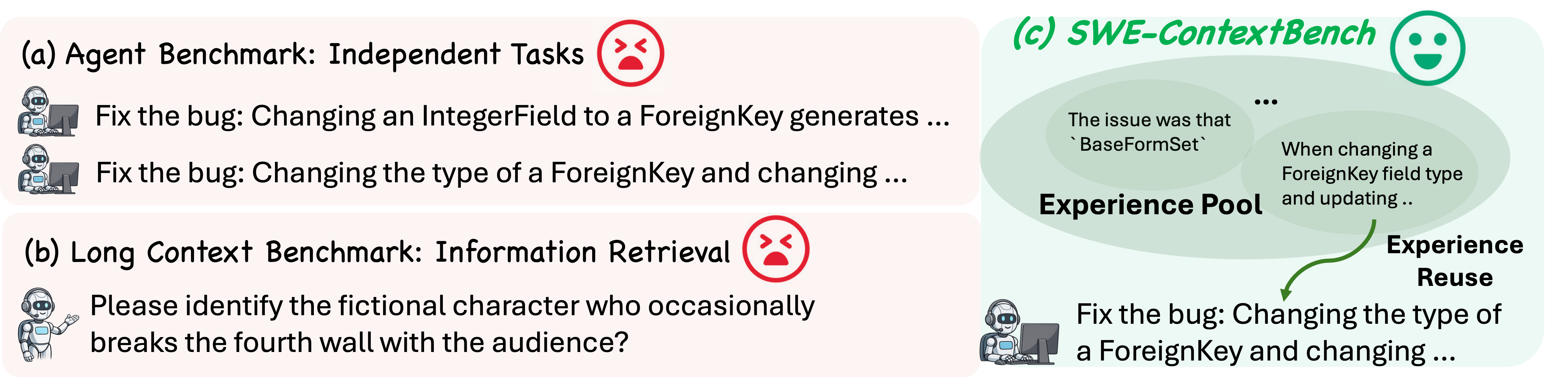

The image presents a diagram comparing different benchmark types for software engineering tasks. It highlights the challenges and potential solutions in agent benchmarks, long context benchmarks, and SWE-ContextBench. The diagram uses visual cues like robot avatars, red "sad face" icons, and a green "happy face" icon to indicate the success or failure of each benchmark type.

### Components/Axes

* **(a) Agent Benchmark: Independent Tasks**: This section describes a benchmark focused on independent tasks for agents.

* **(b) Long Context Benchmark: Information Retrieval**: This section describes a benchmark focused on information retrieval in a long context.

* **(c) SWE-ContextBench**: This section describes the SWE-ContextBench approach.

* **Robot Avatars**: Each benchmark type is associated with a robot avatar, possibly representing an automated agent.

* **Red "Sad Face" Icon**: Located next to the titles of the Agent Benchmark and Long Context Benchmark, indicating a negative outcome or challenge.

* **Green "Happy Face" Icon**: Located next to the title of the SWE-ContextBench, indicating a positive outcome or success.

* **Experience Pool**: A green oval shape containing the text "The issue was that `BaseFormSet` ... When changing a ForeignKey field type and updating...".

* **Experience Reuse**: A green oval shape containing the text "Experience Reuse".

* **Green Arrow**: A curved green arrow pointing from the "Experience Pool" to the "Experience Reuse" oval, indicating the flow of information or experience.

### Detailed Analysis or ### Content Details

* **(a) Agent Benchmark: Independent Tasks**

* Task 1: "Fix the bug: Changing an IntegerField to a ForeignKey generates ..."

* Task 2: "Fix the bug: Changing the type of a ForeignKey and changing ..."

* **(b) Long Context Benchmark: Information Retrieval**

* Task: "Please identify the fictional character who occasionally breaks the fourth wall with the audience?"

* **(c) SWE-ContextBench**

* The "Experience Pool" contains the text: "The issue was that `BaseFormSet` ... When changing a ForeignKey field type and updating..."

* The "Experience Reuse" oval is connected to the "Experience Pool" via a green arrow.

* Task: "Fix the bug: Changing the type of a ForeignKey and changing ..."

### Key Observations

* Agent Benchmark and Long Context Benchmark are marked with a red "sad face" icon, suggesting they face challenges or limitations.

* SWE-ContextBench is marked with a green "happy face" icon, suggesting it is a successful approach.

* The "Experience Pool" and "Experience Reuse" components in SWE-ContextBench suggest a mechanism for leveraging past experiences to improve performance.

### Interpretation

The diagram illustrates a comparison of different benchmark types for software engineering tasks. The Agent Benchmark and Long Context Benchmark appear to have limitations, as indicated by the red "sad face" icons. SWE-ContextBench, on the other hand, seems to offer a more effective approach, possibly by leveraging an "Experience Pool" and "Experience Reuse" mechanism. The diagram suggests that SWE-ContextBench addresses the challenges faced by the other benchmark types by incorporating a way to learn from and reuse past experiences. The specific tasks mentioned provide examples of the types of problems each benchmark is designed to address.