## Diagram: Benchmarking Approaches for AI Agent Performance

### Overview

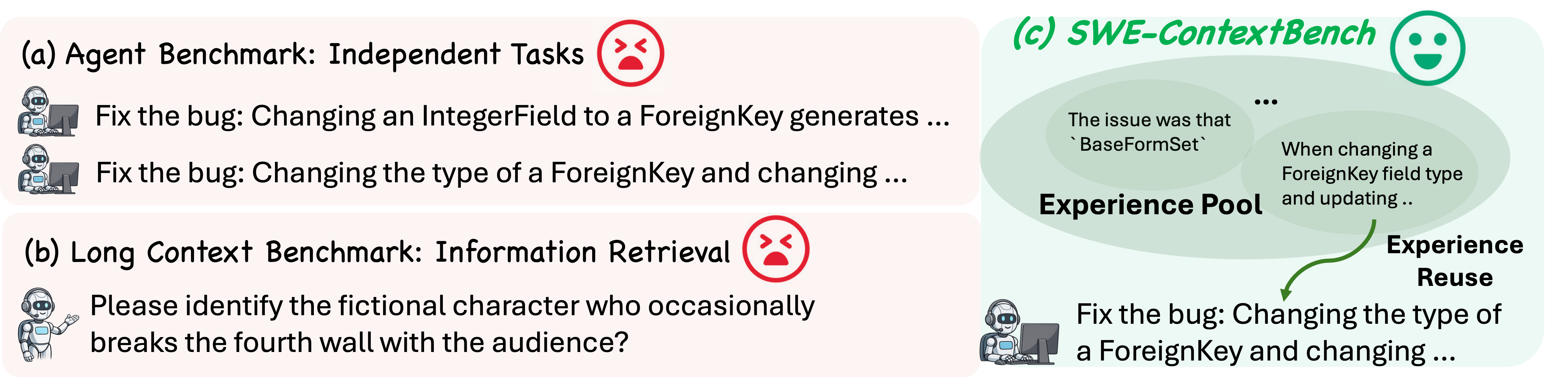

The diagram compares three benchmarking approaches for evaluating AI agent capabilities: (a) Independent Task Benchmarking, (b) Long Context Information Retrieval, and (c) SWE-ContextBench. Each approach is visualized with task examples, emotional indicators (😠/😊), and contextual relationships.

### Components/Axes

1. **Section (a): Agent Benchmark - Independent Tasks**

- Label: "Fix the bug: Changing an IntegerField to a ForeignKey generates..."

- Label: "Fix the bug: Changing the type of a ForeignKey and changing..."

- Emotional Indicator: 😠 (Red unhappy face)

- Position: Top-left quadrant

2. **Section (b): Long Context Benchmark - Information Retrieval**

- Label: "Please identify the fictional character who occasionally breaks the fourth wall with the audience?"

- Emotional Indicator: 😠 (Red unhappy face)

- Position: Bottom-left quadrant

3. **Section (c): SWE-ContextBench**

- Label: "The issue was that `BaseFormSet`..."

- Label: "When changing a ForeignKey field type and updating..."

- Label: "Fix the bug: Changing the type of a ForeignKey and changing..."

- Emotional Indicator: 😊 (Green happy face)

- Experience Pool: Central overlapping region with green gradient

- Experience Reuse: Green arrow connecting to task description

- Position: Right quadrant

### Detailed Analysis

- **Emotional Indicators**:

- Red 😠 emojis in (a) and (b) suggest negative outcomes or challenges

- Green 😊 emoji in (c) indicates successful resolution

- **Experience Pool**:

- Central overlapping region between (a) and (c) tasks

- Contains the phrase "The issue was that `BaseFormSet`..."

- Suggests shared contextual knowledge between tasks

- **Experience Reuse**:

- Green arrow from experience pool to (c)'s task description

- Implies knowledge transfer between related tasks

### Key Observations

1. Independent task benchmarking (a) and long context retrieval (b) show negative outcomes

2. SWE-ContextBench (c) demonstrates successful bug resolution through experience reuse

3. The experience pool acts as a knowledge repository connecting related tasks

4. Green color coding in (c) contrasts with red in (a) and (b), visually emphasizing effectiveness

### Interpretation

The diagram illustrates how contextual awareness and experience reuse improve AI agent performance. While traditional benchmarks (a) and (b) face challenges with isolated tasks and information retrieval, SWE-ContextBench (c) leverages an experience pool to resolve complex, interconnected tasks. The green happy face and positive outcome in (c) suggest that incorporating past experiences (via the experience pool) leads to more effective problem-solving. This aligns with human learning patterns where contextual understanding and knowledge transfer enhance performance on related tasks. The diagram implies that future AI agent development should prioritize systems that can build and utilize experience pools for better generalization across tasks.