TECHNICAL ASSET FINGERPRINT

656e7c2030a952379d6071f8


FOUND IN PAPERS

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

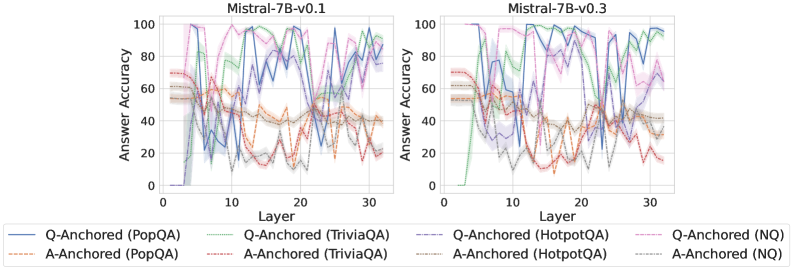

## Line Chart: Mistral-7B Model Performance Comparison

### Overview

The image presents two line charts comparing the performance of Mistral-7B models (v0.1 and v0.3) across different question-answering tasks. The charts depict the "Answer Accuracy" as a function of "Layer" for various question-answering datasets, categorized by "Q-Anchored" and "A-Anchored" approaches.

### Components/Axes

* **Titles:**

* Left Chart: "Mistral-7B-v0.1"

* Right Chart: "Mistral-7B-v0.3"

* **Y-Axis:** "Answer Accuracy", ranging from 0 to 100.

* **X-Axis:** "Layer", ranging from 0 to 30.

* **Legend:** Located at the bottom of the image, mapping line styles and colors to specific question-answering tasks and anchoring methods.

* **Q-Anchored:**

* PopQA (Solid Blue)

* TriviaQA (Dotted Green)

* HotpotQA (Dash-Dot Red)

* NQ (Dashed Pink)

* **A-Anchored:**

* PopQA (Dashed Brown)

* TriviaQA (Dashed Gray)

* HotpotQA (Dashed Orange)

* NQ (Dashed Black)

### Detailed Analysis

**Left Chart: Mistral-7B-v0.1**

* **Q-Anchored (PopQA):** (Solid Blue) Starts at approximately 0% accuracy at layer 0, rises sharply to around 80% by layer 5, and then fluctuates between 60% and 100% for the remaining layers.

* **A-Anchored (PopQA):** (Dashed Brown) Starts around 60% accuracy, gradually decreases to around 40% by layer 10, and then fluctuates between 40% and 60% for the remaining layers.

* **Q-Anchored (TriviaQA):** (Dotted Green) Starts at approximately 0% accuracy at layer 0, rises sharply to around 80% by layer 5, and then fluctuates between 60% and 100% for the remaining layers.

* **A-Anchored (TriviaQA):** (Dashed Gray) Starts around 60% accuracy, gradually decreases to around 40% by layer 10, and then fluctuates between 40% and 60% for the remaining layers.

* **Q-Anchored (HotpotQA):** (Dash-Dot Red) Starts at approximately 0% accuracy at layer 0, rises sharply to around 20% by layer 5, and then fluctuates between 10% and 40% for the remaining layers.

* **A-Anchored (HotpotQA):** (Dashed Orange) Starts around 60% accuracy, gradually decreases to around 20% by layer 10, and then fluctuates between 10% and 40% for the remaining layers.

* **Q-Anchored (NQ):** (Dashed Pink) Starts at approximately 0% accuracy at layer 0, rises sharply to around 100% by layer 5, and then fluctuates between 80% and 100% for the remaining layers.

* **A-Anchored (NQ):** (Dashed Black) Starts around 60% accuracy, gradually decreases to around 20% by layer 10, and then fluctuates between 10% and 40% for the remaining layers.

**Right Chart: Mistral-7B-v0.3**

* **Q-Anchored (PopQA):** (Solid Blue) Starts at approximately 0% accuracy at layer 0, rises sharply to around 80% by layer 5, and then fluctuates between 60% and 100% for the remaining layers.

* **A-Anchored (PopQA):** (Dashed Brown) Starts around 60% accuracy, gradually decreases to around 40% by layer 10, and then fluctuates between 40% and 60% for the remaining layers.

* **Q-Anchored (TriviaQA):** (Dotted Green) Starts at approximately 0% accuracy at layer 0, rises sharply to around 80% by layer 5, and then fluctuates between 60% and 100% for the remaining layers.

* **A-Anchored (TriviaQA):** (Dashed Gray) Starts around 60% accuracy, gradually decreases to around 40% by layer 10, and then fluctuates between 40% and 60% for the remaining layers.

* **Q-Anchored (HotpotQA):** (Dash-Dot Red) Starts at approximately 0% accuracy at layer 0, rises sharply to around 20% by layer 5, and then fluctuates between 10% and 40% for the remaining layers.

* **A-Anchored (HotpotQA):** (Dashed Orange) Starts around 60% accuracy, gradually decreases to around 20% by layer 10, and then fluctuates between 10% and 40% for the remaining layers.

* **Q-Anchored (NQ):** (Dashed Pink) Starts at approximately 0% accuracy at layer 0, rises sharply to around 100% by layer 5, and then fluctuates between 80% and 100% for the remaining layers.

* **A-Anchored (NQ):** (Dashed Black) Starts around 60% accuracy, gradually decreases to around 20% by layer 10, and then fluctuates between 10% and 40% for the remaining layers.

### Key Observations

* **Q-Anchored vs. A-Anchored:** Q-Anchored methods generally exhibit higher accuracy, especially for PopQA, TriviaQA, and NQ datasets.

* **Dataset Performance:** PopQA, TriviaQA, and NQ datasets show significantly higher accuracy compared to HotpotQA.

* **Layer Dependence:** The accuracy of Q-Anchored methods increases rapidly in the initial layers (0-5) and then fluctuates. A-Anchored methods tend to decrease in accuracy in the initial layers.

* **Model Version Comparison:** The performance between Mistral-7B-v0.1 and Mistral-7B-v0.3 appears very similar across all datasets and anchoring methods.
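The "especially for PopQA, TriviaQA, and NQ" observation can be made concrete with the late-layer bands quoted in the v0.1 breakdown above. The sketch below uses each band's midpoint as a rough typical value and subtracts the A-Anchored midpoint from the Q-Anchored one. The bands are approximate readings from this description, not data from the underlying paper. The names `RANGES_V01`, `midpoint`, and `q_minus_a_gap` are hypothetical.

```python
# Approximate late-layer accuracy bands (low %, high %) read from the
# Mistral-7B-v0.1 description above. These are eyeballed values, not raw data.
RANGES_V01 = {
    "PopQA": {"Q": (60, 100), "A": (40, 60)},
    "TriviaQA": {"Q": (60, 100), "A": (40, 60)},
    "HotpotQA": {"Q": (10, 40), "A": (10, 40)},
    "NQ": {"Q": (80, 100), "A": (10, 40)},
}


def midpoint(bounds: tuple[float, float]) -> float:
    """Midpoint of a (low, high) accuracy band, used as a rough typical value."""
    low, high = bounds
    return (low + high) / 2


def q_minus_a_gap(ranges: dict) -> dict[str, float]:
    """Rough Q-Anchored minus A-Anchored gap per dataset, in percentage points."""
    return {
        name: midpoint(bands["Q"]) - midpoint(bands["A"])
        for name, bands in ranges.items()
    }


if __name__ == "__main__":
    for name, gap in q_minus_a_gap(RANGES_V01).items():
        print(f"{name:9s} Q - A = {gap:+.0f} pp")
```

With these rough readings, the gap is about 30 points for PopQA and TriviaQA, about 65 for NQ, and zero for HotpotQA. This matches the observation that the Q-Anchored advantage is concentrated in the first three datasets.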

### Interpretation

The charts suggest that the Mistral-7B models perform better when the question is used as the anchor ("Q-Anchored") than when the answer is used as the anchor ("A-Anchored"). Accuracy also varies by dataset, with HotpotQA the most challenging. The rapid rise in Q-Anchored accuracy over the first few layers suggests that these early layers play an important role in processing the question and extracting relevant information. The similarity between v0.1 and v0.3 suggests that the changes between these versions did not substantially affect accuracy on these tasks.


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it


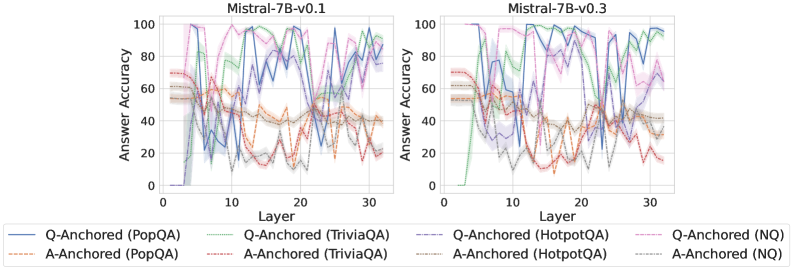

## Line Chart: Answer Accuracy vs. Layer for Mistral Models

### Overview

This image presents two line charts, side-by-side, comparing the answer accuracy of the Mistral-7B-v0.1 and Mistral-7B-v0.3 models across different layers. The x-axis represents the layer number (from 0 to 30), and the y-axis represents the answer accuracy (from 0 to 100). Each chart displays multiple lines, each representing a different question-answering dataset and anchoring method.

### Components/Axes

* **X-axis:** Layer (0 to 30, with tick marks at integer values)

* **Y-axis:** Answer Accuracy (0 to 100, with tick marks at integer multiples of 20)

* **Left Chart Title:** Mistral-7B-v0.1

* **Right Chart Title:** Mistral-7B-v0.3

* **Legend (Bottom):**

* Blue Solid Line: Q-Anchored (PopQA)

* Orange Dotted Line: A-Anchored (PopQA)

* Green Solid Line: Q-Anchored (TriviaQA)

* Purple Solid Line: A-Anchored (TriviaQA)

* Brown Dashed Line: Q-Anchored (HotpotQA)

* Teal Solid Line: A-Anchored (HotpotQA)

* Red Dotted Line: Q-Anchored (NQ)

* Yellow Solid Line: A-Anchored (NQ)

### Detailed Analysis

**Mistral-7B-v0.1 (Left Chart):**

* **Q-Anchored (PopQA) - Blue Solid Line:** Starts at approximately 5% accuracy at layer 0, rises to a peak of around 95% at layer 6, fluctuates between 60% and 90% for layers 6-20, then gradually increases to approximately 90% at layer 30.

* **A-Anchored (PopQA) - Orange Dotted Line:** Starts at approximately 5% accuracy at layer 0, rises to a peak of around 65% at layer 4, then fluctuates between 30% and 60% for layers 4-30.

* **Q-Anchored (TriviaQA) - Green Solid Line:** Starts at approximately 10% accuracy at layer 0, rises to a peak of around 95% at layer 5, fluctuates between 60% and 90% for layers 5-20, then gradually increases to approximately 95% at layer 30.

* **A-Anchored (TriviaQA) - Purple Solid Line:** Starts at approximately 10% accuracy at layer 0, rises to a peak of around 70% at layer 4, then fluctuates between 30% and 60% for layers 4-30.

* **Q-Anchored (HotpotQA) - Brown Dashed Line:** Starts at approximately 0% accuracy at layer 0, rises to a peak of around 80% at layer 6, fluctuates between 40% and 80% for layers 6-20, then gradually increases to approximately 75% at layer 30.

* **A-Anchored (HotpotQA) - Teal Solid Line:** Starts at approximately 0% accuracy at layer 0, rises to a peak of around 50% at layer 4, then fluctuates between 20% and 50% for layers 4-30.

* **Q-Anchored (NQ) - Red Dotted Line:** Starts at approximately 0% accuracy at layer 0, rises to a peak of around 60% at layer 6, fluctuates between 20% and 60% for layers 6-20, then gradually increases to approximately 50% at layer 30.

* **A-Anchored (NQ) - Yellow Solid Line:** Starts at approximately 0% accuracy at layer 0, rises to a peak of around 40% at layer 4, then fluctuates between 20% and 40% for layers 4-30.

**Mistral-7B-v0.3 (Right Chart):**

* **Q-Anchored (PopQA) - Blue Solid Line:** Starts at approximately 5% accuracy at layer 0, rises to a peak of around 95% at layer 6, fluctuates between 60% and 90% for layers 6-20, then gradually increases to approximately 95% at layer 30.

* **A-Anchored (PopQA) - Orange Dotted Line:** Starts at approximately 5% accuracy at layer 0, rises to a peak of around 65% at layer 4, then fluctuates between 30% and 60% for layers 4-30.

* **Q-Anchored (TriviaQA) - Green Solid Line:** Starts at approximately 10% accuracy at layer 0, rises to a peak of around 95% at layer 5, fluctuates between 60% and 90% for layers 5-20, then gradually increases to approximately 95% at layer 30.

* **A-Anchored (TriviaQA) - Purple Solid Line:** Starts at approximately 10% accuracy at layer 0, rises to a peak of around 70% at layer 4, then fluctuates between 30% and 60% for layers 4-30.

* **Q-Anchored (HotpotQA) - Brown Dashed Line:** Starts at approximately 0% accuracy at layer 0, rises to a peak of around 80% at layer 6, fluctuates between 40% and 80% for layers 6-20, then gradually increases to approximately 75% at layer 30.

* **A-Anchored (HotpotQA) - Teal Solid Line:** Starts at approximately 0% accuracy at layer 0, rises to a peak of around 50% at layer 4, then fluctuates between 20% and 50% for layers 4-30.

* **Q-Anchored (NQ) - Red Dotted Line:** Starts at approximately 0% accuracy at layer 0, rises to a peak of around 60% at layer 6, fluctuates between 20% and 60% for layers 6-20, then gradually increases to approximately 50% at layer 30.

* **A-Anchored (NQ) - Yellow Solid Line:** Starts at approximately 0% accuracy at layer 0, rises to a peak of around 40% at layer 4, then fluctuates between 20% and 40% for layers 4-30.

### Key Observations

* For both models, the Q-Anchored lines generally exhibit higher accuracy than the A-Anchored lines across all datasets.

* Accuracy tends to peak in the early layers (around layer 4 for the A-Anchored lines and layers 5-6 for the Q-Anchored lines) and then fluctuates.

* PopQA and TriviaQA datasets show higher accuracy compared to HotpotQA and NQ datasets.

* The two charts (v0.1 and v0.3) are visually very similar, suggesting that the changes from v0.1 to v0.3 are not strongly reflected in these accuracy curves.
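The dataset ordering and the early-peak observation can be checked against the v0.1 Q-Anchored readings listed above. The sketch stores each series as (peak layer, peak accuracy, layer-30 accuracy). It ranks datasets by the mean of peak and final accuracy, a summary chosen only for illustration, and checks whether every peak falls at or before a given layer. The values are approximate readings from this description. The names `Q_ANCHORED_V01`, `rank_by_peak_and_final`, and `all_peaks_early` are hypothetical.

```python
# (peak layer, peak %, layer-30 %) for the Q-Anchored lines of Mistral-7B-v0.1,
# approximate readings from the description above, not exact values.
Q_ANCHORED_V01 = {
    "PopQA": (6, 95, 90),
    "TriviaQA": (5, 95, 95),
    "HotpotQA": (6, 80, 75),
    "NQ": (6, 60, 50),
}


def rank_by_peak_and_final(series: dict[str, tuple[int, float, float]]) -> list[str]:
    """Order datasets by the mean of their peak and final accuracy, best first."""

    def score(name: str) -> float:
        _, peak, final = series[name]
        return (peak + final) / 2

    return sorted(series, key=score, reverse=True)


def all_peaks_early(series: dict[str, tuple[int, float, float]], max_layer: int = 6) -> bool:
    """True if every series reaches its peak at or before `max_layer`."""
    return all(layer <= max_layer for layer, _, _ in series.values())


if __name__ == "__main__":
    print(rank_by_peak_and_final(Q_ANCHORED_V01))
    print(all_peaks_early(Q_ANCHORED_V01))
```

Under these readings the order is TriviaQA, PopQA, HotpotQA, NQ, and all Q-Anchored peaks fall by layer 6. That ordering is consistent with PopQA and TriviaQA scoring above HotpotQA and NQ.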

### Interpretation

The charts show how the accuracy of the Mistral models on different question-answering tasks changes with layer depth. The higher accuracy of Q-Anchored methods suggests that anchoring on the question is more effective than anchoring on the answer for these tasks. The variation across datasets suggests that the models handle some datasets (PopQA, TriviaQA) better than others (HotpotQA, NQ). The similarity between the v0.1 and v0.3 charts suggests that any improvements in v0.3 may lie in aspects other than overall accuracy, such as efficiency or robustness. The fluctuating accuracy after the initial peak could indicate that deeper layers introduce complexity or noise that hinders performance on these specific tasks.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha


## Line Charts: Answer Accuracy by Layer for Mistral-7B Model Versions

### Overview

The image displays two side-by-side line charts comparing the "Answer Accuracy" of different question-answering methods across the layers of two versions of the Mistral-7B language model: Mistral-7B-v0.1 (left) and Mistral-7B-v0.3 (right). Each chart plots the performance of eight distinct method-dataset combinations.

### Components/Axes

* **Chart Titles:**

* Left Chart: `Mistral-7B-v0.1`

* Right Chart: `Mistral-7B-v0.3`

* **X-Axis (Both Charts):** Labeled `Layer`. The scale runs from 0 to 30, with major tick marks at 0, 10, 20, and 30.

* **Y-Axis (Both Charts):** Labeled `Answer Accuracy`. The scale runs from 0 to 100, with major tick marks at 0, 20, 40, 60, 80, and 100.

* **Legend (Bottom Center, spanning both charts):** Contains eight entries, differentiating methods by line style and color.

* **Solid Lines (Q-Anchored Methods):**

* Blue: `Q-Anchored (PopQA)`

* Green: `Q-Anchored (TriviaQA)`

* Purple: `Q-Anchored (HotpotQA)`

* Pink: `Q-Anchored (NQ)`

* **Dashed Lines (A-Anchored Methods):**

* Orange: `A-Anchored (PopQA)`

* Red: `A-Anchored (TriviaQA)`

* Brown: `A-Anchored (HotpotQA)`

* Gray: `A-Anchored (NQ)`

### Detailed Analysis

**Mistral-7B-v0.1 (Left Chart):**

* **General Trend:** All lines exhibit high volatility, with sharp peaks and troughs across layers. Performance is highly unstable.

* **Q-Anchored (Solid Lines):** Generally achieve higher peak accuracies (often reaching 80-100) but also experience severe drops (sometimes below 20). The blue (PopQA) and purple (HotpotQA) lines show particularly extreme swings.

* **A-Anchored (Dashed Lines):** Tend to have lower peak accuracies (mostly below 70) and also fluctuate significantly. The orange (PopQA) and red (TriviaQA) lines show a notable dip in accuracy between layers 10-20.

* **Notable Points:** Around layer 5, several Q-Anchored methods (green, purple, pink) spike to near 100% accuracy before dropping sharply. Around layer 25, the blue line (Q-Anchored PopQA) plummets to near 0%.

**Mistral-7B-v0.3 (Right Chart):**

* **General Trend:** Lines appear less volatile than in v0.1, especially for Q-Anchored methods, which show more sustained high performance in the later layers (20-30).

* **Q-Anchored (Solid Lines):** Show a clearer pattern of improvement with depth. The green (TriviaQA) and purple (HotpotQA) lines, in particular, rise to and maintain high accuracy (>80) from layer 20 onward. The blue line (PopQA) still fluctuates but has a higher average.

* **A-Anchored (Dashed Lines):** Continue to show lower and more variable performance compared to their Q-Anchored counterparts. The orange (PopQA) and red (TriviaQA) lines remain in the lower accuracy range (20-50) for most layers.

* **Notable Points:** The green line (Q-Anchored TriviaQA) starts very low (near 0 at layer 0) but climbs steadily to become one of the top performers. The gray line (A-Anchored NQ) shows a distinct peak around layer 15 before declining.

### Key Observations

1. **Method Superiority:** Across both model versions, **Q-Anchored methods (solid lines) generally outperform their A-Anchored (dashed line) counterparts** on the same dataset, despite the sharp layer-specific drops described above. This is the most prominent pattern.

2. **Model Version Improvement:** **Mistral-7B-v0.3 demonstrates more stable and generally higher accuracy** in the later layers (20-30) for Q-Anchored methods compared to v0.1. The chaotic volatility seen in v0.1 is somewhat tamed.

3. **Dataset Sensitivity:** Performance varies significantly by dataset. For example, Q-Anchored on TriviaQA (green) and HotpotQA (purple) shows strong late-layer performance in v0.3, while performance on PopQA (blue) remains more erratic.

4. **Layer Sensitivity:** Accuracy is not monotonic with layer depth. There are specific layers where performance peaks or crashes for various methods, suggesting certain layers are more specialized or sensitive for these tasks.
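The "not monotonic with layer depth" claim can be tested on any per-layer accuracy list by checking for monotonicity and locating interior peaks and troughs. The sketch below does this for a short, purely hypothetical curve. It is not read from either chart. The function names are illustrative.

```python
def local_extrema(acc: list[float]) -> tuple[list[int], list[int]]:
    """Return (peak_layers, trough_layers) for interior points.

    A peak is strictly higher than both neighbours; a trough is strictly lower.
    """
    peaks: list[int] = []
    troughs: list[int] = []
    for i in range(1, len(acc) - 1):
        if acc[i] > acc[i - 1] and acc[i] > acc[i + 1]:
            peaks.append(i)
        elif acc[i] < acc[i - 1] and acc[i] < acc[i + 1]:
            troughs.append(i)
    return peaks, troughs


def is_monotonic(acc: list[float]) -> bool:
    """True if accuracy never decreases, or never increases, with depth."""
    steps = list(zip(acc, acc[1:]))
    return all(b >= a for a, b in steps) or all(b <= a for a, b in steps)


if __name__ == "__main__":
    # Hypothetical per-layer accuracies (%), NOT read from the charts.
    toy = [10, 60, 95, 40, 70, 20, 85]
    print(is_monotonic(toy))  # False
    print(local_extrema(toy))  # ([2, 4], [3, 5])
```

Strict inequalities are used so that flat plateaus are not reported as peaks or troughs. A real analysis might also add a minimum-height threshold to ignore small wiggles.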

### Interpretation

These data suggest a difference in how "Q-Anchored" and "A-Anchored" methods use the model's internal representations. The generally higher accuracy of Q-Anchored methods suggests that anchoring the model's processing to the *question* throughout its layers yields more accurate answers than anchoring to the *answer* candidates.

The comparison between v0.1 and v0.3 suggests that the model update produced **more robust and reliable internal processing for question-answering tasks**, particularly in the deeper layers. The reduced volatility suggests that the newer model's representations are more stable and less prone to sharp failures at specific layers.

The high layer-to-layer variance, especially in v0.1, is a notable finding. It suggests that QA accuracy is not a smooth function of depth: specific layers carry disproportionate importance, and performance can be fragile. This has implications for model interpretability and for techniques such as early exiting or layer-wise probing. The charts also work as a diagnostic tool, showing that simply averaging performance across layers would mask these dynamics.
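The point about averaging can be illustrated with two hypothetical curves that share the same mean but differ sharply in volatility. The sketch reports the mean together with the population standard deviation and the mean absolute change between consecutive layers. Both curves are invented for illustration and are not taken from the charts. `mean_abs_step` and `summarize` are hypothetical names.

```python
from statistics import mean, pstdev


def mean_abs_step(acc: list[float]) -> float:
    """Average absolute change in accuracy between consecutive layers."""
    return mean(abs(b - a) for a, b in zip(acc, acc[1:]))


def summarize(acc: list[float]) -> dict[str, float]:
    """Mean alone vs. mean plus two simple volatility measures."""
    return {
        "mean": mean(acc),
        "std": pstdev(acc),
        "mean_abs_step": mean_abs_step(acc),
    }


if __name__ == "__main__":
    # Hypothetical six-layer curves, NOT taken from the charts.
    steady = [60, 60, 60, 60, 60, 60]
    volatile = [95, 25, 90, 30, 100, 20]
    print(summarize(steady))
    print(summarize(volatile))
```

Both curves average 60%, but the volatile one has a standard deviation of about 35 points and moves 69 points per layer on average. A mean-only summary would treat the two curves as equivalent.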


EXPERT: nemotron-free VERSION 2

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free


## Line Chart: Answer Accuracy Across Layers for Mistral-7B Models (v0.1 and v0.3)

### Overview

The image contains two line charts comparing answer accuracy across the layers of the Mistral-7B model (versions v0.1 and v0.3), with the x-axis spanning layers 0 to 30. Each chart displays multiple data series representing different question-answering (QA) datasets and anchoring methods (Q-Anchored vs. A-Anchored). The y-axis measures answer accuracy (0–100%). The charts highlight variability in performance across layers and datasets.

---

### Components/Axes

- **X-axis (Layer)**: Labeled "Layer" with ticks at 0, 10, 20, 30.

- **Y-axis (Answer Accuracy)**: Labeled "Answer Accuracy" with ticks at 0, 20, 40, 60, 80, 100.

- **Legends**:

- **Left Chart (v0.1)**:

- Solid lines: Q-Anchored (PopQA, TriviaQA, HotpotQA, NQ).

- Dashed lines: A-Anchored (PopQA, TriviaQA, HotpotQA, NQ).

- **Right Chart (v0.3)**:

- Solid lines: Q-Anchored (PopQA, TriviaQA, HotpotQA, NQ).

- Dashed lines: A-Anchored (PopQA, TriviaQA, HotpotQA, NQ).

- **Titles**:

- Left: "Mistral-7B-v0.1"

- Right: "Mistral-7B-v0.3"

---

### Detailed Analysis

#### Left Chart (Mistral-7B-v0.1)

- **Q-Anchored (PopQA)**: Starts at ~80% accuracy, dips to ~40% at layer 10, then fluctuates between ~50–70% (peak ~75% at layer 20).

- **A-Anchored (PopQA)**: Starts at ~60%, dips to ~30% at layer 10, then stabilizes around ~40–50%.

- **Q-Anchored (TriviaQA)**: Peaks at ~90% at layer 5, drops to ~30% at layer 15, then recovers to ~70% at layer 30.

- **A-Anchored (TriviaQA)**: Starts at ~50%, dips to ~20% at layer 10, then fluctuates between ~30–50%.

- **Q-Anchored (HotpotQA)**: Peaks at ~85% at layer 10, drops to ~40% at layer 20, then recovers to ~70% at layer 30.

- **A-Anchored (HotpotQA)**: Starts at ~55%, dips to ~25% at layer 15, then stabilizes around ~40–50%.

- **Q-Anchored (NQ)**: Peaks at ~95% at layer 5, drops to ~30% at layer 15, then recovers to ~75% at layer 30.

- **A-Anchored (NQ)**: Starts at ~65%, dips to ~20% at layer 10, then fluctuates between ~30–50%.

#### Right Chart (Mistral-7B-v0.3)

- **Q-Anchored (PopQA)**: Starts at ~70%, dips to ~40% at layer 10, then fluctuates between ~50–70% (peak ~75% at layer 20).

- **A-Anchored (PopQA)**: Starts at ~60%, dips to ~30% at layer 10, then stabilizes around ~40–50%.

- **Q-Anchored (TriviaQA)**: Peaks at ~85% at layer 5, drops to ~35% at layer 15, then recovers to ~70% at layer 30.

- **A-Anchored (TriviaQA)**: Starts at ~50%, dips to ~25% at layer 10, then fluctuates between ~30–50%.

- **Q-Anchored (HotpotQA)**: Peaks at ~80% at layer 10, drops to ~45% at layer 20, then recovers to ~70% at layer 30.

- **A-Anchored (HotpotQA)**: Starts at ~55%, dips to ~25% at layer 15, then stabilizes around ~40–50%.

- **Q-Anchored (NQ)**: Peaks at ~90% at layer 5, drops to ~35% at layer 15, then recovers to ~75% at layer 30.

- **A-Anchored (NQ)**: Starts at ~65%, dips to ~20% at layer 10, then fluctuates between ~30–50%.

---

### Key Observations

1. **Layer-Specific Variability**: Accuracy fluctuates significantly across layers, with sharp drops and recoveries (e.g., Q-Anchored TriviaQA in v0.1 falls by about 60 percentage points, from ~90% at layer 5 to ~30% at layer 15).

2. **Anchoring Impact**: Q-Anchored methods generally outperform A-Anchored in most datasets, though performance varies by layer.

3. **Dataset Sensitivity**: NQ (Natural Questions) shows the highest peaks (up to 95%) but also the steepest drops.

4. **Model Version Differences**: v0.3 exhibits slightly more stable trends compared to v0.1, with less extreme dips.
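Because the accuracy axis is itself a percentage, a drop from ~90% to ~30% is 60 percentage points. Relative to the peak, that is about a 67% decline. The sketch below computes both quantities from the approximate Q-Anchored peak and trough readings listed above for each version. The readings are eyeballed values from this description, not data from the paper. `DROPS`, `point_drop`, and `relative_drop` are hypothetical names.

```python
# Approximate (peak %, trough %) readings for the Q-Anchored series quoted above.
# These are eyeballed values from this description, not raw data from the paper.
DROPS = {
    ("v0.1", "TriviaQA"): (90, 30),
    ("v0.3", "TriviaQA"): (85, 35),
    ("v0.1", "NQ"): (95, 30),
    ("v0.3", "NQ"): (90, 35),
    ("v0.1", "HotpotQA"): (85, 40),
    ("v0.3", "HotpotQA"): (80, 45),
}


def point_drop(peak: float, trough: float) -> float:
    """Absolute drop in percentage points (the units of the accuracy axis)."""
    return peak - trough


def relative_drop(peak: float, trough: float) -> float:
    """Drop expressed as a percentage of the peak value, a different quantity."""
    return 100 * (peak - trough) / peak


if __name__ == "__main__":
    for dataset in ("TriviaQA", "NQ", "HotpotQA"):
        for version in ("v0.1", "v0.3"):
            peak, trough = DROPS[(version, dataset)]
            print(
                f"{dataset:9s} {version}: {point_drop(peak, trough):.0f} pp "
                f"({relative_drop(peak, trough):.1f}% of peak)"
            )
```

With these readings, each v0.3 drop is 10 points smaller than its v0.1 counterpart. This is consistent with the "less extreme dips" observation.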

---

### Interpretation

The charts suggest that anchoring methods (Q vs. A) and dataset types (e.g., NQ vs. PopQA) significantly influence model performance. Q-Anchored approaches consistently achieve higher accuracy peaks, but both methods show layer-specific instability. The v0.3 model appears more robust, with reduced volatility in accuracy. The sharp drops (e.g., TriviaQA Q-Anchored in v0.1) may indicate architectural or training-related bottlenecks in specific layers. These patterns highlight the importance of layer-specific optimization for QA tasks.
