## Diagram: Feedback Mechanisms for RLLMs

### Overview

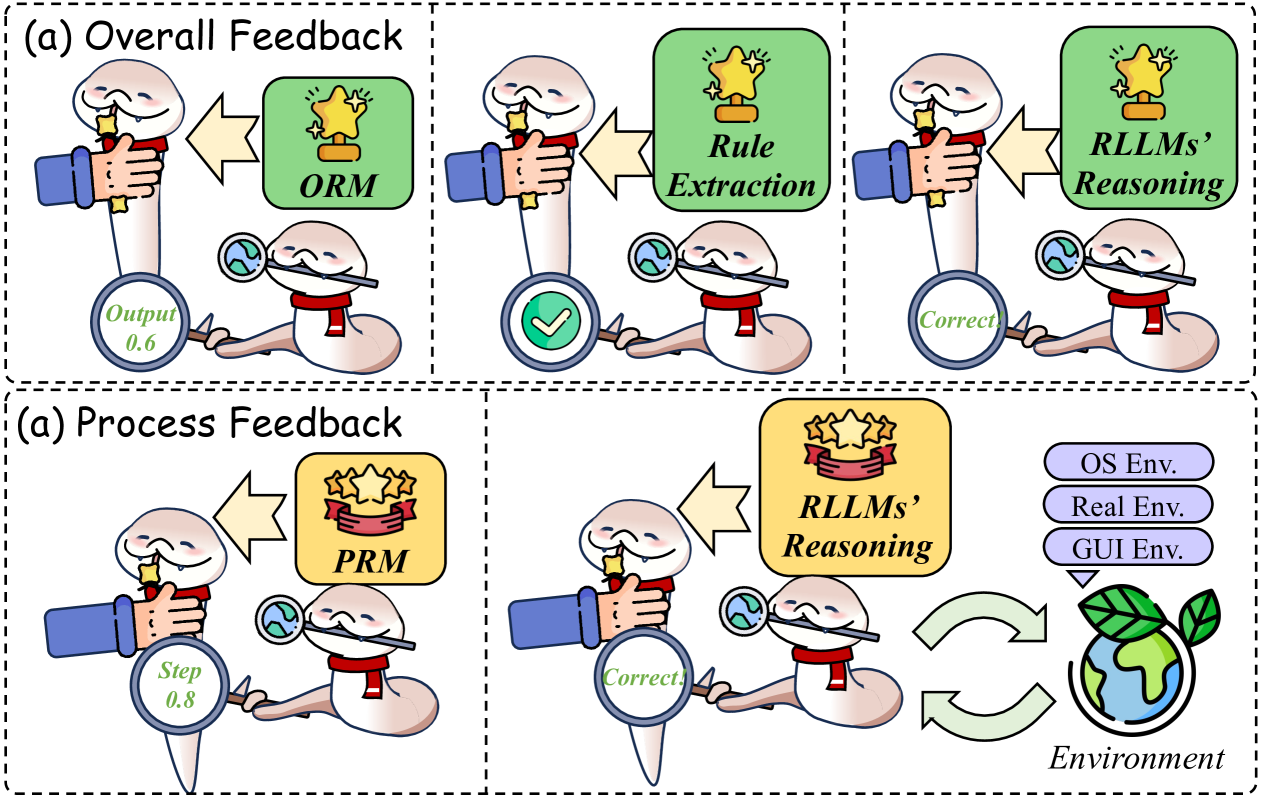

The image illustrates two types of feedback mechanisms for Reasoning Large Language Models (RLLMs): Overall Feedback and Process Feedback. Each mechanism is depicted with cartoon-like figures and visual cues that show how information flows and where evaluation happens.

### Components/Axes

**Overall Feedback (Top Section):**

* **Title:** (a) Overall Feedback

* **Components:**

* A cartoon figure (worm-like) holding a star, representing the RLLM.

* A green box with a star icon and the label "ORM" (Outcome Reward Model).

* A green box with the label "Rule Extraction".

* A green box with the label "RLLMs' Reasoning".

* A magnifying glass held by another cartoon figure, displaying "Output 0.6" in the first instance and "Correct" in the other two.

* Arrows indicating the direction of feedback.

**Process Feedback (Bottom Section):**

* **Title:** (b) Process Feedback

* **Components:**

* A cartoon figure holding a star.

* A yellow box with three stars and the label "PRM" (Process Reward Model).

* A magnifying glass displaying "Step 0.8".

* A yellow box with the label "RLLMs' Reasoning".

* A cartoon figure holding a magnifying glass displaying "Correct".

* Three rounded boxes labeled "OS Env.", "Real Env.", and "GUI Env." (representing different environments).

* A globe with leaves, labeled "Environment".

* Curved arrows indicating interaction with the environment.

### Detailed Analysis

**Overall Feedback:**

* **ORM:** The RLLM receives feedback from the Outcome Reward Model (ORM). The magnifying glass shows "Output 0.6", a scalar score for the final output.

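* **Rule Extraction:** The evaluation associated with the "Rule Extraction" box is shown as "Correct", a categorical verdict rather than a numeric score.

* **RLLMs' Reasoning:** The evaluation associated with the "RLLMs' Reasoning" box is likewise shown as "Correct".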

**Process Feedback:**

* **PRM:** The RLLM receives feedback from the Process Reward Model (PRM). The step is evaluated as "0.8".

* **RLLMs' Reasoning:** The evaluation associated with the "RLLMs' Reasoning" box is shown as "Correct".

* **Environment Interaction:** The RLLM interacts with different environments (OS Env., Real Env., GUI Env.) and receives feedback from the "Environment".

### Key Observations

* The diagram uses visual metaphors to represent complex feedback loops in RLLMs.

* Overall Feedback focuses on the final output or result, while Process Feedback focuses on individual steps or processes.

* The use of "Correct" and numerical values (0.6, 0.8) indicates a form of evaluation or scoring.

* The environment interaction loop highlights the iterative nature of reinforcement learning.

### Interpretation

The diagram illustrates two distinct approaches to providing feedback to RLLMs. Overall Feedback assesses the final outcome, using either an Outcome Reward Model (ORM) score (0.6 in the figure) or a "Correct" verdict tied to rule extraction or RLLMs' reasoning. Process Feedback, on the other hand, evaluates individual steps within the RLLM's operation, as indicated by the Process Reward Model (PRM) and the "Step 0.8" evaluation.
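The contrast between outcome-level and step-level scoring can be sketched in a few lines of Python. This is a minimal illustration, not something taken from the diagram: the scorer functions, the `Answer:` convention used by the rule check, and the example reasoning steps are all hypothetical stand-ins, and the constant scores simply mirror the 0.6 and 0.8 values shown in the figure.

```python
import re
from typing import Callable, List


def overall_feedback(steps: List[str], score_output: Callable[[str], float]) -> float:
    """Outcome-level feedback: a single score for the final output only."""
    return score_output(steps[-1])


def process_feedback(steps: List[str], score_step: Callable[[str], float]) -> List[float]:
    """Process-level feedback: one score for every intermediate step."""
    return [score_step(step) for step in steps]


def rule_feedback(output: str, reference: str) -> bool:
    """Rule-based check (assumed convention): extract the text after
    'Answer:' and compare it with a reference answer."""
    match = re.search(r"Answer:\s*(.+)", output)
    return match is not None and match.group(1).strip() == reference


# Hypothetical scorers standing in for trained reward models.
def toy_output_scorer(output: str) -> float:
    return 0.6  # mirrors "Output 0.6" in the figure


def toy_step_scorer(step: str) -> float:
    return 0.8  # mirrors "Step 0.8" in the figure


steps = ["Let x = 2.", "Then 2x = 4.", "Answer: 4"]

overall_score = overall_feedback(steps, toy_output_scorer)
step_scores = process_feedback(steps, toy_step_scorer)
rule_verdict = rule_feedback(steps[-1], reference="4")

print(overall_score)  # one number for the whole trajectory
print(step_scores)    # one number per step
print("Correct" if rule_verdict else "Incorrect")
```

The point of the sketch is the shape of the signal: overall feedback returns one value per trajectory, while process feedback returns one value per step.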

The diagram also emphasizes the interaction between the RLLM and its environment. The RLLM can operate in different environments (OS, Real, GUI), and the feedback loop with the "Environment" suggests an iterative learning process where the RLLM adapts its behavior based on environmental interactions.
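The environment loop can be sketched the same way. The example below is an assumption-laden toy: `ToyEnv`, its counter-based state, and the fixed policy are hypothetical and are not the OS, Real, or GUI environments named in the figure. It only shows the act, observe, receive-feedback cycle that the curved arrows suggest.

```python
from typing import Callable, List, Tuple


class ToyEnv:
    """Hypothetical environment: the goal is to bring a counter to a target value."""

    def __init__(self, target: int) -> None:
        self.target = target
        self.state = 0

    def step(self, action: int) -> Tuple[int, float, bool]:
        """Apply an action and return (new state, feedback, done)."""
        self.state += action
        done = self.state == self.target
        reward = 1.0 if done else 0.0
        return self.state, reward, done


def run_episode(env: ToyEnv, policy: Callable[[int], int], max_steps: int = 10) -> List[float]:
    """Let the policy act step by step, collecting the feedback after each action."""
    rewards: List[float] = []
    state = env.state
    for _ in range(max_steps):
        action = policy(state)
        state, reward, done = env.step(action)
        rewards.append(reward)
        if done:
            break
    return rewards


# A fixed policy that always increments by one (stand-in for a model's action choice).
rewards = run_episode(ToyEnv(target=3), policy=lambda state: 1)
print(rewards)
```

Here the feedback arrives after every action, which is why the figure places environment interaction under process feedback rather than overall feedback.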

The use of cartoon figures and simple labels makes the diagram accessible, but it lacks specific details about the nature of the feedback signals or the internal workings of the RLLM. The diagram serves as a high-level overview of feedback mechanisms in RLLMs, highlighting the importance of both outcome-based and process-based evaluation.
