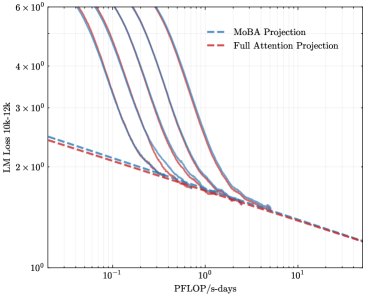

## Chart: LM Loss vs. PFLOP/s-days

### Overview

The image is a line chart comparing the language model (LM) loss of two projection methods, MoBA Projection and Full Attention Projection, against computational cost measured in PFLOP/s-days. Both the y-axis (LM Loss) and the x-axis (PFLOP/s-days) use a logarithmic scale. Each projection method is drawn as several lines, which may correspond to different runs or configurations.

### Components/Axes

* **X-axis Title:** PFLOP/s-days

* **Y-axis Title:** LM Loss 10k-12k

* **Y-axis Scale:** Logarithmic, ranging from 10⁰ to 6 x 10⁶. Markers are present at 10⁰, 10¹, 10², 10³, 10⁴, 10⁵, 10⁶.

* **X-axis Scale:** Logarithmic, with markers at 10⁻¹, 10⁰, 10¹, 10².

* **Legend:** Located in the top-right corner.

  * **MoBA Projection:** Represented by a dashed blue line.

  * **Full Attention Projection:** Represented by a dashed red line.

### Detailed Analysis

The chart contains multiple lines for each projection method. The trend and approximate endpoints of each line are listed below; all values are visual estimates.

**MoBA Projection (Dashed Blue Lines):**

There are approximately 5 blue lines.

* **Line 1:** Starts at approximately (10⁻¹, 2 x 10⁵) and slopes downward, reaching approximately (10², 2 x 10¹)

* **Line 2:** Starts at approximately (10⁻¹, 2.5 x 10⁵) and slopes downward, reaching approximately (10², 2.5 x 10¹)

* **Line 3:** Starts at approximately (10⁻¹, 3 x 10⁵) and slopes downward, reaching approximately (10², 3 x 10¹)

* **Line 4:** Starts at approximately (10⁻¹, 3.5 x 10⁵) and slopes downward, reaching approximately (10², 3.5 x 10¹)

* **Line 5:** Starts at approximately (10⁻¹, 4 x 10⁵) and slopes downward, reaching approximately (10², 4 x 10¹)

**Full Attention Projection (Dashed Red Lines):**

There are approximately 5 red lines.

* **Line 1:** Starts at approximately (10⁻¹, 3 x 10⁵) and slopes downward, reaching approximately (10², 3 x 10¹)

* **Line 2:** Starts at approximately (10⁻¹, 3.5 x 10⁵) and slopes downward, reaching approximately (10², 3.5 x 10¹)

* **Line 3:** Starts at approximately (10⁻¹, 4 x 10⁵) and slopes downward, reaching approximately (10², 4 x 10¹)

* **Line 4:** Starts at approximately (10⁻¹, 4.5 x 10⁵) and slopes downward, reaching approximately (10², 4.5 x 10¹)

* **Line 5:** Starts at approximately (10⁻¹, 5 x 10⁵) and slopes downward, reaching approximately (10², 5 x 10¹)

All lines exhibit a decreasing trend, indicating that as PFLOP/s-days increase, the LM Loss decreases for both projection methods.
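The listed endpoints can be turned into a slope on the log-log axes. The following is a minimal sketch that assumes each line is straight between its two approximate endpoints (the description gives only endpoints, so the shape in between is an assumption). The constant and function names are hypothetical, chosen for illustration.

```python
import math

# Approximate (PFLOP/s-days, LM loss) endpoints copied from the lists above.
# These are rough visual estimates, not measured data.
MOBA_LINE_1 = ((1e-1, 2e5), (1e2, 2e1))
FULL_LINE_1 = ((1e-1, 3e5), (1e2, 3e1))


def loglog_slope(endpoints):
    """Slope of the straight line through two points on log-log axes."""
    (x0, y0), (x1, y1) = endpoints
    return (math.log10(y1) - math.log10(y0)) / (math.log10(x1) - math.log10(x0))


moba_slope = loglog_slope(MOBA_LINE_1)
full_slope = loglog_slope(FULL_LINE_1)

print(f"MoBA line 1 slope:           {moba_slope:.3f}")
print(f"Full attention line 1 slope: {full_slope:.3f}")
```

Every listed line falls by four decades of loss over three decades of compute, so under this straight-line assumption all ten lines share the same slope and differ only in vertical offset.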

### Key Observations

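* Each Full Attention Projection line lies above the correspondingly numbered MoBA Projection line across the entire range of PFLOP/s-days. The two families overlap, however: the upper MoBA lines (3 x 10⁵ to 4 x 10⁵ at 10⁻¹) fall within the range covered by the lower Full Attention lines.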

* The multiple lines for each projection method suggest variability in the results, potentially due to different training runs or hyperparameter settings.

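* In absolute terms, the decrease in LM Loss appears to slow as PFLOP/s-days increase, indicating diminishing returns from increased computation. On logarithmic axes, each equal step along the x-axis is a multiplicative increase in compute, so later steps cost far more for a smaller absolute reduction in loss.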
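The diminishing-returns observation can be made concrete with a small calculation. This sketch interpolates along one line using the approximate endpoints listed for MoBA "Line 1" and assumes the line is straight on log-log axes; `interpolated_loss` is a hypothetical helper, and the resulting numbers are illustrative rather than read from the chart.

```python
import math

# Approximate endpoints for one line: (PFLOP/s-days, LM loss).
# Visual estimates only; the straight-line shape between them is assumed.
START = (1e-1, 2e5)
END = (1e2, 2e1)


def interpolated_loss(compute, start=START, end=END):
    """Loss on the straight log-log line through start and end."""
    (x0, y0), (x1, y1) = start, end
    slope = math.log(y1 / y0) / math.log(x1 / x0)
    return y0 * (compute / x0) ** slope


budgets = [1e-1, 1e0, 1e1, 1e2]
losses = [interpolated_loss(c) for c in budgets]

# Absolute loss reduction gained from each successive 10x increase in compute.
drops = [before - after for before, after in zip(losses, losses[1:])]

for c, d in zip(budgets, drops):
    print(f"{c:g} -> {c * 10:g} PFLOP/s-days: loss falls by {d:.3g}")
```

Each tenfold increase in compute divides the loss by the same factor, but the absolute reduction shrinks at every step, which is the sense in which returns diminish.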

### Interpretation

The chart shows the trade-off between computational cost (PFLOP/s-days) and language model loss. Both projection methods reach lower LM Loss with more computation, but MoBA Projection reaches lower loss for a given computational budget, which suggests it is the more compute-efficient of the two. The spread of lines within each method indicates that results vary, possibly between runs or configurations. Because both axes are logarithmic, a fixed increase in PFLOP/s-days has a much larger effect on loss at low computational cost than at high cost, where returns diminish.
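One way to quantify "more compute-efficient" is to ask how much more compute one line needs to reach the same loss as another. The sketch below assumes two parallel power-law lines that start at the same compute value, using the approximate starting losses listed for MoBA "Line 1" and Full Attention "Line 1" and the slope implied by the listed endpoints. The function name and constants are hypothetical, and the result is an illustration of the method, not a measured efficiency gain.

```python
# Approximate starting losses at 10^-1 PFLOP/s-days (visual estimates).
MOBA_START_LOSS = 2e5
FULL_START_LOSS = 3e5

# Log-log slope implied by the listed endpoints: four decades of loss
# over three decades of compute. Assumed identical for both lines.
SLOPE = -4 / 3


def compute_ratio_at_equal_loss(loss_a_start, loss_b_start, slope):
    """Factor by which line B needs more compute than line A for equal loss.

    Assumes both lines follow L = L_start * (C / C_start) ** slope with the
    same C_start and the same slope, so the ratio is independent of the
    target loss.
    """
    return (loss_a_start / loss_b_start) ** (1 / slope)


ratio = compute_ratio_at_equal_loss(MOBA_START_LOSS, FULL_START_LOSS, SLOPE)
print(f"Full attention needs about {ratio:.2f}x the compute of MoBA")
```

Because the lines are assumed parallel, the ratio is the same at every loss level. Pairing other lines would give a different factor, since the families overlap.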