## Diagram: Evaluation Framework for AI Agents

### Overview

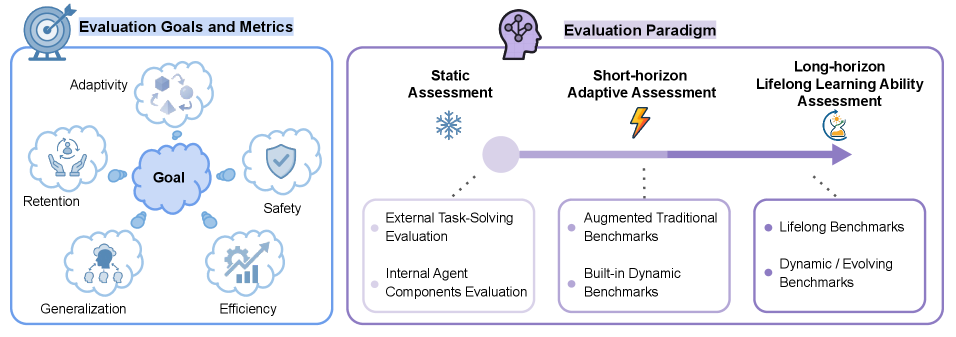

The image presents a conceptual diagram outlining a comprehensive framework for evaluating AI agents. It is divided into two primary, side-by-side panels: "Evaluation Goals and Metrics" on the left and "Evaluation Paradigm" on the right. The diagram uses a combination of text, icons, and connecting lines to illustrate the key attributes to measure and the evolving methods for assessment.

### Components/Axes

The diagram is structured into two main rectangular panels with rounded corners.

**Left Panel: Evaluation Goals and Metrics**

* **Central Element:** A large, central cloud shape labeled **"Goal"**.

* **Surrounding Elements:** Five smaller cloud shapes, each connected to the central "Goal" cloud by a line, representing key evaluation metrics:

    1. **Top:** "Adaptivity" (Icon: A brain with interconnected nodes).

    2. **Top-Left:** "Retention" (Icon: Hands holding a flask).

    3. **Top-Right:** "Safety" (Icon: A shield with a checkmark).

    4. **Bottom-Left:** "Generalization" (Icon: A network of connected nodes).

    5. **Bottom-Right:** "Efficiency" (Icon: A rising bar chart with a gear).

**Right Panel: Evaluation Paradigm**

* **Title:** "Evaluation Paradigm" at the top, accompanied by an icon of a head with gears.

* **Central Timeline:** A horizontal purple arrow pointing to the right, representing a progression or spectrum.

* **Assessment Types (Above the Arrow):** Three labeled stages positioned along the timeline:

    1. **Left (Start of Arrow):** "Static Assessment" (Icon: A snowflake).

    2. **Middle:** "Short-horizon Adaptive Assessment" (Icon: A lightning bolt).

    3. **Right (End of Arrow):** "Long-horizon Lifelong Learning Ability Assessment" (Icon: A flask with a sprouting plant).

* **Evaluation Methods (Below the Arrow):** Three corresponding boxes, each connected by a dotted line to its respective assessment type above. Each box contains a bulleted list:

    1. **Under Static Assessment:**

        * "External Task-Solving Evaluation"

        * "Internal Agent Components Evaluation"

    2. **Under Short-horizon Adaptive Assessment:**

        * "Augmented Traditional Benchmarks"

        * "Built-in Dynamic Benchmarks"

    3. **Under Long-horizon Lifelong Learning Ability Assessment:**

        * "Lifelong Benchmarks"

        * "Dynamic / Evolving Benchmarks"
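The structure described above can be written down as a small lookup table. The following is a minimal sketch in Python, not part of the diagram: the names `Paradigm`, `GOALS`, `METHODS`, and `methods_for` are hypothetical and chosen only for illustration, while the string labels are copied from the diagram as transcribed here.

```python
from enum import Enum


class Paradigm(Enum):
    """The three assessment stages, in left-to-right order along the arrow."""
    STATIC = "Static Assessment"
    SHORT_HORIZON = "Short-horizon Adaptive Assessment"
    LONG_HORIZON = "Long-horizon Lifelong Learning Ability Assessment"


# The five metrics surrounding the central "Goal" cloud in the left panel.
GOALS = ("Adaptivity", "Retention", "Safety", "Generalization", "Efficiency")

# Evaluation methods listed in the box under each assessment stage.
METHODS = {
    Paradigm.STATIC: [
        "External Task-Solving Evaluation",
        "Internal Agent Components Evaluation",
    ],
    Paradigm.SHORT_HORIZON: [
        "Augmented Traditional Benchmarks",
        "Built-in Dynamic Benchmarks",
    ],
    Paradigm.LONG_HORIZON: [
        "Lifelong Benchmarks",
        "Dynamic / Evolving Benchmarks",
    ],
}


def methods_for(paradigm: Paradigm) -> list:
    """Return the method labels shown under the given assessment stage."""
    return METHODS[paradigm]


# Walk the stages in the order they appear along the arrow.
for stage in Paradigm:
    print(stage.value, "->", ", ".join(methods_for(stage)))
```

Because `Enum` members iterate in definition order, the loop follows the arrow from static to long-horizon assessment.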

### Detailed Analysis

The diagram establishes a clear relationship between *what* to evaluate (the goals) and *how* to evaluate it (the paradigm).

* **Goals & Metrics:** The five surrounding clouds (Adaptivity, Retention, Safety, Generalization, Efficiency) are presented as the core objectives or dimensions of a comprehensive evaluation. They are all linked to a central "Goal," suggesting these are the target attributes a successful agent should possess.

* **Paradigm Progression:** The right panel illustrates a shift in evaluation methodology from simple to complex.

    * **Static Assessment** involves fixed tests that evaluate either the agent's final output on external tasks or its internal components.

    * **Short-horizon Adaptive Assessment** introduces dynamic elements, using benchmarks that are either augmented versions of traditional ones or built to be dynamic within a limited scope.

    * **Long-horizon Lifelong Learning Ability Assessment** is the final stage on the arrow. It focuses on benchmarks that test continuous learning and adaptation over extended periods, where the evaluation environment itself may change.
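To make the contrast between the two ends of the arrow concrete, here is a toy sketch in Python. It is an illustration under stated assumptions, not a method taken from the diagram: `MemorizingAgent`, `static_assessment`, `long_horizon_assessment`, and the `(input, expected)` task format are all hypothetical.

```python
from typing import Dict, List, Sequence, Tuple

Task = Tuple[int, int]  # hypothetical toy format: (input, expected output)


class MemorizingAgent:
    """Toy agent (an assumption for illustration) that memorizes seen pairs."""

    def __init__(self) -> None:
        self.memory: Dict[int, int] = {}

    def act(self, x: int) -> int:
        # Unknown inputs get a default answer of 0.
        return self.memory.get(x, 0)

    def learn(self, x: int, y: int) -> None:
        self.memory[x] = y


def static_assessment(agent: MemorizingAgent, tasks: Sequence[Task]) -> float:
    """Score a frozen agent once on a fixed task set; no learning happens."""
    return sum(int(agent.act(x) == y) for x, y in tasks) / len(tasks)


def long_horizon_assessment(
    agent: MemorizingAgent, stream: Sequence[Sequence[Task]]
) -> List[float]:
    """Score the agent phase by phase while it keeps learning.

    The task set may change between phases, so the per-phase accuracies
    show both retention of old tasks and adaptation to new ones.
    """
    scores = []
    for phase in stream:
        correct = 0
        for x, y in phase:
            correct += int(agent.act(x) == y)  # answer first...
            agent.learn(x, y)                  # ...then receive feedback
        scores.append(correct / len(phase))
    return scores


phase_1 = [(1, 2), (2, 4)]
phase_2 = [(1, 2), (3, 6)]  # one repeated task, one new task

static_score = static_assessment(MemorizingAgent(), phase_1)
phase_scores = long_horizon_assessment(MemorizingAgent(), [phase_1, phase_2])
print(static_score)   # 0.0
print(phase_scores)   # [0.0, 0.5]
```

The static score is a single number for a frozen agent, whereas the long-horizon run yields a trajectory: in the second phase the agent answers the repeated task correctly (retention) but misses the new one until it has seen feedback.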

### Key Observations

1. **Holistic View:** The framework pairs high-level goals (left) with concrete evaluation methodologies (right), covering both what to measure and how to measure it.

2. **Progression of Complexity:** There is a clear left-to-right progression on the paradigm side, moving from static, one-time evaluations to dynamic, continuous ones. This mirrors the increasing complexity of the agent capabilities being tested (from basic task-solving to lifelong learning).

3. **Iconography:** The metrics and assessment stages are each paired with a symbolic icon (e.g., a snowflake for static, a lightning bolt for adaptive, a sprouting plant for lifelong learning), which reinforces the conceptual meaning of the text.

4. **Layout:** The "Evaluation Goals and Metrics" panel is laid out as a cluster of clouds connected to a central hub, emphasizing that the metrics are interrelated. The "Evaluation Paradigm" panel is laid out as a linear timeline, emphasizing progression and evolution.

### Interpretation

This diagram outlines a framework for assessing AI agents and argues that meaningful evaluation must move beyond static, narrow benchmarks.

* **The "Why":** The left panel defines the *purpose* of evaluation: to measure an agent's ability to adapt, retain knowledge, operate safely, generalize skills, and do so efficiently. These are hallmarks of robust, real-world AI.

* **The "How":** The right panel defines the *methodology* and advocates a paradigm shift: the goals on the left call for correspondingly dynamic methods. Fixed tests ("Static Assessment") cannot show how an agent adapts or retains knowledge over time, so measuring goals such as "Adaptivity" and "Retention" calls for "Lifelong Benchmarks" and "Dynamic / Evolving Benchmarks."

* **Underlying Message:** The framework implies that current evaluation practice (often still in the static or short-horizon adaptive stages) needs to evolve to keep pace with the capabilities of modern AI agents. Assessing an agent against the central "Goal" requires placing it in dynamic, long-term scenarios that exercise the attributes listed on the left. The diagram draws no explicit link between the two panels, but the implied connection is that measuring the goals requires the more advanced paradigms.