## Diagram: Evaluation Framework Architecture

### Overview

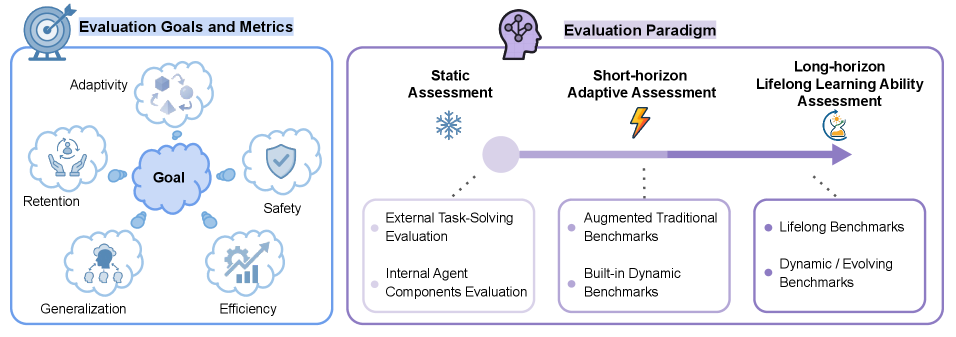

The image presents a two-part technical framework for AI system evaluation. The left section defines core evaluation goals and metrics, while the right section outlines a three-stage evaluation paradigm with progressive complexity.

### Components/Axes

**Left Diagram (Evaluation Goals and Metrics):**

- Central node labeled "Goal"

- Five radiating elements with icons:

  1. **Adaptivity** (geometric shapes in motion)

  2. **Retention** (hands holding a brain)

  3. **Safety** (shield with checkmark)

  4. **Generalization** (group of people under thought bubble)

  5. **Efficiency** (graph with upward arrow)

**Right Diagram (Evaluation Paradigm):**

- Three sequential stages connected by a gradient arrow:

  1. **Static Assessment** (snowflake icon)

     - Sub-components:

       - External Task-Solving Evaluation

       - Internal Agent Components Evaluation

  2. **Short-horizon Adaptive Assessment** (lightning bolt)

     - Sub-components:

       - Augmented Traditional Benchmarks

       - Built-in Dynamic Benchmarks

  3. **Long-horizon Lifelong Learning Ability Assessment** (lightbulb with circuit)

     - Sub-components:

       - Lifelong Benchmarks

       - Dynamic/Evolving Benchmarks
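The taxonomy listed above can be captured as a small data structure, which makes the stage ordering and the two sub-components per stage explicit. This is only an illustrative sketch: the names `Goal`, `Stage`, `PARADIGM`, and `outline` are hypothetical and do not come from the diagram; only the labels inside them do.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Tuple


class Goal(Enum):
    """The five goals radiating from the central "Goal" node (left diagram)."""
    ADAPTIVITY = "Adaptivity"
    RETENTION = "Retention"
    SAFETY = "Safety"
    GENERALIZATION = "Generalization"
    EFFICIENCY = "Efficiency"


@dataclass(frozen=True)
class Stage:
    """One stage of the evaluation paradigm (right diagram)."""
    order: int                      # left-to-right position along the arrow
    name: str
    sub_components: Tuple[str, ...]


# Labels copied from the diagram; the container itself is an assumption.
PARADIGM = (
    Stage(1, "Static Assessment", (
        "External Task-Solving Evaluation",
        "Internal Agent Components Evaluation",
    )),
    Stage(2, "Short-horizon Adaptive Assessment", (
        "Augmented Traditional Benchmarks",
        "Built-in Dynamic Benchmarks",
    )),
    Stage(3, "Long-horizon Lifelong Learning Ability Assessment", (
        "Lifelong Benchmarks",
        "Dynamic/Evolving Benchmarks",
    )),
)


def outline(stages=PARADIGM):
    """Render the stages, in order, as an indented text outline."""
    lines = []
    for stage in sorted(stages, key=lambda s: s.order):
        lines.append(f"{stage.order}. {stage.name}")
        lines.extend(f"   - {sub}" for sub in stage.sub_components)
    return "\n".join(lines)


if __name__ == "__main__":
    print(outline())
```

Keeping the goals in a separate enumeration from the stages mirrors the diagram's split between what is being evaluated for (left) and how evaluation is staged (right).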

### Detailed Analysis

- **Spatial Relationships:**

  - The left diagram uses a radial layout with "Goal" at its center (center-left of the full image)

  - The right diagram uses a horizontal flow from Static (left) to Long-horizon (right)

  - No numerical axes are present; all elements are categorical labels

- **Iconography:**

  - Adaptivity: Dynamic geometric transformations

  - Retention: Cognitive preservation metaphor

  - Safety: Protective barrier visualization

  - Generalization: Collaborative human elements

  - Efficiency: Performance improvement indicator

### Key Observations

1. Evaluation goals are arranged as peers around the central "Goal" node rather than in a hierarchy

2. Paradigm progression shows increasing temporal complexity (static → adaptive → lifelong)

3. Each assessment stage is split into two sub-components; only Static Assessment frames them as external (task-solving) versus internal (agent components) evaluation

4. Long-horizon assessment emphasizes continuous adaptation through dynamic benchmarks

### Interpretation

This framework suggests a holistic approach to AI evaluation where:

- **Core Goals** (left) represent desired system characteristics rather than measurement techniques

- **Paradigm Stages** (right) demonstrate increasing evaluation sophistication:

  - Static assessments provide foundational capability checks

  - Short-horizon assessments introduce short-term adaptability testing

  - Long-horizon assessments focus on sustained learning and evolution

- The gradient arrow between stages implies a progression through these levels of complexity rather than three independent checks

- The absence of quantitative metrics suggests this is a conceptual framework rather than an empirical measurement system

The diagram emphasizes that comprehensive AI evaluation must balance multiple dimensions (safety, efficiency, etc.) while progressively challenging systems across different temporal horizons.