# Technical Document Extraction: Attention Forward Speed Benchmark

## 1. Header Information

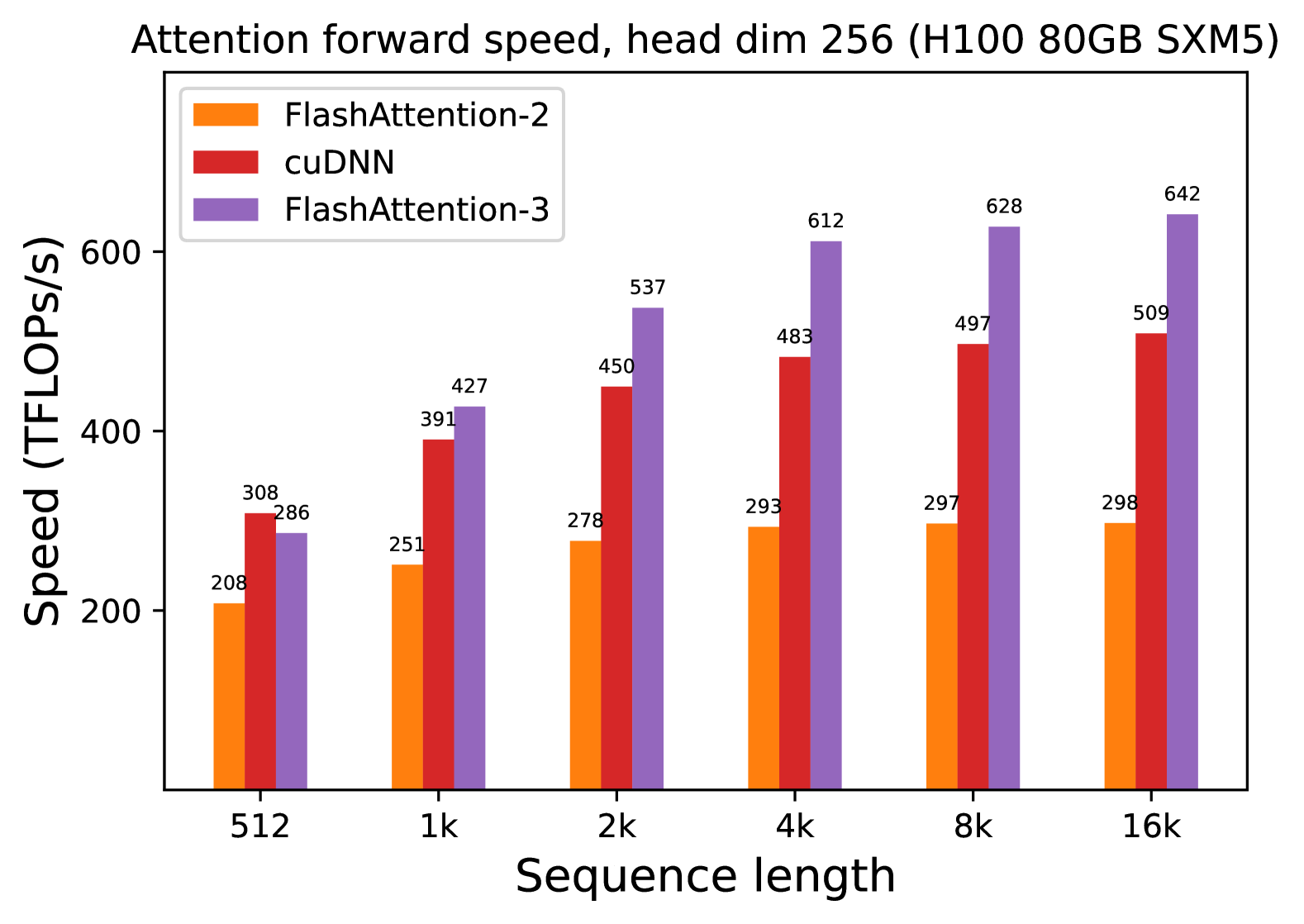

* **Title:** Attention forward speed, head dim 256 (H100 80GB SXM5)

* **Hardware Context:** NVIDIA H100 80GB SXM5 GPU.

* **Parameter Context:** Head dimension is fixed at 256.

## 2. Chart Component Analysis

### Spatial Grounding & Legend

* **Legend Location:** Top-left corner of the plot area.

* **Data Series Identification:**

  * **Orange Bar:** FlashAttention-2

  * **Red Bar:** cuDNN

  * **Purple Bar:** FlashAttention-3

### Axis Definitions

* **Y-Axis (Vertical):** Speed (TFLOPS/s). Scale ranges from 200 to 600+ with major tick marks every 200 units.

* **X-Axis (Horizontal):** Sequence length. Categorical values: 512, 1k, 2k, 4k, 8k, 16k.

## 3. Trend Verification

* **FlashAttention-2 (Orange):** Shows a steady but slow upward trend, starting at 208 TFLOPS/s and plateauing near 298 TFLOPS/s as sequence length increases.

* **cuDNN (Red):** Shows a significant upward trend from 512 to 4k (308 to 483 TFLOPS/s), after which the gains shrink. It never declines, and it reaches its maximum of 509 TFLOPS/s at the 16k sequence length.

* **FlashAttention-3 (Purple):** Shows the steepest upward trend. At the 512 length it is below cuDNN (286 vs. 308 TFLOPS/s) but already ahead of FlashAttention-2 (208 TFLOPS/s). It overtakes cuDNN at 1k and keeps scaling, reaching the highest recorded value of 642 TFLOPS/s at 16k.
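The trend descriptions above can be checked mechanically. The following is a minimal sketch, assuming the bar labels were transcribed correctly from the chart; the names `SPEED`, `step_gains`, and `summarize` are illustrative and not part of any benchmark tooling.

```python
# Values transcribed from the bar labels in the chart (TFLOPS/s),
# ordered by sequence length: 512, 1k, 2k, 4k, 8k, 16k.
SPEED = {
    "FlashAttention-2": [208, 251, 278, 293, 297, 298],
    "cuDNN": [308, 391, 450, 483, 497, 509],
    "FlashAttention-3": [286, 427, 537, 612, 628, 642],
}


def step_gains(values):
    """Absolute gain between each pair of consecutive sequence lengths."""
    return [b - a for a, b in zip(values, values[1:])]


def summarize(values):
    """Report whether a series rises at every step and how much it grows overall."""
    gains = step_gains(values)
    return {
        "monotonic": all(g > 0 for g in gains),
        "gains": gains,
        # Ratio of the 16k value to the 512 value.
        "growth": round(values[-1] / values[0], 2),
    }


if __name__ == "__main__":
    for name, values in SPEED.items():
        print(name, summarize(values))
```

Under these values, every series increases at each step, and the step gains shrink toward the long-sequence end. For FlashAttention-2 the final step adds only 1 TFLOPS/s, which is what the plateau description refers to.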

## 4. Data Table Reconstruction

The following table represents the precise numerical values labeled above each bar in the chart.

| Sequence Length | FlashAttention-2 (Orange) [TFLOPS/s] | cuDNN (Red) [TFLOPS/s] | FlashAttention-3 (Purple) [TFLOPS/s] |
| :--- | :--- | :--- | :--- |
| **512** | 208 | 308 | 286 |
| **1k** | 251 | 391 | 427 |
| **2k** | 278 | 450 | 537 |
| **4k** | 293 | 483 | 612 |
| **8k** | 297 | 497 | 628 |
| **16k** | 298 | 509 | 642 |

## 5. Key Observations

* **Performance Leadership:** FlashAttention-3 is the fastest method for all sequence lengths of 1k and above.

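* **Scaling Efficiency:** FlashAttention-3 scales better with sequence length than FlashAttention-2 and cuDNN. From 512 to 16k its throughput grows about 2.24x (286 to 642 TFLOPS/s), compared with about 1.65x for cuDNN (308 to 509) and 1.43x for FlashAttention-2 (208 to 298). At 16k sequence length, FlashAttention-3 is approximately 2.15x faster than FlashAttention-2 and 1.26x faster than cuDNN.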

* **Small Sequence Exception:** At the smallest measured sequence length (512), cuDNN (308 TFLOPS/s) outperforms FlashAttention-3 (286 TFLOPS/s).
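The speedup ratios and the crossover point in these observations follow directly from the table values. The sketch below recomputes them. It assumes the transcribed bar labels are accurate, and the helper names `speedup` and `leader_by_length` are hypothetical and chosen only for illustration.

```python
# Values transcribed from the chart's bar labels (TFLOPS/s).
SEQ_LENS = ["512", "1k", "2k", "4k", "8k", "16k"]
SPEED = {
    "FlashAttention-2": [208, 251, 278, 293, 297, 298],
    "cuDNN": [308, 391, 450, 483, 497, 509],
    "FlashAttention-3": [286, 427, 537, 612, 628, 642],
}


def speedup(method, baseline):
    """Throughput ratio method/baseline at each sequence length, rounded to 2 places."""
    return [round(m / b, 2) for m, b in zip(SPEED[method], SPEED[baseline])]


def leader_by_length():
    """Map each sequence length to the method with the highest throughput."""
    return {
        n: max(SPEED, key=lambda name: SPEED[name][i])
        for i, n in enumerate(SEQ_LENS)
    }


if __name__ == "__main__":
    print("FA3 vs FA2:  ", dict(zip(SEQ_LENS, speedup("FlashAttention-3", "FlashAttention-2"))))
    print("FA3 vs cuDNN:", dict(zip(SEQ_LENS, speedup("FlashAttention-3", "cuDNN"))))
    print("Leader:      ", leader_by_length())
```

At 16k this reproduces the 2.15x and 1.26x figures. A ratio below 1.0 against cuDNN appears only at 512, which is the small-sequence exception.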