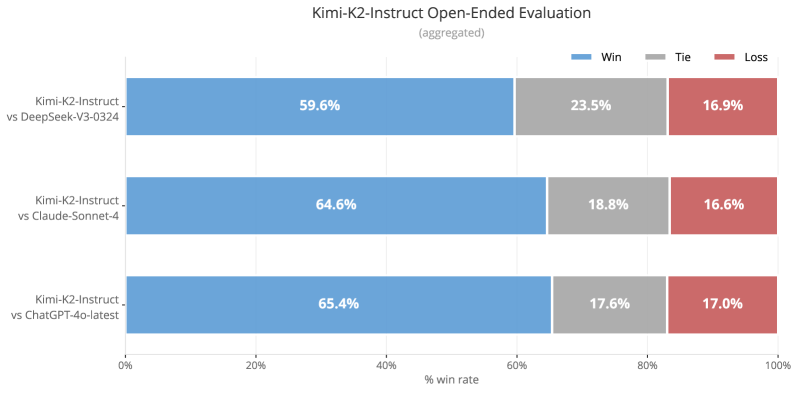

## Bar Chart: Kimi-K2-Instruct Open-Ended Evaluation

### Overview

This is a horizontal bar chart comparing Kimi-K2-Instruct against three other models: DeepSeek-V3-0324, Claude-Sonnet-4, and ChatGLM3-6b-latest. For each pairwise comparison, the chart shows the percentage of wins, ties, and losses for Kimi-K2-Instruct. The chart title labels the results as aggregated.

### Components/Axes

* **Title:** Kimi-K2-Instruct Open-Ended Evaluation (aggregated) - positioned at the top-center.

* **X-axis:** "% win rate" - ranging from 0% to 100%, with tick marks at 20%, 40%, 60%, 80%, and 100%.

* **Y-axis:** Lists the comparisons:

  * Kimi-K2-Instruct vs DeepSeek-V3-0324

  * Kimi-K2-Instruct vs Claude-Sonnet-4

  * Kimi-K2-Instruct vs ChatGLM3-6b-latest

* **Legend:** Located at the top-right, with three entries:

  * Blue: Win

  * Gray: Tie

  * Red: Loss

### Detailed Analysis

The chart consists of three horizontal bars, one for each comparison. Each bar is divided into three segments representing the Win, Tie, and Loss percentages, and the three percentages in each bar sum to 100%.

**1. Kimi-K2-Instruct vs DeepSeek-V3-0324:**

* Win (Blue): Approximately 59.6%, ending just below the 60% mark on the x-axis.

* Tie (Gray): Approximately 23.5%, slightly wider than one 20% tick interval.

* Loss (Red): Approximately 16.9%, slightly narrower than one 20% tick interval.

**2. Kimi-K2-Instruct vs Claude-Sonnet-4:**

* Win (Blue): Approximately 64.6%, ending between the 60% and 80% marks on the x-axis.

* Tie (Gray): Approximately 18.8%, slightly narrower than one 20% tick interval.

* Loss (Red): Approximately 16.6%, slightly narrower than one 20% tick interval.

**3. Kimi-K2-Instruct vs ChatGLM3-6b-latest:**

* Win (Blue): Approximately 65.4%, ending between the 60% and 80% marks on the x-axis.

* Tie (Gray): Approximately 17.6%, slightly narrower than one 20% tick interval.

* Loss (Red): Approximately 17.0%, slightly narrower than one 20% tick interval.
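The segment widths above can be turned into x-axis positions with a running sum. The following is a minimal sketch, assuming the three segments of each bar are stacked end to end in the order Win, Tie, Loss; the names `RESULTS` and `segment_bounds` are illustrative and do not come from the chart.

```python
# Percentages as read from the chart description (approximate values).
RESULTS = {
    "DeepSeek-V3-0324": (59.6, 23.5, 16.9),
    "Claude-Sonnet-4": (64.6, 18.8, 16.6),
    "ChatGLM3-6b-latest": (65.4, 17.6, 17.0),
}


def segment_bounds(win, tie, loss):
    """Return (start, end) x-positions of the win, tie, and loss segments.

    Assumption: the segments are stacked end to end in that order.
    """
    win_end = win
    tie_end = win_end + tie
    loss_end = tie_end + loss
    return [(0.0, win_end), (win_end, tie_end), (tie_end, loss_end)]


for opponent, shares in RESULTS.items():
    bounds = [(round(a, 1), round(b, 1)) for a, b in segment_bounds(*shares)]
    print(f"vs {opponent}: {bounds}")
```

If the stacking assumption holds, each bar should end at 100%, which is a quick consistency check on the values read from the chart.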

### Key Observations

* Kimi-K2-Instruct wins a majority of comparisons against each of the three models, with win rates between roughly 60% and 65%.

* The win rate is highest against ChatGLM3-6b-latest (65.4%) and Claude-Sonnet-4 (64.6%), and about 5 to 6 percentage points lower against DeepSeek-V3-0324 (59.6%).

* The loss rate is nearly constant across all three comparisons, ranging from 16.6% to 17.0%.

* The tie rate is lowest against ChatGLM3-6b-latest (17.6%) and highest against DeepSeek-V3-0324 (23.5%), with Claude-Sonnet-4 in between (18.8%).
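These comparisons can be reproduced from the three percentages per bar. The sketch below is illustrative only: it computes a net margin (win rate minus loss rate, a summary not shown on the chart) and finds the opponents with the smallest and largest tie rates. The names `RESULTS` and `net_margin` are hypothetical.

```python
# Percentages as read from the chart description (approximate values).
RESULTS = {
    "DeepSeek-V3-0324": (59.6, 23.5, 16.9),
    "Claude-Sonnet-4": (64.6, 18.8, 16.6),
    "ChatGLM3-6b-latest": (65.4, 17.6, 17.0),
}


def net_margin(win, tie, loss):
    """Win rate minus loss rate, in percentage points (ties are ignored)."""
    return win - loss


# Net margin per opponent, rounded to one decimal like the chart labels.
margins = {name: round(net_margin(*shares), 1) for name, shares in RESULTS.items()}

# Opponents with the smallest and largest tie segments (index 1 of each tuple).
lowest_tie = min(RESULTS, key=lambda name: RESULTS[name][1])
highest_tie = max(RESULTS, key=lambda name: RESULTS[name][1])

print(margins)
print("lowest tie rate:", lowest_tie)
print("highest tie rate:", highest_tie)
```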

### Interpretation

The data suggests that Kimi-K2-Instruct generally outperforms DeepSeek-V3-0324, Claude-Sonnet-4, and ChatGLM3-6b-latest in open-ended evaluation tasks. Win rates of roughly 60% to 65% in every comparison indicate a consistent advantage for Kimi-K2-Instruct. Loss rates of about 17% mean that Kimi-K2-Instruct is judged worse in roughly one in six comparisons; the remaining non-wins are ties. The chart does not show by how much a response wins or loses, so it says nothing about the size of the quality gap in individual comparisons. The variation in tie rates might reflect differences in the types of responses each model generates: for example, DeepSeek-V3-0324 may produce more responses that are difficult to categorize definitively as wins or losses. Because the data is aggregated, it may hide differences in performance across types of open-ended tasks. Further analysis, disaggregated by task type, could give a more detailed picture of the strengths and weaknesses of each model.
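One way to probe how Kimi-K2-Instruct fares when it does not win is to split the non-winning share of each bar into ties and losses. The following is a rough sketch using the approximate chart values; `loss_share_of_non_wins` is a hypothetical helper, not a metric reported on the chart.

```python
# Percentages as read from the chart description (approximate values).
RESULTS = {
    "DeepSeek-V3-0324": (59.6, 23.5, 16.9),
    "Claude-Sonnet-4": (64.6, 18.8, 16.6),
    "ChatGLM3-6b-latest": (65.4, 17.6, 17.0),
}


def loss_share_of_non_wins(win, tie, loss):
    """Fraction of non-winning comparisons (ties plus losses) that are losses."""
    return loss / (tie + loss)


for opponent, shares in RESULTS.items():
    share = loss_share_of_non_wins(*shares)
    print(f"vs {opponent}: {share:.1%} of non-wins are losses")
```

On these values, losses make up somewhat less than half of the non-winning comparisons for each opponent, with ties accounting for the rest.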