## Diagram: Knowledge Graph Reasoning with CoT and Coconut

### Overview

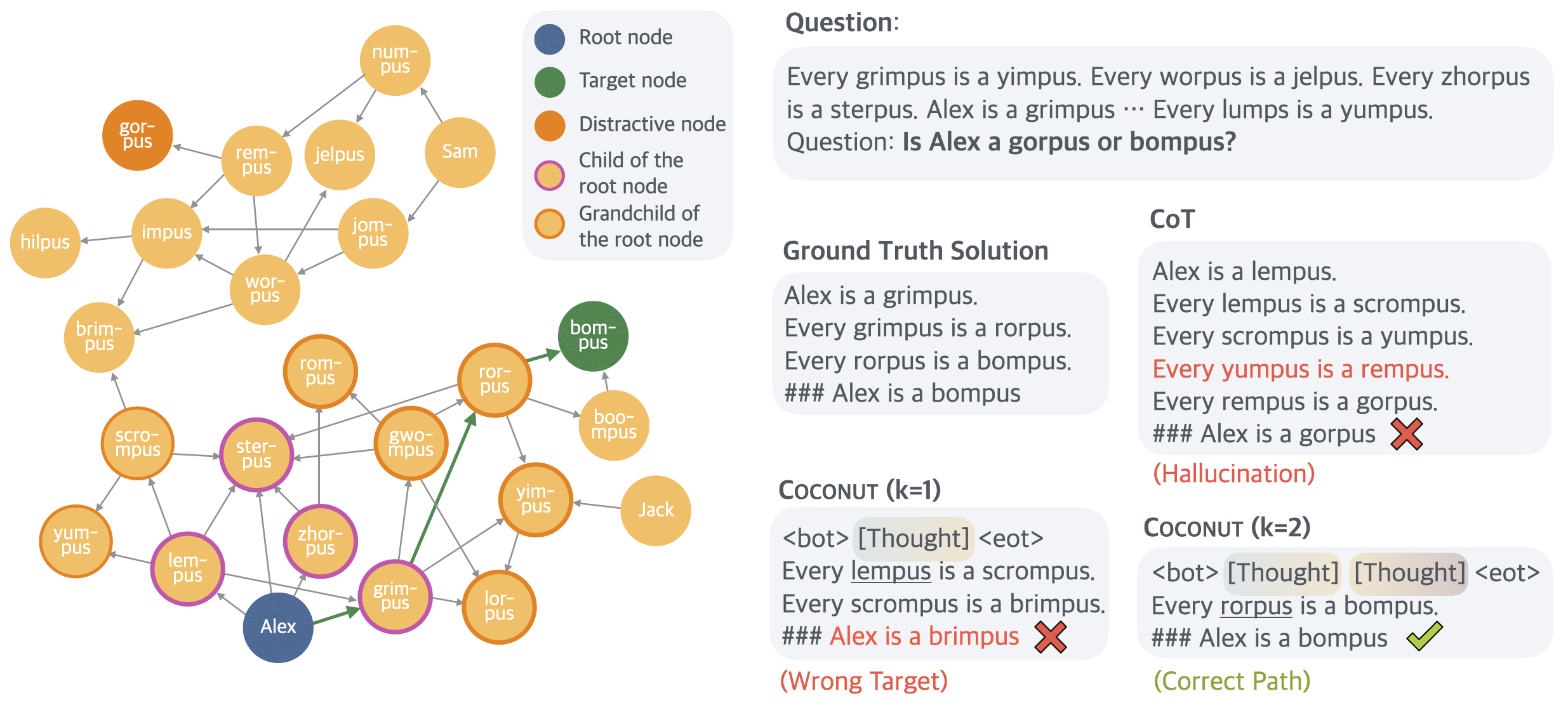

This diagram illustrates a knowledge graph reasoning task, comparing a "Ground Truth Solution" with the outputs of CoT (Chain of Thought) and Coconut (with k=1 and k=2). The graph encodes "is a" relationships between entities such as "Alex", "grimpus", and "worpus", and the task is to determine whether Alex is a "gorpus" or a "bompus". Each model output is annotated with its outcome: "Hallucination", "Wrong Target", or "Correct Path".

### Components

The diagram is divided into three main sections:

1. **Knowledge Graph (Left):** A network of nodes and edges representing relationships between entities.

2. **Question & Solution (Right-Top):** Textual question and the correct answer.

3. **Reasoning Outputs (Right-Bottom):** Outputs from different reasoning models (CoT, Coconut) with annotations indicating correctness.

**Legend (Top-Right):**

* **Root node:** Orange

* **Target node:** Yellow

* **Distractive node:** Red

* **Child of the root node:** Light Green

* **Grandchild of the root node:** Light Yellow

**Nodes in the Knowledge Graph:**

* Alex (Dark Blue)

* grimpus (Green)

* worpus (Green)

* zhorpus (Green)

* rempus (Green)

* jelpus (Green)

* numpus (Orange)

* gorpus (Green)

* hilpus (Green)

* brimpus (Green)

* scrompus (Green)

* empus (Green)

* yumpus (Green)

* rompus (Green)

* jorpus (Green)

* bompus (Green)

* boompus (Green)

* yimpus (Green)

* Jack (Orange)

* gwoimpus (Green)

* sterpus (Green)

* lorpus (Green)

* impus (Green)

* jompus (Green)

**Edges:** Represent relationships between nodes (not explicitly labeled, but visually indicated by lines connecting nodes).

### Detailed Analysis

**Question (Right-Top):**

"Every grimpus is a yimpus. Every worpus is a jelpus. Every zhorpus is a sterpus. Alex is a grimpus … Every lumps is a yumpus. Question: Is Alex a gorpus or bompus?"

**Ground Truth Solution (Right-Top):**

"Alex is a lempus.

Every lempus is a scrompus.

Every scrompus is a yumpus.

Every yumpus is a rempus.

\### Alex is a bompus"

**CoT (Right-Bottom):**

"Alex is a lempus.

Every lempus is a scrompus.

Every scrompus is a yumpus.

Every yumpus is a rempus.

Every rempus is a gorpus.

\### Alex is a gorpus ❌ (Hallucination)"

**Coconut (k=1) (Right-Bottom):**

"`<bot>` [Thought1] `<eot>`

Every lempus is a scrompus.

Every scrompus is a brimpus.

\### Alex is a brimpus ❌ (Wrong Target)"

**Coconut (k=2) (Right-Bottom):**

"`<bot>` [Thought1] [Thought1] `<eot>`

Every rorpus is a bompus.

\### Alex is a bompus ✅ (Correct Path)"
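The outputs above share a simple layout: optional latent-thought placeholders between `<bot>` and `<eot>`, then written reasoning steps, then the final answer after `###`. As a minimal sketch of how such a string could be split into those parts, the helper below (the name `parse_output` is hypothetical and not part of CoT or Coconut) counts the placeholders and separates steps from the answer. The sample string is the Coconut (k=2) output as transcribed here, without the ✅ annotation.

```python
import re

def parse_output(text):
    """Split a model output into (latent-thought count, steps, final answer)."""
    # Count [Thought...] placeholders between <bot> and <eot>, if present.
    latent = re.search(r"<bot>(.*?)<eot>", text, flags=re.DOTALL)
    k = len(re.findall(r"\[Thought\d*\]", latent.group(1))) if latent else 0
    body = text[latent.end():] if latent else text
    # Text before "###" is written reasoning; text after it is the answer.
    steps_part, _, answer = body.partition("###")
    steps = [line.strip() for line in steps_part.splitlines() if line.strip()]
    return k, steps, answer.strip()

# Coconut (k=2) output as transcribed from the figure.
coconut_k2 = (
    "<bot> [Thought1] [Thought1] <eot>\n"
    "Every rorpus is a bompus.\n"
    "### Alex is a bompus"
)
print(parse_output(coconut_k2))
```

A plain CoT output has no `<bot>`/`<eot>` span, so the same helper reports a count of 0 and returns every written step.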

**Knowledge Graph Analysis:**

* "Alex" is a dark blue node, connected to "grimpus" via an edge.

* "grimpus" is a green node, connected to several other nodes.

* "Sam" and "Jack" are orange nodes, designated as "Root nodes".

* The graph appears to be directed, with each edge denoting an implied "is a" relationship.

* The graph is relatively dense, with many interconnected nodes.
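If the "is a" edges are treated as a directed graph, the question reduces to checking which candidate node is reachable from "Alex". The sketch below illustrates this with a breadth-first search over a small toy graph. The first four facts come from the transcribed question and the `rorpus -> bompus` edge comes from the Coconut (k=2) output. The `grimpus -> rorpus` edge is an assumption added only so the toy graph contains a complete path, since the transcribed question is elided. The function name `reachable` is also hypothetical.

```python
from collections import deque

# Directed "is a" edges: each key "is a" every value in its list.
IS_A = {
    "Alex": ["grimpus"],              # from the question
    "grimpus": ["yimpus", "rorpus"],  # yimpus from the question; rorpus is ASSUMED
    "worpus": ["jelpus"],             # from the question
    "zhorpus": ["sterpus"],           # from the question
    "rorpus": ["bompus"],             # from the Coconut (k=2) output
}

def reachable(graph, start):
    """Return the set of nodes reachable from start, using breadth-first search."""
    seen = {start}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for nxt in graph.get(node, []):
            if nxt not in seen:
                seen.add(nxt)
                queue.append(nxt)
    return seen

candidates = ["gorpus", "bompus"]
answer = [c for c in candidates if c in reachable(IS_A, "Alex")]
print(answer)
```

On this toy graph only "bompus" is reachable from "Alex", which matches the answer given in the Ground Truth Solution. The full graph in the figure has many more nodes and distractor branches.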

### Key Observations

* CoT reaches the wrong answer: it concludes that Alex is a "gorpus", although the correct path leads to "bompus". The diagram labels this a hallucination.

* Coconut with k=1 fails to find the correct path and identifies Alex as a "brimpus", which is neither of the two candidate answers ("Wrong Target").

* Coconut with k=2 correctly identifies Alex as a "bompus" ("Correct Path").

* The diagram marks each incorrect output with a red "❌" and the correct output with a green "✅".

* The use of different colors for nodes helps to distinguish between root nodes, target nodes, and distractive nodes.
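The three outcome labels in the figure can be illustrated with a small checker. This is a sketch under stated assumptions, not the evaluation used to produce the figure. The labelling rules are assumed: a chain is a "Hallucination" if any step is not a known fact, a "Wrong Target" if every step is valid but the conclusion is not the expected answer, and a "Correct Path" otherwise. The `facts` set is a toy subset, and leaving out `("rempus", "gorpus")` is an illustrative choice, since the description does not say which CoT step is the hallucinated one. The function name `classify` is hypothetical.

```python
def classify(steps, conclusion, facts, target):
    """Label a reasoning chain given known (subject, object) "is a" facts."""
    # ASSUMED rule: any step that is not a known fact counts as a hallucination.
    if any(step not in facts for step in steps):
        return "Hallucination"
    # ASSUMED rule: valid steps that end at the wrong node are a wrong target.
    if conclusion != target:
        return "Wrong Target"
    return "Correct Path"

# Toy fact set, ASSUMED for illustration (not the full graph from the figure).
facts = {
    ("Alex", "lempus"), ("lempus", "scrompus"), ("scrompus", "yumpus"),
    ("yumpus", "rempus"), ("scrompus", "brimpus"), ("rorpus", "bompus"),
}

# Written steps from each model output, as (subject, object) pairs.
cot = [("Alex", "lempus"), ("lempus", "scrompus"), ("scrompus", "yumpus"),
       ("yumpus", "rempus"), ("rempus", "gorpus")]
coconut_k1 = [("lempus", "scrompus"), ("scrompus", "brimpus")]
coconut_k2 = [("rorpus", "bompus")]

print(classify(cot, "gorpus", facts, "bompus"))
print(classify(coconut_k1, "brimpus", facts, "bompus"))
print(classify(coconut_k2, "bompus", facts, "bompus"))
```

Under these assumptions the three chains receive the same labels as in the figure. A different fact set or stricter rules, such as requiring the chain to start at "Alex", would change the result.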

### Interpretation

This diagram shows how step-by-step reasoning over a knowledge graph can go wrong. CoT produces a fluent chain of "is a" steps but ends at "gorpus", an outcome the diagram labels a hallucination. Coconut with k=1 ends at "brimpus", which is neither of the two candidate answers, while Coconut with k=2 reaches "bompus", matching the answer in the Ground Truth Solution.

In the Coconut outputs, k corresponds to the number of [Thought] placeholders between `<bot>` and `<eot>`. The comparison therefore suggests that allowing more of this latent reasoning before the first written step can help the model select the correct path, although a single example illustrates the effect without measuring it. The Ground Truth Solution is the reference against which each output is judged, and the graph on the left makes it possible to trace which steps follow actual edges and which do not.