## State Transition Diagram: Reward Machines for Agents A1, A2, and A3

### Overview

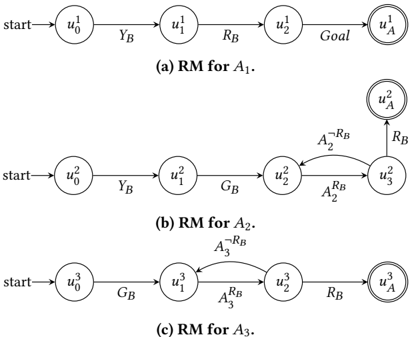

The image shows three finite state machine diagrams, each captioned "RM" (most likely "Reward Machine"), one per agent: A1, A2, and A3. Each diagram shows a sequence of states and the transitions between them, ending in a goal state. The diagrams are stacked vertically and labeled (a), (b), and (c) from top to bottom.

### Components/Axes

* **Diagram Type:** State Transition Diagram / Finite State Machine.

* **Common Elements:**

    * **States:** Represented by circles. Each state has a unique label (e.g., `u_0^1`).

    * **Start State:** Indicated by an arrow labeled "start" pointing to the initial state (`u_0^x`).

    * **Goal State:** Represented by a double-circled state labeled `u_A^x`.

    * **Transitions:** Represented by directed arrows between states. Each transition is labeled with a symbol (e.g., `Y_B`, `R_B`, `G_B`, `A_2^{R_B}`).

* **Specific Labels per Diagram:**

    * **(a) RM for A1:** States: `u_0^1`, `u_1^1`, `u_2^1`, `u_A^1`. Transition Labels: `Y_B`, `R_B`, `Goal`.

    * **(b) RM for A2:** States: `u_0^2`, `u_1^2`, `u_2^2`, `u_3^2`, `u_A^2`. Transition Labels: `Y_B`, `G_B`, `A_2^{~R_B}`, `A_2^{R_B}`, `R_B`.

    * **(c) RM for A3:** States: `u_0^3`, `u_1^3`, `u_2^3`, `u_A^3`. Transition Labels: `G_B`, `A_3^{~R_B}`, `A_3^{R_B}`, `R_B`.

### Detailed Analysis

**Diagram (a) RM for A1:**

* **Flow:** Linear and sequential.

* **Path:** `start` → `u_0^1` → (via `Y_B`) → `u_1^1` → (via `R_B`) → `u_2^1` → (via `Goal`) → `u_A^1` (Goal State).

* **Structure:** This is the simplest machine, with a single, non-branching path from start to goal.
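The linear path of diagram (a) can be sketched as a small lookup table. This is a minimal illustration, not an implementation taken from the figure's source: the names `A1_CHAIN` and `run_a1` are hypothetical, and the rule that a non-matching event leaves the state unchanged is an assumption, since the diagram draws no self-loops.

```python
# Hypothetical encoding of RM (a): each state maps to
# (label on its single outgoing arrow, next state).
A1_CHAIN = {
    "u_0^1": ("Y_B", "u_1^1"),
    "u_1^1": ("R_B", "u_2^1"),
    "u_2^1": ("Goal", "u_A^1"),
}
A1_START, A1_GOAL = "u_0^1", "u_A^1"


def run_a1(events):
    """Feed a sequence of event labels to the chain and return the final state."""
    state = A1_START
    for event in events:
        if state == A1_GOAL:
            break  # the goal state has no outgoing arrows in the diagram
        expected, next_state = A1_CHAIN[state]
        # Assumption: an event that does not match the outgoing label is ignored.
        if event == expected:
            state = next_state
    return state


print(run_a1(["Y_B", "R_B", "Goal"]))  # reaches the goal state u_A^1
print(run_a1(["R_B", "Y_B", "Goal"]))  # does not reach the goal: R_B arrives before Y_B
```

Under the stated assumption, only event sequences that contain `Y_B`, `R_B`, and `Goal` in that order reach `u_A^1`.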

**Diagram (b) RM for A2:**

* **Flow:** Contains a loop between two states.

* **Path:** `start` → `u_0^2` → (via `Y_B`) → `u_1^2` → (via `G_B`) → `u_2^2`.

* **Loop:** Between `u_2^2` and `u_3^2`. From `u_2^2`, transition `A_2^{R_B}` leads to `u_3^2`. From `u_3^2`, transition `A_2^{~R_B}` leads back to `u_2^2`.

* **Exit:** From `u_3^2`, transition `R_B` leads to the goal state `u_A^2`.

**Diagram (c) RM for A3:**

* **Flow:** Contains a loop between two states, as in (b), but the loop begins one transition earlier because there is no initial `Y_B` step.

* **Path:** `start` → `u_0^3` → (via `G_B`) → `u_1^3`.

* **Loop:** Between `u_1^3` and `u_2^3`. From `u_1^3`, transition `A_3^{R_B}` leads to `u_2^3`. From `u_2^3`, transition `A_3^{~R_B}` leads back to `u_1^3`.

* **Exit:** From `u_2^3`, transition `R_B` leads to the goal state `u_A^3`.
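The loops in diagrams (b) and (c) can be traced with a transition table keyed by `(state, label)`. The sketch below transcribes only the arrows listed above; the names `A2`, `A3`, and `trace` are hypothetical, and treating an unlisted `(state, label)` pair as "stay in the current state" is an assumption rather than something the figure shows.

```python
# Transition tables transcribed from diagrams (b) and (c):
# (current state, label) -> next state.
A2 = {
    ("u_0^2", "Y_B"): "u_1^2",
    ("u_1^2", "G_B"): "u_2^2",
    ("u_2^2", "A_2^{R_B}"): "u_3^2",   # enter the loop's second state
    ("u_3^2", "A_2^{~R_B}"): "u_2^2",  # fall back to the loop's first state
    ("u_3^2", "R_B"): "u_A^2",         # exit to the goal
}
A3 = {
    ("u_0^3", "G_B"): "u_1^3",
    ("u_1^3", "A_3^{R_B}"): "u_2^3",
    ("u_2^3", "A_3^{~R_B}"): "u_1^3",
    ("u_2^3", "R_B"): "u_A^3",
}


def trace(transitions, start, labels):
    """Return the list of states visited while consuming `labels`."""
    states = [start]
    for label in labels:
        current = states[-1]
        # Assumption: a label with no arrow from the current state is ignored.
        states.append(transitions.get((current, label), current))
    return states


# A2 goes around the loop once before exiting through R_B.
a2_path = trace(
    A2, "u_0^2",
    ["Y_B", "G_B", "A_2^{R_B}", "A_2^{~R_B}", "A_2^{R_B}", "R_B"],
)
print(a2_path)

# For A3, R_B has no effect from u_1^3; the exit arrow leaves only from u_2^3.
print(trace(A3, "u_0^3", ["G_B", "R_B"])[-1])
print(trace(A3, "u_0^3", ["G_B", "A_3^{R_B}", "R_B"])[-1])
```

Keying the table on `(state, label)` keeps the loop and the exit as separate entries, which makes it easy to see that `R_B` leads to the goal only from the second loop state.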

### Key Observations

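1. **Structural Progression:** Machine (a) is a linear chain of four states. Machine (b) adds a fifth state and a two-state loop. Machine (c) has the same loop and exit structure as (b) but only four states, because it omits the initial `Y_B` step, so its loop begins one transition earlier.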

2. **Symbol Consistency:** The transition labels `Y_B`, `G_B`, and `R_B` appear across multiple machines, suggesting they represent common events or conditions (e.g., "Yellow Button", "Green Button", "Red Button").

3. **Conditional Transitions:** The labels `A_2^{R_B}`, `A_2^{~R_B}`, `A_3^{R_B}`, and `A_3^{~R_B}` appear to be agent-specific conditions: the subscript matches the agent that owns the machine (A2 or A3). The superscript `R_B` likely means "if Red Button is pressed/active," while `~R_B` means "if Red Button is NOT pressed/active." Together they form a two-state waiting loop that the machine can cycle through any number of times before exiting.

4. **Goal State Notation:** The goal state is consistently denoted by a double circle and the label `u_A^x`, where `x` corresponds to the agent number.
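The structural observations can be checked mechanically by treating each machine as a directed graph. The following sketch uses a standard depth-first search for cycle detection; the `EDGES` dictionary is a hand transcription of the arrows described in this document (labels omitted), and the names `EDGES` and `has_cycle` are hypothetical.

```python
# Arrows of each machine as (source, target) pairs, labels omitted.
EDGES = {
    "A1": [("u_0^1", "u_1^1"), ("u_1^1", "u_2^1"), ("u_2^1", "u_A^1")],
    "A2": [("u_0^2", "u_1^2"), ("u_1^2", "u_2^2"), ("u_2^2", "u_3^2"),
           ("u_3^2", "u_2^2"), ("u_3^2", "u_A^2")],
    "A3": [("u_0^3", "u_1^3"), ("u_1^3", "u_2^3"),
           ("u_2^3", "u_1^3"), ("u_2^3", "u_A^3")],
}


def has_cycle(edges):
    """Depth-first search; a cycle exists if we reach a node still on the stack."""
    graph = {}
    for src, dst in edges:
        graph.setdefault(src, []).append(dst)
        graph.setdefault(dst, [])
    UNSEEN, ON_STACK, DONE = 0, 1, 2
    status = {node: UNSEEN for node in graph}

    def visit(node):
        status[node] = ON_STACK
        for nxt in graph[node]:
            if status[nxt] == ON_STACK:
                return True  # back edge found
            if status[nxt] == UNSEEN and visit(nxt):
                return True
        status[node] = DONE
        return False

    return any(status[n] == UNSEEN and visit(n) for n in graph)


for name, edges in EDGES.items():
    states = {s for edge in edges for s in edge}
    print(name, "states:", len(states), "has loop:", has_cycle(edges))
```

On this transcription, A1 has four states and no loop, A2 has five states with a loop, and A3 has four states with a loop, which matches the first and third observations.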

### Interpretation

These diagrams formally specify the task or reward structure for three different agents in a multi-agent or sequential decision-making environment. They define the permissible sequences of events (button presses) that each agent must experience to achieve its goal.

* **Agent A1 (a):** Has a straightforward, fixed sequence: Yellow, then Red, then "Goal." The diagram shows no branches or loops, so this is the only path to the goal state.

* **Agent A2 (b):** Must first experience Yellow, then Green. After Green, it enters a loop in which `A_2^{R_B}` moves it forward to `u_3^2` and `A_2^{~R_B}` moves it back to `u_2^2`. The final `R_B` transition to the goal leaves only from `u_3^2`, so the Red Button event completes the task only while `A_2^{R_B}` was the most recent loop event. The loop tracks that single condition; with two states it cannot count Red Button interactions.

* **Agent A3 (c):** Must first experience Green. It then enters the same kind of loop (`A_3^{R_B}` / `A_3^{~R_B}`) before its final transition to the goal, which is also triggered by `R_B` and leaves only from `u_2^3`. The key difference from A2 is that A3 has no initial Yellow step: its sequence starts with Green, so the loop begins one transition earlier.

**Underlying Logic:** The machines likely model a scenario where agents must coordinate or follow specific protocols involving shared resources (the buttons). The loops represent states where an agent is waiting for a specific condition (its Red Button condition) to hold before it can proceed. The differences between A2 and A3 show how the same conditional logic (the Red Button loop) can be embedded within different overall task sequences. Reward machines are a finite-state formalism used in reinforcement learning to specify event-driven tasks of this kind.