## Line Chart: Model Size vs. Center Accuracy

### Overview

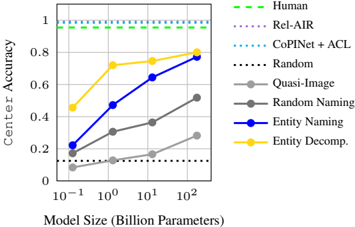

The image is a line chart comparing the "Center Accuracy" performance of various computational models and human baselines as a function of model size, measured in billions of parameters. The chart uses a logarithmic scale for the x-axis. The primary purpose is to illustrate how scaling model size impacts accuracy for different approaches, with several baseline comparisons.

### Components/Axes

* **Chart Type:** Line chart with multiple series.

* **X-Axis:**

    * **Label:** "Model Size (Billion Parameters)"

    * **Scale:** Logarithmic (base 10).

    * **Markers/Ticks:** 10⁻¹ (0.1), 10⁰ (1), 10¹ (10), 10² (100).

* **Y-Axis:**

    * **Label:** "Center Accuracy"

    * **Scale:** Linear, from 0 to 1.

    * **Markers/Ticks:** 0, 0.2, 0.4, 0.6, 0.8, 1.0.

* **Legend:** Located in the top-right quadrant of the chart area. It contains 8 entries, each with a distinct line style/color and a label.

    1. **Human:** Green dashed line (`--`).

    2. **Rel-AIR:** Purple dotted line (`:`).

    3. **CoPINet + ACL:** Cyan dotted line (`:`).

    4. **Random:** Black dotted line (`:`).

    5. **Quasi-Image:** Gray solid line with circle markers.

    6. **Random Naming:** Gray solid line with circle markers (lighter shade than Quasi-Image).

    7. **Entity Naming:** Blue solid line with circle markers.

    8. **Entity Decomp.:** Yellow solid line with circle markers.

### Detailed Analysis

**Data Series Trends and Approximate Points:**

1. **Human (Green dashed line):**

    * **Trend:** Perfectly horizontal, constant line.

    * **Value:** ~1.0 (or 100% accuracy) across all model sizes. This represents the human performance ceiling.

2. **Rel-AIR (Purple dotted line):**

    * **Trend:** Perfectly horizontal, constant line.

    * **Value:** ~0.95 across all model sizes.

3. **CoPINet + ACL (Cyan dotted line):**

    * **Trend:** Perfectly horizontal, constant line.

    * **Value:** ~0.92 across all model sizes.

4. **Random (Black dotted line):**

    * **Trend:** Perfectly horizontal, constant line.

    * **Value:** ~0.12 across all model sizes. This represents a random guess baseline.

5. **Quasi-Image (Gray solid line, darker):**

    * **Trend:** Slopes upward from left to right, showing improvement with scale.

    * **Approximate Points:**

        * At 0.1B params: ~0.08

        * At 1B params: ~0.15

        * At 10B params: ~0.22

        * At 100B params: ~0.28

6. **Random Naming (Gray solid line, lighter):**

    * **Trend:** Slopes upward from left to right, but remains below all other non-random models.

    * **Approximate Points:**

        * At 0.1B params: ~0.05

        * At 1B params: ~0.10

        * At 10B params: ~0.18

        * At 100B params: ~0.25

7. **Entity Naming (Blue solid line):**

    * **Trend:** Strong upward slope, showing significant improvement with scale. It surpasses the "Quasi-Image" and "Random Naming" models.

    * **Approximate Points:**

        * At 0.1B params: ~0.20

        * At 1B params: ~0.48

        * At 10B params: ~0.65

        * At 100B params: ~0.78

8. **Entity Decomp. (Yellow solid line):**

    * **Trend:** Strong upward slope, similar to "Entity Naming" but consistently higher. It is the best-performing scalable model shown.

    * **Approximate Points:**

        * At 0.1B params: ~0.45

        * At 1B params: ~0.72

        * At 10B params: ~0.75

        * At 100B params: ~0.80
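
The approximate points listed above can be collected into a small table and differenced to see how much accuracy each series gains per tenfold increase in model size. This is a minimal sketch: the values are the visual estimates from this description, not exact data, and the names `SIZES_B`, `ACCURACY`, and `gains_per_decade` are illustrative.

```python
# Approximate values read off the chart; visual estimates, not exact data.
SIZES_B = [0.1, 1, 10, 100]  # model size in billions of parameters
ACCURACY = {
    "Quasi-Image":    [0.08, 0.15, 0.22, 0.28],
    "Random Naming":  [0.05, 0.10, 0.18, 0.25],
    "Entity Naming":  [0.20, 0.48, 0.65, 0.78],
    "Entity Decomp.": [0.45, 0.72, 0.75, 0.80],
}


def gains_per_decade(values):
    """Accuracy gained for each 10x step in model size (adjacent differences)."""
    return [round(b - a, 2) for a, b in zip(values, values[1:])]


for name, values in ACCURACY.items():
    print(f"{name:15s} {gains_per_decade(values)}")
```

With these estimates, the two gray series gain a fairly steady 0.05 to 0.08 per decade, while the two entity-based series gain most between 0.1B and 1B.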

### Key Observations

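1. **Performance Hierarchy:** At 10B and 100B parameters the ordering is Human > Rel-AIR > CoPINet+ACL > Entity Decomp. > Entity Naming > Quasi-Image > Random Naming > Random. At smaller sizes the bottom of the ranking changes: at 0.1B both "Quasi-Image" (~0.08) and "Random Naming" (~0.05) fall below the "Random" baseline (~0.12), and at 1B "Random Naming" (~0.10) is still below it.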

2. **Scaling Laws:** The four models with gray, blue, and yellow lines ("Quasi-Image", "Random Naming", "Entity Naming", "Entity Decomp.") all gain Center Accuracy as model size (parameters) increases. On this log-linear plot the two gray lines are roughly linear, gaining about 0.05 to 0.08 per tenfold increase in parameters, while the blue and yellow lines are concave, with their largest gains at the small end of the scale.

3. **Diminishing Returns:** Both entity-based lines flatten as size grows. "Entity Naming" gains ~0.28 from 0.1B to 1B, ~0.17 from 1B to 10B, and ~0.13 from 10B to 100B. "Entity Decomp." gains ~0.27 from 0.1B to 1B but only ~0.03 and ~0.05 over the next two decades, suggesting diminishing returns beyond roughly 1B parameters.

4. **Baselines:** The "Human", "Rel-AIR", "CoPINet+ACL", and "Random" lines are flat, indicating their performance is independent of the model size being evaluated on the x-axis. They serve as fixed reference points.

5. **Gap to Human Performance:** Even the best-scaling model ("Entity Decomp.") at 100B parameters (~0.80 accuracy) remains significantly below the human baseline (~1.0).
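
The ordering claimed in the observations can be checked at each model size by sorting the scaled series together with the fixed baselines. The following is a hedged sketch built on the approximate values from this description; `SCALED`, `FIXED`, and `ranking_at` are hypothetical names introduced for illustration.

```python
# Approximate values read off the chart; visual estimates, not exact data.
SIZES_B = [0.1, 1, 10, 100]  # billions of parameters
SCALED = {
    "Quasi-Image":    [0.08, 0.15, 0.22, 0.28],
    "Random Naming":  [0.05, 0.10, 0.18, 0.25],
    "Entity Naming":  [0.20, 0.48, 0.65, 0.78],
    "Entity Decomp.": [0.45, 0.72, 0.75, 0.80],
}
# Flat reference lines: the same value at every model size.
FIXED = {"Human": 1.00, "Rel-AIR": 0.95, "CoPINet + ACL": 0.92, "Random": 0.12}


def ranking_at(index):
    """Return all series names sorted from highest to lowest accuracy."""
    scores = dict(FIXED)
    scores.update({name: vals[index] for name, vals in SCALED.items()})
    return sorted(scores, key=scores.get, reverse=True)


for i, size in enumerate(SIZES_B):
    print(f"{size:>5}B: " + " > ".join(ranking_at(i)))
```

Under these estimates the top five positions are the same at every size, and only the order of "Random", "Quasi-Image", and "Random Naming" at the bottom depends on scale.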

### Interpretation

This chart is a comparative analysis of model performance on a task measured by "Center Accuracy." The data suggests several key insights:

* **The Power of Scale:** For the class of models represented by the solid lines (Quasi-Image, Random Naming, Entity Naming, Entity Decomp.), computational scale (model size) is a primary driver of performance. This aligns with modern deep learning scaling laws.

* **Architectural/Methodological Superiority:** The "Entity Decomp." and "Entity Naming" approaches are more effective for this task than "Quasi-Image" or "Random Naming," as they achieve higher accuracy at every model size. Their lead over the other two widens with scale (for example, "Entity Naming" leads "Quasi-Image" by ~0.12 at 0.1B and by ~0.50 at 100B), which suggests these approaches are better able to exploit additional parameters. The gap between the two entity-based approaches themselves narrows, from ~0.25 at 0.1B to ~0.02 at 100B.

* **The Ceiling of Current Methods:** The flat lines for "Rel-AIR" and "CoPINet+ACL" represent specialized, likely non-scalable or fixed-size models that perform very well and are not matched by even the largest "Entity Decomp." model (~0.80 versus ~0.92 to ~0.95). The fact that no model reaches the "Human" line indicates this task remains challenging, with a clear gap between machine and human-level performance.

* **The "Random" Baseline:** The "Random" line at ~0.12 provides a crucial floor. A model performing at or below this line (such as "Random Naming" at 0.1B and 1B, or "Quasi-Image" at 0.1B) shows no evidence of learning meaningful patterns. The upward trend of the other models shows they move further above chance as they scale.
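
The gap statements in the bullets above reduce to simple differences between series. Here is a minimal sketch using the approximate values from this description; `ACC`, `BASELINES`, and `gap` are illustrative names, and the numbers are visual estimates rather than exact data.

```python
# Approximate values read off the chart at 0.1B, 1B, 10B, and 100B parameters.
ACC = {
    "Quasi-Image":    [0.08, 0.15, 0.22, 0.28],
    "Entity Naming":  [0.20, 0.48, 0.65, 0.78],
    "Entity Decomp.": [0.45, 0.72, 0.75, 0.80],
}
BASELINES = {"Human": 1.00, "Rel-AIR": 0.95, "CoPINet + ACL": 0.92}


def gap(a, b):
    """Accuracy of series a minus series b at each model size."""
    return [round(x - y, 2) for x, y in zip(ACC[a], ACC[b])]


print("Entity Naming - Quasi-Image:   ", gap("Entity Naming", "Quasi-Image"))
print("Entity Decomp. - Entity Naming:", gap("Entity Decomp.", "Entity Naming"))

# Remaining distance from the largest Entity Decomp. model to each baseline.
best = ACC["Entity Decomp."][-1]
for name, value in BASELINES.items():
    print(f"{name}: {round(value - best, 2)}")
```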

In essence, the chart suggests that for this specific task, scaling up "Entity Decomp." and "Entity Naming" models is a promising path toward higher accuracy, but the specialized models and human performance still set the standard. Knowing which task "Center Accuracy" refers to would help in judging the significance of these performance gaps.