## Diagram: Document Generation and Augmentation Process

### Overview

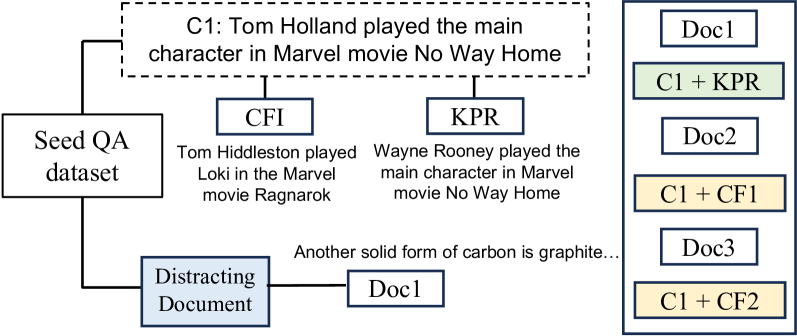

The image is a flowchart illustrating a process for generating and augmenting documents, likely for a machine learning or natural language processing task. It shows how a "Seed QA dataset" yields a core claim (C1), which is then paired with transformed variants of itself and interleaved with distracting documents. Boxes, connecting lines, and text labels depict the relationships and data flow.

### Components/Axes

The diagram is composed of several labeled boxes connected by lines, indicating relationships or processes. There are no traditional chart axes. The key components are:

1. **Main Claim (C1):** A dashed-line box at the top-center containing the text: "C1: Tom Holland played the main character in Marvel movie No Way Home".

2. **Source Dataset:** A box on the left labeled "Seed QA dataset".

3. **Derived Documents/Transformations:**

* A box labeled "CFI" connected to C1 (apparently the same variant that appears as "CF1" in the output stack), with descriptive text below: "Tom Hiddleston played Loki in the Marvel movie Ragnarok".

* A box labeled "KPR" connected to C1, with descriptive text below: "Wayne Rooney played the main character in Marvel movie No Way Home".

4. **Distracting Element:** A box labeled "Distracting Document" connected to the "Seed QA dataset". It is further connected to a box labeled "Doc1", which has descriptive text: "Another solid form of carbon is graphite...".

5. **Output Document Stack:** A vertical column on the far right, enclosed in a blue border, listing final document outputs. The boxes are:

* "Doc1" (white background)

* "C1 + KPR" (light green background)

* "Doc2" (white background)

* "C1 + CF1" (light yellow background)

* "Doc3" (white background)

* "C1 + CF2" (light yellow background)

### Detailed Analysis

The diagram maps a flow from a source to various outputs:

* **Flow from Seed QA Dataset:** The "Seed QA dataset" has two primary outputs:

1. It generates the core claim "C1".

2. It produces a "Distracting Document", which is exemplified by "Doc1" containing unrelated factual text about carbon.

* **Flow from Core Claim (C1):** The claim C1 is used to generate two related but distinct documents:

* **CFI:** This appears to be a "Counterfactual" or similar transformation, changing the actor, role, and movie while keeping the sentence structure ("[actor] played [role] in the Marvel movie [title]").

* **KPR:** This appears to be a "Knowledge Perturbation" or similar, changing the subject to an implausible one (Wayne Rooney) while keeping the original movie and claim structure.

* **Final Document Composition:** The right-hand column shows the final set of documents, which includes:

* Original documents from the distracting stream ("Doc1", "Doc2", "Doc3").

* Augmented documents that combine the core claim (C1) with the generated variants ("C1 + KPR", "C1 + CF1", "C1 + CF2"). The color coding (green for KPR, yellow for CF variants) visually groups these augmented documents.
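
The composition described above can be sketched in a few lines of Python. This is only an illustration of the ordering shown in the right-hand column, not the diagram authors' implementation: the `Document` class, the `build_stack` function, and the choice to join C1 and a variant with a period are all assumptions, and the bracketed placeholder strings stand in for text the diagram does not show.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Document:
    label: str  # label as it appears in the diagram's output column
    text: str
    kind: str   # "distractor", "kpr", or "cf"


def build_stack(claim, kpr, counterfactuals, distractors):
    """Interleave distractors with claim+variant documents (hypothetical helper)."""
    # Pair the core claim with each variant; plain concatenation is an assumption.
    augmented = [Document("C1 + KPR", f"{claim}. {kpr}.", "kpr")]
    for i, cf in enumerate(counterfactuals, start=1):
        augmented.append(Document(f"C1 + CF{i}", f"{claim}. {cf}.", "cf"))

    # Place one distractor before each augmented document, as in the diagram.
    stack = []
    for i, aug in enumerate(augmented):
        if i < len(distractors):
            stack.append(Document(f"Doc{i + 1}", distractors[i], "distractor"))
        stack.append(aug)
    return stack


claim = "Tom Holland played the main character in Marvel movie No Way Home"
kpr = "Wayne Rooney played the main character in Marvel movie No Way Home"
counterfactuals = [
    "Tom Hiddleston played Loki in the Marvel movie Ragnarok",
    "<second counterfactual, not shown in the diagram>",
]
distractors = [
    "Another solid form of carbon is graphite...",
    "<Doc2 text, not shown in the diagram>",
    "<Doc3 text, not shown in the diagram>",
]

stack = build_stack(claim, kpr, counterfactuals, distractors)
for doc in stack:
    print(f"{doc.label:10s} [{doc.kind}]")
```

Keeping the `kind` field separate from the label mirrors the diagram's color coding, so downstream code can group documents by augmentation type without parsing label strings.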

### Key Observations

1. **Purpose-Driven Generation:** The diagram explicitly shows the creation of specific types of documents (CFI, KPR) from a seed claim, suggesting a controlled data augmentation or adversarial example generation process.

2. **Inclusion of Noise:** The "Distracting Document" stream (Doc1, Doc2, Doc3) appears to be intentionally included, likely to test a model's ability to distinguish relevant from irrelevant information.

3. **Combinatorial Output:** The final output is not just the original or the augmented documents in isolation, but specific combinations (e.g., "C1 + KPR"), indicating the task may involve reasoning over paired or concatenated texts.

4. **Visual Grouping:** The use of background color in the final document stack (green for the KPR combination, yellow for the CF1 and CF2 combinations) provides a clear visual cue to distinguish between the types of augmentations applied to the core claim.

### Interpretation

This diagram outlines a methodology for constructing a **challenge dataset** for evaluating AI systems, particularly in tasks like question answering, fact verification, or reading comprehension.

* **What it demonstrates:** It shows a pipeline for creating a test set that includes:

* A **core factual claim** (C1).

* **Counterfactual or perturbed versions** of that claim (CFI, KPR) to test robustness.

* **Irrelevant "distractor" documents** to test a model's ability to filter noise.

* **Combined documents** that force the model to integrate or choose between conflicting or complementary information.

* **Relationships:** The "Seed QA dataset" is the root. The core claim (C1) is the central subject of manipulation. The CFI and KPR are systematic transformations of C1. The distracting documents are an independent stream. The final column represents the actual input samples presented to an AI model.

* **Notable Implications:** The specific examples used (actors, movies, football players, carbon) suggest the dataset is designed to test **world knowledge** and **commonsense reasoning**. The structure implies the evaluation would measure a model's ability to:

1. Identify the correct fact from a set of similar but incorrect statements.

2. Ignore irrelevant distractor documents.

3. Handle combinations of correct and incorrect information.
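
One way such an evaluation might be scored is sketched below, under the assumption that a model returns a free-text answer and that simple substring matching is good enough to tell whether it follows the original claim or the perturbed one. The function names `classify_answer` and `summarize` and the four outcome labels are hypothetical; the diagram does not specify any scoring procedure.

```python
def classify_answer(answer: str, gold: str, perturbed: str) -> str:
    """Label an answer by which entity it mentions (hypothetical scoring rule)."""
    text = answer.casefold()
    has_gold = gold.casefold() in text
    has_perturbed = perturbed.casefold() in text
    if has_gold and has_perturbed:
        return "both"
    if has_gold:
        return "gold"
    if has_perturbed:
        return "perturbed"
    return "neither"


def summarize(answers, gold, perturbed):
    """Count how many answers fall into each outcome."""
    counts = {"gold": 0, "perturbed": 0, "both": 0, "neither": 0}
    for answer in answers:
        counts[classify_answer(answer, gold, perturbed)] += 1
    return counts


# Made-up answers, for illustration only.
answers = [
    "It was Tom Holland.",
    "Wayne Rooney played the main character.",
    "Graphite is a solid form of carbon.",
]
print(summarize(answers, gold="Tom Holland", perturbed="Wayne Rooney"))
```

A "perturbed" outcome would indicate the model followed the KPR text, while "neither" may indicate it was drawn to a distractor or a counterfactual document.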

**Language Note:** All text in the image is in English.