## Bar Chart: Multimodal Troubleshooting Virology

### Overview

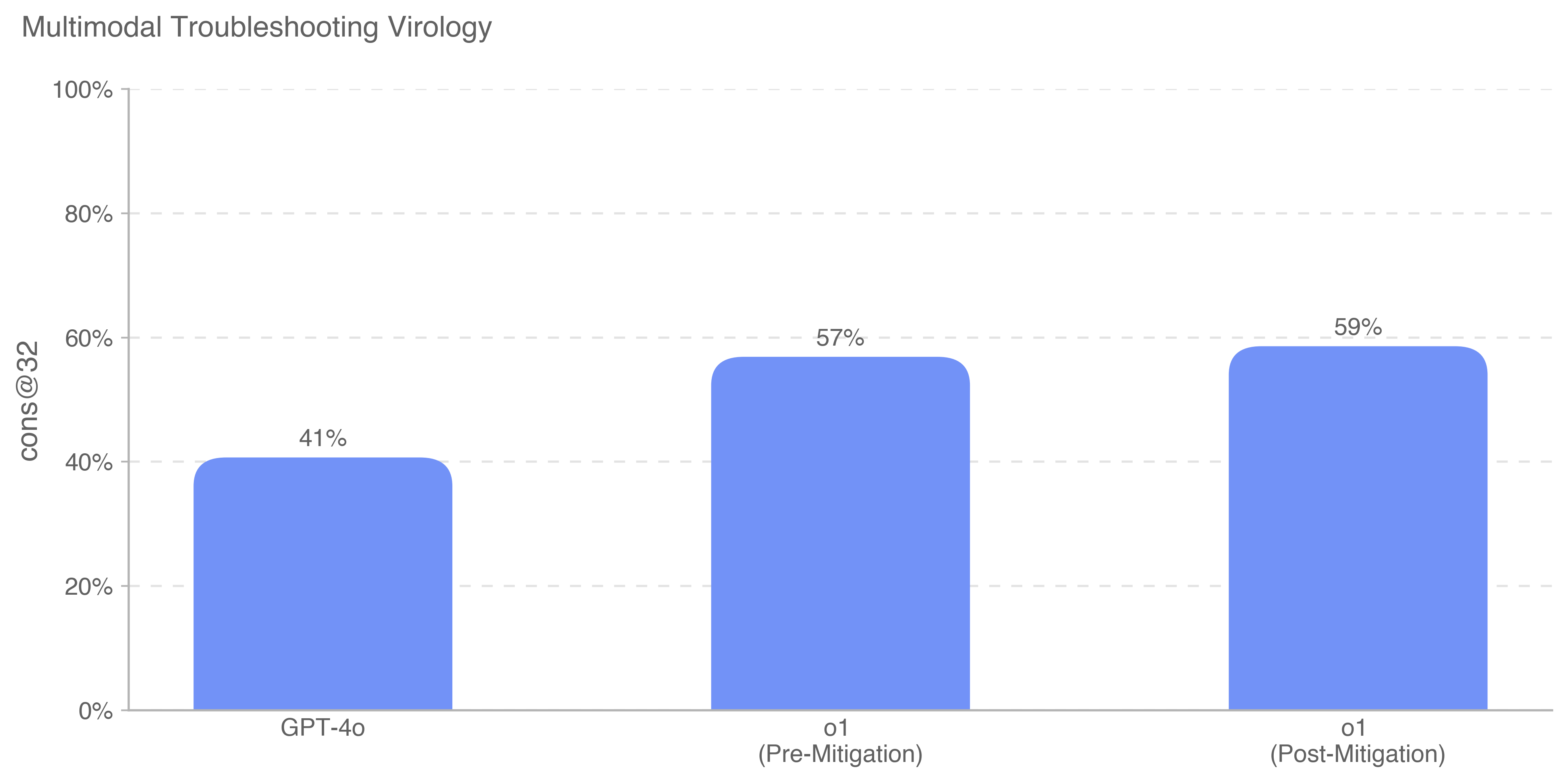

This is a vertical bar chart comparing three AI models or model states, GPT-4o, o1 (Pre-Mitigation), and o1 (Post-Mitigation), on a task labeled "Multimodal Troubleshooting Virology." The performance metric is "cons@32," presented as a percentage. Scores rise from left to right: 41%, 57%, and 59%.

### Components/Axes

* **Chart Title:** "Multimodal Troubleshooting Virology" (located at the top-left of the chart area).

* **Y-Axis:**

    * **Label:** "cons@32" (rotated vertically on the left side).

    * **Scale:** Linear scale from 0% to 100%, with major tick marks and gridlines at 20% intervals (0%, 20%, 40%, 60%, 80%, 100%).

* **X-Axis:**

    * **Categories:** Three distinct bars representing different models or conditions.

    * **Category Labels (from left to right):**

        1. "GPT-4o"

        2. "o1 (Pre-Mitigation)"

        3. "o1 (Post-Mitigation)"

* **Data Series:** A single data series represented by solid blue bars. There is no legend, as the categories are directly labeled on the x-axis.

* **Data Labels:** The exact percentage value is displayed above each bar.

### Detailed Analysis

The chart presents the following specific data points:

1. **GPT-4o:**

    * **Position:** Leftmost bar.

    * **Value:** 41% (as labeled above the bar).

    * **Visual Trend:** This is the shortest bar, indicating the lowest performance among the three.

2. **o1 (Pre-Mitigation):**

    * **Position:** Center bar.

    * **Value:** 57% (as labeled above the bar).

    * **Visual Trend:** This bar is clearly taller than the GPT-4o bar, a gain of 16 percentage points.

3. **o1 (Post-Mitigation):**

    * **Position:** Rightmost bar.

    * **Value:** 59% (as labeled above the bar).

    * **Visual Trend:** This is the tallest bar, but it is only 2 percentage points above the "Pre-Mitigation" bar, so the visual difference between the two "o1" bars is small.

**Trend Verification:** The visual trend across the three bars is a stepwise increase from left to right. The jump from the first to the second bar is large, while the increase from the second to the third is minimal.
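
The stepwise increase can be checked with a few lines of arithmetic. The sketch below is illustrative only: the `scores` dictionary and the `stepwise_gains` helper are names introduced here, and the values are the data labels read from the chart.

```python
# Values read from the data labels above each bar (percent, cons@32).
scores = {
    "GPT-4o": 41,
    "o1 (Pre-Mitigation)": 57,
    "o1 (Post-Mitigation)": 59,
}


def stepwise_gains(values):
    """Return (from_label, to_label, gain_in_points) for each adjacent pair of bars."""
    labels = list(values)  # dicts preserve insertion order, i.e. left to right
    return [(a, b, values[b] - values[a]) for a, b in zip(labels, labels[1:])]


gains = stepwise_gains(scores)
for prev, curr, gain in gains:
    print(f"{prev} -> {curr}: {gain:+d} percentage points")
```

The first step is a gain of 16 percentage points and the second a gain of 2, which matches the large-then-minimal pattern described above.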

### Key Observations

* **Performance Hierarchy:** The "o1" model, in both states, outperforms "GPT-4o" on this specific virology troubleshooting task, by 16 percentage points before mitigation and 18 after.

* **Mitigation Impact:** The application of "Mitigation" to the "o1" model resulted in a very small performance gain of only 2 percentage points (from 57% to 59%).

* **Metric:** Performance is reported as "cons@32." The chart does not define the metric. The name most plausibly denotes consensus over 32 samples, where the model answers each question 32 times and the most frequent answer is the one scored, but this reading is an inference rather than something the chart states.
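
Since the chart leaves "cons@32" undefined, the following is a minimal sketch of one plausible reading, assumed here rather than confirmed by the source: majority-vote consensus over k sampled answers per question. The function names and the toy data (k = 4 instead of 32) are hypothetical and exist only to illustrate the calculation.

```python
from collections import Counter


def consensus_answer(samples):
    """Return the most frequent answer; ties go to the answer seen first."""
    return Counter(samples).most_common(1)[0][0]


def cons_at_k(sampled_answers, correct_answers):
    """Fraction of questions whose consensus answer matches the answer key.

    ASSUMPTION: this is one plausible interpretation of "cons@k",
    not a definition taken from the chart.
    """
    hits = sum(
        consensus_answer(samples) == key
        for samples, key in zip(sampled_answers, correct_answers)
    )
    return hits / len(correct_answers)


# Hypothetical toy data: 3 questions, 4 sampled answers each.
sampled = [
    ["A", "A", "B", "A"],  # consensus "A"
    ["C", "D", "D", "D"],  # consensus "D"
    ["B", "C", "C", "A"],  # consensus "C"
]
answer_key = ["A", "C", "C"]

score = cons_at_k(sampled, answer_key)
print(f"cons@4 = {score:.0%}")
```

Under this reading, a single unlucky sample does not change the score for a question as long as the majority of samples agree on the correct answer, which is why consensus metrics tend to be more stable than single-sample accuracy.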

### Interpretation

The data suggests that "o1" is more capable than "GPT-4o" on the complex, multimodal task of troubleshooting in virology, as measured by the "cons@32" metric. The primary finding is the 16 to 18 percentage point gap between the model generations (GPT-4o vs. o1). A single benchmark cannot attribute that gap to architecture, training, or any other specific cause.

The "Mitigation" step applied to "o1" appears to have a negligible positive effect on this particular performance metric. This could imply several things: the mitigation was targeted at a different problem (e.g., safety, bias, or a different failure mode) not captured by "cons@32"; the mitigation left the capability measured by this task largely unchanged; or the mitigation process involved a trade-off that slightly improved one aspect while minimally affecting this specific score. Because no uncertainty estimate is given, the 2-point difference may also fall within run-to-run noise. The chart alone does not reveal the nature of the "Mitigation," only its measured outcome on this benchmark.