EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

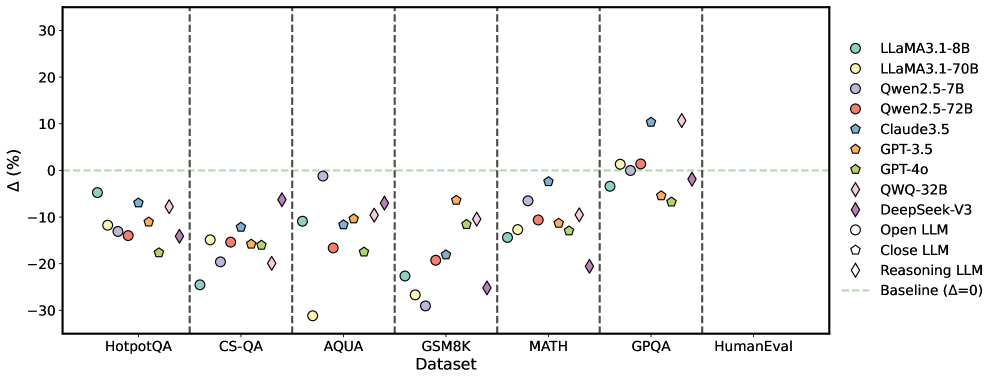

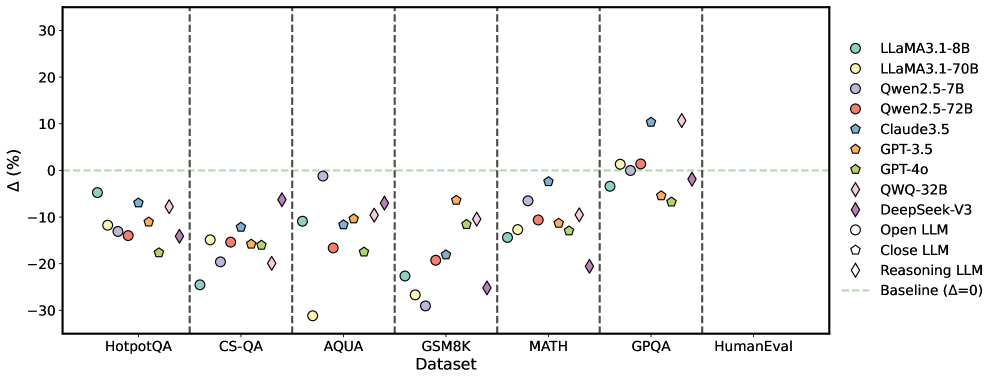

## Scatter Plot: Model Performance Across Datasets

### Overview

The image is a scatter plot comparing the performance of various Large Language Models (LLMs) across different datasets. The y-axis represents the percentage difference (Δ (%)) from a baseline, and the x-axis represents the datasets. Each LLM is represented by a unique color and marker.

### Components/Axes

* **X-Axis:** "Dataset" with categories: HotpotQA, CS-QA, AQUA, GSM8K, MATH, GPQA, HumanEval. Vertical dashed lines separate each dataset.

* **Y-Axis:** "Δ (%)" ranging from -30 to 30, with tick marks at -30, -20, -10, 0, 10, 20, and 30.

* **Legend (Top-Right):**
    * Light Blue Circle: LLaMA3.1-8B
    * Yellow Circle: LLaMA3.1-70B
    * Light Purple Circle: Qwen2.5-7B
    * Red Circle: Qwen2.5-72B
    * Dark Blue Pentagon: Claude3.5
    * Orange Pentagon: GPT-3.5
    * Green Pentagon: GPT-4o
    * Yellow Diamond: QWQ-32B
    * Dark Purple Diamond: DeepSeek-V3
    * White Circle: Open LLM
    * White Pentagon: Close LLM
    * White Diamond: Reasoning LLM
    * Light Green Dashed Line: Baseline (Δ=0)

* **Baseline:** A horizontal dashed light green line at Δ (%) = 0.

### Detailed Analysis

Here's a breakdown of the approximate performance of each model on each dataset:

* **HotpotQA:**
    * LLaMA3.1-8B (Light Blue Circle): Approximately -5%
    * LLaMA3.1-70B (Yellow Circle): Approximately -12%
    * Qwen2.5-7B (Light Purple Circle): Approximately -13%
    * Qwen2.5-72B (Red Circle): Approximately -14%
    * Claude3.5 (Dark Blue Pentagon): Approximately -7%
    * GPT-3.5 (Orange Pentagon): Approximately -10%
    * GPT-4o (Green Pentagon): Approximately -17%
    * QWQ-32B (Yellow Diamond): Approximately -8%
    * DeepSeek-V3 (Dark Purple Diamond): Approximately -12%
    * Open LLM (White Circle): Approximately -14%
    * Close LLM (White Pentagon): Approximately -12%
    * Reasoning LLM (White Diamond): Approximately -10%

* **CS-QA:**
    * LLaMA3.1-8B (Light Blue Circle): Approximately -23%
    * LLaMA3.1-70B (Yellow Circle): Approximately -19%
    * Qwen2.5-7B (Light Purple Circle): Approximately -12%
    * Qwen2.5-72B (Red Circle): Approximately -15%
    * Claude3.5 (Dark Blue Pentagon): Approximately -12%
    * GPT-3.5 (Orange Pentagon): Approximately -15%
    * GPT-4o (Green Pentagon): Approximately -15%
    * QWQ-32B (Yellow Diamond): Approximately -20%
    * DeepSeek-V3 (Dark Purple Diamond): Approximately -12%
    * Open LLM (White Circle): Approximately -19%
    * Close LLM (White Pentagon): Approximately -15%
    * Reasoning LLM (White Diamond): Approximately -15%

* **AQUA:**
    * LLaMA3.1-8B (Light Blue Circle): Approximately -22%
    * LLaMA3.1-70B (Yellow Circle): Approximately -27%
    * Qwen2.5-7B (Light Purple Circle): Approximately -6%
    * Qwen2.5-72B (Red Circle): Approximately -17%
    * Claude3.5 (Dark Blue Pentagon): Approximately -18%
    * GPT-3.5 (Orange Pentagon): Approximately -6%
    * GPT-4o (Green Pentagon): Approximately -10%
    * QWQ-32B (Yellow Diamond): Approximately -8%
    * DeepSeek-V3 (Dark Purple Diamond): Approximately -6%
    * Open LLM (White Circle): Approximately -22%
    * Close LLM (White Pentagon): Approximately -27%
    * Reasoning LLM (White Diamond): Approximately -10%

* **GSM8K:**
    * LLaMA3.1-8B (Light Blue Circle): Approximately -23%
    * LLaMA3.1-70B (Yellow Circle): Approximately -28%
    * Qwen2.5-7B (Light Purple Circle): Approximately -14%
    * Qwen2.5-72B (Red Circle): Approximately -17%
    * Claude3.5 (Dark Blue Pentagon): Approximately -18%
    * GPT-3.5 (Orange Pentagon): Approximately -10%
    * GPT-4o (Green Pentagon): Approximately -10%
    * QWQ-32B (Yellow Diamond): Approximately -10%
    * DeepSeek-V3 (Dark Purple Diamond): Approximately -14%
    * Open LLM (White Circle): Approximately -20%
    * Close LLM (White Pentagon): Approximately -28%
    * Reasoning LLM (White Diamond): Approximately -10%

* **MATH:**
    * LLaMA3.1-8B (Light Blue Circle): Approximately -10%
    * LLaMA3.1-70B (Yellow Circle): Approximately -10%
    * Qwen2.5-7B (Light Purple Circle): Approximately -5%
    * Qwen2.5-72B (Red Circle): Approximately -1%
    * Claude3.5 (Dark Blue Pentagon): Approximately -1%
    * GPT-3.5 (Orange Pentagon): Approximately -5%
    * GPT-4o (Green Pentagon): Approximately -10%
    * QWQ-32B (Yellow Diamond): Approximately -8%
    * DeepSeek-V3 (Dark Purple Diamond): Approximately -5%
    * Open LLM (White Circle): Approximately -10%
    * Close LLM (White Pentagon): Approximately -10%
    * Reasoning LLM (White Diamond): Approximately -5%

* **GPQA:**
    * LLaMA3.1-8B (Light Blue Circle): Approximately 0%
    * LLaMA3.1-70B (Yellow Circle): Approximately -5%
    * Qwen2.5-7B (Light Purple Circle): Approximately 10%
    * Qwen2.5-72B (Red Circle): Approximately 10%
    * Claude3.5 (Dark Blue Pentagon): Approximately 11%
    * GPT-3.5 (Orange Pentagon): Approximately 1%
    * GPT-4o (Green Pentagon): Approximately -5%
    * QWQ-32B (Yellow Diamond): Approximately 12%
    * DeepSeek-V3 (Dark Purple Diamond): Approximately 1%
    * Open LLM (White Circle): Approximately 0%
    * Close LLM (White Pentagon): Approximately -5%
    * Reasoning LLM (White Diamond): Approximately 1%

* **HumanEval:**
    * LLaMA3.1-8B (Light Blue Circle): Approximately -10%
    * LLaMA3.1-70B (Yellow Circle): Approximately -10%
    * Qwen2.5-7B (Light Purple Circle): Approximately -10%
    * Qwen2.5-72B (Red Circle): Approximately -15%
    * Claude3.5 (Dark Blue Pentagon): Approximately -12%
    * GPT-3.5 (Orange Pentagon): Approximately -15%
    * GPT-4o (Green Pentagon): Approximately -15%
    * QWQ-32B (Yellow Diamond): Approximately -2%
    * DeepSeek-V3 (Dark Purple Diamond): Approximately -10%
    * Open LLM (White Circle): Approximately -10%
    * Close LLM (White Pentagon): Approximately -10%
    * Reasoning LLM (White Diamond): Approximately -2%

### Key Observations

* All listed readings fall below the baseline (Δ=0) on HotpotQA, CS-QA, AQUA, GSM8K, MATH, and HumanEval; GPQA is the only dataset with readings above it.

* The performance varies significantly across different datasets for all models.

* QWQ-32B, Claude3.5, Qwen2.5-7B, and Qwen2.5-72B tend to perform relatively better on GPQA.

* QWQ-32B and Reasoning LLM perform relatively better on HumanEval.
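The observations above can be checked mechanically against the approximate readings listed under Detailed Analysis. The sketch below is only an illustration: it copies the nine named-model readings per dataset (in legend order, leaving out the three category entries) and counts how many fall below or above the baseline. The variable names are assumptions, and the numbers are visual estimates, not source data from the underlying paper.

```python
# Approximate Δ (%) readings copied from the Detailed Analysis above.
# Order per dataset: LLaMA3.1-8B, LLaMA3.1-70B, Qwen2.5-7B, Qwen2.5-72B,
# Claude3.5, GPT-3.5, GPT-4o, QWQ-32B, DeepSeek-V3.
readings = {
    "HotpotQA":  [-5, -12, -13, -14, -7, -10, -17, -8, -12],
    "CS-QA":     [-23, -19, -12, -15, -12, -15, -15, -20, -12],
    "AQUA":      [-22, -27, -6, -17, -18, -6, -10, -8, -6],
    "GSM8K":     [-23, -28, -14, -17, -18, -10, -10, -10, -14],
    "MATH":      [-10, -10, -5, -1, -1, -5, -10, -8, -5],
    "GPQA":      [0, -5, 10, 10, 11, 1, -5, 12, 1],
    "HumanEval": [-10, -10, -10, -15, -12, -15, -15, -2, -10],
}

# Count readings strictly below and strictly above the baseline (Δ = 0).
below = {name: sum(1 for v in vals if v < 0) for name, vals in readings.items()}
above = {name: sum(1 for v in vals if v > 0) for name, vals in readings.items()}

for name in readings:
    print(f"{name:10s} below: {below[name]}  above: {above[name]}")
```

With these readings, GPQA is the only dataset where any named model sits above the baseline.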

### Interpretation

The scatter plot provides a comparative analysis of the performance of various LLMs on question-answering, mathematical-reasoning, and code-generation datasets. The negative Δ (%) values indicate that most models underperform relative to the baseline on several datasets. The variability in performance across datasets suggests that different models have varying strengths and weaknesses depending on the type of task or knowledge required. The better performance of some models on GPQA and HumanEval indicates that these models might be better suited to graduate-level science questions or code generation, the tasks those two benchmarks target. The plot highlights the importance of evaluating LLMs on a diverse set of benchmarks to understand their capabilities and limitations.

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

## Scatter Plot: Performance Comparison of LLMs Across Datasets

### Overview

The image presents a scatter plot comparing the performance of various Large Language Models (LLMs) across seven different datasets. The y-axis represents the performance difference (Δ) in percentage points, while the x-axis lists the datasets. Each point on the plot represents the performance of a specific LLM on a given dataset, relative to a baseline.
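The plot itself does not say how Δ is computed. As a minimal sketch, assuming Δ is the score under the evaluated condition minus the baseline score, the two common conventions (percentage points versus relative change) look like this. The function names and the example accuracies are hypothetical and are not taken from the figure.

```python
def delta_points(score: float, baseline: float) -> float:
    """Δ as a difference in percentage points (assumed definition)."""
    return score - baseline


def delta_relative(score: float, baseline: float) -> float:
    """Δ as a relative change in percent (alternative assumed definition)."""
    if baseline == 0:
        raise ValueError("relative change is undefined for a zero baseline")
    return 100.0 * (score - baseline) / baseline


# Hypothetical accuracies (in %), not read from the plot.
baseline_acc = 70.0
condition_acc = 62.0

print(delta_points(condition_acc, baseline_acc))              # -8.0
print(round(delta_relative(condition_acc, baseline_acc), 2))  # -11.43
```

The two conventions can differ noticeably for the same pair of scores, so the axis label alone does not settle which one the figure uses.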

### Components/Axes

* **X-axis:** "Dataset" with the following categories: HotpotQA, CS-QA, AQUA, GSM8K, MATH, GPQA, HumanEval.

* **Y-axis:** "Δ (%)" (Delta in percentage), ranging from approximately -30% to 30%.

* **Legend:** Located in the top-right corner, identifying each LLM with a unique color and marker shape. The legend includes:
    * LLaMA3.1-8B (Light Blue Circle)
    * LLaMA3.1-70B (Dark Blue Circle)
    * Qwen2.5-7B (Light Red Circle)
    * Qwen2.5-72B (Dark Red Circle)
    * Claude3.5 (Light Green Diamond)
    * GPT-3.5 (Dark Green Triangle)
    * GPT-4o (Light Purple Diamond)
    * QWQ-32B (Dark Purple Diamond)
    * DeepSeek-V3 (Magenta Diamond)
    * Open LLM (Grey Circle)
    * Close LLM (Grey Hexagon)
    * Reasoning LLM (Grey Diamond)

* **Baseline:** A dashed horizontal line at Δ=0, representing the baseline performance.

### Detailed Analysis

The plot shows the performance variation of each LLM across the datasets. Here's a breakdown of the approximate values, noting the inherent uncertainty in reading values from a visual plot:

* **HotpotQA:**
    * LLaMA3.1-8B: ~-5%
    * LLaMA3.1-70B: ~-10%
    * Qwen2.5-7B: ~-10%
    * Qwen2.5-72B: ~-15%
    * Claude3.5: ~0%
    * GPT-3.5: ~-5%
    * GPT-4o: ~5%
    * QWQ-32B: ~-5%
    * DeepSeek-V3: ~-10%
    * Open LLM: ~-5%
    * Close LLM: ~-10%
    * Reasoning LLM: ~0%

* **CS-QA:**
    * LLaMA3.1-8B: ~-10%
    * LLaMA3.1-70B: ~-15%
    * Qwen2.5-7B: ~-10%
    * Qwen2.5-72B: ~-15%
    * Claude3.5: ~-5%
    * GPT-3.5: ~-10%
    * GPT-4o: ~5%
    * QWQ-32B: ~-5%
    * DeepSeek-V3: ~-10%
    * Open LLM: ~-10%
    * Close LLM: ~-15%
    * Reasoning LLM: ~0%

* **AQUA:**
    * LLaMA3.1-8B: ~-10%
    * LLaMA3.1-70B: ~-10%
    * Qwen2.5-7B: ~-10%
    * Qwen2.5-72B: ~-15%
    * Claude3.5: ~0%
    * GPT-3.5: ~-5%
    * GPT-4o: ~10%
    * QWQ-32B: ~-5%
    * DeepSeek-V3: ~-10%
    * Open LLM: ~-10%
    * Close LLM: ~-15%
    * Reasoning LLM: ~0%

* **GSM8K:**
    * LLaMA3.1-8B: ~-20%
    * LLaMA3.1-70B: ~-20%
    * Qwen2.5-7B: ~-20%
    * Qwen2.5-72B: ~-25%
    * Claude3.5: ~-10%
    * GPT-3.5: ~-15%
    * GPT-4o: ~0%
    * QWQ-32B: ~-10%
    * DeepSeek-V3: ~-15%
    * Open LLM: ~-20%
    * Close LLM: ~-25%
    * Reasoning LLM: ~-5%

* **MATH:**
    * LLaMA3.1-8B: ~-25%
    * LLaMA3.1-70B: ~-25%
    * Qwen2.5-7B: ~-20%
    * Qwen2.5-72B: ~-25%
    * Claude3.5: ~-10%
    * GPT-3.5: ~-20%
    * GPT-4o: ~0%
    * QWQ-32B: ~-10%
    * DeepSeek-V3: ~-15%
    * Open LLM: ~-25%
    * Close LLM: ~-25%
    * Reasoning LLM: ~-5%

* **GPQA:**
    * LLaMA3.1-8B: ~0%
    * LLaMA3.1-70B: ~0%
    * Qwen2.5-7B: ~-5%
    * Qwen2.5-72B: ~-10%
    * Claude3.5: ~10%
    * GPT-3.5: ~0%
    * GPT-4o: ~5%
    * QWQ-32B: ~0%
    * DeepSeek-V3: ~-5%
    * Open LLM: ~0%
    * Close LLM: ~-5%
    * Reasoning LLM: ~5%

* **HumanEval:**
    * LLaMA3.1-8B: ~-10%
    * LLaMA3.1-70B: ~-10%
    * Qwen2.5-7B: ~-10%
    * Qwen2.5-72B: ~-15%
    * Claude3.5: ~0%
    * GPT-3.5: ~-5%
    * GPT-4o: ~5%
    * QWQ-32B: ~-5%
    * DeepSeek-V3: ~-10%
    * Open LLM: ~-10%
    * Close LLM: ~-15%
    * Reasoning LLM: ~0%
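Because these values are visual estimates, it can help to quantify how far two independent readings of the same plot disagree. The sketch below compares the HotpotQA values listed in this description with those listed in the gemini-2.0-flash description earlier in the document. The variable names are illustrative assumptions, and neither set of numbers is confirmed against the original figure data.

```python
# Nine named models, in legend order.
models = [
    "LLaMA3.1-8B", "LLaMA3.1-70B", "Qwen2.5-7B", "Qwen2.5-72B",
    "Claude3.5", "GPT-3.5", "GPT-4o", "QWQ-32B", "DeepSeek-V3",
]

# HotpotQA readings (Δ in %) from the two descriptions.
gemini_reading = [-5, -12, -13, -14, -7, -10, -17, -8, -12]
gemma_reading = [-5, -10, -10, -15, 0, -5, 5, -5, -10]

# Absolute gap per model between the two readings.
gaps = {
    model: abs(a - b)
    for model, a, b in zip(models, gemini_reading, gemma_reading)
}

worst_model = max(gaps, key=gaps.get)
mean_gap = sum(gaps.values()) / len(gaps)

print(f"largest gap: {worst_model} ({gaps[worst_model]} points)")
print(f"mean gap: {mean_gap:.1f} points")
```

A gap of this size on a single marker means the two readings disagree even about the sign of Δ for that model, so conclusions that rest on one reading should be treated with caution.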

### Key Observations

* GPT-4o consistently outperforms other models across most datasets, often showing positive Δ values.

* LLaMA3.1-8B and LLaMA3.1-70B show similar performance across all datasets.

* Qwen2.5-7B and Qwen2.5-72B also exhibit similar performance, generally lower than GPT-4o.

* The GSM8K and MATH datasets show the most negative Δ values for most LLMs.

* The GPQA dataset shows the most variability in performance, with some models achieving positive Δ values.

### Interpretation

The data suggests that GPT-4o is the most robust and versatile LLM among those tested, demonstrating superior performance across a wide range of tasks and datasets. The consistent underperformance on GSM8K and MATH indicates these datasets pose a significant challenge for current LLMs, likely due to their complexity and reliance on mathematical reasoning. The relatively similar performance of the two LLaMA3.1 models and the two Qwen2.5 models suggests that increasing model size within these architectures does not necessarily translate to substantial performance gains. The grouping of "Open LLM", "Close LLM", and "Reasoning LLM" suggests a categorization based on model architecture or training methodology, and their performance is generally lower than the leading models like GPT-4o and Claude3.5. The scatter plot effectively visualizes the trade-offs between different LLMs and highlights the areas where further research and development are needed. The baseline (Δ=0) provides a crucial reference point for evaluating the relative performance of each model.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

## Scatter Plot: Performance Change (Δ%) of Various LLMs Across Multiple Datasets

### Overview

The image is a scatter plot comparing the performance change (Δ%) of multiple Large Language Models (LLMs) across seven different benchmark datasets. The plot uses distinct symbols and colors to represent each model, with a horizontal baseline at Δ=0 indicating no change in performance. The data points are grouped by dataset along the x-axis.

### Components/Axes

* **X-axis (Categorical):** Labeled "Dataset". The seven datasets listed from left to right are:
    1. HotpotQA
    2. CS-QA
    3. AQUA
    4. GSM8K
    5. MATH
    6. GPQA
    7. HumanEval

* **Y-axis (Numerical):** Labeled "Δ(%)". The scale ranges from -30 to +30, with major tick marks at intervals of 10 (-30, -20, -10, 0, 10, 20, 30).

* **Legend (Top-Right):** Contains 12 entries, each pairing a model name with a unique symbol/color:
    * LLaMA3.1-8B: Light blue circle
    * LLaMA3.1-70B: Yellow circle
    * Qwen2.5-7B: Light purple circle
    * Qwen2.5-72B: Red circle
    * Claude3.5: Blue pentagon
    * GPT-3.5: Orange pentagon
    * GPT-4o: Green pentagon
    * QWQ-32B: Purple diamond
    * DeepSeek-V3: Pink diamond
    * Open LLM: Circle symbol (category)
    * Close LLM: Pentagon symbol (category)
    * Reasoning LLM: Diamond symbol (category)

* **Baseline:** A horizontal, dashed, light green line at y=0, labeled "Baseline (Δ=0)".

### Detailed Analysis

Performance change (Δ%) is plotted for each model on each dataset. Values are approximate based on visual positioning.

**1. HotpotQA:**

* All models show negative Δ% (performance decrease).

* Values range from approximately -5% (LLaMA3.1-8B) to -18% (GPT-4o).

* Cluster of points between -10% and -15% includes LLaMA3.1-70B, Qwen2.5-7B, Qwen2.5-72B, GPT-3.5, and Claude3.5.

**2. CS-QA:**

* All models show negative Δ%.

* Values range from approximately -12% (Claude3.5) to -25% (LLaMA3.1-8B).

* Most models cluster between -15% and -20%.

**3. AQUA:**

* All models show negative Δ%.

* Significant outlier: LLaMA3.1-70B shows the largest decrease at approximately -31%.

* Other models range from approximately -1% (Qwen2.5-7B) to -18% (Qwen2.5-72B).

**4. GSM8K:**

* All models show negative Δ%.

* Values range from approximately -6% (GPT-3.5) to -29% (Qwen2.5-7B).

* DeepSeek-V3 shows a decrease of approximately -25%.

**5. MATH:**

* All models show negative Δ%.

* Values range from approximately -2% (Claude3.5) to -21% (DeepSeek-V3).

* Most models cluster between -10% and -15%.

**6. GPQA:**

* Mixed performance. Some models show positive Δ%, others negative.

* **Positive Δ%:** Claude3.5 (~+10%), DeepSeek-V3 (~+11%), Qwen2.5-72B (~+1%).

* **Near Baseline:** LLaMA3.1-8B (~-3%), GPT-3.5 (~0%).

* **Negative Δ%:** LLaMA3.1-70B (~-5%), GPT-4o (~-6%), QWQ-32B (~-2%).

**7. HumanEval:**

* Data is sparse. Only two data points are clearly visible.

* Qwen2.5-72B: Approximately -2%.

* DeepSeek-V3: Approximately -2%.

* Other models are not plotted for this dataset.

### Key Observations

1. **Predominant Negative Trend:** Across the first five datasets (HotpotQA through MATH), every single model shows a negative performance change (Δ < 0), indicating a consistent decrease in performance on these benchmarks.

2. **GPQA as an Exception:** GPQA is the only dataset where multiple models (Claude3.5, DeepSeek-V3, Qwen2.5-72B) show a positive performance change.

3. **Model Performance Variability:**

* **LLaMA3.1-70B** shows extreme variability, with the largest decrease on AQUA (~-31%) but a relatively smaller decrease on GPQA (~-5%).

* **Claude3.5** (a pentagon, i.e. a "Close LLM" per the legend) and **DeepSeek-V3** (a diamond, i.e. a "Reasoning LLM") are the top performers on GPQA, showing the only significant positive gains.

* **Qwen2.5-7B** shows one of the largest decreases on GSM8K (~-29%).

4. **Symbol/Category Correlation:** The legend categorizes models by symbol shape: circles for "Open LLM", pentagons for "Close LLM", and diamonds for "Reasoning LLM". This categorization is visually consistent throughout the plot.
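The shape-to-category convention described above can be written down as a small lookup. This is a sketch that encodes only what the legend, as transcribed in this description, states; the dictionary and function names are illustrative assumptions.

```python
# Marker shape -> category, as given by the legend's three category entries.
MARKER_TO_CATEGORY = {
    "circle": "Open LLM",
    "pentagon": "Close LLM",
    "diamond": "Reasoning LLM",
}

# Marker shape of each named model, as transcribed from the legend.
MODEL_MARKER = {
    "LLaMA3.1-8B": "circle",
    "LLaMA3.1-70B": "circle",
    "Qwen2.5-7B": "circle",
    "Qwen2.5-72B": "circle",
    "Claude3.5": "pentagon",
    "GPT-3.5": "pentagon",
    "GPT-4o": "pentagon",
    "QWQ-32B": "diamond",
    "DeepSeek-V3": "diamond",
}


def category_of(model: str) -> str:
    """Return the legend category implied by a model's marker shape."""
    return MARKER_TO_CATEGORY[MODEL_MARKER[model]]


# Group the named models by category, preserving legend order.
groups: dict[str, list[str]] = {}
for model in MODEL_MARKER:
    groups.setdefault(category_of(model), []).append(model)

for category, members in groups.items():
    print(f"{category}: {', '.join(members)}")
```

Under this mapping Claude3.5 falls in the "Close LLM" group, while QWQ-32B and DeepSeek-V3 are the only "Reasoning LLM" entries.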

### Interpretation

This chart likely illustrates the performance delta of various LLMs when subjected to a specific intervention, condition, or evaluation method compared to a baseline. The consistent negative Δ% across most datasets suggests the intervention generally hinders performance on tasks like question answering (HotpotQA, CS-QA, AQUA) and mathematical reasoning (GSM8K, MATH).

The notable exception is the GPQA dataset, where Claude3.5 (a "Close LLM"), DeepSeek-V3 (a "Reasoning LLM"), and Qwen2.5-72B (an "Open LLM") show improved performance. This suggests the intervention or evaluation condition may specifically benefit certain models on this particular type of task (GPQA is a graduate-level science QA benchmark), rather than a single model category.

The extreme negative outlier for LLaMA3.1-70B on AQUA indicates a severe and specific failure mode for that model under the tested condition. The sparse data for HumanEval limits conclusions but shows minimal negative impact for the two models plotted.

In summary, the data demonstrates that the evaluated condition has a broadly negative impact on LLM performance across diverse benchmarks, with a specific, positive exception for a subset of models on the GPQA dataset. This highlights the importance of evaluating model robustness across multiple domains, as performance impacts can be highly dataset- and model-specific.

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

## Scatter Plot: Model Performance Comparison Across Datasets

### Overview

The image is a scatter plot comparing the performance change (Δ%) of various large language models (LLMs) across multiple question-answering, reasoning, and code-generation datasets. The plot includes seven datasets on the x-axis and percentage change on the y-axis, with a baseline line at Δ=0%. Different models are represented by distinct colors and markers.

### Components/Axes

- **X-axis (Datasets)**:
    - HotpotQA, CS-QA, AQUA, GSM8K, MATH, GPQA, HumanEval
    - Dashed vertical lines separate datasets

- **Y-axis (Δ%)**:
    - Range: -30% to 30%
    - Baseline line at 0% (green dashed)

- **Legend**:
    - Position: Right side
    - Models:
        - LLaMA3.1-8B (teal circle)
        - LLaMA3.1-70B (yellow circle)
        - Qwen2.5-7B (purple circle)
        - Qwen2.5-72B (red circle)
        - Claude3.5 (blue pentagon)
        - GPT-3.5 (orange pentagon)
        - GPT-4o (green pentagon)
        - QWQ-32B (pink diamond)
        - DeepSeek-V3 (purple diamond)
        - Open LLM (white circle)
        - Close LLM (blue pentagon)
        - Reasoning LLM (purple diamond)
    - Baseline (Δ=0): Green dashed line

### Detailed Analysis

1. **HotpotQA**:
    - LLaMA3.1-8B: ~-15%
    - DeepSeek-V3: ~-25%
    - GPT-4o: ~-18%
    - QWQ-32B: ~-12%

2. **CS-QA**:
    - LLaMA3.1-70B: ~-10%
    - Qwen2.5-72B: ~-15%
    - GPT-3.5: ~-5%
    - Reasoning LLM: ~-20%

3. **AQUA**:
    - LLaMA3.1-8B: ~-10%
    - Qwen2.5-7B: ~-5%
    - GPT-4o: ~-12%
    - DeepSeek-V3: ~-18%

4. **GSM8K**:
    - LLaMA3.1-70B: ~-20%
    - Qwen2.5-72B: ~-10%
    - GPT-3.5: ~-15%
    - Reasoning LLM: ~-25%

5. **MATH**:
    - LLaMA3.1-8B: ~-5%
    - Qwen2.5-7B: ~-10%
    - GPT-4o: ~-8%
    - DeepSeek-V3: ~-12%

6. **GPQA**:
    - LLaMA3.1-70B: ~5%
    - Qwen2.5-72B: ~3%
    - GPT-4o: ~-2%
    - DeepSeek-V3: ~10%

7. **HumanEval**:
    - LLaMA3.1-8B: ~-2%
    - Qwen2.5-7B: ~-5%
    - GPT-4o: ~-3%
    - DeepSeek-V3: ~8%

### Key Observations

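- **DeepSeek-V3** shows the largest negative deviation among the listed readings (about -25% on HotpotQA) and is also the lowest listed value on AQUA (-18%) and MATH (-12%); the -25% reading on GSM8K is listed for the "Reasoning LLM" entry.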

- **LLaMA3.1-70B** and **GPT-4o** generally cluster near the baseline (Δ≈0%) across most datasets.

- **QWQ-32B** and **DeepSeek-V3** exhibit significant variability, with some datasets showing extreme negative performance (e.g., -20% in GSM8K for QWQ-32B).

- **HumanEval** shows the smallest deviations overall, with most models within ±10% of baseline.
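The claim about HumanEval can be checked against the values listed under Detailed Analysis in this description. The sketch below computes the mean absolute deviation from the baseline per dataset, using only the four readings listed for each. It is illustrative, the names are assumptions, and four points per dataset are too few to support strong conclusions.

```python
# Δ (%) readings listed per dataset in the Detailed Analysis above
# (four readings each, in the order they are listed).
listed = {
    "HotpotQA":  [-15, -25, -18, -12],
    "CS-QA":     [-10, -15, -5, -20],
    "AQUA":      [-10, -5, -12, -18],
    "GSM8K":     [-20, -10, -15, -25],
    "MATH":      [-5, -10, -8, -12],
    "GPQA":      [5, 3, -2, 10],
    "HumanEval": [-2, -5, -3, 8],
}

# Mean absolute deviation from the baseline (Δ = 0) for each dataset.
mean_abs = {
    name: sum(abs(v) for v in vals) / len(vals)
    for name, vals in listed.items()
}

smallest = min(mean_abs, key=mean_abs.get)
within_10 = all(abs(v) <= 10 for v in listed["HumanEval"])

for name, value in sorted(mean_abs.items(), key=lambda item: item[1]):
    print(f"{name:10s} {value:.2f}")
print(f"smallest mean |Δ|: {smallest}; HumanEval within ±10: {within_10}")
```

On these listed readings HumanEval has the smallest mean deviation, with GPQA close behind.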

### Interpretation

The data suggests significant variability in LLM performance across different question types and reasoning tasks. Models like **DeepSeek-V3** and **QWQ-32B** underperform in complex reasoning tasks (GSM8K, MATH), while **LLaMA3.1-70B** and **GPT-4o** demonstrate more consistent performance. The baseline line (Δ=0%) serves as a critical reference point, revealing that many models fall below the baseline in specialized domains. Notably, **HumanEval**, a code-generation benchmark, shows the least deviation, implying that the models stay closer to the baseline on code generation than on the other datasets. The plot highlights the need for model specialization in specific reasoning domains, as no single model dominates across all tasks.
