TECHNICAL ASSET FINGERPRINT

68286e783cc762694c314991



EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

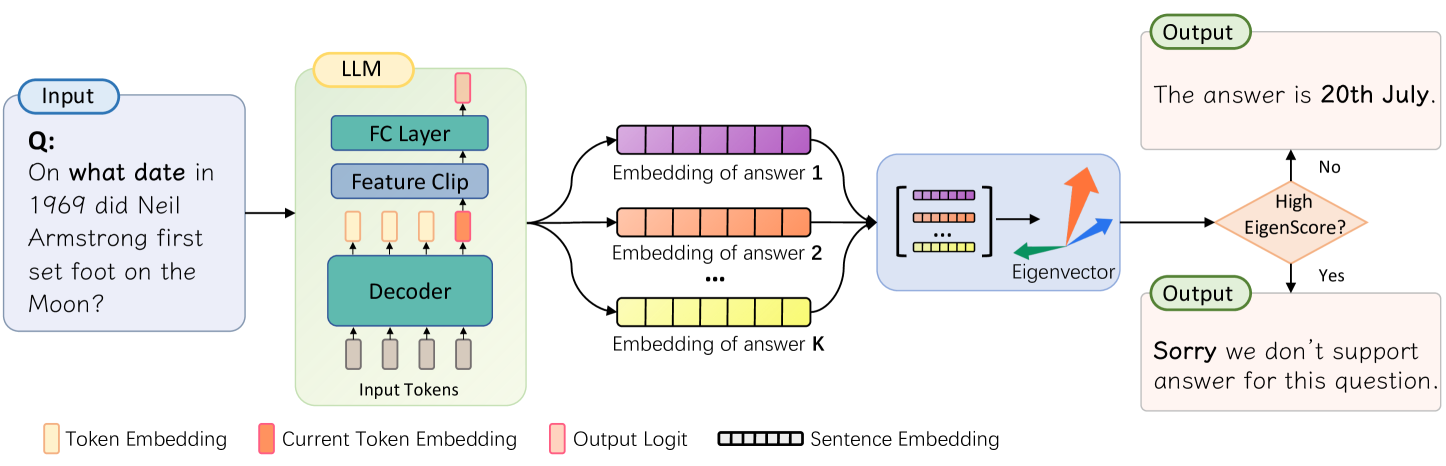

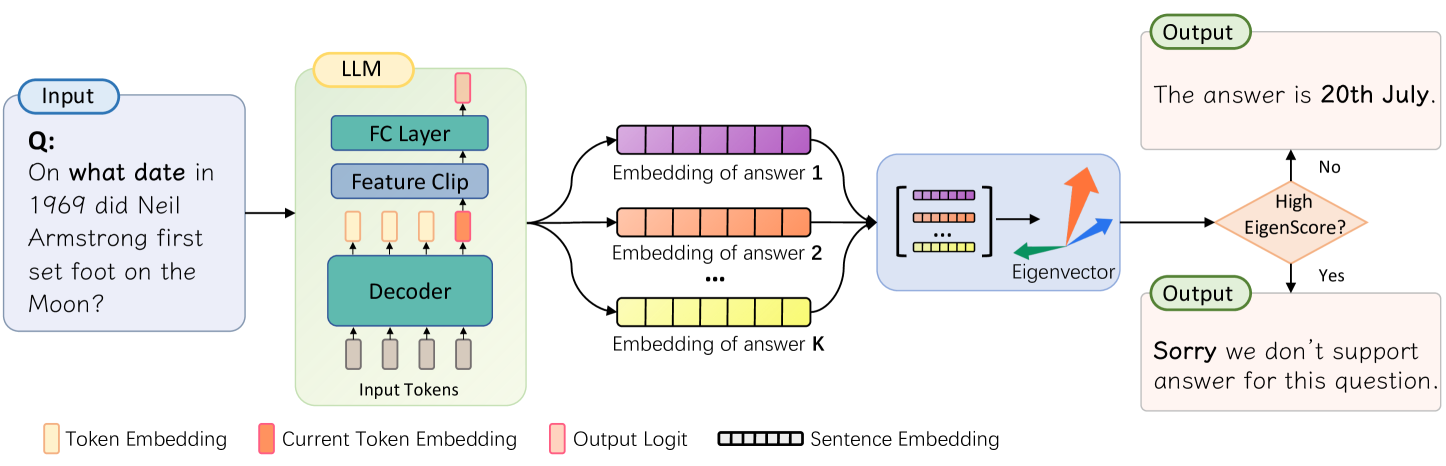

## Diagram: LLM Question Answering Process

### Overview

The image is a diagram illustrating how a Large Language Model (LLM) answers a question. It shows the flow of information from the input question, through the LLM's internal components, to the final output. A decision step based on an "EigenScore" determines whether the LLM provides an answer or a refusal.

### Components/Axes

* **Input:** A rounded rectangle labeled "Input" containing the question: "Q: On what date in 1969 did Neil Armstrong first set foot on the Moon?".

* **LLM:** A rounded rectangle labeled "LLM" containing the following components:

* FC Layer (Fully Connected Layer)

* Feature Clip

* Decoder

* Input Tokens (represented by gray rectangles)

* **Embedding of answer 1:** A series of connected purple rectangles.

* **Embedding of answer 2:** A series of connected orange rectangles.

* **Embedding of answer K:** A series of connected yellow rectangles.

* **Eigenvector:** A blue rounded rectangle containing a matrix of embeddings and three vectors (orange, blue, and green) labeled "Eigenvector".

* **High EigenScore?:** A diamond shape colored orange, used as a decision point.

* **Output (Top):** A rounded rectangle labeled "Output" containing the answer: "The answer is 20th July.".

* **Output (Bottom):** A rounded rectangle labeled "Output" containing the statement: "Sorry we don't support answer for this question.".

* **Legend (Bottom):**

* Token Embedding (light yellow rectangle)

* Current Token Embedding (light orange rectangle)

* Output Logit (light pink rectangle)

* Sentence Embedding (black outlined rectangle)

### Detailed Analysis

1. **Input:** The input question is "On what date in 1969 did Neil Armstrong first set foot on the Moon?".

2. **LLM Processing:**

* The input tokens are fed into the Decoder.

* The Decoder's output is processed by the Feature Clip and FC Layer.

3. **Answer Embeddings:** The LLM generates multiple answer embeddings (1, 2, ..., K), represented by sequences of colored rectangles (purple, orange, yellow).

4. **Eigenvector Calculation:** The answer embeddings are combined and processed to calculate an Eigenvector.

5. **Decision Point:** The EigenScore is evaluated.

* If the EigenScore is high ("Yes"), the LLM outputs "Sorry we don't support answer for this question.".

* If the EigenScore is not high ("No"), the LLM outputs "The answer is 20th July.".
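The decision point described above can be sketched as a small gating function. This is only an illustration: the function name `gate_answer` and the numeric `threshold` are hypothetical, since the diagram labels the branch "High EigenScore?" without giving a cutoff.

```python
# Refusal text copied verbatim from the diagram's lower Output box.
REFUSAL = "Sorry we don't support answer for this question."


def gate_answer(eigenscore: float, threshold: float, answer: str) -> str:
    """Return the answer when the score is not high, otherwise the refusal.

    `threshold` is a hypothetical cutoff; the diagram does not state one.
    """
    # "High EigenScore?" -> Yes: refuse. No: return the candidate answer.
    if eigenscore > threshold:
        return REFUSAL
    return answer


if __name__ == "__main__":
    print(gate_answer(-2.0, threshold=0.0, answer="The answer is 20th July."))
    print(gate_answer(1.5, threshold=0.0, answer="The answer is 20th July."))
```

In practice the cutoff would have to be tuned on held-out questions; nothing in the diagram indicates how that is done.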

### Key Observations

* The diagram illustrates a question-answering system using an LLM.

* The LLM processes the input question and generates multiple potential answers.

* An Eigenvector is calculated based on the answer embeddings.

* The EigenScore is used to determine if the LLM can provide a supported answer.

* If the EigenScore is not high, the LLM provides an answer. If it is high, the LLM indicates that it cannot support the question.

### Interpretation

The diagram depicts a system in which an LLM attempts to answer a question and an EigenScore acts as a confidence gate. A low EigenScore is treated as confidence, so the LLM returns its answer. A high EigenScore is treated as a lack of confidence, so the system falls back to "Sorry we don't support answer for this question" rather than risk an incorrect or unsupported response. The diagram highlights the need for confidence metrics and fallback mechanisms in question-answering systems.


EXPERT: gemini-3-flash-free VERSION 1

RUNTIME: google-free/gemini-3-flash-preview

INTEL_VERIFIED

## Diagram Type: LLM Uncertainty/Verification Workflow using EigenScore

### Overview

This technical diagram illustrates a process for evaluating the reliability of a Large Language Model (LLM) response. It depicts a pipeline where an input question is processed by an LLM to generate multiple candidate answer embeddings. These embeddings are then analyzed using eigenvector decomposition to calculate an "EigenScore." Based on this score, the system decides whether to provide a direct answer or a refusal message, likely to mitigate hallucinations or low-confidence outputs.

### Components/Axes

#### 1. Legend (Bottom)

Located at the bottom of the image, the legend defines the visual symbols used throughout the diagram:

* **Token Embedding:** Represented by a light orange vertical rectangle.

* **Current Token Embedding:** Represented by a solid orange vertical rectangle.

* **Output Logit:** Represented by a pink vertical rectangle with a red border.

* **Sentence Embedding:** Represented by a horizontal bar divided into segments (colored purple, orange, or yellow).

#### 2. Input Section (Far Left)

* **Label:** "Input" (blue pill-shaped header).

* **Content:** A light blue box containing the text: "Q: On what date in 1969 did Neil Armstrong first set foot on the Moon?"

#### 3. LLM Architecture (Center-Left)

* **Label:** "LLM" (yellow pill-shaped header).

* **Container:** A light green rounded rectangle.

* **Internal Flow (Bottom to Top):**

* **Input Tokens:** A sequence of four vertical rectangles (three light orange "Token Embeddings" followed by one solid orange "Current Token Embedding").

* **Decoder:** A teal horizontal block receiving the input tokens.

* **Intermediate State:** Another sequence of four rectangles (three light orange, one solid orange) above the Decoder.

* **Feature Clip:** A blue horizontal block.

* **FC Layer (Fully Connected):** A teal horizontal block.

* **Output Logit:** A single pink rectangle at the top of the stack.

#### 4. Embedding Generation (Center)

* Three distinct horizontal segmented bars representing different answer candidates:

* **Top (Purple):** "Embedding of answer 1"

* **Middle (Orange):** "Embedding of answer 2"

* **Bottom (Yellow):** "Embedding of answer K" (where 'K' implies multiple samples).

* An ellipsis ("...") is placed between the second and K-th embedding to indicate a variable number of samples.

#### 5. Analysis Block (Center-Right)

* **Container:** A light blue rounded rectangle.

* **Content:**

* A matrix representation stacking the purple, orange, and yellow sentence embeddings.

* An arrow pointing to a 3D coordinate system with three colored arrows (green, blue, and orange) labeled "**Eigenvector**".

#### 6. Decision Logic and Output (Far Right)

* **Decision Node:** An orange diamond labeled "**High EigenScore?**".

* **Path "No" (Upward):** Leads to an "Output" box (light orange) containing: "The answer is **20th July**."

* **Path "Yes" (Downward):** Leads to an "Output" box (light orange) containing: "**Sorry** we don't support answer for this question."

---

### Content Details

#### Process Flow

1. **Input:** The system receives a factual query about Neil Armstrong.

2. **Processing:** The LLM processes the input tokens through its internal layers (Decoder, Feature Clip, FC Layer).

3. **Sampling:** Instead of a single output, the system generates $K$ different sentence embeddings for potential answers.

4. **Mathematical Analysis:** These $K$ embeddings are aggregated into a matrix. Eigenvector analysis is performed on this matrix to determine the variance or consistency between the answers.

5. **Scoring:** An "EigenScore" is derived from this analysis.

6. **Branching:**

* If the EigenScore is **not high** (indicating high consensus/low variance among samples), the system outputs the factual answer.

* If the EigenScore is **high** (indicating high variance/uncertainty among samples), the system triggers a safety refusal.
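The process flow above can be made concrete with a small dispersion score over the $K$ embeddings. The diagram gives no formula, so the sketch below assumes one plausible form: the average log-determinant of the Gram matrix of mean-centered embeddings, with a small regularizer `alpha` added to the diagonal. The names `eigenscore`, `gram_of_centered`, `log_det_spd` and the default `alpha` are all hypothetical.

```python
import math
from typing import List

Vector = List[float]
Matrix = List[List[float]]


def gram_of_centered(embeddings: List[Vector]) -> Matrix:
    """K x K Gram matrix of the embeddings after subtracting their mean."""
    k, d = len(embeddings), len(embeddings[0])
    mean = [sum(e[j] for e in embeddings) / k for j in range(d)]
    centered = [[e[j] - mean[j] for j in range(d)] for e in embeddings]
    return [[sum(a * b for a, b in zip(u, v)) for v in centered]
            for u in centered]


def log_det_spd(m: Matrix) -> float:
    """Log-determinant of a symmetric positive-definite matrix via Cholesky."""
    n = len(m)
    low = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = m[i][j] - sum(low[i][t] * low[j][t] for t in range(j))
            low[i][j] = math.sqrt(s) if i == j else s / low[j][j]
    # det(M) is the squared product of the Cholesky diagonal.
    return 2.0 * sum(math.log(low[i][i]) for i in range(n))


def eigenscore(embeddings: List[Vector], alpha: float = 1e-3) -> float:
    """Assumed score: (1/K) * logdet(G + alpha * I). Higher means more spread."""
    k = len(embeddings)
    gram = gram_of_centered(embeddings)
    for i in range(k):
        gram[i][i] += alpha  # regularizer keeps the matrix positive definite
    return log_det_spd(gram) / k


if __name__ == "__main__":
    consistent = [[1.0, 0.0, 0.0], [1.0, 0.01, 0.0], [0.99, 0.0, 0.01]]
    scattered = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
    print("consistent:", eigenscore(consistent))
    print("scattered: ", eigenscore(scattered))
```

Under this assumed definition, tightly clustered embeddings score lower than scattered ones, which matches the branching in step 6.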

---

### Key Observations

* **Multi-Sample Verification:** The diagram emphasizes that the system doesn't rely on a single pass but generates multiple "embeddings of answer 1...K" to check for consistency.

* **Feature Clipping:** The inclusion of a "Feature Clip" layer within the LLM block suggests a specific architectural modification to regularize or bound the features before the final classification/logit layer.

* **Inverse Logic:** Usually, a "high" score is good, but here a "High EigenScore" leads to a refusal. This suggests the EigenScore measures dispersion or entropy—the higher the score, the more "spread out" and inconsistent the candidate answers are.
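As a rough illustration of what a "Feature Clip" step might do, the sketch below clamps each activation to a fixed range before it reaches the FC layer. This is an assumption about the block's behavior: the diagram only names the layer, and the bounds `low` and `high` as well as the function name `clip_features` are hypothetical.

```python
from typing import List


def clip_features(features: List[float], low: float, high: float) -> List[float]:
    """Clamp every activation into [low, high].

    The bounds are hypothetical; the diagram does not say how they are chosen.
    """
    if low > high:
        raise ValueError("low must not exceed high")
    return [min(max(x, low), high) for x in features]


if __name__ == "__main__":
    hidden = [-5.0, 0.2, 9.0]
    print(clip_features(hidden, low=-1.0, high=1.0))
```

Bounding extreme activations in this way is one common route to the regularizing effect the observation describes.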

---

### Interpretation

This diagram represents a **Self-Check or Uncertainty Estimation mechanism** for LLMs.

The use of "Eigenvectors" suggests that the system is performing a Principal Component Analysis (PCA) or a similar spectral analysis on the latent space of multiple generated answers.

* If the generated answers are semantically similar, their embeddings will cluster together, resulting in a dominant primary eigenvector and a **low EigenScore** (low dispersion). This gives the system "confidence" to provide the answer "20th July."

* If the generated answers are wildly different (hallucinations or conflicting facts), the embeddings will be scattered. This results in a **high EigenScore**, signaling that the model is "confused" or "uncertain," prompting the system to provide a canned refusal message to avoid spreading misinformation.
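The two cases above can be stated in terms of eigenvalues. The following is a sketch under an assumed definition, since the diagram gives no formula: let $z_1, \dots, z_K$ be the sentence embeddings, $\bar{z}$ their mean, and $\alpha > 0$ a small regularizer (both the form of the score $S$ and $\alpha$ are assumptions).

$$
G_{ij} = (z_i - \bar{z})^{\top} (z_j - \bar{z}), \qquad
S = \frac{1}{K} \log\det\left(G + \alpha I_K\right) = \frac{1}{K} \sum_{i=1}^{K} \log(\lambda_i + \alpha),
$$

where $\lambda_1 \ge \dots \ge \lambda_K \ge 0$ are the eigenvalues of $G$. When the answers cluster, every $\lambda_i$ is close to zero and $S$ approaches its minimum $\log \alpha$. When the answers scatter, several $\lambda_i$ are large and $S$ rises, which corresponds to the high-score refusal branch.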

This is a sophisticated approach to the "hallucination" problem in AI, moving beyond simple probability scores to a more robust geometric analysis of the model's internal representations across multiple samples.


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED


## Diagram: LLM Answer Selection Process

### Overview

This diagram illustrates the process by which a Large Language Model (LLM) decides whether to answer a given question. The process involves generating multiple answer embeddings, evaluating them using an eigenvector, and outputting an answer, or a rejection message if the resulting EigenScore is high.

### Components/Axes

The diagram consists of the following components:

* **Input:** A question posed to the LLM. The question is: "On what date in 1969 did Neil Armstrong first set foot on the Moon?".

* **LLM:** The core of the system, composed of:

* **Decoder:** Processes input tokens.

* **Feature Clip:** Transforms the decoder output.

* **FC Layer:** Further processes the features.

* **Answer Embeddings:** Multiple embeddings generated from the LLM, labeled "Embedding of answer 1", "Embedding of answer 2", and "Embedding of answer K".

* **Eigenvector:** A vector used to evaluate the quality of the answer embeddings.

* **Output:** The final answer or a rejection message. Two possible outputs are shown: "The answer is 20th July." and "Sorry we don't support answer for this question."

* **Legend:** Provides color-coding for different data types:

* Yellow: Token Embedding

* Light Green: Current Token Embedding

* Red: Output Logit

* Black & White Striped: Sentence Embedding

### Detailed Analysis

The diagram shows a flow of information from the input question through the LLM to the output answer.

1. **Input Processing:** The question is fed into the LLM.

2. **LLM Processing:** The LLM's decoder processes the input tokens. The output of the decoder is passed through a Feature Clip and then an FC Layer.

3. **Answer Generation:** The LLM generates K answer embeddings. Each embedding is represented as a series of blocks, which the legend depicts as a black-outlined "Sentence Embedding" bar.

4. **Eigenvector Evaluation:** The answer embeddings are compared to an eigenvector. A curved arrow indicates the direction of comparison.

5. **Decision Point:** A decision is made based on whether the "EigenScore" is high.

* **High EigenScore (Yes):** The LLM outputs a rejection message: "Sorry we don't support answer for this question."

* **Not high (No):** The LLM outputs the answer: "The answer is 20th July."

### Key Observations

* The LLM generates multiple potential answers (up to K) before selecting the best one.

* The eigenvector serves as a quality filter, ensuring that only high-confidence answers are outputted.

* The system has a mechanism for handling questions it cannot answer.

* The diagram does not provide specific numerical values for the EigenScore threshold.

### Interpretation

This diagram illustrates a sophisticated answer selection process within an LLM. The use of multiple answer embeddings and an eigenvector suggests a probabilistic approach to answer generation and evaluation. The eigenvector likely represents a desired characteristic of a good answer (e.g., relevance, coherence, factual accuracy). The system is designed to avoid providing incorrect or unsupported answers by rejecting low-confidence responses. The diagram highlights the importance of not only generating potential answers but also rigorously evaluating their quality before presenting them to the user. The 'K' number of embeddings suggests a search for the best answer within a set of possibilities, rather than a deterministic output. The diagram is a conceptual illustration and does not provide details on the specific algorithms or parameters used in the LLM.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## System Architecture Diagram: LLM Answer Filtering via EigenScore

### Overview

The image is a technical system architecture diagram illustrating a process for filtering answers generated by a Large Language Model (LLM). The system takes a question as input, processes it through an LLM to generate multiple candidate answer embeddings, computes an Eigenvector from these embeddings, and uses a derived "EigenScore" to decide whether to output a final answer or a rejection message. The flow is depicted from left to right.

### Components/Axes

The diagram is composed of several interconnected blocks and decision points, with a legend at the bottom explaining the color-coding of specific elements.

**1. Input Block (Leftmost, light blue rounded rectangle):**

* **Label:** `Input`

* **Content:** A sample question: `Q: On what date in 1969 did Neil Armstrong first set foot on the Moon?`

**2. LLM Processing Block (Center-left, light green rounded rectangle):**

* **Label:** `LLM` (at the top)

* **Internal Components (from bottom to top):**

* `Input Tokens` (represented by four small grey rectangles).

* `Decoder` (large teal rectangle).

* `Feature Clip` (blue rectangle).

* `FC Layer` (teal rectangle).

* `Output Logit` (a single pink rectangle at the top).

* **Flow:** Arrows indicate data flow from `Input Tokens` up through the `Decoder`, `Feature Clip`, and `FC Layer` to the `Output Logit`.

**3. Embedding Generation (Center, branching from LLM):**

* The LLM output branches into multiple parallel paths, each generating an "Embedding of answer".

* **Labels:** `Embedding of answer 1`, `Embedding of answer 2`, ..., `Embedding of answer K`.

* **Visual Representation:** Each embedding is shown as a horizontal bar composed of multiple colored segments (purple, orange, yellow, etc.), representing a `Sentence Embedding` as per the legend.

**4. Eigenvector Computation Block (Center-right, light blue rounded rectangle):**

* **Input:** The collection of K answer embeddings.

* **Internal Representation:** A matrix symbol `[...]` containing the colored embedding bars.

* **Output:** An `Eigenvector`, visualized as three colored arrows (orange, blue, green) radiating from a central point.

**5. Decision Diamond (Right, orange diamond):**

* **Label:** `High EigenScore?`

* **Function:** This is a decision node that evaluates the EigenScore derived from the computed Eigenvector.

**6. Output Blocks (Rightmost):**

* **Top Output (Green rounded rectangle, "No" path):**

* **Label:** `Output`

* **Content:** `The answer is 20th July.`

* **Bottom Output (Green rounded rectangle, "Yes" path):**

* **Label:** `Output`

* **Content:** `Sorry we don't support answer for this question.`

**7. Legend (Bottom of the image):**

* **Token Embedding:** Light yellow rectangle.

* **Current Token Embedding:** Orange rectangle.

* **Output Logit:** Pink rectangle.

* **Sentence Embedding:** A horizontal bar composed of multiple small black-outlined rectangles.

### Detailed Analysis

The diagram details a specific technical workflow:

1. **Input Processing:** A natural language question is fed into an LLM.

2. **Candidate Generation:** The LLM's decoder architecture processes the input tokens to generate multiple potential answer candidates (from 1 to K). Each candidate is represented as a high-dimensional vector (a `Sentence Embedding`).

3. **Dimensionality Reduction & Analysis:** These K embeddings are analyzed together. The system computes an `Eigenvector` from this set, likely through a method like Principal Component Analysis (PCA) or a similar spectral technique. The Eigenvector captures the principal directions of variance among the candidate answers.

4. **Scoring & Decision:** A scalar "EigenScore" is derived from this Eigenvector. The diagram implies this score measures the consistency, confidence, or semantic coherence of the candidate answers.

* If the EigenScore is **NOT High** (the "No" path), the system proceeds to generate and output a specific answer (e.g., "20th July").

* If the EigenScore **IS High** (the "Yes" path), the system interprets this as an indicator of an unsupported or problematic question and outputs a rejection message instead.
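To illustrate the "principal directions of variance" mentioned in step 3, here is a minimal sketch that finds the dominant direction of a set of embeddings by power iteration on their covariance matrix. It stands in for PCA, which the text names only as a likely method; the function names and the iteration count are hypothetical.

```python
import math
from typing import List, Tuple

Matrix = List[List[float]]


def covariance(embeddings: Matrix) -> Matrix:
    """d x d covariance of K embeddings (rows), centered on their mean."""
    k, d = len(embeddings), len(embeddings[0])
    mean = [sum(e[j] for e in embeddings) / k for j in range(d)]
    centered = [[e[j] - mean[j] for j in range(d)] for e in embeddings]
    return [[sum(c[a] * c[b] for c in centered) / k for b in range(d)]
            for a in range(d)]


def mat_vec(m: Matrix, v: List[float]) -> List[float]:
    """Matrix-vector product."""
    return [sum(row[j] * v[j] for j in range(len(v))) for row in m]


def principal_direction(cov: Matrix, iterations: int = 200) -> Tuple[float, List[float]]:
    """Largest eigenvalue and a unit eigenvector, found by power iteration."""
    d = len(cov)
    # Deterministic, non-uniform start so it is unlikely to be orthogonal
    # to the dominant direction.
    v = [1.0 / (i + 1) for i in range(d)]
    norm = math.sqrt(sum(x * x for x in v))
    v = [x / norm for x in v]
    for _ in range(iterations):
        w = mat_vec(cov, v)
        norm = math.sqrt(sum(x * x for x in w))
        if norm == 0.0:
            return 0.0, v  # no variance along the current direction
        v = [x / norm for x in w]
    # Rayleigh quotient gives the eigenvalue for the converged direction.
    eigenvalue = sum(a * b for a, b in zip(v, mat_vec(cov, v)))
    return eigenvalue, v


if __name__ == "__main__":
    embeddings = [[0.0, 0.0], [2.0, 0.0], [4.0, 0.0]]
    value, direction = principal_direction(covariance(embeddings))
    print(value, direction)
```

A large dominant eigenvalue means the candidates differ strongly along at least one direction; how a scalar EigenScore is derived from the spectrum is not specified in the diagram.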

### Key Observations

* **Process Flow:** The flow is strictly linear and unidirectional from input to output, with a single branching point at the decision diamond.

* **Color-Coding Consistency:** The colors in the legend are used consistently in the diagram. The `Sentence Embedding` bars in the center match the legend's pattern. The `Output Logit` (pink) in the LLM block matches the legend.

* **Spatial Grounding:** The `Input` is on the far left. The `LLM` block is central-left. The `Embedding` generation is in the center. The `Eigenvector` computation is center-right. The `Decision` and `Output` blocks are on the far right. The `Legend` is anchored at the bottom-left.

* **Example Logic:** The sample question about Neil Armstrong leads to a "No" decision (Low EigenScore), resulting in a factual answer. This suggests the system is designed to answer straightforward, fact-based questions confidently. A "High EigenScore" likely corresponds to questions where the LLM generates highly variable, uncertain, or nonsensical candidate answers, triggering the rejection.

### Interpretation

This diagram illustrates a **confidence or reliability filtering mechanism** for LLM outputs. Instead of relying on a single output, the system generates multiple candidate answers and analyzes their collective properties.

* **What it demonstrates:** The core idea is that the *variance* or *structure* among multiple generated answers (captured by the Eigenvector and its score) can be a proxy for the model's confidence or the question's answerability. A low variance (or a specific spectral signature) among candidates suggests consensus, leading to answer output. High variance or an anomalous spectral signature suggests uncertainty, leading to a refusal.

* **Relationships:** The LLM is the generator. The embedding analysis block is the evaluator. The decision diamond is the gatekeeper. Together they form a self-assessment step in which the model's own output distribution is used to judge reliability before a response is finalized.

* **Notable Implications:** This approach moves beyond simple token-level probability scores. It uses a more holistic, semantic-level analysis of multiple potential responses. It's a form of **ensemble reasoning** or **self-consistency checking** implemented with a single model rather than an ensemble of models. The rejection message ("Sorry we don't support answer for this question") implies this system is part of a larger application that defines a specific domain of "supportable" questions, and this EigenScore mechanism is the filter for that domain.


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Flowchart: Question-Answering System with Language Model

### Overview

The image depicts a technical workflow for a question-answering system built on a large language model (LLM). It illustrates how input tokens are processed through a decoder architecture, how candidate answer embeddings are generated, and how an eigenvector-based scoring mechanism selects the final output. The system includes confidence-based response validation.

### Components/Axes

1. **Input Section** (Leftmost block):

- Text: "On what date in 1969 did Neil Armstrong first set foot on the Moon?"

- Color coding:

- Yellow: Token Embedding

- Red: Current Token Embedding

- Pink: Output Logit

2. **LLM Processing Block** (Central):

- Components:

- FC Layer (fully connected layer)

- Feature Clip

- Decoder

- Input Tokens: Represented as vertical bars with color gradients

- Output: Three answer embeddings (purple, orange, yellow) labeled "Embedding of answer 1", "Embedding of answer 2", ..., "Embedding of answer K"

3. **Eigenvector Processing** (Middle-right):

- Input: Matrix of answer embeddings

- Output: Eigenvector with directional arrows (red for positive, blue for negative)

4. **Output Section** (Rightmost):

- Decision diamond: "High EigenScore?"

- Two possible outputs:

- "The answer is 20th July."

- "Sorry we don't support answer for this question."

### Detailed Analysis

- **Token Processing**: Input tokens are color-coded (yellow for embeddings, red for current token) and fed into the LLM's decoder architecture.

- **Answer Generation**: The decoder produces K candidate answer embeddings, visualized as horizontal bars with gradient colors.

- **Eigenvector Analysis**: The eigenvector component processes embeddings through a matrix operation, with directional arrows indicating vector relationships.

- **Confidence Threshold**: A binary decision node evaluates the EigenScore to determine output validity.

### Key Observations

1. The system uses a confidence threshold (EigenScore) to filter unsupported answers.

2. Color coding distinguishes different processing stages:

- Yellow/Red: Input token representations

- Purple/Orange/Yellow: Answer embeddings

- Pink: Output logits

3. The eigenvector component suggests a mathematical approach to answer selection.

4. The flowchart implies a probabilistic or vector-based similarity matching mechanism.

### Interpretation

This system demonstrates a hybrid approach combining:

1. **Neural Language Modeling**: For answer generation through token embeddings and decoder architecture

2. **Linear Algebra**: Using eigenvectors to analyze answer embeddings

3. **Confidence Scoring**: Implementing a threshold mechanism for response validation

The eigenvector-based scoring suggests the system measures answer relevance through vector space relationships, potentially identifying the most semantically similar answer to the question. The confidence threshold indicates an awareness of answer reliability, preventing responses to unsupported queries. The color-coded visualization aids in understanding the multi-stage processing pipeline from raw input to final output.
