## Game Environment Screenshot: Reinforcement Learning Experiment

### Overview

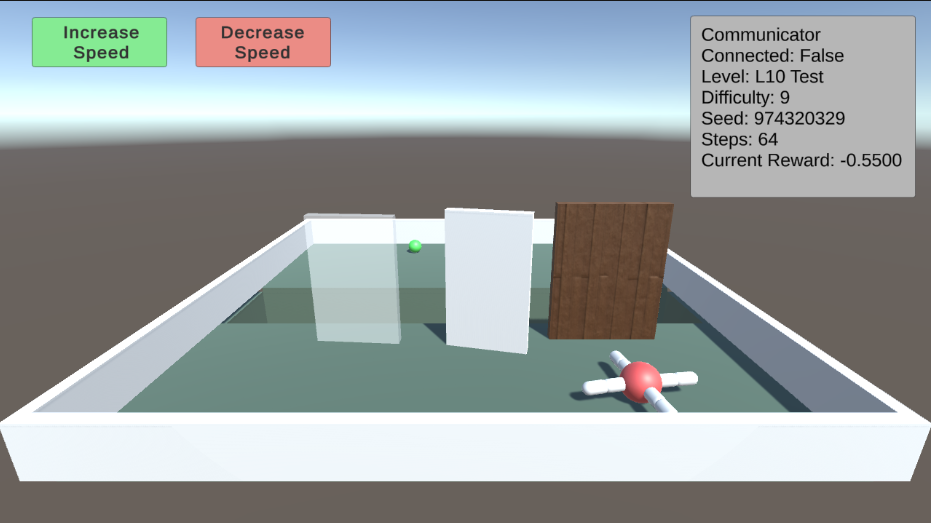

The image is a screenshot of a 3D game environment, likely used for reinforcement learning experiments. It shows a simple maze-like arena containing a green sphere (presumably the agent), several obstacles, and a target. A text box in the top-right corner displays game status information, and two buttons in the top-left corner are labeled "Increase Speed" and "Decrease Speed".

### Components/Axes

* **Environment:** A rectangular enclosure with light blue walls and a light blue floor.

* **Agent:** A green sphere on the left side of the environment.

* **Obstacles:** Three vertical barriers: a transparent barrier on the left, a white barrier in the middle, and a brown wooden barrier on the right.

* **Target:** A red sphere with four white cylinders extending from it, located in the bottom-right corner of the environment.

* **UI Elements (Top):**

  * "Increase Speed" button (green background) at the top left.

  * "Decrease Speed" button (red background) next to the "Increase Speed" button.

  * Text box (top right) displaying game information.

### Content Details

**UI Text Box (Top-Right):**

* **Communicator:**

  * **Connected:** False

* **Level:** L10 Test

* **Difficulty:** 9

* **Seed:** 974320329

* **Steps:** 64

* **Current Reward:** -0.5500
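
If these readings are to be logged or compared across screenshots, it can help to hold them in a typed record. The sketch below is a minimal illustration only: the `HudStatus` class, the `parse_hud` helper, and the assumption that the text box can be read as `Key: Value` lines are all hypothetical and are not taken from the environment's own code or documentation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class HudStatus:
    """Values transcribed from the top-right text box (hypothetical record)."""
    connected: bool
    level: str
    difficulty: int
    seed: int
    steps: int
    current_reward: float


def parse_hud(text: str) -> HudStatus:
    """Parse 'Key: Value' lines into a HudStatus.

    Assumption: the text box reads as one 'Key: Value' pair per line, as in
    the transcription above; this is not a documented output format.
    """
    fields = {}
    for line in text.splitlines():
        key, sep, value = line.partition(":")
        # Skip header lines such as "Communicator:" that carry no value.
        if sep and value.strip():
            fields[key.strip().lower()] = value.strip()
    return HudStatus(
        connected=fields["connected"] == "True",
        level=fields["level"],
        difficulty=int(fields["difficulty"]),
        seed=int(fields["seed"]),
        steps=int(fields["steps"]),
        current_reward=float(fields["current reward"]),
    )


# The values as they appear in the screenshot.
hud_text = """Communicator:
Connected: False
Level: L10 Test
Difficulty: 9
Seed: 974320329
Steps: 64
Current Reward: -0.5500"""

status = parse_hud(hud_text)
print(status)
```

A frozen dataclass keeps each transcribed snapshot immutable, so readings from different frames can be compared or stored without accidental edits.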

**Buttons (Top-Left):**

* **Increase Speed:** Green button with the text "Increase Speed".

* **Decrease Speed:** Red button with the text "Decrease Speed".

### Key Observations

* The agent (green sphere) is positioned at the start of the maze.

* The goal (red sphere with cylinders) is at the end of the maze.

* The agent needs to navigate around the obstacles to reach the goal.

* The "Connected" status is "False," suggesting the agent might not be connected to a central server or network.

* The "Current Reward" is negative, which is consistent with accumulated penalties (for example, a cost per step) and with the agent not yet having reached the goal.
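
A running total of per-step rewards is one common way a "Current Reward" readout could be produced. The sketch below illustrates that idea under a hypothetical constant step penalty (`STEP_PENALTY`, an assumed value not read from the environment). It also shows the arithmetic implied by the screenshot if, and only if, the displayed -0.55 after 64 steps came entirely from a uniform per-step penalty. The screenshot does not confirm this.

```python
from typing import Iterable, List


def accumulate_rewards(rewards: Iterable[float]) -> List[float]:
    """Return the running total after each step, i.e. the kind of value
    a 'Current Reward' readout could display."""
    running = 0.0
    totals = []
    for r in rewards:
        running += r
        totals.append(running)
    return totals


# Hypothetical episode: a small constant step penalty, goal not yet reached.
STEP_PENALTY = -0.01  # assumed value, not read from the environment
totals = accumulate_rewards([STEP_PENALTY] * 64)
print(round(totals[-1], 4))  # -0.64 under this assumption

# Conversely, if the displayed -0.55 after 64 steps were entirely a uniform
# per-step penalty, the implied rate would be about -0.0086 per step.
implied = -0.55 / 64
print(f"{implied:.6f}")
```

If collisions or other events also carry penalties, the per-step figure above overstates the step cost, so it should be read only as an upper bound on its magnitude under the uniform-penalty assumption.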

### Interpretation

The screenshot depicts a reinforcement learning environment in which an agent (the green sphere) is trained to navigate a maze and reach a target. The agent's performance is evaluated through the "Current Reward", which is presumably updated as the agent interacts with the environment. The "Steps" counter records the number of actions the agent has taken so far. The negative reward suggests that the agent is penalized for taking steps, for colliding with obstacles, or both.

The "Difficulty" and "Level" parameters likely indicate the complexity of the maze. The "Seed" value presumably initializes the random number generator, so that a run can be reproduced by reusing the same seed.

The "Increase Speed" and "Decrease Speed" buttons appear to allow manual adjustment of the agent's movement speed, possibly for debugging or demonstration purposes. Finally, the disconnected communicator could mean that the agent is running locally rather than communicating with a remote server.
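
The reproducibility role of a seed can be illustrated with a seeded pseudo-random generator. In the sketch below, `sample_layout` is a hypothetical stand-in for procedural level generation. The coordinate bounds (0 to 40) and the obstacle count are arbitrary assumptions, not properties of the environment in the screenshot. Reusing the seed shown on screen reproduces the same draws, and changing it produces different ones.

```python
import random
from typing import List, Tuple


def sample_layout(seed: int, n_obstacles: int = 3) -> List[Tuple[float, float]]:
    """Hypothetical level generator: draw obstacle (x, z) positions from a
    generator seeded with `seed`. Bounds are arbitrary for illustration."""
    rng = random.Random(seed)  # independent generator; global state untouched
    return [(rng.uniform(0.0, 40.0), rng.uniform(0.0, 40.0))
            for _ in range(n_obstacles)]


a = sample_layout(974320329)  # seed from the screenshot
b = sample_layout(974320329)  # same seed again
c = sample_layout(974320330)  # a different seed
print(a == b)  # True: same seed, same layout
print(a == c)  # different seed, (almost certainly) different layout
```

Using a dedicated `random.Random` instance rather than the module-level functions keeps the level generator's sequence isolated from other code that draws random numbers. In a real simulator, full reproducibility may also depend on other sources of nondeterminism, such as physics timing, which a seed alone does not control.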