## Line Chart: Model Accuracy Comparison During Training

### Overview

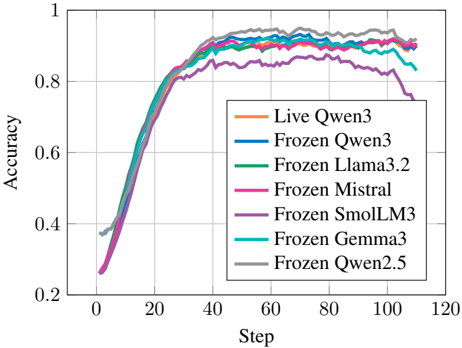

This image is a line chart comparing the training accuracy of seven different language models over 110 training steps. The chart plots "Accuracy" on the y-axis against "Step" on the x-axis, showing the learning progression of each model.

### Components/Axes

* **Chart Type:** Line chart with multiple series.

* **X-Axis:** Labeled "Step". Scale ranges from 0 to 120, with major tick marks every 20 steps (0, 20, 40, 60, 80, 100, 120).

* **Y-Axis:** Labeled "Accuracy". Scale ranges from 0.2 to 1.0, with major tick marks every 0.2 units (0.2, 0.4, 0.6, 0.8, 1.0).

* **Legend:** Located in the bottom-right quadrant of the chart area. It contains seven entries, each associating a model name with a specific line color.

1. **Live Qwen3** - Orange line

2. **Frozen Qwen3** - Blue line

3. **Frozen Llama3.2** - Green line

4. **Frozen Mistral** - Pink line

5. **Frozen SmolLM3** - Purple line

6. **Frozen Gemma3** - Cyan line

7. **Frozen Qwen2.5** - Gray line

### Detailed Analysis

The chart displays the training trajectory for each model. All models start at a low accuracy (between ~0.25 and ~0.38) at Step 0 and show a rapid, steep increase in accuracy until approximately Step 40. After Step 40, the rate of improvement slows significantly: the remaining gains are small (roughly 0.05-0.10), most models plateau with minor fluctuations, and several decline toward the end of the run.

**Trend Verification & Data Points (Approximate):**

1. **Live Qwen3 (Orange):**

* **Trend:** Sharp initial rise, then plateaus at the highest level among all models.

* **Key Points:** Starts ~0.28. Reaches ~0.85 by Step 40. Peaks at ~0.95 around Step 70-80. Ends at ~0.92 at Step 110.

2. **Frozen Qwen3 (Blue):**

* **Trend:** Very similar trajectory to Live Qwen3, but consistently slightly lower after the initial rise.

* **Key Points:** Starts ~0.28. Reaches ~0.83 by Step 40. Plateaus around ~0.90-0.92. Ends at ~0.90 at Step 110.

3. **Frozen Llama3.2 (Green):**

* **Trend:** Follows the general pack closely, ending in the middle of the high-performing group.

* **Key Points:** Starts ~0.27. Reaches ~0.82 by Step 40. Plateaus around ~0.88-0.90. Ends at ~0.89 at Step 110.

4. **Frozen Mistral (Pink):**

* **Trend:** Rises with the group but shows a more pronounced decline in the later stages.

* **Key Points:** Starts ~0.26. Reaches ~0.80 by Step 40. Peaks near ~0.90 around Step 60, then begins a gradual decline. Ends at ~0.84 at Step 110.

5. **Frozen SmolLM3 (Purple):**

* **Trend:** Underperforms the other models from the initial rise onward, with the lowest plateau of the group, and shows a significant drop late in the run (beginning around Step 80-90).

* **Key Points:** Starts ~0.25. Reaches only ~0.75 by Step 40. Plateaus around ~0.82-0.85. Begins a sharp decline after Step 90, ending at ~0.75 at Step 110.

6. **Frozen Gemma3 (Cyan):**

* **Trend:** Performs well initially but exhibits a notable drop in accuracy towards the end of the plotted steps.

* **Key Points:** Starts ~0.38 (highest initial point). Reaches ~0.85 by Step 40. Plateaus around ~0.88-0.90. Shows a sharp dip starting around Step 100, ending at ~0.84 at Step 110.

7. **Frozen Qwen2.5 (Gray):**

* **Trend:** Consistently among the top performers, closely matching Live Qwen3 after Step 40.

* **Key Points:** Starts ~0.37. Reaches ~0.84 by Step 40. Plateaus at a high level, ~0.92-0.94. Ends at ~0.91 at Step 110.
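The rise-then-plateau shape can be checked against the approximate readings listed above. The following is a minimal sketch, assuming the Step 0, Step 40, and Step 110 values given for each model; `READINGS` and `phase_slopes` are hypothetical names introduced for illustration, and the numbers are visual estimates from the chart, not logged metrics.

```python
# Approximate (Step 0, Step 40, Step 110) accuracy readings from the list above.
# These are visual estimates from the chart, not logged training metrics.
READINGS = {
    "Live Qwen3": (0.28, 0.85, 0.92),
    "Frozen Qwen3": (0.28, 0.83, 0.90),
    "Frozen Llama3.2": (0.27, 0.82, 0.89),
    "Frozen Mistral": (0.26, 0.80, 0.84),
    "Frozen SmolLM3": (0.25, 0.75, 0.75),
    "Frozen Gemma3": (0.38, 0.85, 0.84),
    "Frozen Qwen2.5": (0.37, 0.84, 0.91),
}


def phase_slopes(start, mid, end, mid_step=40, end_step=110):
    """Return the average accuracy change per step for the early and late phases."""
    early = (mid - start) / mid_step
    late = (end - mid) / (end_step - mid_step)
    return early, late


if __name__ == "__main__":
    for model, (start, mid, end) in READINGS.items():
        early, late = phase_slopes(start, mid, end)
        print(f"{model:16s} early {early:+.4f}/step   late {late:+.4f}/step")
```

Under these readings, every model's early-phase slope is more than an order of magnitude larger than its late-phase slope. The late-phase average spans both the plateau and any final decline, so it hides mid-run peaks (Frozen SmolLM3 comes out flat on average despite rising to ~0.82-0.85 and falling back); it only illustrates the overall slowdown.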

### Key Observations

1. **Performance Clustering:** After Step 40, the models separate into performance tiers, most clearly by the end of the run. The top tier includes Live Qwen3, Frozen Qwen2.5, Frozen Qwen3, and Frozen Llama3.2 (plateaus of ~0.88-0.95, ending at ~0.89-0.92). The middle tier includes Frozen Mistral and Frozen Gemma3 (plateaus near ~0.88-0.90, both ending at ~0.84). Frozen SmolLM3 forms a clear bottom tier (plateau of ~0.82-0.85, ending at ~0.75).

2. **Late-Stage Degradation:** Three models lose accuracy late in the run: Frozen SmolLM3 (most severe, falling from ~0.82-0.85 to ~0.75), Frozen Gemma3 (a sharp dip starting around Step 100), and Frozen Mistral (a more gradual decline that begins earlier, around Step 60). This could indicate overfitting or training instability.

3. **"Live" vs. "Frozen" Qwen3:** The "Live" version of Qwen3 (orange) maintains a slight but consistent advantage over its "Frozen" counterpart (blue) throughout the plateau phase.

4. **Initial Conditions:** Frozen Gemma3 and Frozen Qwen2.5 start at a notably higher accuracy (~0.37-0.38) compared to the others (~0.25-0.28), suggesting different initialization or pre-training.
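Observations 1 and 2 can be made concrete with the approximate readings from the Detailed Analysis. The sketch below is illustrative only: it takes the stated peak, or the upper end of the stated plateau range, as each model's "plateau high", and the tier cut-offs of 0.88 and 0.80 on final accuracy are assumptions chosen to match the grouping described here, not values shown in the chart. The names `PLATEAU_AND_FINAL`, `tier`, `late_drop`, and `summarize` are hypothetical.

```python
# (plateau high, final accuracy at Step 110), approximate chart readings.
# "Plateau high" is the stated peak or the upper end of the stated plateau
# range, which is an assumption made for this sketch.
PLATEAU_AND_FINAL = {
    "Live Qwen3": (0.95, 0.92),
    "Frozen Qwen3": (0.92, 0.90),
    "Frozen Llama3.2": (0.90, 0.89),
    "Frozen Mistral": (0.90, 0.84),
    "Frozen SmolLM3": (0.85, 0.75),
    "Frozen Gemma3": (0.90, 0.84),
    "Frozen Qwen2.5": (0.94, 0.91),
}


def tier(final_acc, top_cut=0.88, bottom_cut=0.80):
    """Assign a tier from final accuracy; both cut-offs are hypothetical."""
    if final_acc >= top_cut:
        return "top"
    if final_acc >= bottom_cut:
        return "middle"
    return "bottom"


def late_drop(plateau_high, final_acc):
    """Drop from the plateau high to the final reading, rounded to 2 decimals."""
    return round(plateau_high - final_acc, 2)


def summarize(readings):
    """Return {model: (tier, drop)} ordered by largest drop first."""
    rows = {m: (tier(f), late_drop(p, f)) for m, (p, f) in readings.items()}
    return dict(sorted(rows.items(), key=lambda kv: kv[1][1], reverse=True))


if __name__ == "__main__":
    for model, (t, drop) in summarize(PLATEAU_AND_FINAL).items():
        print(f"{model:16s} tier={t:6s} drop={drop:.2f}")
```

With these assumed inputs, the three largest drops belong to Frozen SmolLM3, Frozen Mistral, and Frozen Gemma3, and the four models placed in the top tier are the ones named in observation 1. Different cut-offs or a different definition of the plateau high would shift the numbers, so the output is a reading aid, not a measurement.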

### Interpretation

This chart likely visualizes a comparative study of model fine-tuning or continued training methodologies. The "Frozen" prefix suggests these models have their core parameters frozen, and only a smaller subset (like an adapter layer) is being trained. "Live Qwen3" may represent a fully trainable baseline.

The data suggests that:

* **Architecture Matters:** Different base models (Qwen, Llama, Mistral, etc.) exhibit different learning dynamics and final performance ceilings, apparently under the same training protocol.

* **Stability Varies:** The late-stage drops for SmolLM3, Gemma3, and Mistral suggest their training became unstable or began to overfit, while the Qwen-based models remained comparatively stable, ending within ~0.03 of their plateau levels.

* **The "Qwen Family" Excels:** Both Qwen2.5 and Qwen3 variants (live and frozen) occupy the top performance positions, indicating strong results from this model family in this specific task.

* **Size, Starting Point, and Stability Interact:** In this run, the highest-performing models (Qwen family) were also the most stable, so accuracy and stability did not trade off against each other. The model with the best starting point (Gemma3) did not maintain its lead, and the smallest model (SmolLM3, as its name implies) performed worst, which points to possible interactions between model size, initial capability, and training stability.

The chart provides a clear visual argument for the effectiveness of the Qwen architectures in this context and raises questions about the causes of performance degradation in other models during extended training.