## Line Charts: Model Performance on Math Problems

### Overview

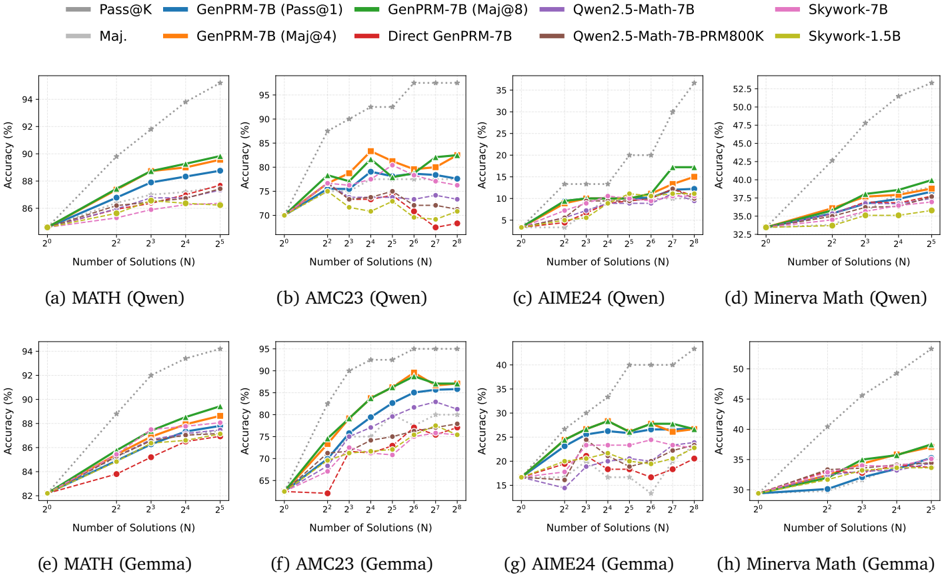

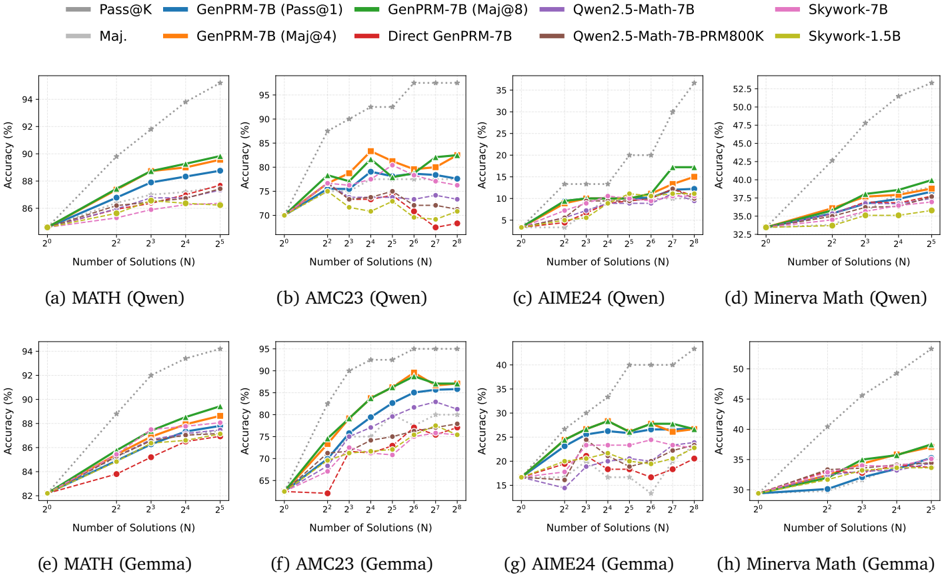

The image contains eight line charts comparing answer-selection methods and reward models on four math problem datasets. The charts are arranged in a 2x4 grid, with the top row labeled "Qwen" and the bottom row labeled "Gemma." Each chart plots accuracy against the number of solutions used. The curves compared are Pass@K, majority voting (Maj.), GenPRM-7B in several configurations (Pass@1, Maj@4, Maj@8, Direct), Qwen2.5-Math-7B, Qwen2.5-Math-7B-PRM800K, Skywork-7B, and Skywork-1.5B. Note that Pass@K is a metric (a problem counts as solved if any of the sampled solutions is correct), not a model.

### Components/Axes

* **X-axis (Horizontal):** Number of Solutions (N). The x-axis is logarithmic, with values ranging from 2<sup>0</sup> to 2<sup>5</sup> in most charts, and up to 2<sup>8</sup> in the AMC23 charts.

* **Y-axis (Vertical):** Accuracy (%). The y-axis scale varies depending on the chart, ranging from approximately 85% to 95% for MATH, 65% to 95% for AMC23, 5% to 35% for AIME24, and 32.5% to 52.5% for Minerva Math.

* **Chart Titles:** Each chart has a title indicating the dataset and the model family used:

    * (a) MATH (Qwen)

    * (b) AMC23 (Qwen)

    * (c) AIME24 (Qwen)

    * (d) Minerva Math (Qwen)

    * (e) MATH (Gemma)

    * (f) AMC23 (Gemma)

    * (g) AIME24 (Gemma)

    * (h) Minerva Math (Gemma)

* **Legend (Top):** The legend is located at the top of the image and identifies each curve by color and name:

    * **Gray dotted line:** Pass@K

    * **Blue line:** GenPRM-7B (Pass@1)

    * **Gray line:** Maj.

    * **Orange line:** GenPRM-7B (Maj@4)

    * **Green line:** GenPRM-7B (Maj@8)

    * **Red line:** Direct GenPRM-7B

    * **Purple line:** Qwen2.5-Math-7B

    * **Brown line:** Qwen2.5-Math-7B-PRM800K

    * **Pink line:** Skywork-7B

    * **Yellow-Green line:** Skywork-1.5B
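The logarithmic x-axis described above can be made concrete with a short sketch. The function name `solution_budgets` is hypothetical and introduced only for illustration; the exponent limits (5 and 8) are the ones stated in the axis description.

```python
import math

def solution_budgets(max_exponent: int) -> list[int]:
    """Return the N values 2^0 .. 2^max_exponent marked on a log2 x-axis."""
    return [2 ** k for k in range(max_exponent + 1)]

# Panels described as ending at 2^5 and at 2^8, respectively.
budgets_small = solution_budgets(5)
budgets_large = solution_budgets(8)

# On a log2 axis, every doubling of N occupies the same horizontal distance,
# so the tick positions are simply the exponents 0, 1, 2, ...
positions = [math.log2(n) for n in budgets_small]

print(budgets_small)
print(positions)
```

Reading the axis this way makes it clear that the rightmost point of a 2<sup>5</sup> panel uses 32 solutions per problem, while a 2<sup>8</sup> panel extends to 256.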

### Detailed Analysis

#### (a) MATH (Qwen)

* **Pass@K (Gray dotted line):** Accuracy increases sharply from approximately 82% at 2<sup>0</sup> to 94% at 2<sup>5</sup>.

* **GenPRM-7B (Pass@1) (Blue line):** Accuracy increases from approximately 84% at 2<sup>0</sup> to 89% at 2<sup>5</sup>.

* **Maj. (Gray line):** Accuracy remains relatively flat around 86%.

* **GenPRM-7B (Maj@4) (Orange line):** Accuracy increases slightly from approximately 85% to 88%.

* **GenPRM-7B (Maj@8) (Green line):** Accuracy increases slightly from approximately 85% to 90%.

* **Direct GenPRM-7B (Red line):** Accuracy remains relatively flat around 85%.

* **Qwen2.5-Math-7B (Purple line):** Accuracy remains relatively flat around 87%.

* **Qwen2.5-Math-7B-PRM800K (Brown line):** Accuracy remains relatively flat around 86%.

* **Skywork-7B (Pink line):** Accuracy remains relatively flat around 87%.

* **Skywork-1.5B (Yellow-Green line):** Accuracy remains relatively flat around 86%.

#### (b) AMC23 (Qwen)

* **Pass@K (Gray dotted line):** Accuracy increases sharply from approximately 65% at 2<sup>0</sup> to 95% at 2<sup>8</sup>.

* **GenPRM-7B (Pass@1) (Blue line):** Accuracy increases from approximately 70% at 2<sup>0</sup> to 85% at 2<sup>8</sup>.

* **Maj. (Gray line):** Accuracy fluctuates between 75% and 80%.

* **GenPRM-7B (Maj@4) (Orange line):** Accuracy fluctuates between 75% and 85%.

* **GenPRM-7B (Maj@8) (Green line):** Accuracy fluctuates between 75% and 85%.

* **Direct GenPRM-7B (Red line):** Accuracy fluctuates between 70% and 75%.

* **Qwen2.5-Math-7B (Purple line):** Accuracy fluctuates between 75% and 80%.

* **Qwen2.5-Math-7B-PRM800K (Brown line):** Accuracy fluctuates between 70% and 75%.

* **Skywork-7B (Pink line):** Accuracy fluctuates between 75% and 80%.

* **Skywork-1.5B (Yellow-Green line):** Accuracy fluctuates between 70% and 75%.

#### (c) AIME24 (Qwen)

* **Pass@K (Gray dotted line):** Accuracy increases sharply from approximately 5% at 2<sup>0</sup> to 35% at 2<sup>8</sup>.

* **GenPRM-7B (Pass@1) (Blue line):** Accuracy increases from approximately 5% at 2<sup>0</sup> to 20% at 2<sup>8</sup>.

* **Maj. (Gray line):** Accuracy fluctuates between 5% and 10%.

* **GenPRM-7B (Maj@4) (Orange line):** Accuracy fluctuates between 10% and 25%.

* **GenPRM-7B (Maj@8) (Green line):** Accuracy fluctuates between 10% and 25%.

* **Direct GenPRM-7B (Red line):** Accuracy fluctuates between 5% and 10%.

* **Qwen2.5-Math-7B (Purple line):** Accuracy fluctuates between 10% and 20%.

* **Qwen2.5-Math-7B-PRM800K (Brown line):** Accuracy fluctuates between 5% and 10%.

* **Skywork-7B (Pink line):** Accuracy fluctuates between 10% and 20%.

* **Skywork-1.5B (Yellow-Green line):** Accuracy fluctuates between 5% and 10%.

#### (d) Minerva Math (Qwen)

* **Pass@K (Gray dotted line):** Accuracy increases sharply from approximately 32.5% at 2<sup>0</sup> to 52.5% at 2<sup>5</sup>.

* **GenPRM-7B (Pass@1) (Blue line):** Accuracy increases from approximately 32.5% at 2<sup>0</sup> to 40% at 2<sup>5</sup>.

* **Maj. (Gray line):** Accuracy remains relatively flat around 37.5%.

* **GenPRM-7B (Maj@4) (Orange line):** Accuracy remains relatively flat around 42.5%.

* **GenPRM-7B (Maj@8) (Green line):** Accuracy remains relatively flat around 40%.

* **Direct GenPRM-7B (Red line):** Accuracy remains relatively flat around 37.5%.

* **Qwen2.5-Math-7B (Purple line):** Accuracy remains relatively flat around 37.5%.

* **Qwen2.5-Math-7B-PRM800K (Brown line):** Accuracy remains relatively flat around 37.5%.

* **Skywork-7B (Pink line):** Accuracy remains relatively flat around 37.5%.

* **Skywork-1.5B (Yellow-Green line):** Accuracy remains relatively flat around 37.5%.

#### (e) MATH (Gemma)

* **Pass@K (Gray dotted line):** Accuracy increases sharply from approximately 82% at 2<sup>0</sup> to 94% at 2<sup>5</sup>.

* **GenPRM-7B (Pass@1) (Blue line):** Accuracy increases from approximately 82% at 2<sup>0</sup> to 90% at 2<sup>5</sup>.

* **Maj. (Gray line):** Accuracy remains relatively flat around 84%.

* **GenPRM-7B (Maj@4) (Orange line):** Accuracy increases slightly from approximately 83% to 86%.

* **GenPRM-7B (Maj@8) (Green line):** Accuracy increases slightly from approximately 83% to 88%.

* **Direct GenPRM-7B (Red line):** Accuracy remains relatively flat around 84%.

* **Qwen2.5-Math-7B (Purple line):** Accuracy remains relatively flat around 85%.

* **Qwen2.5-Math-7B-PRM800K (Brown line):** Accuracy remains relatively flat around 84%.

* **Skywork-7B (Pink line):** Accuracy remains relatively flat around 85%.

* **Skywork-1.5B (Yellow-Green line):** Accuracy remains relatively flat around 84%.

#### (f) AMC23 (Gemma)

* **Pass@K (Gray dotted line):** Accuracy increases sharply from approximately 65% at 2<sup>0</sup> to 95% at 2<sup>8</sup>.

* **GenPRM-7B (Pass@1) (Blue line):** Accuracy increases from approximately 65% at 2<sup>0</sup> to 90% at 2<sup>8</sup>.

* **Maj. (Gray line):** Accuracy fluctuates between 70% and 75%.

* **GenPRM-7B (Maj@4) (Orange line):** Accuracy fluctuates between 75% and 85%.

* **GenPRM-7B (Maj@8) (Green line):** Accuracy fluctuates between 75% and 85%.

* **Direct GenPRM-7B (Red line):** Accuracy fluctuates between 70% and 75%.

* **Qwen2.5-Math-7B (Purple line):** Accuracy fluctuates between 75% and 80%.

* **Qwen2.5-Math-7B-PRM800K (Brown line):** Accuracy fluctuates between 70% and 75%.

* **Skywork-7B (Pink line):** Accuracy fluctuates between 75% and 80%.

* **Skywork-1.5B (Yellow-Green line):** Accuracy fluctuates between 70% and 75%.

#### (g) AIME24 (Gemma)

* **Pass@K (Gray dotted line):** Accuracy increases sharply from approximately 15% at 2<sup>0</sup> to 40% at 2<sup>8</sup>.

* **GenPRM-7B (Pass@1) (Blue line):** Accuracy increases from approximately 15% at 2<sup>0</sup> to 30% at 2<sup>8</sup>.

* **Maj. (Gray line):** Accuracy fluctuates between 15% and 20%.

* **GenPRM-7B (Maj@4) (Orange line):** Accuracy fluctuates between 20% and 25%.

* **GenPRM-7B (Maj@8) (Green line):** Accuracy fluctuates between 20% and 25%.

* **Direct GenPRM-7B (Red line):** Accuracy fluctuates between 15% and 20%.

* **Qwen2.5-Math-7B (Purple line):** Accuracy fluctuates between 20% and 25%.

* **Qwen2.5-Math-7B-PRM800K (Brown line):** Accuracy fluctuates between 15% and 20%.

* **Skywork-7B (Pink line):** Accuracy fluctuates between 20% and 25%.

* **Skywork-1.5B (Yellow-Green line):** Accuracy fluctuates between 15% and 20%.

#### (h) Minerva Math (Gemma)

* **Pass@K (Gray dotted line):** Accuracy increases sharply from approximately 30% at 2<sup>0</sup> to 52% at 2<sup>5</sup>.

* **GenPRM-7B (Pass@1) (Blue line):** Accuracy increases from approximately 30% at 2<sup>0</sup> to 40% at 2<sup>5</sup>.

* **Maj. (Gray line):** Accuracy remains relatively flat around 35%.

* **GenPRM-7B (Maj@4) (Orange line):** Accuracy remains relatively flat around 37%.

* **GenPRM-7B (Maj@8) (Green line):** Accuracy remains relatively flat around 37%.

* **Direct GenPRM-7B (Red line):** Accuracy remains relatively flat around 35%.

* **Qwen2.5-Math-7B (Purple line):** Accuracy remains relatively flat around 35%.

* **Qwen2.5-Math-7B-PRM800K (Brown line):** Accuracy remains relatively flat around 35%.

* **Skywork-7B (Pink line):** Accuracy remains relatively flat around 35%.

* **Skywork-1.5B (Yellow-Green line):** Accuracy remains relatively flat around 35%.
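The start and end readings listed above can be turned into accuracy gains (in percentage points) for a quick cross-panel comparison. The sketch below copies only the Pass@K and GenPRM-7B (Pass@1) readings from the bullets; the values are approximate chart readings, and the names `READINGS`, `gain`, and `panel_gains` are hypothetical helpers introduced for illustration.

```python
# (start %, end %) readings copied from the per-panel bullets above.
# They are approximate values read off the charts, not exact results.
READINGS = {
    "(a) MATH (Qwen)": {"Pass@K": (82.0, 94.0), "GenPRM-7B (Pass@1)": (84.0, 89.0)},
    "(b) AMC23 (Qwen)": {"Pass@K": (65.0, 95.0), "GenPRM-7B (Pass@1)": (70.0, 85.0)},
    "(c) AIME24 (Qwen)": {"Pass@K": (5.0, 35.0), "GenPRM-7B (Pass@1)": (5.0, 20.0)},
    "(d) Minerva Math (Qwen)": {"Pass@K": (32.5, 52.5), "GenPRM-7B (Pass@1)": (32.5, 40.0)},
    "(e) MATH (Gemma)": {"Pass@K": (82.0, 94.0), "GenPRM-7B (Pass@1)": (82.0, 90.0)},
    "(f) AMC23 (Gemma)": {"Pass@K": (65.0, 95.0), "GenPRM-7B (Pass@1)": (65.0, 90.0)},
    "(g) AIME24 (Gemma)": {"Pass@K": (15.0, 40.0), "GenPRM-7B (Pass@1)": (15.0, 30.0)},
    "(h) Minerva Math (Gemma)": {"Pass@K": (30.0, 52.0), "GenPRM-7B (Pass@1)": (30.0, 40.0)},
}

def gain(start_end: tuple[float, float]) -> float:
    """Accuracy gain in percentage points from the smallest to the largest N."""
    start, end = start_end
    return end - start

def panel_gains(readings: dict) -> dict:
    """Map each panel to the gain of every series listed for it."""
    return {
        panel: {name: gain(values) for name, values in series.items()}
        for panel, series in readings.items()
    }

GAINS = panel_gains(READINGS)
for panel, series in GAINS.items():
    print(panel, series)
```

With these readings, the Pass@K gain exceeds the GenPRM-7B (Pass@1) gain in every panel, which matches the steeper Pass@K curves described in the text.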

### Key Observations

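* **Pass@K is the highest curve in every chart** as the number of solutions increases, showing a steep upward trend. Because Pass@K counts a problem as solved if any of the N sampled solutions is correct, it acts as an upper bound for the other methods rather than as a competing model.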

* The performance of other models (GenPRM-7B variants, Qwen2.5-Math-7B, Skywork models) tends to plateau or fluctuate, with less significant improvements as the number of solutions increases.

* The AMC23 and AIME24 datasets show a wider range of accuracy values and more fluctuation compared to the MATH and Minerva Math datasets.

* The "Qwen" models generally show slightly better performance than the "Gemma" models, especially in the MATH and Minerva Math datasets.
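The gap between Pass@K and majority voting noted above follows from how the two metrics are defined. The following is a minimal sketch on toy data, assuming final answers are compared as exact strings; the problems, samples, and function names are hypothetical and are not taken from the charts.

```python
from collections import Counter

def pass_at_k(samples: list[str], reference: str) -> bool:
    """Solved if at least one sampled answer equals the reference."""
    return any(answer == reference for answer in samples)

def majority_vote(samples: list[str]) -> str:
    """Return the most frequent sampled answer."""
    return Counter(samples).most_common(1)[0][0]

def accuracy(flags: list[bool]) -> float:
    """Percentage of problems counted as solved."""
    return 100.0 * sum(flags) / len(flags)

# Hypothetical toy problems, each with four sampled final answers.
PROBLEMS = [
    {"reference": "4", "samples": ["4", "4", "5", "4"]},
    {"reference": "7", "samples": ["3", "3", "7", "1"]},
    {"reference": "9", "samples": ["2", "2", "8", "2"]},
]

def evaluate(problems: list[dict], n: int) -> tuple[float, float]:
    """Return (Pass@N, Maj@N) accuracy using the first n samples per problem."""
    pass_flags = [pass_at_k(p["samples"][:n], p["reference"]) for p in problems]
    maj_flags = [majority_vote(p["samples"][:n]) == p["reference"] for p in problems]
    return accuracy(pass_flags), accuracy(maj_flags)

for n in (1, 2, 4):
    print(n, evaluate(PROBLEMS, n))
```

In this toy set, the second problem has one correct sample among four, so Pass@4 credits it while the majority vote still returns the wrong answer. Pass@N can only grow as N grows, whereas a voting or selection method has to actually pick the correct answer.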

### Interpretation

The charts show how increasing the number of solutions (N) affects accuracy on math problems. Pass@K rises significantly with N because it credits a problem whenever at least one of the N attempts is correct. The other curves show limited improvement with increasing N, suggesting that these methods are less effective at exploiting multiple solutions or have reached a performance ceiling.
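One way to see why a selection method can plateau below Pass@K is a score-based best-of-N sketch. This is an assumption for illustration only: the description does not state how the non-oracle curves were produced, and the candidate answers, scores, and function names below are hypothetical.

```python
def best_of_n(candidates: list[tuple[str, float]]) -> str:
    """Pick the answer with the highest score among (answer, score) pairs."""
    return max(candidates, key=lambda pair: pair[1])[0]

def oracle_hit(candidates: list[tuple[str, float]], reference: str) -> bool:
    """Pass@N-style check: is any candidate answer correct?"""
    return any(answer == reference for answer, _ in candidates)

REFERENCE = "12"

# Hypothetical scores from a weak scorer: the wrong answer "10" is ranked first.
weak_scores = [("10", 0.9), ("12", 0.6), ("11", 0.2)]

# Hypothetical scores from a stronger scorer: the correct answer is ranked first.
strong_scores = [("10", 0.3), ("12", 0.8), ("11", 0.2)]

for name, scored in (("weak", weak_scores), ("strong", strong_scores)):
    picked = best_of_n(scored)
    print(name, picked, picked == REFERENCE, oracle_hit(scored, REFERENCE))
```

In both cases the correct answer is among the candidates, so the oracle check succeeds, but only the stronger scorer selects it. Under this assumption, adding more candidates helps a selection method only to the extent that its scores rank a correct candidate first.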

The differences in performance across datasets (MATH, AMC23, AIME24, Minerva Math) highlight the varying difficulty and characteristics of these problem sets. The AMC23 and AIME24 datasets, with their wider accuracy ranges and fluctuations, may present more complex challenges for the models.

The comparison between "Qwen" and "Gemma" models suggests that the underlying architecture or training data of the "Qwen" models may provide a slight advantage in solving these math problems, particularly in the MATH and Minerva Math datasets.
