TECHNICAL ASSET FINGERPRINT

68f7f61ba46f120af21384f6


EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

## Bar Chart: Features from Gemma-7B, LLAMA2-7B, and LLAMA3-8B

### Overview

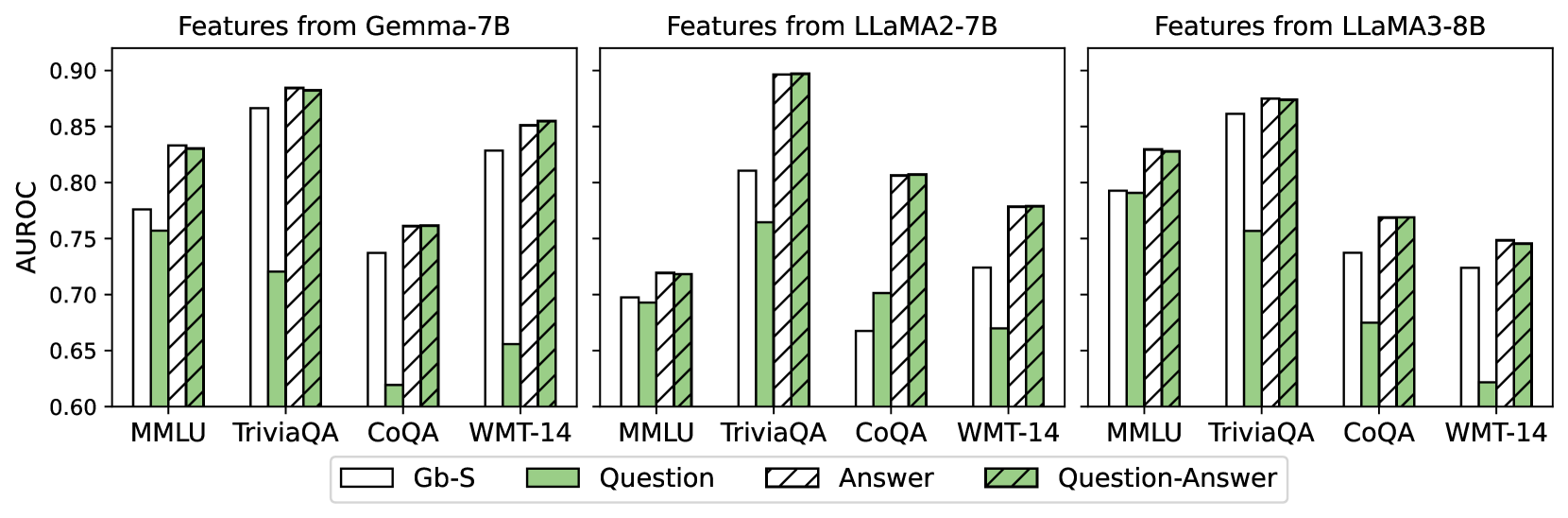

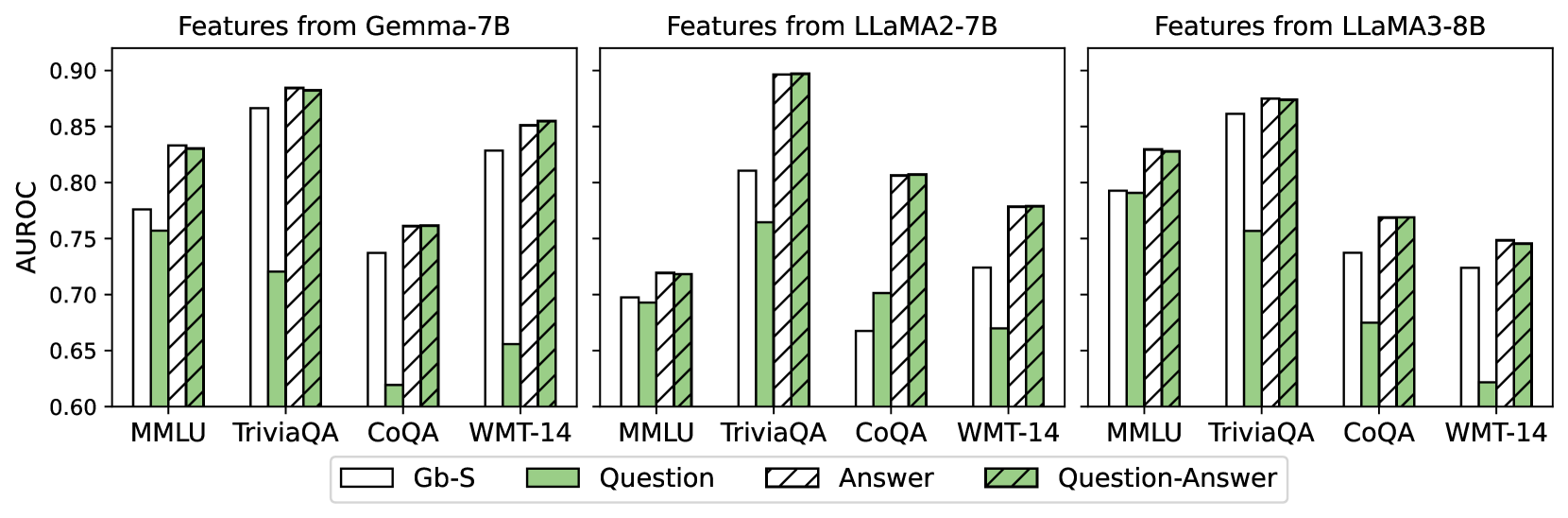

The image presents three bar charts comparing the AUROC scores of features extracted from three language models: Gemma-7B, LLAMA2-7B, and LLAMA3-8B. Each chart displays the AUROC scores for four tasks (MMLU, TriviaQA, CoQA, and WMT-14) across the four feature types shown in the legend: Gb-S, Question, Answer, and Question-Answer. The readings listed under Detailed Analysis cover Gb-S, Question, and Question-Answer.

### Components/Axes

* **Title:** Features from Gemma-7B (left), Features from LLAMA2-7B (center), Features from LLAMA3-8B (right)

* **Y-axis:** AUROC, ranging from 0.60 to 0.90 in increments of 0.05.

* **X-axis:** Categorical, representing the tasks: MMLU, TriviaQA, CoQA, WMT-14.

* **Legend:** Located at the bottom of the chart.
    * Gb-S: White bars
    * Question: Light green bars
    * Answer: White bars with black diagonal stripes
    * Question-Answer: Light green bars with black diagonal stripes

### Detailed Analysis

**Chart 1: Features from Gemma-7B**

* **MMLU:**
    * Gb-S: ~0.78
    * Question: ~0.76
    * Question-Answer: ~0.83
* **TriviaQA:**
    * Gb-S: ~0.87
    * Question: ~0.72
    * Question-Answer: ~0.89
* **CoQA:**
    * Gb-S: ~0.74
    * Question: ~0.76
    * Question-Answer: ~0.77
* **WMT-14:**
    * Gb-S: ~0.66
    * Question: ~0.85
    * Question-Answer: ~0.86

**Chart 2: Features from LLAMA2-7B**

* **MMLU:**
    * Gb-S: ~0.72
    * Question: ~0.70
    * Question-Answer: ~0.73
* **TriviaQA:**
    * Gb-S: ~0.81
    * Question: ~0.77
    * Question-Answer: ~0.90
* **CoQA:**
    * Gb-S: ~0.62
    * Question: ~0.67
    * Question-Answer: ~0.79
* **WMT-14:**
    * Gb-S: ~0.78
    * Question: ~0.78
    * Question-Answer: ~0.80

**Chart 3: Features from LLAMA3-8B**

* **MMLU:**
    * Gb-S: ~0.78
    * Question: ~0.79
    * Question-Answer: ~0.83
* **TriviaQA:**
    * Gb-S: ~0.86
    * Question: ~0.72
    * Question-Answer: ~0.88
* **CoQA:**
    * Gb-S: ~0.72
    * Question: ~0.77
    * Question-Answer: ~0.78
* **WMT-14:**
    * Gb-S: ~0.73
    * Question: ~0.75
    * Question-Answer: ~0.75

### Key Observations

* For all three models, TriviaQA generally yields the highest AUROC scores, especially for the Question-Answer feature.

* CoQA tends to have the lowest AUROC scores across all models and feature types.

* The Question-Answer feature generally performs better than the Question feature, and both are often better than Gb-S.

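* LLAMA2-7B shows particularly low AUROC scores on CoQA for the Gb-S (~0.62) and Question (~0.67) features, while its Question-Answer score (~0.79) is in line with the other two models.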

### Interpretation

The charts compare the performance of different features extracted from three language models on various tasks, as measured by AUROC. The results suggest that the type of feature used significantly impacts performance, with Question-Answer features generally outperforming Gb-S and Question features. The models also exhibit varying levels of success across different tasks, indicating that some tasks are inherently more challenging or better suited to the models' capabilities. The relatively low performance on CoQA across all models suggests that this task may require different or more sophisticated features. The high performance on TriviaQA, especially with Question-Answer features, indicates that these models are particularly adept at answering trivia questions when provided with both the question and answer information.
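The observations in this description can be checked against its own approximate readings. The following is a minimal Python sketch, assuming the values listed under Detailed Analysis are transcribed as-is; the names `READINGS`, `wins`, and `task_means` are illustrative and do not come from the source.

```python
# Approximate AUROC readings transcribed from the Detailed Analysis above.
# Tuple order: (Gb-S, Question, Question-Answer). All names are illustrative.
FEATURES = ("Gb-S", "Question", "Question-Answer")
READINGS = {
    "Gemma-7B": {"MMLU": (0.78, 0.76, 0.83), "TriviaQA": (0.87, 0.72, 0.89),
                 "CoQA": (0.74, 0.76, 0.77), "WMT-14": (0.66, 0.85, 0.86)},
    "LLAMA2-7B": {"MMLU": (0.72, 0.70, 0.73), "TriviaQA": (0.81, 0.77, 0.90),
                  "CoQA": (0.62, 0.67, 0.79), "WMT-14": (0.78, 0.78, 0.80)},
    "LLAMA3-8B": {"MMLU": (0.78, 0.79, 0.83), "TriviaQA": (0.86, 0.72, 0.88),
                  "CoQA": (0.72, 0.77, 0.78), "WMT-14": (0.73, 0.75, 0.75)},
}


def wins(a, b):
    """Count (model, task) cells where feature `a` reads strictly above `b`."""
    i, j = FEATURES.index(a), FEATURES.index(b)
    return sum(row[i] > row[j]
               for tasks in READINGS.values()
               for row in tasks.values())


def task_means():
    """Mean reading per task, pooled over all models and feature types."""
    pooled = {}
    for tasks in READINGS.values():
        for task, row in tasks.items():
            pooled.setdefault(task, []).extend(row)
    return {task: sum(vals) / len(vals) for task, vals in pooled.items()}


means = task_means()
print(wins("Question-Answer", "Question"), "of 12 cells: Question-Answer > Question")
print(wins("Question", "Gb-S"), "of 12 cells: Question > Gb-S")
print("highest mean task:", max(means, key=means.get))
print("lowest mean task:", min(means, key=means.get))
```

On these readings, Question-Answer exceeds Question in 11 of 12 cells, whereas Question exceeds Gb-S in only 6 of 12, so the claim that both are "often better than Gb-S" holds for Question only about half the time.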


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED


## Bar Chart: AUROC Scores for Different Models and Feature Sets

### Overview

The image presents a bar chart comparing the Area Under the Receiver Operating Characteristic curve (AUROC) scores for three different language models – Gemma-7B, LLaMA2-7B, and LLaMA3-8B – across four different datasets: MMLU, TriviaQA, CoQA, and WMT-14. Each model's performance is evaluated using four different feature sets: "Gb-S", "Question", "Answer", and "Question-Answer". The chart uses a grouped bar format to display the AUROC scores for each combination of model and feature set.
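For readers unfamiliar with the metric, AUROC can be read as the probability that a randomly chosen positive example receives a higher score than a randomly chosen negative one. The sketch below illustrates that definition in plain Python. The description does not say which binary outcome the features are used to predict, so the scores and labels here are hypothetical toy data, and `auroc` is an illustrative helper rather than the method used to produce the chart.

```python
def auroc(scores, labels):
    """AUROC as the fraction of (positive, negative) pairs ranked correctly.

    A tie between a positive and a negative score counts as half a correct pair.
    """
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    if not pos or not neg:
        raise ValueError("AUROC needs at least one positive and one negative example")
    correct = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                correct += 1.0
            elif p == n:
                correct += 0.5
    return correct / (len(pos) * len(neg))


# Hypothetical toy data: two negatives (label 0) and two positives (label 1).
print(auroc([0.1, 0.4, 0.35, 0.8], [0, 0, 1, 1]))  # 3 of 4 pairs ordered correctly
```

A value of 0.5 corresponds to chance-level ranking and 1.0 to perfect separation, which is why the chart's y-axis range of roughly 0.60 to 0.90 sits between the two.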

### Components/Axes

* **X-axis:** Datasets - MMLU, TriviaQA, CoQA, WMT-14.

* **Y-axis:** AUROC score, ranging from approximately 0.60 to 0.90.

* **Models (Columns):** Gemma-7B, LLaMA2-7B, LLaMA3-8B.

* **Feature Sets (Bar Groups):**
    * Gb-S (White bars)
    * Question (Light Green bars)
    * Answer (Light Blue bars)
    * Question-Answer (Dark Green bars)

* **Legend:** Located at the bottom-center of the chart, clearly labeling the color-coding for each feature set.

### Detailed Analysis

The chart consists of three sets of four grouped bar charts, one for each model. Within each set, each dataset has four bars representing the AUROC score for each feature set.

**Gemma-7B:**

* **MMLU:** Gb-S ≈ 0.84, Question ≈ 0.86, Answer ≈ 0.85, Question-Answer ≈ 0.88

* **TriviaQA:** Gb-S ≈ 0.87, Question ≈ 0.90, Answer ≈ 0.86, Question-Answer ≈ 0.89

* **CoQA:** Gb-S ≈ 0.85, Question ≈ 0.88, Answer ≈ 0.84, Question-Answer ≈ 0.87

* **WMT-14:** Gb-S ≈ 0.83, Question ≈ 0.85, Answer ≈ 0.82, Question-Answer ≈ 0.84

**LLaMA2-7B:**

* **MMLU:** Gb-S ≈ 0.72, Question ≈ 0.74, Answer ≈ 0.71, Question-Answer ≈ 0.73

* **TriviaQA:** Gb-S ≈ 0.88, Question ≈ 0.91, Answer ≈ 0.87, Question-Answer ≈ 0.90

* **CoQA:** Gb-S ≈ 0.78, Question ≈ 0.81, Answer ≈ 0.77, Question-Answer ≈ 0.79

* **WMT-14:** Gb-S ≈ 0.70, Question ≈ 0.72, Answer ≈ 0.69, Question-Answer ≈ 0.71

**LLaMA3-8B:**

* **MMLU:** Gb-S ≈ 0.81, Question ≈ 0.83, Answer ≈ 0.80, Question-Answer ≈ 0.85

* **TriviaQA:** Gb-S ≈ 0.86, Question ≈ 0.88, Answer ≈ 0.85, Question-Answer ≈ 0.87

* **CoQA:** Gb-S ≈ 0.79, Question ≈ 0.82, Answer ≈ 0.78, Question-Answer ≈ 0.81

* **WMT-14:** Gb-S ≈ 0.74, Question ≈ 0.76, Answer ≈ 0.73, Question-Answer ≈ 0.75

**Trends:**

* For all models, the "Question" feature set generally yields the highest AUROC scores, followed closely by "Question-Answer".

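* The "Answer" feature set shows the lowest AUROC score in 11 of the 12 model-dataset pairs listed above, typically about 0.01 below "Gb-S"; "Gb-S" is lowest only for Gemma-7B on MMLU.

* LLaMA2-7B generally has lower AUROC scores compared to Gemma-7B and LLaMA3-8B, except on TriviaQA.

* Gemma-7B generally outperforms LLaMA3-8B on the values listed above.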

### Key Observations

* The "Question" feature set provides the best performance in 10 of the 12 model-dataset pairs; the exceptions are MMLU for Gemma-7B and LLaMA3-8B, where "Question-Answer" is higher.

* The gap between "Question" and "Gb-S" is small, about 0.02 to 0.03 for every model and dataset.

* LLaMA2-7B shows significantly lower performance on the MMLU dataset compared to the other two models.

* Outside those two MMLU cases, the "Question-Answer" feature set trails the "Question" feature set by about 0.01 to 0.02.

### Interpretation

The listed values suggest that incorporating question-related features (either "Question" alone or in combination with "Answer") is important for achieving high AUROC scores in these tasks. The "Gb-S" and "Answer" feature sets score slightly lower, which indicates they may be less informative for these datasets and models, although the differences are only a few hundredths. Gemma-7B scores higher than LLaMA3-8B on every dataset and higher than LLaMA2-7B on all but TriviaQA, so model size alone does not explain the ranking. The relatively low performance of LLaMA2-7B on MMLU could indicate a specific weakness in this model's ability to handle the type of knowledge and reasoning required by the MMLU dataset. Adding answer information to the question ("Question-Answer") raises the score only on MMLU for Gemma-7B and LLaMA3-8B; elsewhere it is marginally lower than "Question" alone. Overall, the chart compares the effectiveness of different feature sets and the relative performance of three language models across four tasks.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Bar Chart: AUROC Performance of Different Feature Types Across Language Models and Tasks

### Overview

The image displays a set of three grouped bar charts comparing the AUROC (Area Under the Receiver Operating Characteristic curve) performance of four different feature types ("Gb-S", "Question", "Answer", "Question-Answer") extracted from three different large language models (Gemma-7B, LLaMA2-7B, LLaMA3-8B). The performance is evaluated across four distinct tasks or datasets: MMLU, TriviaQA, CoQA, and WMT-14.

### Components/Axes

* **Main Titles (Top of each subplot):**
    * Left: "Features from Gemma-7B"
    * Center: "Features from LLaMA2-7B"
    * Right: "Features from LLaMA3-8B"
* **Y-Axis (Common to all subplots):**
    * Label: "AUROC"
    * Scale: Linear, ranging from 0.60 to 0.90, with major tick marks at 0.05 intervals (0.60, 0.65, 0.70, 0.75, 0.80, 0.85, 0.90).
* **X-Axis (Within each subplot):**
    * Categories (from left to right): "MMLU", "TriviaQA", "CoQA", "WMT-14".
* **Legend (Centered at the bottom of the entire figure):**
    * **Gb-S:** Represented by a white bar with a black outline.
    * **Question:** Represented by a solid light green bar.
    * **Answer:** Represented by a white bar with diagonal black hatching (\\).
    * **Question-Answer:** Represented by a light green bar with diagonal black hatching (\\).

### Detailed Analysis

The analysis is segmented by subplot (model) and then by task category. Values are approximate visual estimates from the chart.

**1. Features from Gemma-7B (Left Subplot)**

* **MMLU:**
    * Gb-S: ~0.78
    * Question: ~0.76
    * Answer: ~0.83
    * Question-Answer: ~0.83
    * *Trend:* Answer and Question-Answer features perform similarly and are highest, followed by Gb-S, then Question.
* **TriviaQA:**
    * Gb-S: ~0.87
    * Question: ~0.72
    * Answer: ~0.88
    * Question-Answer: ~0.88
    * *Trend:* Answer and Question-Answer are highest and nearly identical. Gb-S is slightly lower. Question is the lowest by a significant margin.
* **CoQA:**
    * Gb-S: ~0.74
    * Question: ~0.62
    * Answer: ~0.76
    * Question-Answer: ~0.76
    * *Trend:* Answer and Question-Answer are highest and equal. Gb-S is next. Question is the lowest.
* **WMT-14:**
    * Gb-S: ~0.83
    * Question: ~0.66
    * Answer: ~0.85
    * Question-Answer: ~0.85
    * *Trend:* Answer and Question-Answer are highest and equal. Gb-S is slightly lower. Question is the lowest.

**2. Features from LLaMA2-7B (Center Subplot)**

* **MMLU:**
    * Gb-S: ~0.70
    * Question: ~0.69
    * Answer: ~0.72
    * Question-Answer: ~0.72
    * *Trend:* Answer and Question-Answer are highest and equal. Gb-S and Question are very close and lower.
* **TriviaQA:**
    * Gb-S: ~0.81
    * Question: ~0.77
    * Answer: ~0.90
    * Question-Answer: ~0.90
    * *Trend:* Answer and Question-Answer are highest and equal, reaching the top of the scale. Gb-S is next, followed by Question.
* **CoQA:**
    * Gb-S: ~0.67
    * Question: ~0.70
    * Answer: ~0.81
    * Question-Answer: ~0.81
    * *Trend:* Answer and Question-Answer are highest and equal. Question is next, followed by Gb-S.
* **WMT-14:**
    * Gb-S: ~0.73
    * Question: ~0.67
    * Answer: ~0.78
    * Question-Answer: ~0.78
    * *Trend:* Answer and Question-Answer are highest and equal. Gb-S is next, followed by Question.

**3. Features from LLaMA3-8B (Right Subplot)**

* **MMLU:**
    * Gb-S: ~0.79
    * Question: ~0.79
    * Answer: ~0.83
    * Question-Answer: ~0.83
    * *Trend:* Answer and Question-Answer are highest and equal. Gb-S and Question are very close and lower.
* **TriviaQA:**
    * Gb-S: ~0.86
    * Question: ~0.76
    * Answer: ~0.88
    * Question-Answer: ~0.88
    * *Trend:* Answer and Question-Answer are highest and equal. Gb-S is next, followed by Question.
* **CoQA:**
    * Gb-S: ~0.74
    * Question: ~0.68
    * Answer: ~0.77
    * Question-Answer: ~0.77
    * *Trend:* Answer and Question-Answer are highest and equal. Gb-S is next, followed by Question.
* **WMT-14:**
    * Gb-S: ~0.73
    * Question: ~0.62
    * Answer: ~0.75
    * Question-Answer: ~0.75
    * *Trend:* Answer and Question-Answer are highest and equal. Gb-S is next, followed by Question.

### Key Observations

1. **Consistent Superiority of Answer-Based Features:** Across all three models and all four tasks, the "Answer" and "Question-Answer" feature types consistently achieve the highest AUROC scores. Their performance is virtually identical in every single case.

2. **Performance of Gb-S and Question Features:** The "Gb-S" and "Question" features generally perform worse than the answer-inclusive features. The "Question" feature is frequently the lowest-performing, with a notable exception in LLaMA2-7B's CoQA task where it outperforms Gb-S.

3. **Task Difficulty Variation:** The absolute AUROC values vary by task. For example, performance on TriviaQA tends to be higher (often >0.85 for top features) compared to CoQA or WMT-14, suggesting differences in task difficulty or the suitability of the features for those tasks.

4. **Model Comparison:** While all models show the same general pattern, the absolute performance levels differ. For instance, LLaMA2-7B achieves the highest single score (~0.90 on TriviaQA with Answer/QA features), while its performance on MMLU is the lowest among the three models for that task.
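The four observations above can be verified mechanically against this section's visual estimates. The sketch below is a minimal check in Python, assuming the approximate values from the Detailed Analysis are transcribed exactly; `EST` and the helper names are illustrative, not part of the source.

```python
# Visual estimates from this section; tuple order follows FEATURES.
# All identifiers are illustrative.
FEATURES = ("Gb-S", "Question", "Answer", "Question-Answer")
EST = {
    ("Gemma-7B", "MMLU"): (0.78, 0.76, 0.83, 0.83),
    ("Gemma-7B", "TriviaQA"): (0.87, 0.72, 0.88, 0.88),
    ("Gemma-7B", "CoQA"): (0.74, 0.62, 0.76, 0.76),
    ("Gemma-7B", "WMT-14"): (0.83, 0.66, 0.85, 0.85),
    ("LLaMA2-7B", "MMLU"): (0.70, 0.69, 0.72, 0.72),
    ("LLaMA2-7B", "TriviaQA"): (0.81, 0.77, 0.90, 0.90),
    ("LLaMA2-7B", "CoQA"): (0.67, 0.70, 0.81, 0.81),
    ("LLaMA2-7B", "WMT-14"): (0.73, 0.67, 0.78, 0.78),
    ("LLaMA3-8B", "MMLU"): (0.79, 0.79, 0.83, 0.83),
    ("LLaMA3-8B", "TriviaQA"): (0.86, 0.76, 0.88, 0.88),
    ("LLaMA3-8B", "CoQA"): (0.74, 0.68, 0.77, 0.77),
    ("LLaMA3-8B", "WMT-14"): (0.73, 0.62, 0.75, 0.75),
}


def answer_matches_qa():
    """True if Answer and Question-Answer estimates agree in every cell."""
    return all(row[2] == row[3] for row in EST.values())


def question_beats_gbs():
    """Cells where the Question estimate is strictly above Gb-S."""
    return [cell for cell, row in EST.items() if row[1] > row[0]]


def top_cell():
    """Cell containing the single highest estimate."""
    return max(EST, key=lambda cell: max(EST[cell]))


def weakest_model_on(task):
    """Model whose best estimate on `task` is lowest."""
    best = {model: max(row) for (model, t), row in EST.items() if t == task}
    return min(best, key=best.get)


print(answer_matches_qa())
print(question_beats_gbs())
print(top_cell())
print(weakest_model_on("MMLU"))
```

With these estimates, the only cell where Question exceeds Gb-S is LLaMA2-7B on CoQA, the top estimate belongs to LLaMA2-7B on TriviaQA, and LLaMA2-7B is also the weakest model on MMLU, in line with observations 2 and 4.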

### Interpretation

This chart investigates the efficacy of different textual feature types (derived from questions, answers, or both) for some downstream evaluation metric (measured by AUROC) across various language models and benchmarks.

The central finding is that **features incorporating the "Answer" component—either alone or combined with the "Question"—are significantly more informative or predictive** than features based solely on the "Question" or the baseline "Gb-S" (which likely represents a different, perhaps model-internal, feature set). The identical performance of "Answer" and "Question-Answer" features suggests that adding the question to the answer provides no marginal benefit for this specific evaluation metric; the answer text alone carries the critical signal.

This implies that for the tasks evaluated (MMLU, TriviaQA, CoQA, WMT-14), the model's generated or retrieved answer contains the most discriminative information. The question text, while necessary to prompt the model, does not contribute additional predictive power once the answer is available. The "Gb-S" features serve as a middle-ground baseline, often outperforming pure "Question" features but falling short of answer-based ones.

The consistency of this pattern across three distinct model architectures (Gemma, LLaMA2, LLaMA3) strengthens the conclusion that this is a robust phenomenon related to the nature of the tasks and the information content of answers versus questions, rather than an artifact of a specific model.


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Bar Chart: AUROC Comparison Across Models and Datasets

### Overview

The image is a grouped bar chart comparing the Area Under the Receiver Operating Characteristic curve (AUROC) values for different feature combinations across three language models (Gemma-7B, LLaMA2-7B, LLaMA3-8B) and four datasets (MMLU, TriviaQA, CoQA, WMT-14). The chart uses four feature types: Gb-S (white), Question (green), Answer (striped), and Question-Answer (green with diagonal lines).

### Components/Axes

- **X-axis**: Datasets (MMLU, TriviaQA, CoQA, WMT-14), repeated for each model.

- **Y-axis**: AUROC values (0.6–0.9), labeled "AUROC".

- **Legend**: Located at the bottom, with four categories:
    - Gb-S (solid white)
    - Question (solid green)
    - Answer (striped white/green)
    - Question-Answer (green with diagonal lines)

- **Model Groups**: Three vertical clusters labeled "Features from Gemma-7B", "Features from LLaMA2-7B", and "Features from LLaMA3-8B".

### Detailed Analysis

#### Gemma-7B

- **MMLU**:
    - Gb-S: ~0.78
    - Question: ~0.76
    - Answer: ~0.83
    - Question-Answer: ~0.83
- **TriviaQA**:
    - Gb-S: ~0.87
    - Question: ~0.72
    - Answer: ~0.89
    - Question-Answer: ~0.89
- **CoQA**:
    - Gb-S: ~0.74
    - Question: ~0.62
    - Answer: ~0.76
    - Question-Answer: ~0.76
- **WMT-14**:
    - Gb-S: ~0.83
    - Question: ~0.65
    - Answer: ~0.85
    - Question-Answer: ~0.85

#### LLaMA2-7B

- **MMLU**:
    - Gb-S: ~0.70
    - Question: ~0.70
    - Answer: ~0.72
    - Question-Answer: ~0.72
- **TriviaQA**:
    - Gb-S: ~0.81
    - Question: ~0.77
    - Answer: ~0.90
    - Question-Answer: ~0.90
- **CoQA**:
    - Gb-S: ~0.66
    - Question: ~0.70
    - Answer: ~0.81
    - Question-Answer: ~0.81
- **WMT-14**:
    - Gb-S: ~0.73
    - Question: ~0.67
    - Answer: ~0.78
    - Question-Answer: ~0.78

#### LLaMA3-8B

- **MMLU**:
    - Gb-S: ~0.79
    - Question: ~0.79
    - Answer: ~0.83
    - Question-Answer: ~0.83
- **TriviaQA**:
    - Gb-S: ~0.86
    - Question: ~0.76
    - Answer: ~0.88
    - Question-Answer: ~0.88
- **CoQA**:
    - Gb-S: ~0.74
    - Question: ~0.68
    - Answer: ~0.77
    - Question-Answer: ~0.77
- **WMT-14**:
    - Gb-S: ~0.73
    - Question: ~0.62
    - Answer: ~0.75
    - Question-Answer: ~0.75

### Key Observations

1. **Question-Answer Feature Dominance**:
    - The Question-Answer feature (green with diagonal lines) achieves the highest AUROC across all models and datasets, matching the Answer feature (striped) in every case listed above.
    - Example: LLaMA2-7B on TriviaQA shows Question-Answer at 0.90, the highest value in the chart.
2. **Gb-S Variability**:
    - Gb-S (solid white) performs inconsistently. It comes close to the answer-based features in Gemma-7B's TriviaQA (0.87 vs. 0.89) but drops to 0.70 in LLaMA2-7B's MMLU.
3. **Dataset-Specific Trends**:
    - **TriviaQA**: All models show strong performance for Gb-S and the answer-based features, with AUROC values between 0.81 and 0.90; the Question feature stays at 0.72–0.77.
    - **WMT-14**: Lowest performance for LLaMA3-8B, with all values at or below 0.75; Gemma-7B, by contrast, reaches 0.85 here with answer-based features.
    - **CoQA**: Mixed results, with Question-Answer matching Answer feature performance.
4. **Model Performance**:
    - LLaMA3-8B matches or slightly exceeds Gemma-7B on MMLU and clearly exceeds LLaMA2-7B there; on TriviaQA the three models are within about 0.02 of each other for answer-based features.
    - Gemma-7B shows the highest Gb-S performance in TriviaQA (0.87).

### Interpretation

The data suggests that **combining question and answer features (Question-Answer)** yields the most robust performance across models and datasets, likely due to richer contextual information. The Gb-S feature's inconsistent results imply it may be less effective or model-dependent. TriviaQA and MMLU datasets appear more amenable to feature-based improvements, while WMT-14's lower performance might reflect task-specific challenges (e.g., translation vs. QA). The Answer feature alone often bridges the gap between Gb-S and Question-Answer, highlighting its utility as a standalone feature. Model architecture differences (e.g., LLaMA3-8B's larger size) may explain performance variations, though the chart does not explicitly test this hypothesis.
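Because the values in this section are visual estimates, it can help to see how far they differ from the healer-alpha estimates given earlier on this page for the same figure. The sketch below is a minimal comparison in Python, assuming both sets of approximate values are transcribed exactly as listed; `HEALER`, `NEMOTRON`, and `disagreements` are illustrative names.

```python
# Rows follow CELLS order; tuple order follows FEATURES. Names are illustrative.
FEATURES = ("Gb-S", "Question", "Answer", "Question-Answer")
CELLS = [(model, task)
         for model in ("Gemma-7B", "LLaMA2-7B", "LLaMA3-8B")
         for task in ("MMLU", "TriviaQA", "CoQA", "WMT-14")]

# Estimates from the healer-alpha description earlier on this page.
HEALER = [
    (0.78, 0.76, 0.83, 0.83), (0.87, 0.72, 0.88, 0.88),
    (0.74, 0.62, 0.76, 0.76), (0.83, 0.66, 0.85, 0.85),
    (0.70, 0.69, 0.72, 0.72), (0.81, 0.77, 0.90, 0.90),
    (0.67, 0.70, 0.81, 0.81), (0.73, 0.67, 0.78, 0.78),
    (0.79, 0.79, 0.83, 0.83), (0.86, 0.76, 0.88, 0.88),
    (0.74, 0.68, 0.77, 0.77), (0.73, 0.62, 0.75, 0.75),
]

# Estimates from this (nemotron) description.
NEMOTRON = [
    (0.78, 0.76, 0.83, 0.83), (0.87, 0.72, 0.89, 0.89),
    (0.74, 0.62, 0.76, 0.76), (0.83, 0.65, 0.85, 0.85),
    (0.70, 0.70, 0.72, 0.72), (0.81, 0.77, 0.90, 0.90),
    (0.66, 0.70, 0.81, 0.81), (0.73, 0.67, 0.78, 0.78),
    (0.79, 0.79, 0.83, 0.83), (0.86, 0.76, 0.88, 0.88),
    (0.74, 0.68, 0.77, 0.77), (0.73, 0.62, 0.75, 0.75),
]


def disagreements():
    """List (model, task, feature, |difference|) wherever the two estimates differ."""
    out = []
    for (model, task), a, b in zip(CELLS, HEALER, NEMOTRON):
        for feature, x, y in zip(FEATURES, a, b):
            if x != y:
                out.append((model, task, feature, round(abs(x - y), 2)))
    return out


for item in disagreements():
    print(item)
```

The two descriptions differ in 5 of 48 readings, each by 0.01, which is within the precision that can reasonably be expected from reading bar heights off a chart.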
