TECHNICAL ASSET FINGERPRINT

6909ce34750ac6e7ad08a961

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

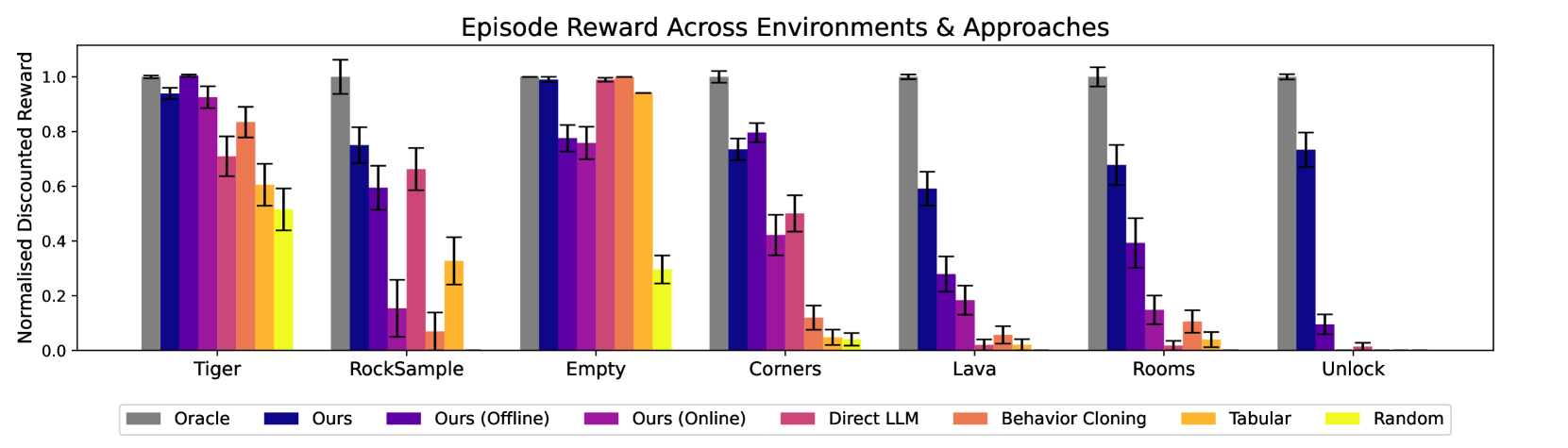

## Bar Chart: Episode Reward Across Environments & Approaches

### Overview

The image is a bar chart comparing the performance of different reinforcement learning approaches across various environments. The y-axis represents the normalized discounted reward, ranging from 0.0 to 1.0. The x-axis represents different environments: Tiger, RockSample, Empty, Corners, Lava, Rooms, and Unlock. Each environment has a set of bars representing different approaches: Oracle, Ours, Ours (Offline), Ours (Online), Direct LLM, Behavior Cloning, Tabular, and Random. Error bars are included on each bar to indicate the variance in performance.

### Components/Axes

* **Title:** Episode Reward Across Environments & Approaches

* **Y-axis:** Normalised Discounted Reward, ranging from 0.0 to 1.0 in increments of 0.2.

* **X-axis:** Environments: Tiger, RockSample, Empty, Corners, Lava, Rooms, Unlock.

* **Legend:** Located at the bottom of the chart.

    * Gray: Oracle

    * Dark Blue: Ours

    * Purple: Ours (Offline)

    * Pinkish-Purple: Ours (Online)

    * Pink: Direct LLM

    * Orange: Behavior Cloning

    * Yellow-Orange: Tabular

    * Yellow: Random

### Detailed Analysis

**Tiger Environment:**

* Oracle (Gray): ~0.99

* Ours (Dark Blue): ~0.95

* Ours (Offline) (Purple): ~0.90

* Ours (Online) (Pinkish-Purple): ~0.80

* Direct LLM (Pink): ~0.70

* Behavior Cloning (Orange): ~0.60

* Tabular (Yellow-Orange): ~0.50

* Random (Yellow): ~0.45

**RockSample Environment:**

* Oracle (Gray): ~1.0

* Ours (Dark Blue): ~0.75

* Ours (Offline) (Purple): ~0.68

* Ours (Online) (Pinkish-Purple): ~0.65

* Direct LLM (Pink): ~0.15

* Behavior Cloning (Orange): ~0.30

* Tabular (Yellow-Orange): ~0.25

* Random (Yellow): ~0.10

**Empty Environment:**

* Oracle (Gray): ~1.0

* Ours (Dark Blue): ~1.0

* Ours (Offline) (Purple): ~0.98

* Ours (Online) (Pinkish-Purple): ~0.95

* Direct LLM (Pink): ~0.90

* Behavior Cloning (Orange): ~0.85

* Tabular (Yellow-Orange): ~0.30

* Random (Yellow): ~0.10

**Corners Environment:**

* Oracle (Gray): ~1.0

* Ours (Dark Blue): ~0.75

* Ours (Offline) (Purple): ~0.70

* Ours (Online) (Pinkish-Purple): ~0.75

* Direct LLM (Pink): ~0.75

* Behavior Cloning (Orange): ~0.10

* Tabular (Yellow-Orange): ~0.05

* Random (Yellow): ~0.05

**Lava Environment:**

* Oracle (Gray): ~1.0

* Ours (Dark Blue): ~0.35

* Ours (Offline) (Purple): ~0.20

* Ours (Online) (Pinkish-Purple): ~0.10

* Direct LLM (Pink): ~0.05

* Behavior Cloning (Orange): ~0.02

* Tabular (Yellow-Orange): ~0.01

* Random (Yellow): ~0.01

**Rooms Environment:**

* Oracle (Gray): ~1.0

* Ours (Dark Blue): ~0.70

* Ours (Offline) (Purple): ~0.20

* Ours (Online) (Pinkish-Purple): ~0.30

* Direct LLM (Pink): ~0.10

* Behavior Cloning (Orange): ~0.05

* Tabular (Yellow-Orange): ~0.02

* Random (Yellow): ~0.01

**Unlock Environment:**

* Oracle (Gray): ~1.0

* Ours (Dark Blue): ~0.75

* Ours (Offline) (Purple): ~0.10

* Ours (Online) (Pinkish-Purple): ~0.05

* Direct LLM (Pink): ~0.02

* Behavior Cloning (Orange): ~0.01

* Tabular (Yellow-Orange): ~0.01

* Random (Yellow): ~0.01
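The per-environment estimates above can be averaged per approach to get a rough overall ranking. The following is a minimal sketch that uses only the approximate values listed in this analysis (visual readings, not the underlying data). The names `APPROACHES`, `ESTIMATES`, and `mean_reward_by_approach` are illustrative.

```python
# Approximate bar heights as listed above, in legend order.
# These are visual estimates from the chart, not exact data.
APPROACHES = ["Oracle", "Ours", "Ours (Offline)", "Ours (Online)",
              "Direct LLM", "Behavior Cloning", "Tabular", "Random"]

ESTIMATES = {
    "Tiger":      [0.99, 0.95, 0.90, 0.80, 0.70, 0.60, 0.50, 0.45],
    "RockSample": [1.00, 0.75, 0.68, 0.65, 0.15, 0.30, 0.25, 0.10],
    "Empty":      [1.00, 1.00, 0.98, 0.95, 0.90, 0.85, 0.30, 0.10],
    "Corners":    [1.00, 0.75, 0.70, 0.75, 0.75, 0.10, 0.05, 0.05],
    "Lava":       [1.00, 0.35, 0.20, 0.10, 0.05, 0.02, 0.01, 0.01],
    "Rooms":      [1.00, 0.70, 0.20, 0.30, 0.10, 0.05, 0.02, 0.01],
    "Unlock":     [1.00, 0.75, 0.10, 0.05, 0.02, 0.01, 0.01, 0.01],
}


def mean_reward_by_approach(estimates):
    """Average each approach's estimated reward over all environments."""
    n_envs = len(estimates)
    return {
        name: sum(values[i] for values in estimates.values()) / n_envs
        for i, name in enumerate(APPROACHES)
    }


means = mean_reward_by_approach(ESTIMATES)
# Sort from highest to lowest mean estimated reward.
ranking = sorted(means.items(), key=lambda kv: kv[1], reverse=True)
for name, value in ranking:
    print(f"{name:<17} {value:.2f}")
```

An unweighted mean treats all seven environments as equally important, which is a simplification chosen here for illustration.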

### Key Observations

* The "Oracle" approach consistently achieves the highest normalized discounted reward across all environments, often reaching a value of 1.0.

* The "Ours" approach (dark blue) is the best non-Oracle approach, or tied for best, in every environment, but its reward ranges from ~1.0 in Empty down to ~0.35 in Lava.

* The "Random" approach (yellow) has the lowest reward, or ties for the lowest, in every environment.

* The performance of "Ours (Offline)", "Ours (Online)", "Direct LLM", "Behavior Cloning", and "Tabular" varies significantly depending on the environment. For example, "Direct LLM" ranges from ~0.90 in Empty to ~0.02 in Unlock.

### Interpretation

The bar chart compares different approaches across a range of environments. The "Oracle" approach serves as a benchmark, representing the best performance achievable in each environment, so the gap between each approach and the "Oracle" indicates how well that approach handles the environment. The gaps differ widely between environments: "Ours" comes within about 0.05 of the "Oracle" in Tiger and Empty but reaches only ~0.35 in Lava, and the other approaches match "Ours" only in Corners, where "Ours (Online)" and "Direct LLM" also reach ~0.75. The error bars indicate variability in performance, which may stem from stochasticity in the learning process and in the environments themselves. The consistently poor performance of the "Random" approach underscores the importance of using informed learning strategies.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Bar Chart: Episode Reward Across Environments & Approaches

### Overview

This is a grouped bar chart comparing the performance of eight different algorithmic approaches across seven distinct reinforcement learning environments. Performance is measured by "Normalised Discounted Reward," a metric scaled between 0.0 and 1.0, where higher values indicate better performance. Each environment cluster contains eight bars, one for each approach, with error bars indicating variability.

### Components/Axes

* **Chart Title:** "Episode Reward Across Environments & Approaches"

* **Y-Axis:**

    * **Label:** "Normalised Discounted Reward"

    * **Scale:** Linear, from 0.0 to 1.0, with major tick marks at 0.0, 0.2, 0.4, 0.6, 0.8, and 1.0.

* **X-Axis:**

    * **Label:** Environment names.

    * **Categories (from left to right):** Tiger, RockSample, Empty, Corners, Lava, Rooms, Unlock.

* **Legend:** Positioned at the bottom of the chart, centered. It maps colors to approach names:

    * **Grey:** Oracle

    * **Dark Blue:** Ours

    * **Purple:** Ours (Offline)

    * **Magenta:** Ours (Online)

    * **Pink:** Direct LLM

    * **Salmon/Orange-Red:** Behavior Cloning

    * **Orange:** Tabular

    * **Yellow:** Random

### Detailed Analysis

Performance is analyzed per environment cluster, moving left to right. Approximate values are estimated from the bar heights relative to the y-axis.

**1. Tiger:**

* **Trend:** Oracle, Ours, Ours (Offline), and Ours (Online) perform best, all above ~0.9. The remaining approaches score lower, from ~0.82 (Behavior Cloning) down to ~0.52 (Random).

* **Approximate Values:**

    * Oracle: ~1.0

    * Ours: ~0.95

    * Ours (Offline): ~0.92

    * Ours (Online): ~0.92

    * Direct LLM: ~0.70

    * Behavior Cloning: ~0.82

    * Tabular: ~0.60

    * Random: ~0.52

**2. RockSample:**

* **Trend:** Oracle is highest. "Ours," "Ours (Online)," and "Ours (Offline)" show moderate performance (~0.60 to ~0.75). "Tabular" and "Direct LLM" are notably lower. "Behavior Cloning" and "Random" are near zero.

* **Approximate Values:**

    * Oracle: ~1.0

    * Ours: ~0.75

    * Ours (Offline): ~0.60

    * Ours (Online): ~0.66

    * Direct LLM: ~0.15

    * Behavior Cloning: ~0.08

    * Tabular: ~0.33

    * Random: ~0.0 (near zero)

**3. Empty:**

* **Trend:** A high-performance cluster. Oracle, Ours, Ours (Online), Direct LLM, Behavior Cloning, and Tabular all achieve near-maximum reward. Ours (Offline) is lower (~0.76). Random is the only low-performing approach.

* **Approximate Values:**

    * Oracle: ~1.0

    * Ours: ~0.99

    * Ours (Offline): ~0.76

    * Ours (Online): ~0.99

    * Direct LLM: ~1.0

    * Behavior Cloning: ~0.94

    * Tabular: ~0.94

    * Random: ~0.28

**4. Corners:**

* **Trend:** Oracle is highest. "Ours (Offline)" and "Ours" are the next best group, followed by "Direct LLM" and "Ours (Online)" at about 0.5. Performance drops significantly for the remaining approaches.

* **Approximate Values:**

    * Oracle: ~1.0

    * Ours: ~0.72

    * Ours (Offline): ~0.79

    * Ours (Online): ~0.49

    * Direct LLM: ~0.50

    * Behavior Cloning: ~0.12

    * Tabular: ~0.05

    * Random: ~0.05

**5. Lava:**

* **Trend:** Oracle is highest. "Ours" is the only other approach above 0.5. "Ours (Offline)" and "Ours (Online)" reach ~0.28 and ~0.18, and all remaining approaches are near zero.

* **Approximate Values:**

    * Oracle: ~1.0

    * Ours: ~0.59

    * Ours (Offline): ~0.28

    * Ours (Online): ~0.18

    * Direct LLM: ~0.03

    * Behavior Cloning: ~0.05

    * Tabular: ~0.03

    * Random: ~0.02

**6. Rooms:**

* **Trend:** Oracle is highest. "Ours" shows moderate performance. All other approaches have low to very low rewards.

* **Approximate Values:**

    * Oracle: ~1.0

    * Ours: ~0.68

    * Ours (Offline): ~0.39

    * Ours (Online): ~0.15

    * Direct LLM: ~0.03

    * Behavior Cloning: ~0.11

    * Tabular: ~0.05

    * Random: ~0.02

**7. Unlock:**

* **Trend:** Oracle is highest. "Ours" is the only other approach with a significant reward. All other approaches perform at or near zero.

* **Approximate Values:**

    * Oracle: ~1.0

    * Ours: ~0.73

    * Ours (Offline): ~0.09

    * Ours (Online): ~0.01

    * Direct LLM: ~0.02

    * Behavior Cloning: ~0.0

    * Tabular: ~0.0

    * Random: ~0.0

### Key Observations

1. **Oracle Dominance:** The "Oracle" approach (grey bar) consistently achieves the maximum normalized reward (~1.0) across all seven environments, serving as an upper-bound benchmark.

2. **"Ours" Consistency:** The "Ours" approach (dark blue) is the most consistent non-oracle performer, maintaining moderate to high rewards in every environment.

3. **Environment Difficulty:** There is a clear gradient in task difficulty. "Empty" appears to be the easiest, with seven of eight approaches scoring above 0.75. "Lava," "Rooms," and "Unlock" appear to be the hardest, with only Oracle and "Ours" scoring above 0.5.

4. **Approach Variability:** The performance of approaches like "Direct LLM," "Behavior Cloning," and "Tabular" is highly environment-dependent. They perform well in "Empty" but fail in "Lava" or "Unlock."

5. **"Random" Baseline:** The "Random" approach (yellow) is the lowest or tied-lowest scorer in every environment, as expected, confirming that the tasks require a learned policy.
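The difficulty gradient described in observation 3 can be checked by counting, for each environment, how many approaches exceed a reward threshold. This is a minimal sketch using the approximate values from the Detailed Analysis above. The threshold of 0.5 and the names `ESTIMATES` and `count_above` are assumptions made for illustration.

```python
# Approximate values from the Detailed Analysis above, in legend order:
# Oracle, Ours, Ours (Offline), Ours (Online), Direct LLM,
# Behavior Cloning, Tabular, Random. Visual estimates, not exact data.
ESTIMATES = {
    "Tiger":      [1.00, 0.95, 0.92, 0.92, 0.70, 0.82, 0.60, 0.52],
    "RockSample": [1.00, 0.75, 0.60, 0.66, 0.15, 0.08, 0.33, 0.00],
    "Empty":      [1.00, 0.99, 0.76, 0.99, 1.00, 0.94, 0.94, 0.28],
    "Corners":    [1.00, 0.72, 0.79, 0.49, 0.50, 0.12, 0.05, 0.05],
    "Lava":       [1.00, 0.59, 0.28, 0.18, 0.03, 0.05, 0.03, 0.02],
    "Rooms":      [1.00, 0.68, 0.39, 0.15, 0.03, 0.11, 0.05, 0.02],
    "Unlock":     [1.00, 0.73, 0.09, 0.01, 0.02, 0.00, 0.00, 0.00],
}


def count_above(estimates, threshold):
    """Count, per environment, the approaches strictly above `threshold`."""
    return {
        env: sum(value > threshold for value in values)
        for env, values in estimates.items()
    }


counts = count_above(ESTIMATES, 0.5)
for env, n in counts.items():
    print(f"{env:<11} {n} of 8 approaches above 0.5")
```

With these estimates, Lava, Rooms, and Unlock each have two approaches above the threshold, which is consistent with their being the hardest environments.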

### Interpretation

This chart demonstrates the comparative efficacy of different learning paradigms in varied reinforcement learning settings. The "Oracle" represents an idealized upper bound, likely using perfect model knowledge. The strong, consistent performance of "Ours" suggests it is a robust method that generalizes well across diverse task structures (from navigation in "Empty" to complex manipulation in "Unlock").

The data highlights a key challenge in AI: methods that excel in simple or specific domains (like "Tabular" in "Empty") often fail to transfer to more complex, sparse-reward environments. The significant drop-off for most methods in "Lava," "Rooms," and "Unlock" indicates these environments pose fundamental challenges related to exploration, long-term planning, or partial observability that only the most sophisticated approaches ("Oracle" and "Ours") can handle. The chart argues for the development of generalizable algorithms, as specialized techniques show brittle performance.

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Bar Chart: Episode Reward Across Environments & Approaches

### Overview

The chart compares normalized discounted rewards across seven environments (Tiger, RockSample, Empty, Corners, Lava, Rooms, Unlock) using eight different approaches. The y-axis ranges from 0.0 to 1.0, with error bars indicating uncertainty. Oracle (grey) consistently achieves the highest rewards, while Random (light yellow) performs the worst.

### Components/Axes

- **X-axis**: Environments (Tiger, RockSample, Empty, Corners, Lava, Rooms, Unlock)

- **Y-axis**: Normalised Discounted Reward (0.0–1.0)

- **Legend**:

    - Oracle (grey)

    - Ours (blue)

    - Ours (Offline) (purple)

    - Ours (Online) (pink)

    - Direct LLM (orange)

    - Behavior Cloning (yellow)

    - Tabular (light orange)

    - Random (light yellow)

### Detailed Analysis

1. **Tiger**:

    - Oracle: ~1.0

    - Ours (blue): ~0.95

    - Ours (Offline): ~0.98

    - Ours (Online): ~0.92

    - Direct LLM: ~0.85

    - Behavior Cloning: ~0.60

    - Tabular: ~0.55

    - Random: ~0.50

2. **RockSample**:

    - Oracle: ~1.0

    - Ours (blue): ~0.75

    - Ours (Offline): ~0.60

    - Ours (Online): ~0.15

    - Direct LLM: ~0.65

    - Behavior Cloning: ~0.30

    - Tabular: ~0.05

    - Random: ~0.00

3. **Empty**:

    - Oracle: ~1.0

    - Ours (blue): ~0.98

    - Ours (Offline): ~0.85

    - Ours (Online): ~0.80

    - Direct LLM: ~0.95

    - Behavior Cloning: ~0.90

    - Tabular: ~0.85

    - Random: ~0.30

4. **Corners**:

    - Oracle: ~1.0

    - Ours (blue): ~0.70

    - Ours (Offline): ~0.80

    - Ours (Online): ~0.45

    - Direct LLM: ~0.50

    - Behavior Cloning: ~0.10

    - Tabular: ~0.05

    - Random: ~0.02

5. **Lava**:

    - Oracle: ~1.0

    - Ours (blue): ~0.60

    - Ours (Offline): ~0.30

    - Ours (Online): ~0.20

    - Direct LLM: ~0.20

    - Behavior Cloning: ~0.05

    - Tabular: ~0.03

    - Random: ~0.00

6. **Rooms**:

    - Oracle: ~1.0

    - Ours (blue): ~0.65

    - Ours (Offline): ~0.40

    - Ours (Online): ~0.15

    - Direct LLM: ~0.20

    - Behavior Cloning: ~0.05

    - Tabular: ~0.03

    - Random: ~0.00

7. **Unlock**:

    - Oracle: ~1.0

    - Ours (blue): ~0.75

    - Ours (Offline): ~0.10

    - Ours (Online): ~0.05

    - Direct LLM: ~0.05

    - Behavior Cloning: ~0.00

    - Tabular: ~0.00

    - Random: ~0.00
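To see which approach comes closest to Oracle in each environment, the estimates above can be scanned for the best non-Oracle value. This is a minimal sketch using only the approximate readings listed in this analysis. The names `APPROACHES`, `ESTIMATES`, and `best_non_oracle` are illustrative.

```python
# Approximate values from the list above, in legend order.
# These are visual estimates from the chart, not exact data.
APPROACHES = ["Oracle", "Ours", "Ours (Offline)", "Ours (Online)",
              "Direct LLM", "Behavior Cloning", "Tabular", "Random"]

ESTIMATES = {
    "Tiger":      [1.00, 0.95, 0.98, 0.92, 0.85, 0.60, 0.55, 0.50],
    "RockSample": [1.00, 0.75, 0.60, 0.15, 0.65, 0.30, 0.05, 0.00],
    "Empty":      [1.00, 0.98, 0.85, 0.80, 0.95, 0.90, 0.85, 0.30],
    "Corners":    [1.00, 0.70, 0.80, 0.45, 0.50, 0.10, 0.05, 0.02],
    "Lava":       [1.00, 0.60, 0.30, 0.20, 0.20, 0.05, 0.03, 0.00],
    "Rooms":      [1.00, 0.65, 0.40, 0.15, 0.20, 0.05, 0.03, 0.00],
    "Unlock":     [1.00, 0.75, 0.10, 0.05, 0.05, 0.00, 0.00, 0.00],
}


def best_non_oracle(estimates):
    """Return {environment: (approach, value)} for the top non-Oracle bar."""
    result = {}
    for env, values in estimates.items():
        pairs = list(zip(APPROACHES, values))[1:]  # drop the Oracle entry
        result[env] = max(pairs, key=lambda pair: pair[1])
    return result


best = best_non_oracle(ESTIMATES)
for env, (name, value) in best.items():
    gap = ESTIMATES[env][0] - value  # distance to the Oracle bar
    print(f"{env:<11} {name:<15} {value:.2f} (gap to Oracle {gap:.2f})")
```

With these readings, "Ours (Offline)" leads in Tiger and Corners, and "Ours" leads in the other five environments.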

### Key Observations

- **Oracle Dominance**: Oracle achieves near-perfect rewards (~1.0) in all environments.

- **Proposed Methods ("Ours")**:

    - Blue ("Ours") and purple ("Ours (Offline)") perform closest to Oracle in most environments.

    - Pink ("Ours (Online)") underperforms in complex environments (e.g., Lava, Rooms).

- **Weak Approaches**:

    - Random (light yellow) is the lowest or tied-lowest scorer in every environment and is near 0.0 in all but Tiger (~0.50) and Empty (~0.30).

    - Tabular (light orange) and Behavior Cloning (yellow) show minimal rewards in challenging environments.

- **Environment-Specific Trends**:

    - "Ours (Online)" (pink) struggles in Lava (~0.20) and Rooms (~0.15).

    - Direct LLM (orange) performs well in Empty (~0.95) but poorly in Lava (~0.20).

### Interpretation

The chart shows that the proposed methods ("Ours") achieve rewards close to the theoretical Oracle, particularly in simpler environments (Tiger, Empty). However, performance degrades in complex environments like Lava and Rooms, where "Ours (Online)" (pink) and Direct LLM (orange) drop to ~0.20 or below. The Random approach (light yellow) serves as a clear baseline, performing worst across all scenarios. The error bars suggest moderate uncertainty in rewards, with larger variability in complex environments. This highlights the importance of environment complexity in evaluating reinforcement learning approaches, with the "Ours" methods showing robustness compared to traditional baselines like Behavior Cloning and Tabular.
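The three descriptions on this page give different readings of the same bars, so it can help to quantify how far they disagree. The sketch below compares the "Ours" estimates from the three analyses (labelled gemini-2.0-flash, healer-alpha-free, and nemotron-free above) and reports the spread per environment. The names `ENVIRONMENTS`, `OURS_ESTIMATES`, and `spread_by_environment` are illustrative, and the values are the approximate readings quoted in each analysis.

```python
ENVIRONMENTS = ["Tiger", "RockSample", "Empty", "Corners",
                "Lava", "Rooms", "Unlock"]

# "Ours" estimates as listed in each of the three analyses on this page.
OURS_ESTIMATES = {
    "gemini-2.0-flash":  [0.95, 0.75, 1.00, 0.75, 0.35, 0.70, 0.75],
    "healer-alpha-free": [0.95, 0.75, 0.99, 0.72, 0.59, 0.68, 0.73],
    "nemotron-free":     [0.95, 0.75, 0.98, 0.70, 0.60, 0.65, 0.75],
}


def spread_by_environment(readings):
    """Return {environment: max - min} across the given readings."""
    spreads = {}
    for i, env in enumerate(ENVIRONMENTS):
        values = [series[i] for series in readings.values()]
        # Round to two decimals, the precision of the quoted estimates.
        spreads[env] = round(max(values) - min(values), 2)
    return spreads


spreads = spread_by_environment(OURS_ESTIMATES)
for env, spread in spreads.items():
    print(f"{env:<11} spread {spread:.2f}")
print("Largest disagreement:", max(spreads, key=spreads.get))
```

A large spread for one environment marks a bar whose height should be checked against the original figure before any of the quoted values is relied on.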
