## Bar Chart: Episode Reward Across Environments & Approaches

### Overview

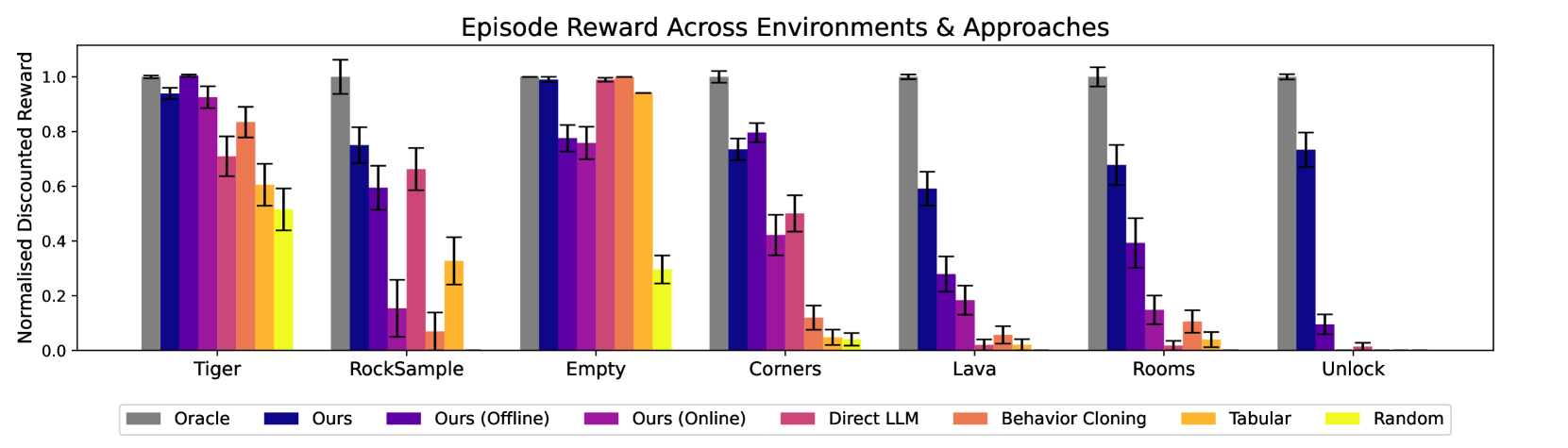

The chart compares normalised discounted reward across seven environments (Tiger, RockSample, Empty, Corners, Lava, Rooms, Unlock) for eight approaches. The y-axis ranges from 0.0 to 1.0, and error bars indicate uncertainty. Oracle (grey) achieves the highest reward in every environment, while Random (light yellow) is lowest or tied for lowest.

### Components/Axes

- **X-axis**: Environments (Tiger, RockSample, Empty, Corners, Lava, Rooms, Unlock)

- **Y-axis**: Normalised Discounted Reward (0.0–1.0)

- **Legend**:
  - Oracle (grey)
  - Ours (blue)
  - Ours (Offline) (purple)
  - Ours (Online) (pink)
  - Direct LLM (orange)
  - Behavior Cloning (yellow)
  - Tabular (light orange)
  - Random (light yellow)

### Detailed Analysis

1. **Tiger**:
   - Oracle: ~1.0
   - Ours (blue): ~0.95
   - Ours (Offline): ~0.98
   - Ours (Online): ~0.92
   - Direct LLM: ~0.85
   - Behavior Cloning: ~0.60
   - Tabular: ~0.55
   - Random: ~0.50

2. **RockSample**:
   - Oracle: ~1.0
   - Ours (blue): ~0.75
   - Ours (Offline): ~0.60
   - Ours (Online): ~0.15
   - Direct LLM: ~0.65
   - Behavior Cloning: ~0.30
   - Tabular: ~0.05
   - Random: ~0.00

3. **Empty**:
   - Oracle: ~1.0
   - Ours (blue): ~0.98
   - Ours (Offline): ~0.85
   - Ours (Online): ~0.80
   - Direct LLM: ~0.95
   - Behavior Cloning: ~0.90
   - Tabular: ~0.85
   - Random: ~0.30

4. **Corners**:
   - Oracle: ~1.0
   - Ours (blue): ~0.70
   - Ours (Offline): ~0.80
   - Ours (Online): ~0.45
   - Direct LLM: ~0.50
   - Behavior Cloning: ~0.10
   - Tabular: ~0.05
   - Random: ~0.02

5. **Lava**:
   - Oracle: ~1.0
   - Ours (blue): ~0.60
   - Ours (Offline): ~0.30
   - Ours (Online): ~0.20
   - Direct LLM: ~0.20
   - Behavior Cloning: ~0.05
   - Tabular: ~0.03
   - Random: ~0.00

6. **Rooms**:
   - Oracle: ~1.0
   - Ours (blue): ~0.65
   - Ours (Offline): ~0.40
   - Ours (Online): ~0.15
   - Direct LLM: ~0.20
   - Behavior Cloning: ~0.05
   - Tabular: ~0.03
   - Random: ~0.00

7. **Unlock**:
   - Oracle: ~1.0
   - Ours (blue): ~0.75
   - Ours (Offline): ~0.10
   - Ours (Online): ~0.05
   - Direct LLM: ~0.05
   - Behavior Cloning: ~0.00
   - Tabular: ~0.00
   - Random: ~0.00
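The per-environment readings above are easier to compare once collected in one structure. The following is a minimal sketch that stores the approximate bar heights listed in this section and derives two summaries from them: the mean reward per approach and the best non-Oracle approach per environment. The names `ENVS`, `REWARDS`, `mean_reward`, and `best_non_oracle` are illustrative and not part of the source, and the numbers are visual estimates from the chart, not exact results.

```python
# Approximate bar heights read off the chart (estimates, not exact results).
# Each list follows the order of ENVS.
ENVS = ["Tiger", "RockSample", "Empty", "Corners", "Lava", "Rooms", "Unlock"]
REWARDS = {
    "Oracle":           [1.00, 1.00, 1.00, 1.00, 1.00, 1.00, 1.00],
    "Ours":             [0.95, 0.75, 0.98, 0.70, 0.60, 0.65, 0.75],
    "Ours (Offline)":   [0.98, 0.60, 0.85, 0.80, 0.30, 0.40, 0.10],
    "Ours (Online)":    [0.92, 0.15, 0.80, 0.45, 0.20, 0.15, 0.05],
    "Direct LLM":       [0.85, 0.65, 0.95, 0.50, 0.20, 0.20, 0.05],
    "Behavior Cloning": [0.60, 0.30, 0.90, 0.10, 0.05, 0.05, 0.00],
    "Tabular":          [0.55, 0.05, 0.85, 0.05, 0.03, 0.03, 0.00],
    "Random":           [0.50, 0.00, 0.30, 0.02, 0.00, 0.00, 0.00],
}


def mean_reward(approach):
    """Average normalised reward of one approach over all environments."""
    values = REWARDS[approach]
    return sum(values) / len(values)


def best_non_oracle(env):
    """Name of the highest-scoring approach in `env`, excluding Oracle."""
    i = ENVS.index(env)
    candidates = {a: v[i] for a, v in REWARDS.items() if a != "Oracle"}
    return max(candidates, key=candidates.get)


if __name__ == "__main__":
    for approach in REWARDS:
        print(f"{approach:<17} mean = {mean_reward(approach):.2f}")
    for env in ENVS:
        print(f"{env:<11} best non-Oracle: {best_non_oracle(env)}")
```

Because the inputs are rounded visual estimates, small differences between approaches (for example ~0.95 versus ~0.98 in Tiger) should not be over-interpreted, particularly given the error bars.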

### Key Observations

- **Oracle Dominance**: Oracle achieves near-perfect rewards (~1.0) in all environments.

- **Proposed Methods ("Ours")**:
  - "Ours" (blue) is the best non-Oracle approach in five of the seven environments; "Ours (Offline)" (purple) leads in the other two (Tiger, Corners).
  - "Ours (Online)" (pink) is the weakest of the three "Ours" variants in every environment and falls furthest behind in complex environments (e.g., Lava, Rooms).

- **Weak Approaches**:
  - Random (light yellow) scores at or near 0.0 in five environments; the exceptions are Tiger (~0.50) and Empty (~0.30).
  - Tabular (light orange) and Behavior Cloning (yellow) stay at or below ~0.10 in the challenging environments (Corners, Lava, Rooms, Unlock).

- **Environment-Specific Trends**:
  - "Ours (Online)" (pink) struggles in Lava (~0.20) and Rooms (~0.15).
  - Direct LLM (orange) performs well in Empty (~0.95) but poorly in Lava (~0.20).

### Interpretation

The chart shows that the proposed methods ("Ours") achieve rewards close to Oracle in the simpler environments (Tiger, Empty). Performance degrades in complex environments such as Lava and Rooms, where "Ours (Online)" (pink) and Direct LLM (orange) fall to roughly 0.15 to 0.20. Random (light yellow) serves as the lower baseline and is lowest or tied for lowest in every environment. The error bars suggest moderate uncertainty in rewards, with larger variability in complex environments. Overall, environment complexity strongly affects how the approaches compare: "Ours" (blue) is the most robust, staying at or above ~0.60 in all seven environments, whereas traditional baselines such as Behavior Cloning and Tabular drop to ~0.05 or below in Lava, Rooms, and Unlock.
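The degradation described above can be quantified with a small calculation. This is a sketch only, assuming the approximate values from the Detailed Analysis and taking the grouping of Tiger and Empty as "simple" and Lava and Rooms as "complex" from the text. The helper names `group_mean` and `drop` are hypothetical.

```python
# Approximate chart readings for the approaches discussed in the interpretation.
REWARDS = {
    "Ours":           {"Tiger": 0.95, "Empty": 0.98, "Lava": 0.60, "Rooms": 0.65},
    "Ours (Offline)": {"Tiger": 0.98, "Empty": 0.85, "Lava": 0.30, "Rooms": 0.40},
    "Ours (Online)":  {"Tiger": 0.92, "Empty": 0.80, "Lava": 0.20, "Rooms": 0.15},
    "Direct LLM":     {"Tiger": 0.85, "Empty": 0.95, "Lava": 0.20, "Rooms": 0.20},
}
SIMPLE = ("Tiger", "Empty")    # grouping taken from the interpretation text
COMPLEX = ("Lava", "Rooms")


def group_mean(approach, envs):
    """Mean reward of `approach` over the given environments."""
    return sum(REWARDS[approach][e] for e in envs) / len(envs)


def drop(approach):
    """Reward lost when moving from the simple to the complex group."""
    return group_mean(approach, SIMPLE) - group_mean(approach, COMPLEX)


if __name__ == "__main__":
    # Print approaches from smallest to largest drop.
    for approach in sorted(REWARDS, key=drop):
        print(f"{approach:<15} drop = {drop(approach):.2f}")
```

Under these estimates, "Ours" (blue) loses the least between the two groups, while "Ours (Online)" and Direct LLM lose the most, which matches the qualitative reading of the chart.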