## Diagram: Modified Attention Mechanism with Group Normalization

### Overview

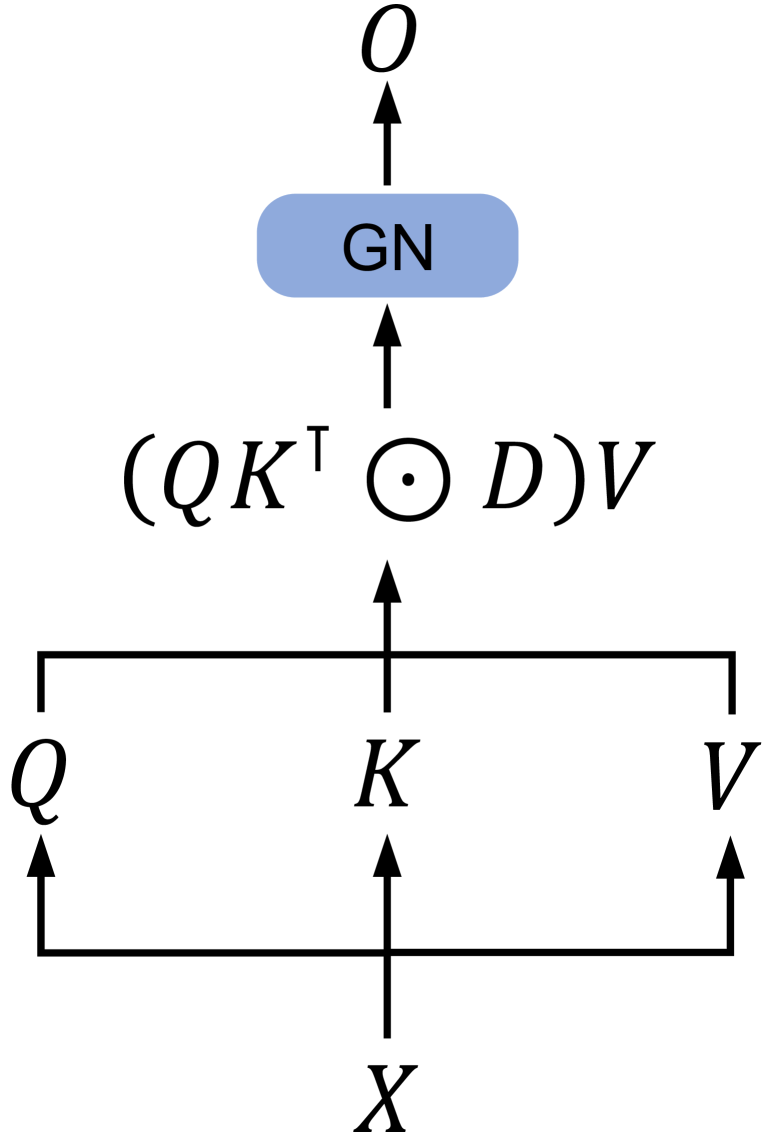

The image is a data flow diagram of a modified attention mechanism, likely from a neural network architecture. It shows an input tensor `X` transformed through a series of operations into an output tensor `O`. The core operation is a variant of dot-product attention: the score matrix `QKᵀ` is multiplied element-wise by a term `D`, and the aggregated result passes through a block labeled `GN`, most likely Group Normalization. The diagram shows neither a scaling factor nor a softmax.

### Components/Axes

The diagram is structured vertically, with data flowing from the bottom to the top, indicated by black arrows.

**1. Input Layer (Bottom):**

* **Component:** A single input labeled `X`.

* **Position:** Centered at the bottom of the diagram.

* **Function:** The source tensor that is fed into the system.

**2. Linear Projection Layer (Middle-Bottom):**

* **Components:** Three parallel branches originating from `X`.

* **Labels:** `Q`, `K`, `V`.

* **Position:** `Q` is on the left, `K` is in the center, and `V` is on the right. They are horizontally aligned above `X`.

* **Function:** Represents the linear projections of the input `X` into Query (`Q`), Key (`K`), and Value (`V`) matrices, a standard step in attention mechanisms.

**3. Core Attention Operation (Center):**

* **Component:** A mathematical expression: `(QKᵀ ⊙ D)V`.

* **Position:** Centered above the `Q`, `K`, `V` layer.

* **Breakdown of the Expression:**

    * `QKᵀ`: Matrix multiplication of `Q` and the transpose of `K`.

    * `⊙`: The Hadamard (element-wise) product symbol.

    * `D`: A matrix or tensor that is multiplied element-wise with the result of `QKᵀ`.

    * `( ... )V`: The result of the element-wise product is then matrix-multiplied with `V`.

* **Function:** This is the central computation. It modifies the standard attention score calculation (`QKᵀ`) by incorporating an additional term `D` via element-wise multiplication before applying it to the values `V`.
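Read row by row, the expression can be restated as follows. This assumes that `Q`, `K`, and `V` hold one row vector per position, written $q_i$, $k_j$, $v_j$; the diagram gives no shapes, and $Y$ is a hypothetical name for the result before `GN`, which the diagram leaves unnamed:

$$
Y_i = \sum_{j} D_{ij}\,\bigl(q_i k_j^{\top}\bigr)\, v_j
$$

Under this reading, each entry $D_{ij}$ scales how much position $j$ contributes to position $i$, and $D_{ij} = 0$ removes that contribution entirely.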

**4. Normalization Layer (Upper-Middle):**

* **Component:** A blue, rounded rectangular block labeled `GN`.

* **Position:** Centered above the core attention operation.

* **Function:** `GN` most commonly stands for **Group Normalization**. This layer normalizes the output of the attention operation, which can help stabilize training.
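As a rough illustration of what a `GN` block could compute on an attention output, here is a minimal NumPy sketch. It assumes the input is a 2-D array with one row per position, that groups are formed over the feature dimension, and that no learnable scale or shift is applied; the function name `group_norm` and the parameters `num_groups` and `eps` are illustrative choices, not taken from the diagram.

```python
import numpy as np

def group_norm(y, num_groups, eps=1e-5):
    """Normalize each row of `y` within equal-sized feature groups.

    y: array of shape (n, d), one row per position (assumed layout).
    num_groups: number of groups; d must be divisible by it.
    No learnable scale or shift is applied (simplifying assumption).
    """
    n, d = y.shape
    if d % num_groups != 0:
        raise ValueError("feature dimension must be divisible by num_groups")
    # Split the feature axis into (num_groups, group_size).
    g = y.reshape(n, num_groups, d // num_groups)
    mean = g.mean(axis=-1, keepdims=True)
    var = g.var(axis=-1, keepdims=True)
    # Zero mean and (approximately) unit variance within each group.
    return ((g - mean) / np.sqrt(var + eps)).reshape(n, d)

y = np.arange(16, dtype=float).reshape(2, 8)
out = group_norm(y, num_groups=2)
```

Each group is normalized using only values from its own row, so the result for one input does not depend on any other input in a batch.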

**5. Output Layer (Top):**

* **Component:** A single output labeled `O`.

* **Position:** Centered at the very top of the diagram.

* **Function:** The final output tensor of this computational block.

### Detailed Analysis

The diagram defines a precise sequence of tensor operations:

1. An input tensor `X` is projected into three tensors: `Q`, `K`, and `V`.

2. The attention scores are computed as the matrix product `QKᵀ`.

3. These scores are modified by an element-wise multiplication with a tensor `D`, which must therefore match (or broadcast to) the shape of `QKᵀ`. The nature of `D` (learnable parameter, mask, gate, etc.) is not specified in the diagram.

4. The modified scores are applied to the value tensor `V` via matrix multiplication.

5. The resulting tensor undergoes Group Normalization (`GN`).

6. The normalized tensor is the final output `O`.
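The six steps above can be sketched end to end in NumPy. This is an illustration under stated assumptions rather than the diagram's actual implementation: the projection matrices `W_q`, `W_k`, `W_v`, the shapes, the lower-triangular choice of `D`, and the bias-free, parameter-free normalization are all hypothetical, since the diagram specifies none of them.

```python
import numpy as np

def modified_attention(X, W_q, W_k, W_v, D, num_groups, eps=1e-5):
    """Sketch of the diagram's data flow: X -> Q, K, V -> (QK^T * D)V -> GN -> O."""
    # Step 1: linear projections (bias-free, an assumption).
    Q, K, V = X @ W_q, X @ W_k, X @ W_v
    # Step 2: raw attention scores.
    scores = Q @ K.T
    # Step 3: element-wise (Hadamard) product with D.
    scores = scores * D
    # Step 4: apply the modified scores to the values.
    Y = scores @ V
    # Step 5: group normalization over feature groups, per position.
    n, d = Y.shape
    G = Y.reshape(n, num_groups, d // num_groups)
    G = (G - G.mean(-1, keepdims=True)) / np.sqrt(G.var(-1, keepdims=True) + eps)
    # Step 6: the normalized tensor is the output O.
    return G.reshape(n, d)

rng = np.random.default_rng(0)
n, d_model, d_head = 5, 8, 4            # hypothetical sizes
X = rng.standard_normal((n, d_model))
W_q, W_k, W_v = (rng.standard_normal((d_model, d_head)) for _ in range(3))
D = np.tril(np.ones((n, n)))            # one possible D: a causal 0/1 mask
O = modified_attention(X, W_q, W_k, W_v, D, num_groups=2)
```

Note that the function contains no softmax and no `1/√d_k` scaling, mirroring what the diagram shows.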

The flow is strictly feedforward and sequential from `X` to `O`, with the only parallelism occurring in the initial projection to `Q`, `K`, and `V`.

### Key Observations

* **Non-Standard Attention:** The inclusion of the element-wise product with `D` (`⊙ D`) is a key deviation from the vanilla scaled dot-product attention formula (which is typically `softmax(QKᵀ/√d_k)V`).

* **Explicit Normalization:** The diagram places a normalization step (`GN`) directly after the attention computation. Standard Transformer blocks typically apply Layer Normalization around the attention sublayer instead, so both the position and the type of normalization differ here.

* **Abstract Notation:** The diagram uses abstract mathematical notation (`Q`, `K`, `V`, `D`) without specifying dimensions, activation functions, or scaling factors (like the typical `1/√d_k`).

* **Clear Data Flow:** The arrows provide an unambiguous representation of the data dependency and order of operations.
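To make the contrast with vanilla attention concrete, the following sketch computes the weight matrix both ways on random inputs. The function names and shapes are illustrative assumptions, and `D` is set to all ones purely to isolate the effect of dropping the softmax and the scaling.

```python
import numpy as np

def softmax_attention(Q, K, V):
    """Vanilla scaled dot-product attention; returns (output, weights)."""
    d_k = Q.shape[-1]
    scores = Q @ K.T / np.sqrt(d_k)
    # Subtract the row maximum for numerical stability.
    scores = scores - scores.max(axis=-1, keepdims=True)
    weights = np.exp(scores)
    weights /= weights.sum(axis=-1, keepdims=True)
    return weights @ V, weights

def diagram_attention(Q, K, V, D):
    """The diagram's variant: no scaling, no softmax; returns (output, weights)."""
    weights = (Q @ K.T) * D
    return weights @ V, weights

rng = np.random.default_rng(0)
Q, K, V = (rng.standard_normal((4, 3)) for _ in range(3))
D = np.ones((4, 4))  # all-ones D leaves the raw scores unchanged

out_soft, w_soft = softmax_attention(Q, K, V)
out_diag, w_diag = diagram_attention(Q, K, V, D)
```

The softmax weights are non-negative and each row sums to one. The diagram's weights are the raw products `QKᵀ ⊙ D`, which can be negative and need not sum to one, so the scale of the aggregated output is unconstrained until a later normalization step.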

### Interpretation

This diagram represents a **customized attention module** for a neural network, likely a Transformer variant. The core innovation highlighted is the modulation of the attention score matrix (`QKᵀ`) by an additional term `D` before the value aggregation.

**What the data suggests:**

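* The term `D` could serve multiple purposes. Because it enters through `⊙`, it scales each score rather than shifting it: it might be a **learnable multiplicative weight**, a **mask** (e.g., a 0/1 matrix for causality, or position-dependent weights), or a **gating mechanism** that dynamically reweights attention patterns. An additive bias would require `+` in place of `⊙`.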

* Group Normalization (`GN`) computes its statistics per sample rather than across the batch, so it behaves the same at any batch size. Its use suggests the module targets settings where batch normalization is less suitable (e.g., small batch sizes or generative models), with the aim of improving training stability and convergence.
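One of the candidate roles for `D`, a causal mask, can be checked numerically. The sketch below assumes `D` is a lower-triangular 0/1 matrix, which is only one of the possibilities listed above. It omits the projections and the `GN` step to isolate the effect of `D`, and all names and shapes are illustrative.

```python
import numpy as np

def attend(Q, K, V, D):
    """Core expression from the diagram: (QK^T * D) V, without GN."""
    return ((Q @ K.T) * D) @ V

rng = np.random.default_rng(0)
n, d = 6, 4                              # hypothetical sizes
Q, K, V = (rng.standard_normal((n, d)) for _ in range(3))

# Lower-triangular 0/1 mask: position i only sees positions j <= i.
D_causal = np.tril(np.ones((n, n)))
out = attend(Q, K, V, D_causal)

# Perturb the key and value of the last position only.
K2, V2 = K.copy(), V.copy()
K2[-1] += 1.0
V2[-1] -= 2.0
out2 = attend(Q, K2, V2, D_causal)

# Earlier positions are unaffected, because their D entries for the
# last column are zero.
unchanged = np.allclose(out[:-1], out2[:-1])
```

Because the mask is applied by multiplication, a zero entry removes the corresponding query-key pair exactly. In softmax attention the same effect is usually obtained by setting masked scores to a large negative value before the softmax.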

**How elements relate:**

The architecture follows the classic "project, attend, aggregate" pattern of attention but inserts an element-wise interaction (`⊙ D`) between the score computation and value aggregation. Depending on what `D` contains, this lets the module suppress or enhance specific query-key pairs. The final `GN` layer then normalizes the aggregated output.

**Notable implications:**

If `D` is learned or input-dependent, it gives the network an extra degree of freedom for conditioning the attention scores; if it is a fixed mask, it only constrains which pairs interact. The diagram serves as a blueprint for implementing this layer, defining the required operations and their order. The absence of a softmax is notable. It may simply be omitted from the drawing, but softmax-free attention variants do exist, and the unnormalized scores they produce are consistent with a normalization block placed right after the aggregation.