## Diagram: Attention Mechanism

### Overview

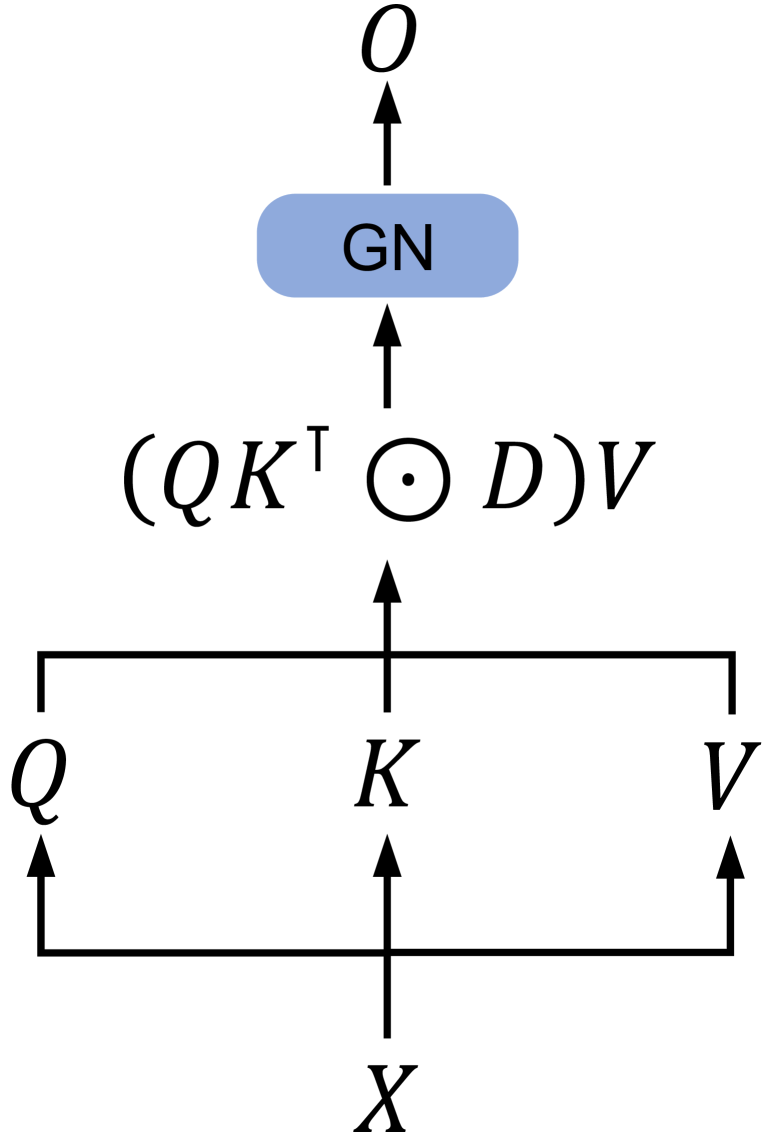

The image shows a diagram of an attention-style mechanism from a neural network architecture. Data flows from an input `X` through three projections, `Q`, `K`, and `V`. These are combined as `(QKᵀ ⊙ D)V` and then passed through a block labeled `GN` to produce an output `O`.

### Components

* **Input:** `X`

* **Query:** `Q`

* **Key:** `K`

* **Value:** `V`

* **Intermediate Calculation:** `(QKᵀ ⊙ D)V`, where ⊙ denotes the element-wise (Hadamard) product and `D` is a matrix whose contents the figure does not specify

* **Gate Network:** `GN` (light blue rounded rectangle; the abbreviation is not expanded in the figure, and this description reads it as a gate network)

* **Output:** `O`

### Detailed Analysis

1. **Input `X`:** Located at the bottom center of the diagram.

2. **Query `Q`:** Located at the bottom left of the diagram. An arrow points from `X` to `Q`.

3. **Key `K`:** Located at the bottom center of the diagram. An arrow points from `X` to `K`.

4. **Value `V`:** Located at the bottom right of the diagram. An arrow points from `X` to `V`.

5. **Horizontal Line:** A horizontal line connects `Q`, `K`, and `V` at the top.

6. **Intermediate Calculation `(QKᵀ ⊙ D)V`:** Located in the center of the diagram. An arrow points from the horizontal line connecting `Q`, `K`, and `V` to this calculation.

7. **Gate Network `GN`:** A light blue rounded rectangle located near the top of the diagram. An arrow points from the intermediate calculation to the `GN`.

8. **Output `O`:** Located at the top of the diagram. An arrow points from the `GN` to `O`.
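The flow in steps 1 to 8 can be sketched in plain Python. This is a minimal sketch under stated assumptions, not the architecture's actual implementation. It assumes `Q`, `K`, and `V` are linear projections of `X` with hypothetical weight matrices `W_q`, `W_k`, `W_v`. It takes `D` as a given matrix and leaves `GN` as a caller-supplied function, because the figure does not show what `GN` computes. All helper names are illustrative.

```python
from typing import Callable, List

Matrix = List[List[float]]


def matmul(a: Matrix, b: Matrix) -> Matrix:
    """Plain matrix product a @ b."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b))) for j in range(len(b[0]))]
            for i in range(len(a))]


def transpose(a: Matrix) -> Matrix:
    """Matrix transpose a^T."""
    return [list(row) for row in zip(*a)]


def hadamard(a: Matrix, b: Matrix) -> Matrix:
    """Element-wise (Hadamard) product a ⊙ b."""
    return [[x * y for x, y in zip(ra, rb)] for ra, rb in zip(a, b)]


def forward(X: Matrix, W_q: Matrix, W_k: Matrix, W_v: Matrix,
            D: Matrix, gn: Callable[[Matrix], Matrix]) -> Matrix:
    # Steps 2-4: project X into queries, keys and values
    # (assumed linear, with hypothetical weights W_q, W_k, W_v).
    Q, K, V = matmul(X, W_q), matmul(X, W_k), matmul(X, W_v)
    # Step 6: the intermediate calculation (Q K^T ⊙ D) V.
    A = matmul(hadamard(matmul(Q, transpose(K)), D), V)
    # Steps 7-8: GN maps the intermediate result to the output O.
    # Its internals are not shown in the figure, so it is passed in.
    return gn(A)


if __name__ == "__main__":
    eye = [[1.0, 0.0], [0.0, 1.0]]   # identity projections, for illustration only
    X = [[1.0, 2.0], [3.0, 4.0]]
    D = [[1.0, 0.0], [1.0, 1.0]]     # lower-triangular mask, one possible D
    print(forward(X, eye, eye, eye, D, gn=lambda m: m))  # [[5.0, 10.0], [86.0, 122.0]]
```

Passing `gn` as a function keeps the sketch neutral about whether `GN` gates, normalizes, or does something else.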

### Key Observations

* The diagram illustrates a process where the input `X` is transformed into `Q`, `K`, and `V`.

* The intermediate calculation multiplies `Q` by the transpose of `K` (`Kᵀ`), takes the Hadamard product (⊙) of `QKᵀ` with `D`, and multiplies the result by `V`.

* The Gate Network `GN` processes the result of the intermediate calculation before producing the final output `O`.
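Written row by row, the intermediate calculation expands as follows. The notation $q_n$, $k_m$, $v_m$ is introduced here for the $n$-th or $m$-th rows of `Q`, `K`, and `V`. Each entry $D_{nm}$ scales how much position $m$ contributes to output row $n$:

```latex
\bigl[(QK^\top \odot D)\,V\bigr]_{n}
  = \sum_{m} \bigl[QK^\top\bigr]_{nm}\, D_{nm}\, v_m
  = \sum_{m} \bigl(q_n^\top k_m\bigr)\, D_{nm}\, v_m
```

A zero entry $D_{nm} = 0$ removes position $m$ from row $n$ entirely, which is how a mask would act.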

### Interpretation

The diagram represents an attention-style mechanism. The input `X` is used to generate queries (`Q`), keys (`K`), and values (`V`). Scores `QKᵀ` are computed from `Q` and `K` and then used to weight the values `V`. Unlike standard softmax attention, the expression in the figure contains no softmax or scaling step. Instead, the scores are multiplied element-wise by `D` and applied to `V` directly. An element-wise product with `D` typically acts as a mask or decay. For example, the parallel form of retention in RetNet has exactly this shape, with `D` combining a causal mask and exponential decay. The block `GN` most likely modulates or normalizes the result before it becomes the output `O` (in RetNet, `GN` denotes GroupNorm), but the figure alone does not settle which. This structure lets each output position draw selectively on the relevant parts of the input.
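The sketch below makes the masking reading of `D` concrete. It builds a lower-triangular `D`, optionally with exponential decay as in RetNet's retention, and checks that an output row is unaffected by values at later positions. The names `decay_mask`, `masked_mix`, and the parameter `gamma` are hypothetical. The figure itself does not specify `D`.

```python
from typing import List

Matrix = List[List[float]]


def decay_mask(n: int, gamma: float = 1.0) -> Matrix:
    """D[i][j] = gamma ** (i - j) if j <= i, else 0.

    gamma = 1.0 gives a plain causal (lower-triangular) mask; 0 < gamma < 1
    additionally down-weights distant positions, as RetNet's decay does.
    """
    return [[gamma ** (i - j) if j <= i else 0.0 for j in range(n)] for i in range(n)]


def masked_mix(Q: Matrix, K: Matrix, V: Matrix, D: Matrix) -> Matrix:
    """Row n of (Q K^T ⊙ D) V, computed as sum_m (q_n · k_m) * D[n][m] * v_m."""
    out = []
    for n, q in enumerate(Q):
        row = [0.0] * len(V[0])
        for m, (k, v) in enumerate(zip(K, V)):
            w = sum(a * b for a, b in zip(q, k)) * D[n][m]  # masked score
            row = [r + w * x for r, x in zip(row, v)]       # accumulate w * v_m
        out.append(row)
    return out


if __name__ == "__main__":
    print(decay_mask(3, gamma=0.5))
    # [[1.0, 0.0, 0.0], [0.5, 1.0, 0.0], [0.25, 0.5, 1.0]]
    Q = K = [[1.0], [1.0], [1.0]]
    V1 = [[1.0], [2.0], [3.0]]
    V2 = [[1.0], [2.0], [100.0]]  # only the last value differs
    D = decay_mask(3)
    # With a causal D, rows 0 and 1 never see position 2.
    print(masked_mix(Q, K, V1, D)[:2] == masked_mix(Q, K, V2, D)[:2])  # True
```

Setting `gamma` below 1 keeps the causal structure while shrinking the weight of older positions geometrically.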