
## Diagram: Data Flow Representation

### Overview

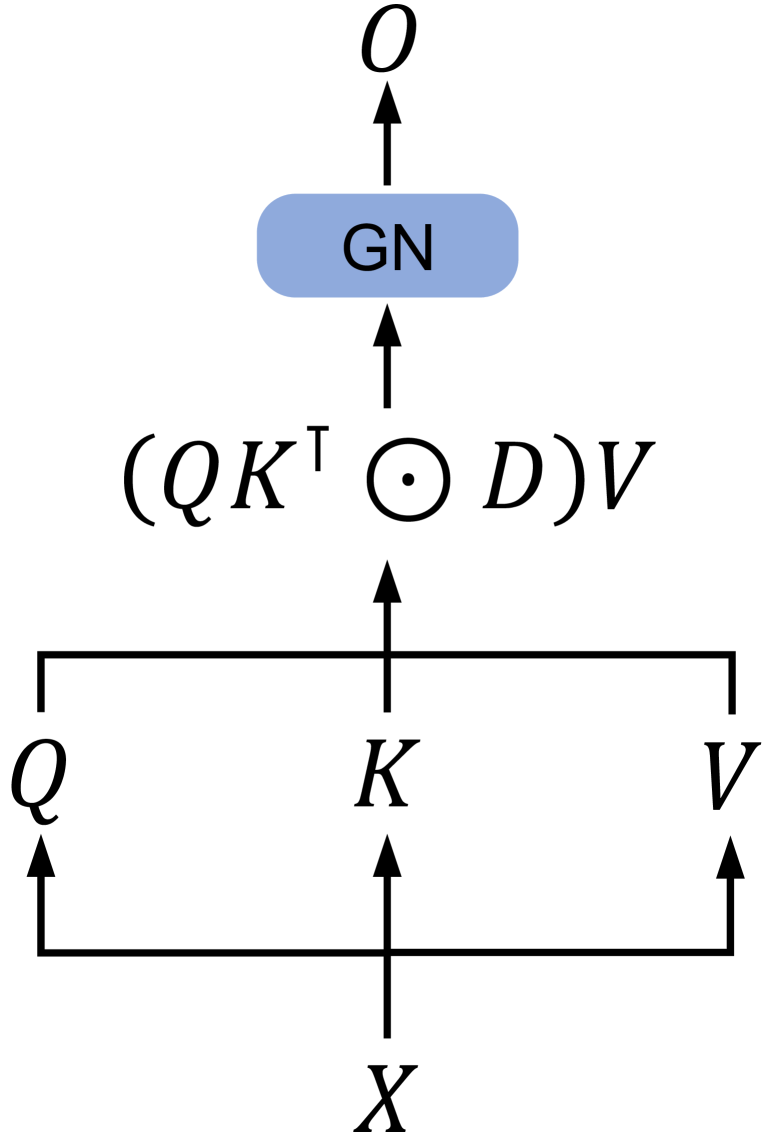

The image is a diagram of a data flow or transformation process. It shows a set of variables (X, K, Q, V, O) and the operations applied to them, culminating in the output O. Arrows indicate the direction of data flow, and mathematical notation represents the operations.

### Components/Axes

The diagram consists of the following components:

* **Variables:** X, K, Q, V, O

* **Intermediate Expression:** (QKᵀ ⊙ D)V

* **Node:** GN (represented as a light blue rounded rectangle)

* **Arrows:** Indicating the direction of data flow.

* **Mathematical Symbols:** superscript T (transpose), ⊙ (most likely the element-wise, or Hadamard, product).

There are no axes or scales present in this diagram.

### Detailed Analysis

The diagram shows the following data flow:

1. **X** flows upwards to **K**.

2. **K** flows upwards to the expression **(QKᵀ ⊙ D)V**.

3. **Q** flows upwards and connects to the expression **(QKᵀ ⊙ D)V**.

4. **V** flows upwards and connects to the expression **(QKᵀ ⊙ D)V**.

5. The expression **(QKᵀ ⊙ D)V** flows upwards to the node **GN**.

6. **GN** flows upwards to **O**.

The expression **(QKᵀ ⊙ D)V** is a chain of matrix operations, read from the inside out:

* **Kᵀ** is the transpose of matrix K.

* **QKᵀ** is the matrix product of Q and the transpose of K.

* **⊙** most likely denotes element-wise multiplication (the Hadamard product) of QKᵀ with matrix D, which requires D to have the same shape as QKᵀ.

* **V** right-multiplies the result, so the final value is the matrix product of (QKᵀ ⊙ D) and V.
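
The order of operations in the expression can be made concrete with a small worked example. The sketch below uses plain Python lists and assumes that ⊙ is the Hadamard product. The helper names (`transpose`, `matmul`, `hadamard`, `masked_product`) and the toy values, including the lower-triangular `D`, are illustrative assumptions and do not come from the diagram.

```python
def transpose(m):
    """Return the transpose of a matrix stored as a list of rows."""
    return [list(col) for col in zip(*m)]


def matmul(a, b):
    """Matrix product of a (n x k) and b (k x m)."""
    b_cols = transpose(b)
    return [[sum(x * y for x, y in zip(row, col)) for col in b_cols] for row in a]


def hadamard(a, b):
    """Element-wise product of two matrices with the same shape."""
    return [[x * y for x, y in zip(ra, rb)] for ra, rb in zip(a, b)]


def masked_product(q, k, v, d):
    """Compute (Q K^T ⊙ D) V, assuming ⊙ is the Hadamard product."""
    scores = matmul(q, transpose(k))  # Q K^T, shape (n, n)
    weighted = hadamard(scores, d)    # element-wise product with D
    return matmul(weighted, v)        # multiply by V, shape (n, d_v)


# Toy inputs (hypothetical values chosen so the result is easy to check by hand).
Q = [[1.0, 0.0], [0.0, 1.0]]
K = [[1.0, 2.0], [3.0, 4.0]]
V = [[1.0, 0.0], [0.0, 1.0]]
D = [[1.0, 0.0], [1.0, 1.0]]  # assumed lower-triangular weighting matrix

result = masked_product(Q, K, V, D)
print(result)  # [[1.0, 0.0], [2.0, 4.0]]
```

Here QKᵀ equals [[1, 3], [2, 4]], and the zero in the top-right entry of `D` removes the 3 before the multiplication by V.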

### Key Observations

The diagram represents a computational process where input **X** is transformed through a series of matrix operations involving **K**, **Q**, **D**, and **V**, ultimately resulting in output **O** via the intermediate node **GN**. The node **GN** appears to be a processing step or a function applied to the result of the matrix operation.

### Interpretation

This diagram likely represents a component within a larger machine learning or signal processing system. The expression **(QKᵀ ⊙ D)V** resembles the attention mechanisms used in neural networks, where Q, K, and V are the query, key, and value matrices. In that reading, QKᵀ holds pairwise query-key scores, D reweights or masks those scores element-wise, and multiplying by V forms a weighted combination of the value rows. No softmax appears in the expression, so this is not standard softmax attention. The node **GN** could represent a gain or normalization function, such as group normalization. The diagram is high-level: it gives neither the dimensions of the matrices nor the implementation of the operations, so the exact purpose of the component cannot be determined without further context.
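
Under the attention-style reading above, the whole path from X to O might be sketched as follows. Everything beyond the expression itself is an assumption: the diagram does not show how Q and V are obtained, so the sketch treats Q, K, and V as linear projections of X with hypothetical weights `w_q`, `w_k`, `w_v`, and it stands in for GN with a simple per-row normalization (`normalize_rows`), which is only one of the possibilities mentioned.

```python
import math


def transpose(m):
    """Return the transpose of a matrix stored as a list of rows."""
    return [list(col) for col in zip(*m)]


def matmul(a, b):
    """Matrix product of a (n x k) and b (k x m)."""
    b_cols = transpose(b)
    return [[sum(x * y for x, y in zip(row, col)) for col in b_cols] for row in a]


def normalize_rows(m, eps=1e-5):
    """Assumed stand-in for GN: scale each row to zero mean and unit variance."""
    out = []
    for row in m:
        mean = sum(row) / len(row)
        var = sum((x - mean) ** 2 for x in row) / len(row)
        out.append([(x - mean) / math.sqrt(var + eps) for x in row])
    return out


def forward(x, w_q, w_k, w_v, d):
    """X -> (Q, K, V) -> (Q K^T ⊙ D) V -> GN -> O, under the stated assumptions."""
    q, k, v = matmul(x, w_q), matmul(x, w_k), matmul(x, w_v)  # assumed projections
    scores = matmul(q, transpose(k))                          # Q K^T
    weighted = [[s * w for s, w in zip(rs, rd)] for rs, rd in zip(scores, d)]
    return normalize_rows(matmul(weighted, v))                # GN applied last


# Hypothetical toy inputs: identity projections and a lower-triangular D.
X = [[1.0, 2.0], [3.0, 4.0]]
I2 = [[1.0, 0.0], [0.0, 1.0]]
D = [[1.0, 0.0], [1.0, 1.0]]

O = forward(X, I2, I2, I2, D)
print(O)
```

With identity projections the pre-normalization values are [[5, 10], [86, 122]], so each output row ends up close to [-1, 1] after the assumed normalization step.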